Sam Ade Jacobs

@samadejacobs

PhD Comp. Science (Texas A&M University), R&D expertise and experience in advanced large-scale big data (graph) analytics, machine (deep) learning, and robotics

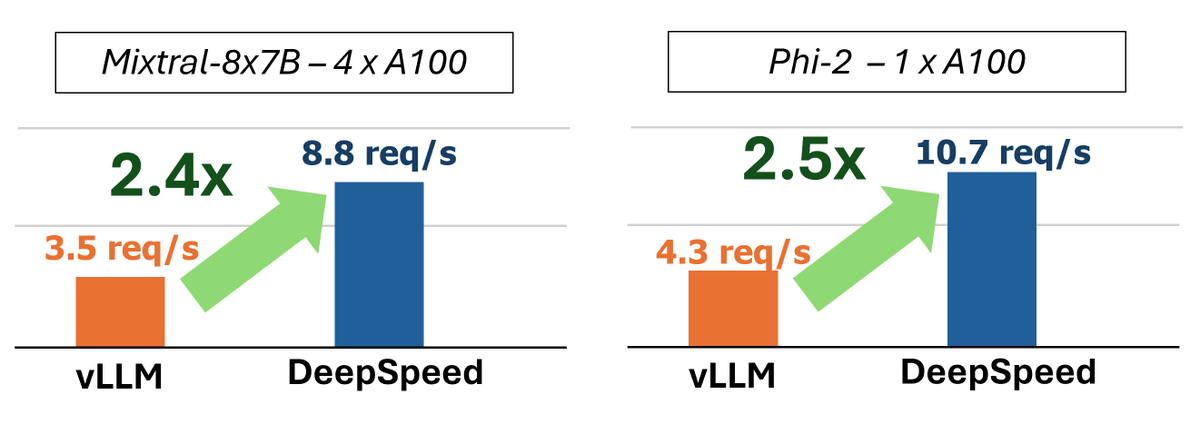

Dr. Ammar Ahmad Awan from Microsoft DeepSpeed giving a presentation at MUG '24 on trillion-parameter LLMs and optimization with MVAPICH. @OSUengineering @Microsoft @OhTechCo @mvapich @MSFTDeepSpeed @MSFTDeepSpeedJP #MUG24 #MPI #AI #LLM #DeepSpeed

Gemini 1.5 Pro is STILL underhyped. I uploaded an entire codebase directly from GitHub, AND all of the issues (@vercel AI SDK). Not only was it able to understand the entire codebase, it identified the most urgent issue and IMPLEMENTED a fix. This changes everything.

How far does one billion parameters take you? As it turns out, pretty far!!! Today we're releasing phi-1.5, a 1.3B parameter LLM exhibiting emergent behaviors surprisingly close to much larger LLMs. For warm-up, see an example completion w. comparison to Falcon 7B & Llama2-7B