DeepSpeed

@DeepSpeedAI

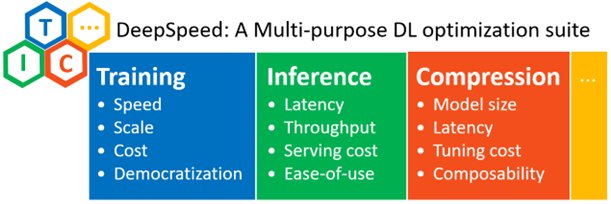

Official account for DeepSpeed, a library that enables unprecedented scale and speed for deep learning training + inference. 日本語 : @DeepSpeedAI_JP

Want to train LLMs on longer contexts without re-engineering your entire systems stack? Introducing AutoSP — the first compiler-based solution that automatically optimizes LLM training for long contexts. Under the hood, AutoSP applies a series of compiler passes that trigger sequence parallelism, paired with a curated activation-checkpointing scheme tailored for long-context training. It's integrated directly into DeepSpeed, so enabling long-context training is just a config change away. No more rewiring your stack to push context lengths. Read the blog to learn more 🖇️ pytorch.org/blog/introduci… ✍ @AhanGupta13, Zhihao W., Neel Dani, @toh_tana, Tunji Ruwase, @_Minjia_Zhang_ #PyTorch #DeepSpeed #AutoSP #OpenSourceAI

New @DeepSpeedAI updates make large-scale multimodal training simpler and more memory-efficient. Our latest blog introduces a PyTorch-identical backward API that makes multimodal training loops easy to write, plus low-precision model states (BF16/FP16) that can reduce peak memory by up to 40% when combined with torch.autocast. 🖇️ Read the full post for details: hubs.la/Q044yYVs0 #DeepSpeed #PyTorch #MemoryEfficiency #MultimodalTraining #OpenSourceAI

🎙️ Mic check: Tunji Ruwase, Lead, DeepSpeed Project & Principal Engineer at Snowflake, is bringing the 🔥 to the keynote stage at #PyTorchCon! Get ready for big ideas and deeper learning October 22–23 in San Francisco. 👀 Speakers: hubs.la/Q03GPYFn0 🎟️ hubs.la/Q03GPXVH0

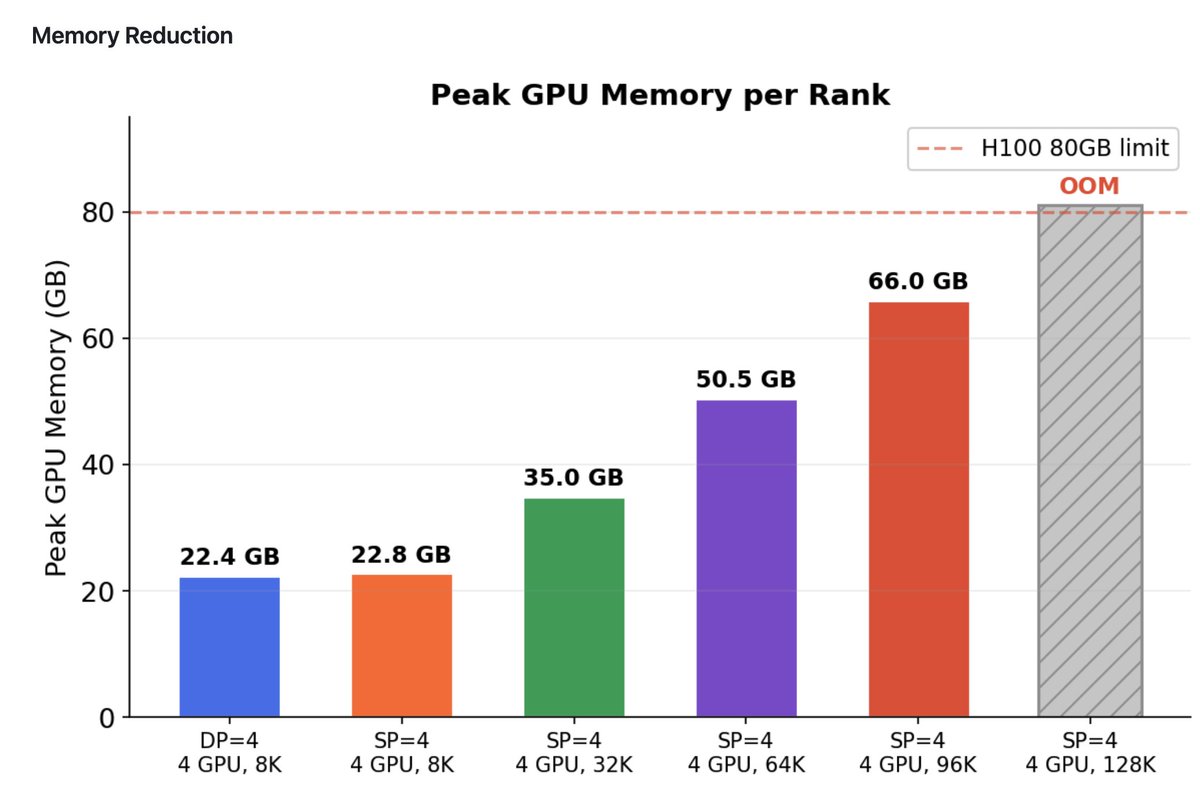

🚀 SuperOffload: Unleashing the Power of Large-Scale LLM Training on Superchips

Superchips like the NVIDIA GH200 offer tightly coupled GPU-CPU architectures for AI workloads. But most existing offloading techniques were designed for traditional PCIe-based systems. Are we truly tapping into their full potential for LLM training?

🎯 SuperOffload is our answer to this challenge: a new DeepSpeed component that rethinks offloading from the ground up, designed specifically for LLM training on Superchips.

✨ SuperOffload is exact -- no approximation, no heuristics, and no changes to your training algorithm. You get faster training of larger models with longer sequences using the same code, made possible by system-level optimizations that exploit the Superchip architecture.

🧪 SuperOffload lets you:
- Fine-tune models like GPT-OSS-20B, Qwen3-14B, and Phi-4 on a single GH200
- Train up to 4X faster than previous approaches like ZeRO-Offload
- Scale effortlessly to:
-- Qwen3-30B-A3B and Seed-OSS-36B on 2x GH200
-- LLaMA2-70B on 4x GH200
-- 1M sequence length on 8x GH200 with 55% MFU
- Enable it with just a few lines of code: it is fully integrated and open-sourced in DeepSpeed

📚 Read more on the official PyTorch blog: pytorch.org/blog/superoffl…

🧠 For more technical details, please read our technical report: arxiv.org/abs/2509.21271

🛠️ SuperOffload is fully open-sourced through DeepSpeed. Try it now: github.com/deepspeedai/De…

📄 SuperOffload has been accepted to ASPLOS 2026! Kudos to Xinyu Lian (@Alexlian0806), Masahiro Tanaka (@toh_tana), and Olatunji Ruwase.

🎤 Featured at PyTorch Conference 2025: SuperOffload will be featured in the DeepSpeed & vLLM keynote at this year's PyTorch Conference in San Francisco. 🔥 Come see how we're rethinking large-scale LLM training for the Superchip era: events.linuxfoundation.org/pytorch-confer…

Introducing #ZenFlow: No Compromising Speed for #LLM Training w/ Offloading

- 5× faster LLM training with offloading
- 85% fewer GPU stalls
- 2× lower I/O overhead

🚀 Blog: hubs.la/Q03DJ6GJ0

🚀 Try ZenFlow and experience 5× faster training with offloading: hubs.la/Q03DJ6Vb0

📢 Yesterday at USENIX ATC 2025, Xinyu Lian from UIUC SSAIL Lab presented our paper on Universal Checkpointing (UCP).

UCP is a new distributed checkpointing system designed for today's large-scale DNN training, where models often use complex forms of parallelism, including data, tensor, pipeline, and expert parallelism. Existing checkpointing systems struggle in this setting because they are tightly coupled to specific training strategies (e.g., ZeRO-style data parallelism or 3D model parallelism) and break down when the training configuration needs to change dynamically over time. This makes resilient, fault-tolerant training difficult.

UCP solves this by decoupling distributed checkpointing from parallelism strategies. Our design introduces a unified checkpoint abstraction, the atomic checkpoint, and a full pattern-matching-based transformation pipeline, which together enable scalable, low-overhead checkpointing with reconfigurable parallelism across arbitrary model sharding strategies. We show that UCP supports state-of-the-art models trained with hybrid 3D/4D parallelism (ZeRO, TP, PP, SP) while incurring an overhead of less than 0.001% of total training time.

UCP is fully open-sourced in DeepSpeed. It has been adopted by Microsoft, BigScience, UC Berkeley and others for large-scale model pre-training and fine-tuning, including Phi-3.5-MoE (42B), BLOOM (176B), and many more. It has also been selected for presentation at PyTorch Day 2025 and FMS 2025 (the Future of Memory and Storage).

Big thanks to the amazing collaborators from Microsoft and Snowflake: @samadejacobs, @LevKurilenko, @MasahiroTanaka, @StasBekman, and @TunjiRuwase.

🔗 Project: lnkd.in/gG6j4vJe
📄 Paper: lnkd.in/gUiC5kcR
💻 Code: lnkd.in/g6uS29nH
📚 Tutorial: lnkd.in/gi_zWSWh

#ATC2025 #LLM #Checkpointing #SystemsForML #DeepLearning #DistributedTraining #UIUC #DeepSpeed
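To make the decoupling idea concrete, here is a toy sketch in plain Python. It is not the UCP implementation or any DeepSpeed API: the helper names `shard` and `consolidate` are hypothetical, parameters are modeled as flat lists of floats, and real systems must also handle optimizer state, padding, and tensor-parallel slicing patterns. The sketch only shows the core move: merge per-rank fragments into one parallelism-agnostic entry per parameter, then re-shard for a different world size.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def shard(params: Params, world_size: int) -> List[Params]:
    """Split each flat parameter into contiguous per-rank fragments (hypothetical helper)."""
    ranks: List[Params] = [dict() for _ in range(world_size)]
    for name, values in params.items():
        chunk = -(-len(values) // world_size)  # ceiling division
        for r in range(world_size):
            # Trailing ranks may receive a short or empty fragment.
            ranks[r][name] = values[r * chunk:(r + 1) * chunk]
    return ranks


def consolidate(ranks: List[Params]) -> Params:
    """Merge fragments, in rank order, into one entry per parameter (hypothetical helper)."""
    merged: Params = {}
    for rank in ranks:
        for name, fragment in rank.items():
            merged.setdefault(name, []).extend(fragment)
    return merged


# Illustrative values only: a model "trained" on 4 ranks, resumed on 2.
params = {"w": [float(i) for i in range(10)], "b": [0.5, 1.5, 2.5]}
saved = shard(params, world_size=4)      # checkpoint written by 4 ranks
atomic = consolidate(saved)              # parallelism-agnostic form
resumed = shard(atomic, world_size=2)    # reloaded under a new layout
```

Because the consolidated form carries no trace of the original world size, the same checkpoint can be reloaded under any sharding that the `shard` step supports.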