Samuel Danso

@samueldans0

Engineer | 🫂 @developer_dao

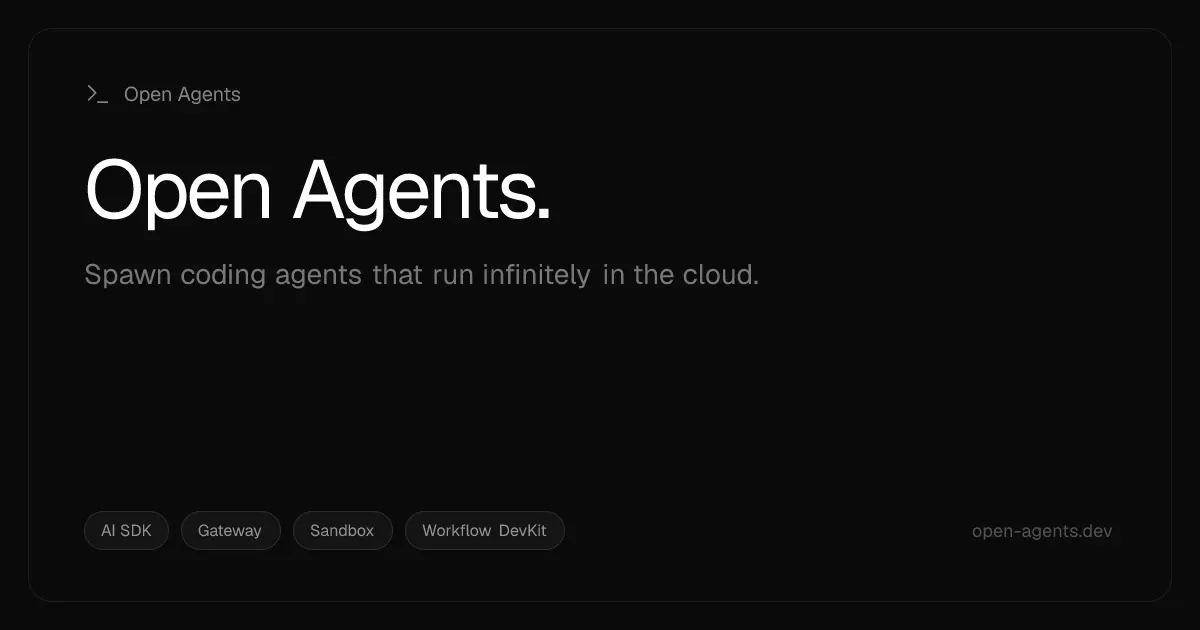

We’re introducing the Cursor SDK so you can build agents with the same runtime, harness, and models that power Cursor. Run agents from CI/CD pipelines, create automations for end-to-end workflows, or embed agents directly inside your products.

We’ve identified a security incident that involved unauthorized access to certain internal Vercel systems, impacting a limited subset of customers. Please see our security bulletin: vercel.com/kb/bulletin/ve…

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale. It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days. Now in public beta on the Claude Platform.