Sam Z Liu

362 posts

@samzliu

Building Stash @joinstashdotai: shared, portable memory for AI agents.
Prev. Stanford PhD in AI Safety + Decision Making (Dropout) | BCG | Harvard

Good morning to everyone whose brain hasn’t been infected by Foucault, Derrida, et al.

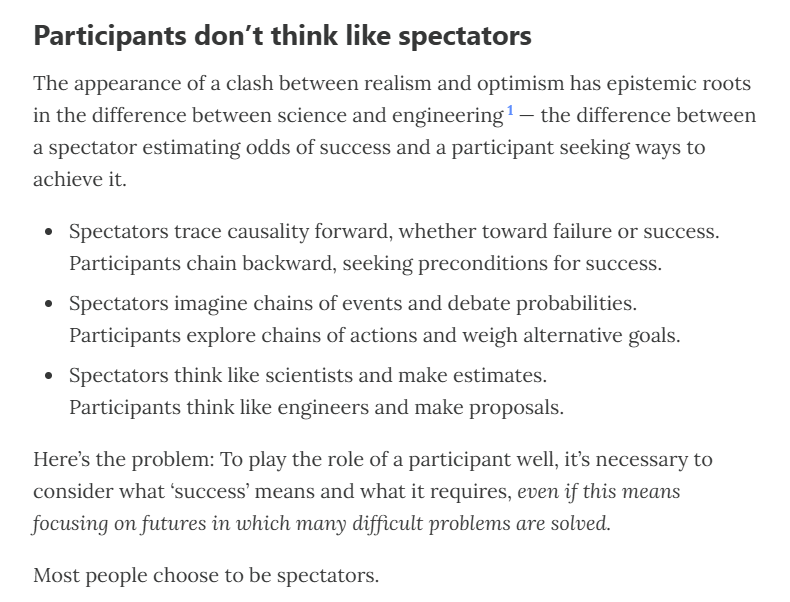

Why is the Kernel Trick called a "trick"? (It's a popular ML interview question.)

Many ML algorithms, such as SVMs and Kernel PCA, use kernels to model non-linear structure in data. The core objective of a kernel function is to compute dot products in some other feature space (usually a high-dimensional one) without projecting the vectors into that space.

But how does that even happen? Let's assume the following polynomial kernel function:
- k(X, Y) = (1 + XᵀY)²

Also, for simplicity, let's say both X and Y are two-dimensional vectors:
- X = (x1, x2)
- Y = (y1, y2)

Expanding the kernel expression produces a dot product between two 6-dimensional vectors:
- (1 + x1y1 + x2y2)² = 1 + 2x1y1 + 2x2y2 + x1²y1² + x2²y2² + 2x1x2y1y2 = φ(X)ᵀφ(Y)
- where φ(X) = (1, √2·x1, √2·x2, x1², x2², √2·x1x2), and φ(Y) is defined the same way.

This shows that the kernel function we chose computes the dot product in a 6-dimensional space without explicitly visiting that space. That is the primary reason we call it the "kernel trick": it lets us operate in high-dimensional spaces without explicitly computing the coordinates of the data in those spaces.

The RBF kernel is even better in this respect. It lets you compute the dot product in an infinite-dimensional space without explicitly visiting that space. I have shared an article in the comments with a mathematical explanation of the RBF kernel.

____
Find me → @_avichawla
Every day, I share tutorials and insights on DS, ML, LLMs, and RAGs.
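The "trick" is easy to check numerically. Below is a minimal Python sketch, assuming plain 2-D tuples for the polynomial case and scalar (1-D) inputs with an arbitrarily chosen gamma = 0.5 for the RBF case; all function names are hypothetical illustrations, not any library's API. It evaluates each kernel directly, then compares the result with a dot product of explicit feature vectors: the 6-D map φ for (1 + XᵀY)², and a truncated slice of the RBF kernel's infinite feature map, derived from the Taylor series of exp(2γxy).

```python
import math

# --- Polynomial kernel k(X, Y) = (1 + XᵀY)² on 2-D vectors ---

def poly_kernel(x, y):
    """Evaluate the kernel directly in the original 2-D space."""
    return (1 + x[0] * y[0] + x[1] * y[1]) ** 2

def poly_features(v):
    """Explicit 6-D feature map φ with φ(X)·φ(Y) == (1 + XᵀY)²."""
    v1, v2 = v
    r2 = math.sqrt(2)
    return [1.0, r2 * v1, r2 * v2, v1 ** 2, v2 ** 2, r2 * v1 * v2]

def dot(a, b):
    """Plain dot product of two equal-length sequences."""
    return sum(ai * bi for ai, bi in zip(a, b))

# --- RBF kernel k(x, y) = exp(-gamma * (x - y)²); 1-D inputs assumed ---

def rbf_kernel(x, y, gamma=0.5):
    """Exact RBF kernel value (gamma = 0.5 is an illustrative choice)."""
    return math.exp(-gamma * (x - y) ** 2)

def rbf_features(x, n_terms, gamma=0.5):
    """First n_terms coordinates of the infinite RBF feature map:
    φ_n(x) = exp(-gamma x²) * sqrt((2 gamma)^n / n!) * x^n,
    obtained from the Taylor series of exp(2 gamma x y)."""
    scale = math.exp(-gamma * x ** 2)
    return [scale * math.sqrt((2 * gamma) ** n / math.factorial(n)) * x ** n
            for n in range(n_terms)]

X, Y = (1.0, 2.0), (3.0, -1.0)
# XᵀY = 1, so both values are ≈ 4.0 (the second up to float rounding)
print(poly_kernel(X, Y), dot(poly_features(X), poly_features(Y)))

x, y = 0.8, -0.3
for n in (1, 2, 4, 8, 16):
    approx = dot(rbf_features(x, n), rbf_features(y, n))
    # the truncated dot product approaches the exact kernel as n grows
    print(n, approx, rbf_kernel(x, y))
```

The explicit RBF features can only ever be truncated, while `rbf_kernel` returns the full infinite-dimensional dot product with a single `exp` call. That gap is exactly what the "trick" buys you.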

Current AIs (Opus 4.5/4.6) seem pretty misaligned to me (in a mundane behavioral sense). In my experience, they often oversell their work, downplay problems, and stop early while claiming to be done. They sometimes brazenly cheat.

What happens when you post a real Monet and say it’s AI? The coolest art social experiment I’ve seen in a while. Thank you @SHL0MS

Socialism is not an economic theory. It is a moral structure that needs three things to exist:
1. Scarcity to redistribute
2. Victims to defend
3. A class of intermediaries to orchestrate it all
Remove just one of these three pillars and the whole edifice collapses. AI is removing all three at the same time.