Piotr Sarna

996 posts

@sarna_dev

@poolsideai https://t.co/uOTCCBUUsz https://t.co/MjAwfREfqr https://t.co/C01u5Ps0Jt Writing For Developers https://t.co/8mJ7fPbtDc @tursodatabase Database Performance at Scale @ScyllaDB

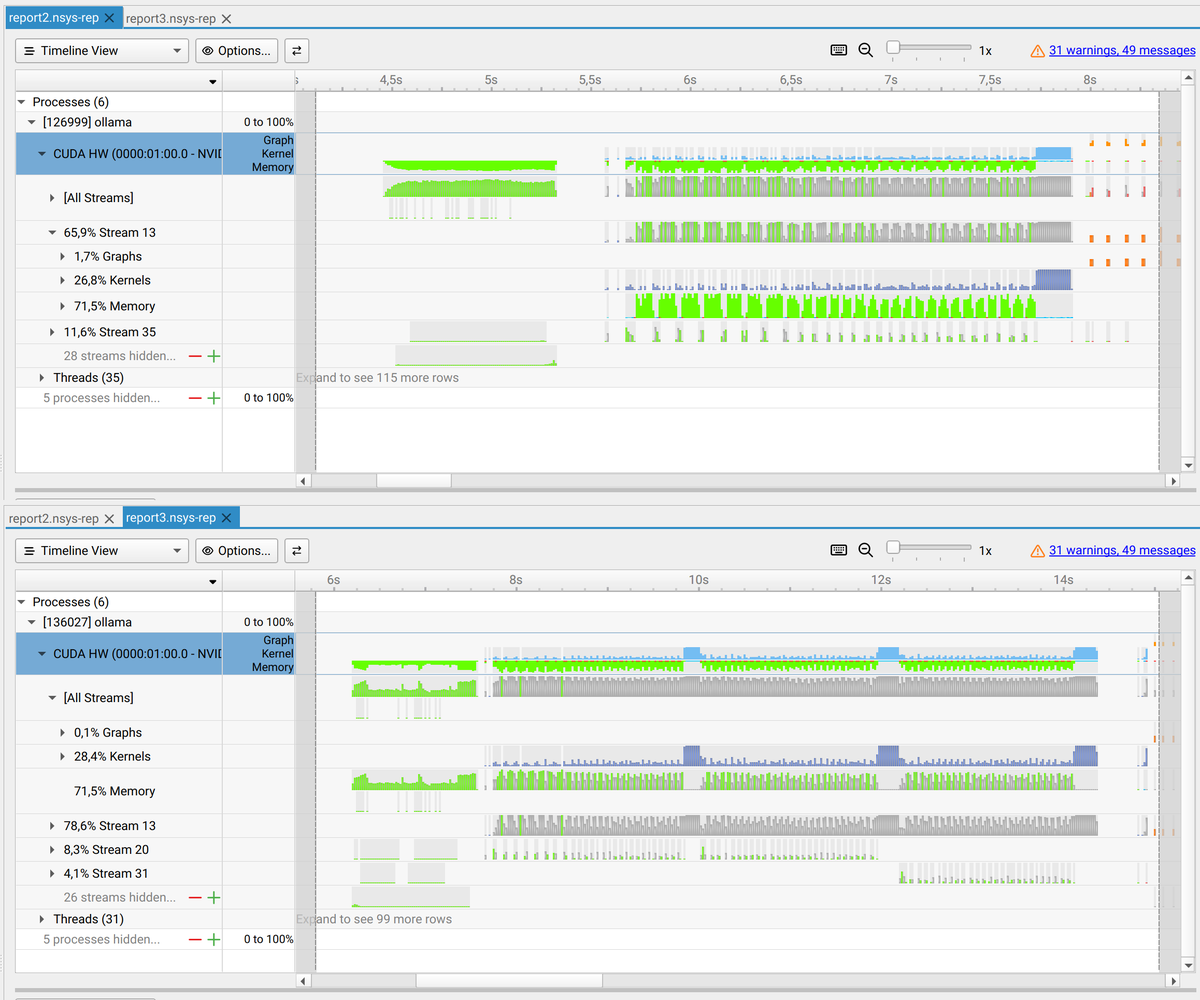

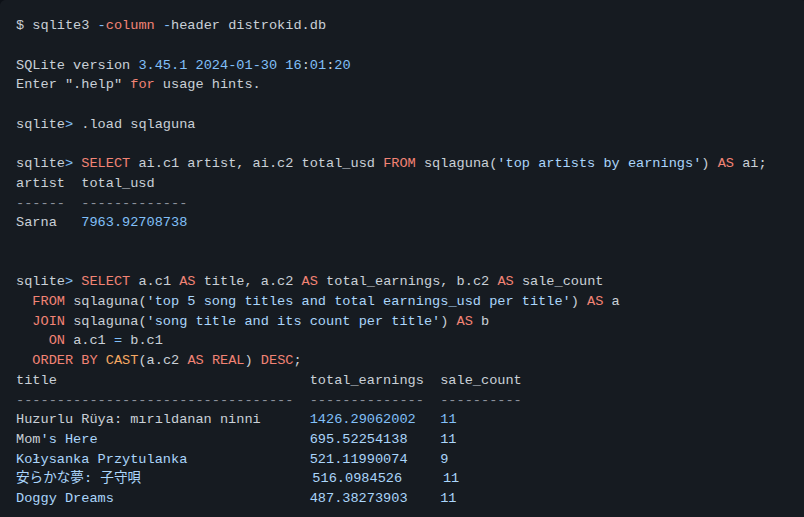

ok this is sick: @pupposandro, @davideciffa, and @luceboxai got Laguna XS.2 running on a single RTX 3090 with ~111 tok/s decode and 5.4x faster 128K prefill vs llama.cpp, and made it the first MoE target for PFlash. Open weights doing open weights things.

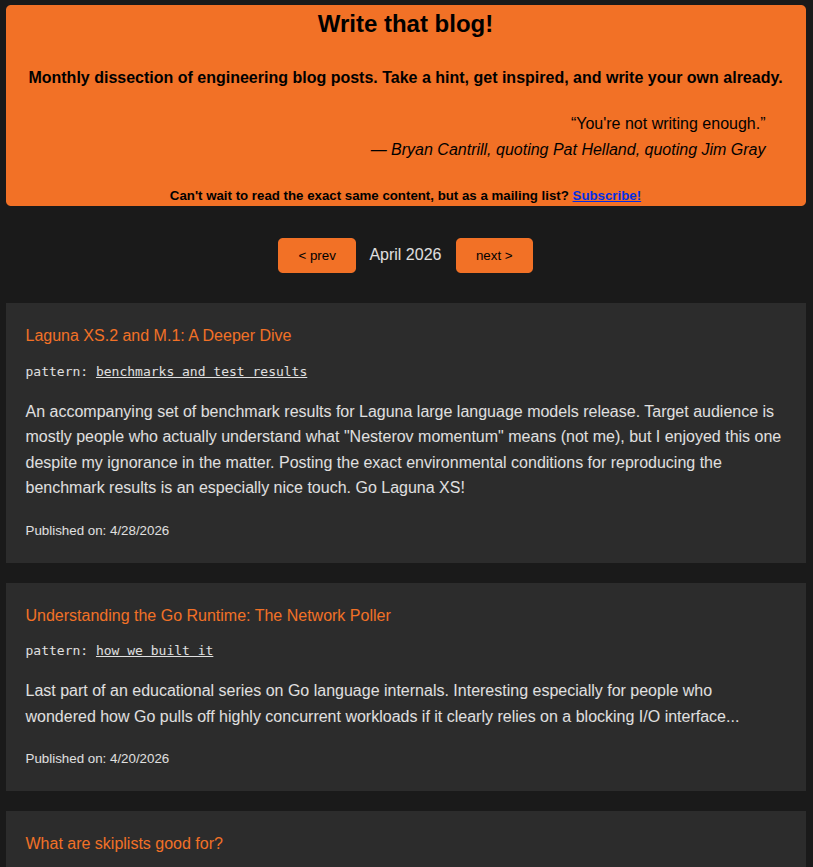

Our latest (and final, maybe) tech blogger interview takes you into the sticky web of @sspaeti - writethatblog.substack.com/p/simon-spati-…

Poolside is hosting a 2-day model research hackathon in London. Join us to push an open-weight agent model as far as you can. RL and fine-tune Laguna XS.2, our latest-generation model, on Prime Intellect Lab.
Dates: May 29–30
Partners: @nvidia + @PrimeIntellect + @huggingface
Prize: NVIDIA DGX Spark
Agents need better models. Better models need cracked researchers. Link below.