Lucebox retweeted

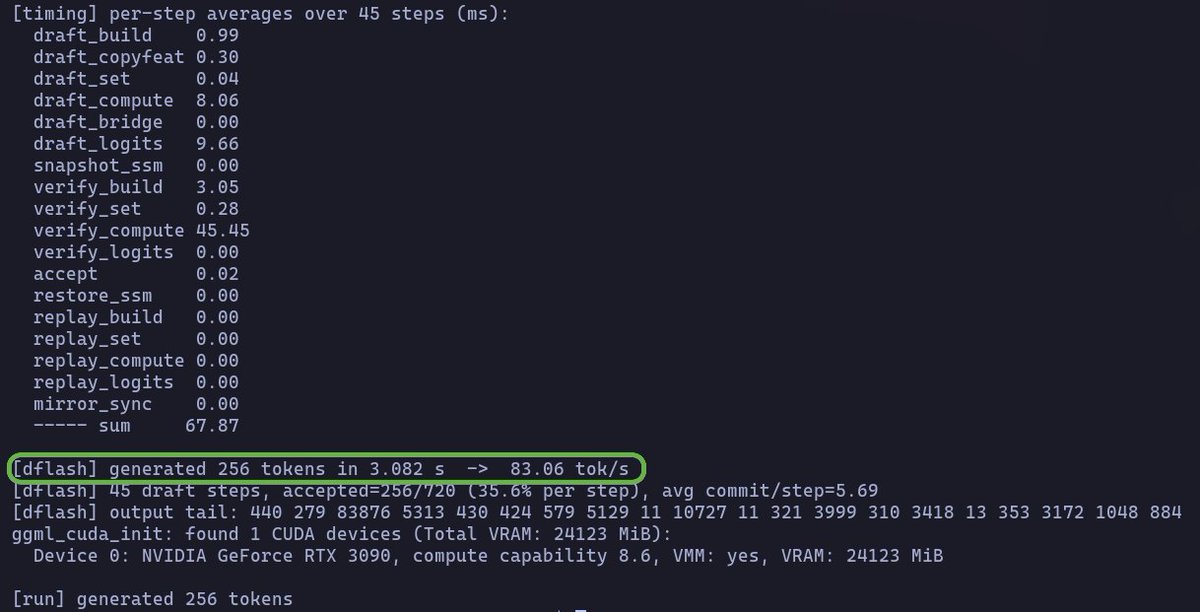

ok this is sick

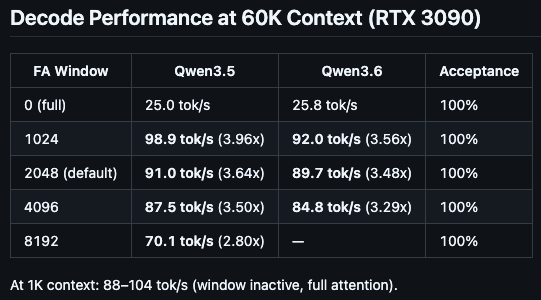

@pupposandro @davideciffa and @luceboxai got Laguna XS.2 running on a single RTX 3090 with ~111 tok/s decode, 5.4x faster 128K prefill vs llama.cpp, and made it the first MoE target for PFlash

open weights doing open weights things
