Sarthak Mittal

316 posts

@sarthmit

Graduate Student at @Mila_Quebec and Student Researcher at @GoogleResearch. Previously interned at @Meta, @Apple, @MorganStanley, @NVIDIAAI, and @YorkUniversity

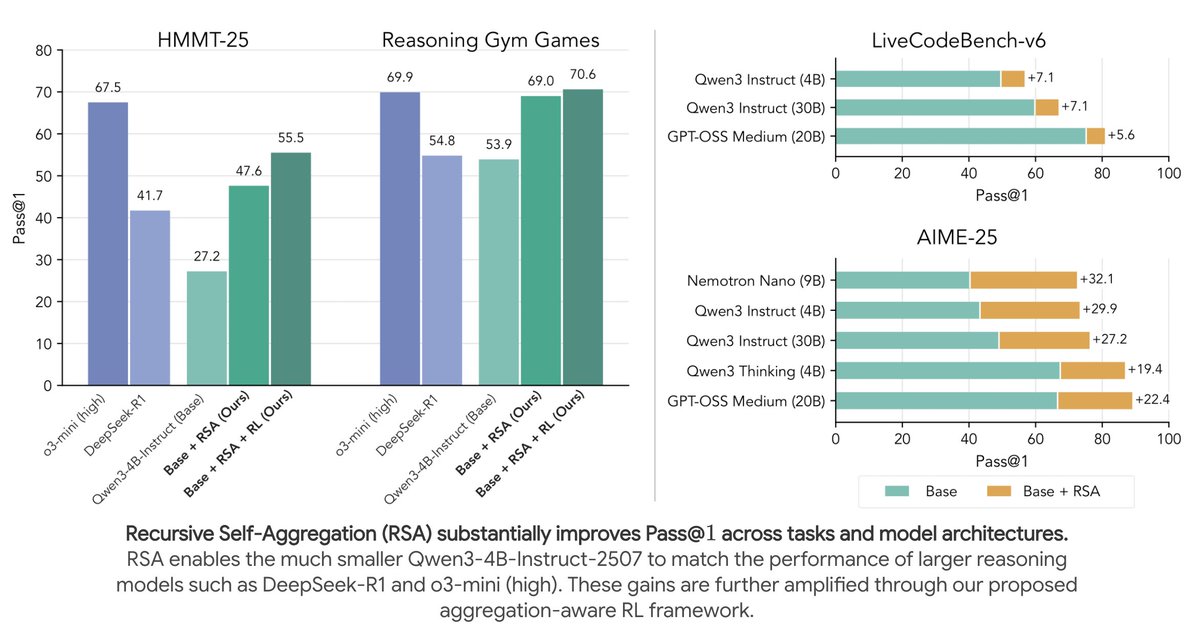

We have been pushing the limits of test-time scaling with RSA for single-turn reasoning problems in science and math. Check out our blog post with new results on ARC-AGI-2, ArXivMath, and FrontierScience! A lot of gains with just test-time scaling! rsa-llm.github.io/blog

Recursive Self-Aggregation (RSA) + Gemini 3 Flash scores 59.31% on the public ARC-AGI-2 evals, placing it firmly among the top performers! Here are the highlights:

- Outperforms Gemini DeepThink at about 1/10th the cost
- Bridges the performance gap with GPT-5.2-xHigh at a similar cost
- Nearly matches Poetiq while using a much simpler pipeline. Poetiq uses scaffolded refinement (often via generated code), while RSA does not.

Also, Gemini 3 Flash is impressive; the Gemini team cooked with this one! We're also eager to run evals with GPT-5.2 + RSA in the future (anyone with credits? :P)

Recursive Self-Aggregation > Gemini DeepThink. it really is the best test-time scaling algorithm. does that claim sound too bold? well, we just crushed the ARC-AGI-2 public evals with Gemini 3 Flash and RSA. stay tuned, more details tomorrow :) @arcprize

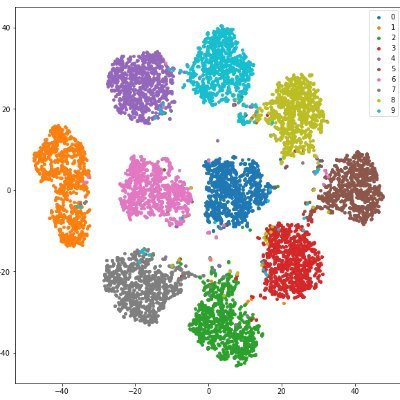

Standard reinforcement learning on raw tokens is a disaster for sparse rewards! Here, we propose 𝗜𝗻𝘁𝗲𝗿𝗻𝗮𝗹 𝗥𝗟: acting with abstract actions that emerge in the residual-stream representation. A paradigm shift in using pretrained models to solve hard, long-horizon tasks! 🧵

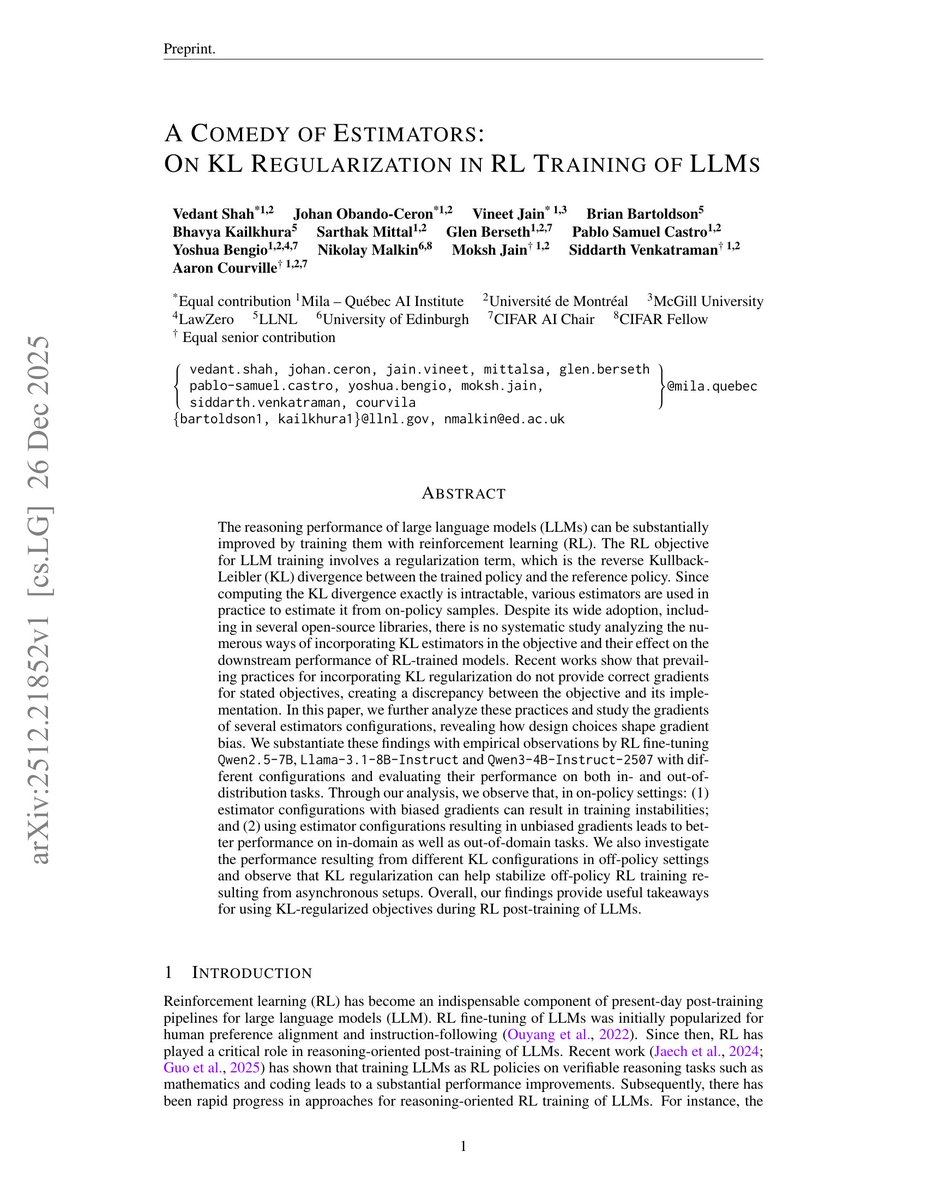

Lots of discourse lately about the correctness of the KL-regularization term used in RLVR fine-tuning of LLMs. Which estimator to use? Whether to add it to the reward or the loss? What's even the difference? 🤔 In our new preprint, we evaluate these choices empirically. 🧵 1/n

😅 arxiv.org/abs/2512.21852: who said that "using k3 in loss = using path-wise grad"??? the correct way to use k3 in the loss is to use the FULL grad. og GRPO used k3 without importance-sampling (IS) correction (= path-wise grad), which is wrong. but it's not k3's fault!!!

One more study (arxiv.org/abs/2510.01555) on the KL penalty, using the K1 and K3 estimators either as a reward or as a loss.
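For readers new to the k1/k3 naming in the KL posts above: in the common convention (popularized by John Schulman's note on approximating KL divergence), a sample x is drawn from the policy q, r = p(x)/q(x) is the ratio to the reference p, and the per-sample estimators of KL(q‖p) are k1 = -log r and k3 = (r - 1) - log r. Below is a minimal sketch, assuming small hypothetical categorical distributions `q` and `p`, that checks both Monte Carlo averages against the exact KL. It only covers the estimator values, not the gradient question (path-wise vs. full gradient) debated in these posts.

```python
import math
import random


def kl_estimators(logq: float, logp: float) -> tuple[float, float]:
    """Per-sample estimators of KL(q || p) for a single sample x ~ q.

    logq, logp: log-probabilities of x under the sampling policy q and the reference p.
    """
    log_r = logp - logq                 # log r, where r = p(x) / q(x)
    k1 = -log_r                         # unbiased, but individual values can be negative
    k3 = math.expm1(log_r) - log_r      # (r - 1) - log r: unbiased and always >= 0
    return k1, k3


def exact_kl(q: list[float], p: list[float]) -> float:
    """Exact KL(q || p) for categorical distributions over the same support."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


def mc_kl(q: list[float], p: list[float], n: int = 100_000, seed: int = 0) -> tuple[float, float]:
    """Monte Carlo averages of k1 and k3 using n samples drawn from q."""
    rng = random.Random(seed)
    samples = rng.choices(range(len(q)), weights=q, k=n)
    total_k1 = total_k3 = 0.0
    for x in samples:
        k1, k3 = kl_estimators(math.log(q[x]), math.log(p[x]))
        total_k1 += k1
        total_k3 += k3
    return total_k1 / n, total_k3 / n


q = [0.5, 0.3, 0.2]  # hypothetical policy distribution
p = [0.4, 0.4, 0.2]  # hypothetical reference distribution
print("exact:", exact_kl(q, p), "MC (k1, k3):", mc_kl(q, p))
```

Both averages should land close to the exact value; k3 tends to have lower variance here because every per-sample value is non-negative.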

Pay attention to the hospital staff in this video. Not only is no one around, even though this man should have received all-hands-on-deck emergency care, but the two who are standing there show zero care or empathy while the wife pours her heart out, her husband lying dead next to her because of nothing short of negligence. The man waited in an Edmonton hospital ER for 8 hours for care, and even had a heart rate of 210, which should have alarmed everyone there, but no one cared. Every single person involved should be fired and never work in healthcare again, and some of them should be jailed. He showed every single sign that something catastrophic might happen. But "free healthcare". Ironically, this would never have happened to them in India.

I'm glad this paper of ours is getting attention. It shows that there are more efficient and effective ways for models to use their thinking tokens than generating a long uninterrupted thinking trace. Our PDR (parallel/distill/refine) orchestration gives much better final accuracy, while avoiding context bloat. (So it might be much cheaper to serve than today's thinking models.) I'm guessing that so-called "deep research models" rely on such orchestrations.

wait so the GRPO everyone is drooling over is just REINFORCE with the baseline computed as an average over a large sample (plus the usual KL regularization in LLMs)?
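To make the comparison concrete, here is a minimal sketch of a group-relative advantage: G responses are sampled for one prompt, the group-mean reward serves as the REINFORCE baseline, and GRPO as originally described additionally divides by the group's standard deviation. The function names are hypothetical, population std is an assumption (implementations vary), and PPO-style clipping and the KL term are deliberately omitted.

```python
from statistics import mean, pstdev


def group_advantages(rewards: list[float], normalize_std: bool = True, eps: float = 1e-6) -> list[float]:
    """Advantages for G responses sampled for the same prompt.

    The baseline is the group-mean reward (REINFORCE with a group-mean baseline).
    With normalize_std=True, advantages are also scaled by the group's population std.
    """
    baseline = mean(rewards)
    centered = [r - baseline for r in rewards]
    if not normalize_std:
        return centered
    scale = pstdev(rewards) + eps  # eps avoids division by zero when all rewards are equal
    return [c / scale for c in centered]


def reinforce_surrogate(logprobs: list[float], advantages: list[float]) -> float:
    """Policy-gradient surrogate loss: -mean(A_i * log pi(y_i | x)).

    logprobs[i] is the summed token log-prob of response i. In a real trainer this would be
    a differentiable tensor; plain floats are used here purely for illustration.
    """
    return -sum(a * lp for a, lp in zip(advantages, logprobs)) / len(logprobs)


rewards = [1.0, 0.0, 0.0, 1.0]  # hypothetical 0/1 verifier rewards for G = 4 samples
print(group_advantages(rewards, normalize_std=False))
print(group_advantages(rewards))
```

Without std normalization this is exactly a mean-baseline REINFORCE advantage; the std division only rescales each group's advantages.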

We'll be presenting this at the FoRLM workshop from 10:15 to 11:30am in room 33 tomorrow! Drop by if you'd like to chat about this paper, or RL for LLMs in general (I got some juicy new insights)

NO verifiers. NO tools. Qwen3-4B-Instruct can match DeepSeek-R1 and o3-mini (high) with ONLY test-time scaling. Presenting Recursive Self-Aggregation (RSA): the strongest test-time scaling method I know of! Then we use aggregation-aware RL to push further!! 📈📈 🧵below!
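The posts describe RSA only by name, so the following is a hedged sketch of a population-based self-aggregation loop as we understand the general idea, not the authors' implementation: keep a population of `n` candidate solutions, and in each of `steps` rounds build a new population where every member comes from aggregating a random subset of `k` current candidates. The `generate` and `aggregate` callables are hypothetical stand-ins for LLM calls (sampling a solution, and prompting the model to merge several solutions into an improved one); the parameter names are assumptions.

```python
import random
from typing import Callable, List, TypeVar

S = TypeVar("S")  # type of a candidate solution (e.g. a reasoning trace plus answer)


def recursive_self_aggregation(
    generate: Callable[[], S],
    aggregate: Callable[[List[S]], S],
    n: int = 8,
    k: int = 3,
    steps: int = 4,
    seed: int = 0,
) -> List[S]:
    """Hypothetical population loop: n candidates, k-sized subsets, `steps` rounds."""
    if not 1 <= k <= n:
        raise ValueError("subset size k must satisfy 1 <= k <= n")
    rng = random.Random(seed)
    population = [generate() for _ in range(n)]  # initial independent samples
    for _ in range(steps):
        # Each new candidate is produced by aggregating a random subset of current ones.
        population = [aggregate(rng.sample(population, k)) for _ in range(n)]
    return population


# Toy usage: "solutions" are integers and the stand-in aggregator keeps the best one.
counter = iter(range(8))
final = recursive_self_aggregation(lambda: next(counter), max, n=8, k=3, steps=4)
print(final)
```

In the real setting the aggregator is the model itself reading several candidate solutions, so it can combine partial ideas rather than merely select one, which is what distinguishes this from majority voting.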