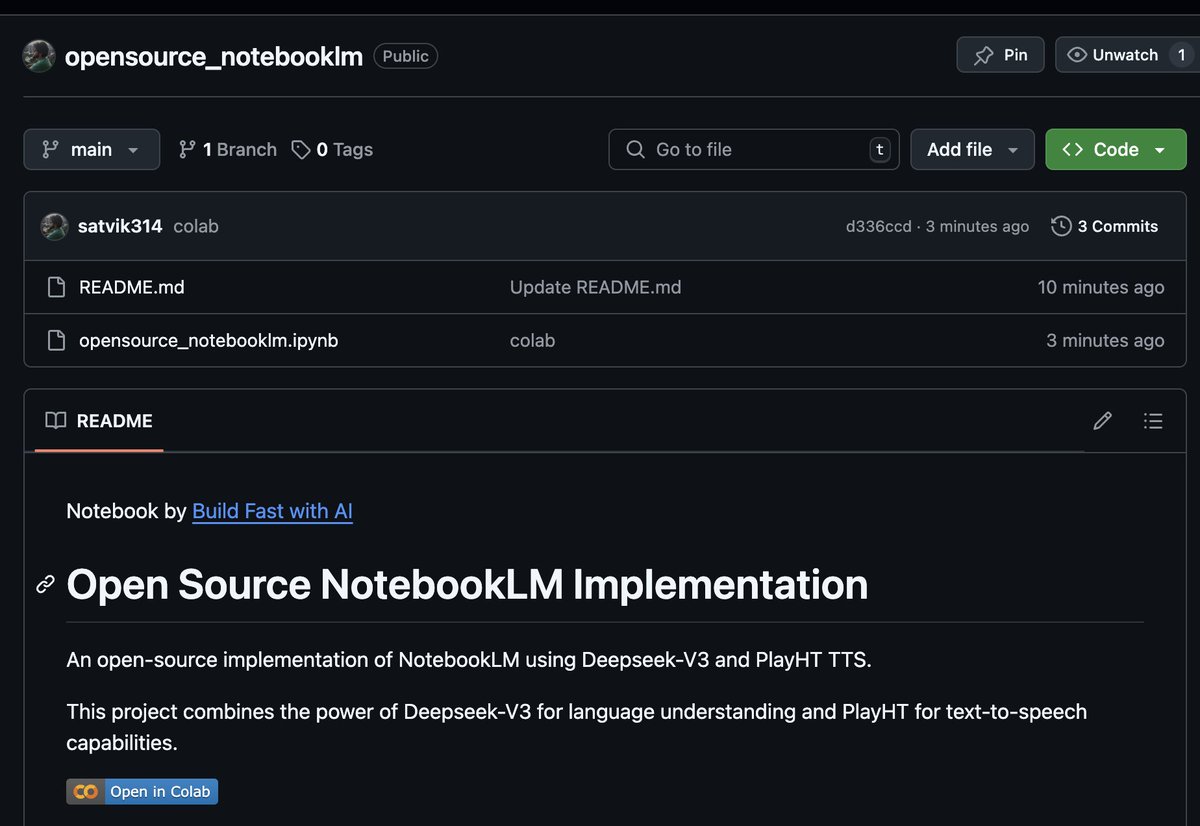

🤯 I created an open-source implementation of @notebooklm!

🤖 DeepSeek-V3 API via @openrouter

🎙️ @play_ht TTS using @FAL API

📚 Create AI podcasts on ANY topic

🆓 100% Customizable

All this in <50 lines of code!

⭐️ Check out the GitHub repo: git.new/opensource-not…
