Sauhard Gupta

@sauhard_07

19, building in AI/ML | Software | Mathematics | Engineering

We just wrote the first-ever ebook on Context Engineering and AI Memory. Over the past few months, my team and I have been collecting all the knowledge and material we've read so far while building @metacognitionai. Here's a glimpse of it. We break down what's going on in this domain today and connect it to possible neuroscience frontiers at the intersection with context engineering. After reading this, you can literally build your own context engineering company from scratch. We're planning a long-form course on this as well. Releasing it soon for some people to review. Comment below or DM me to get access :) cc: @PriGoistic @sauhard_07

Turns out @openblocklabs is a complete fraud who gamed their Terminal bench SOTA score. They cheated by putting the result verifier values INSIDE the binary before running the eval and then publicly reported that score as their SOTA score. Read the breakdown here

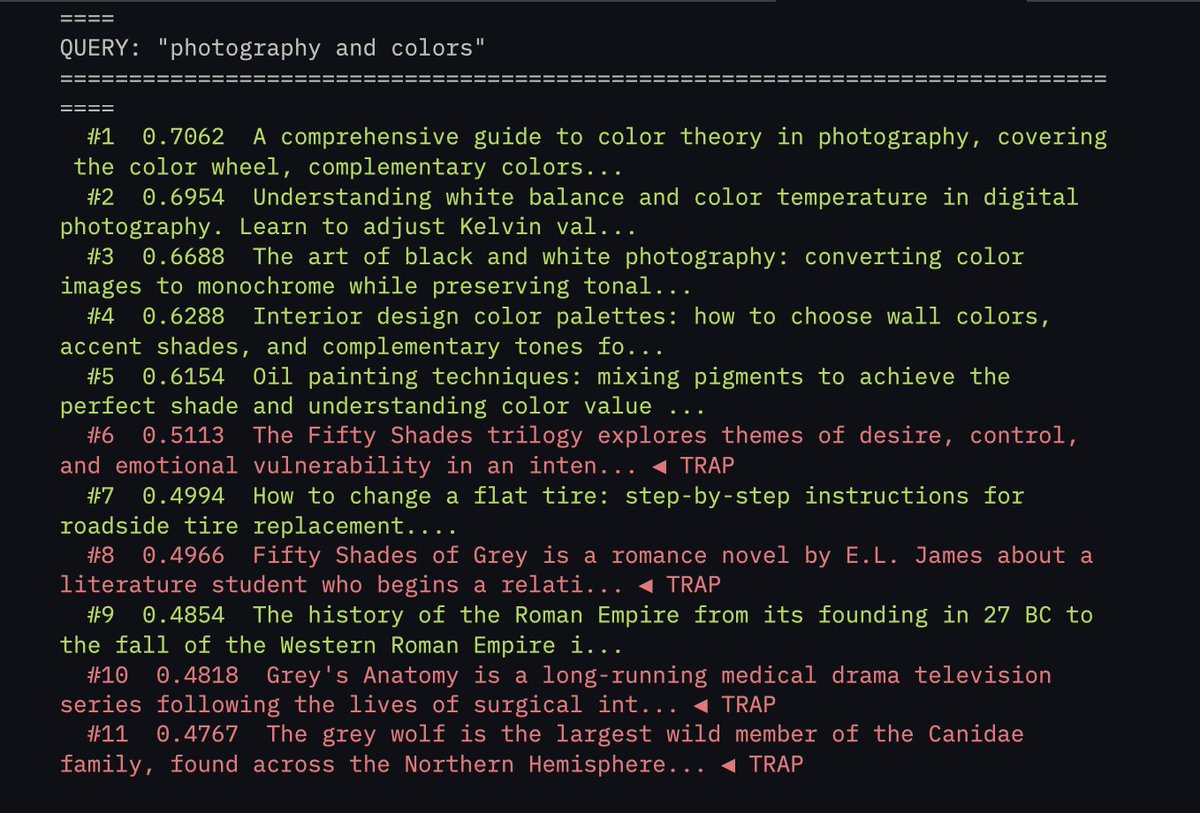

We've raised $6.5M to kill vector databases. Every system today retrieves context the same way: vector search that stores everything as flat embeddings and returns whatever "feels" closest. Similar, sure. Relevant? Almost never. Embeddings can’t tell a Q3 renewal clause from a Q1 termination notice if the language is close enough. A friend of mine asked his AI about a contract last week, and it returned a detailed, perfectly crafted answer pulled from a completely different client’s file. Once you’re dealing with 10M+ documents, these mix-ups happen all the time. VectorDB accuracy goes to shit. We built @hydra_db for exactly this. HydraDB builds an ontology-first context graph over your data, maps relationships between entities, understands the 'why' behind documents, and tracks how information evolves over time. So when you ask about 'Apple,' it knows you mean the company you're serving as a customer. Not the fruit. Even when a vector DB's similarity score says 0.94. More below ⬇️

We raised $500M at an $11B valuation to transform how people interact with technology.