Our position paper arguing against the anthropomorphization of intermediate tokens has been accepted to #ICML2026! I am tickled pink as I reversed the roles and did the writing and rebutting myself with "advice" from my students.. 😎

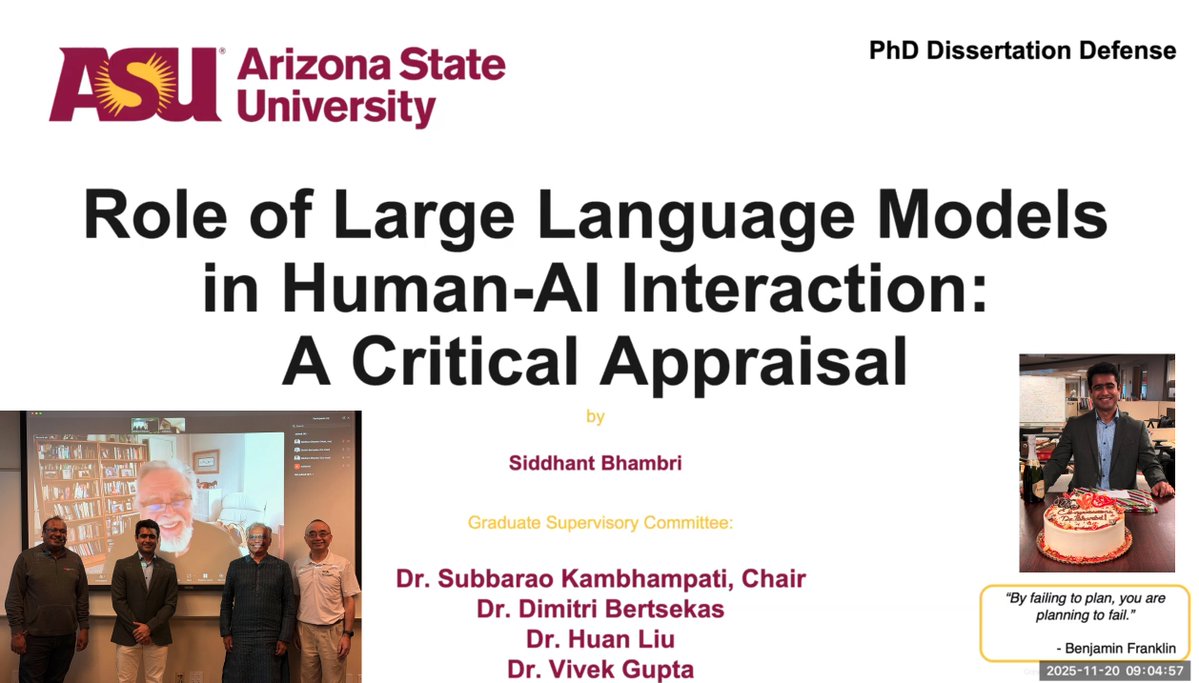

Siddhant (Sid) Bhambri

@sbhambr1

PhD @ Yochan Lab, ASU

#ACL2026 just accepted a paper led by Yochanites @sbhambr1 & @biswas_2707 that shows, in a Q&A setting, that the intermediate tokens in LRMs (1) don't necessarily need to have user-interpretable semantics and (2) distilling models with traces having semantics doesn't necessarily improve accuracy. 1/

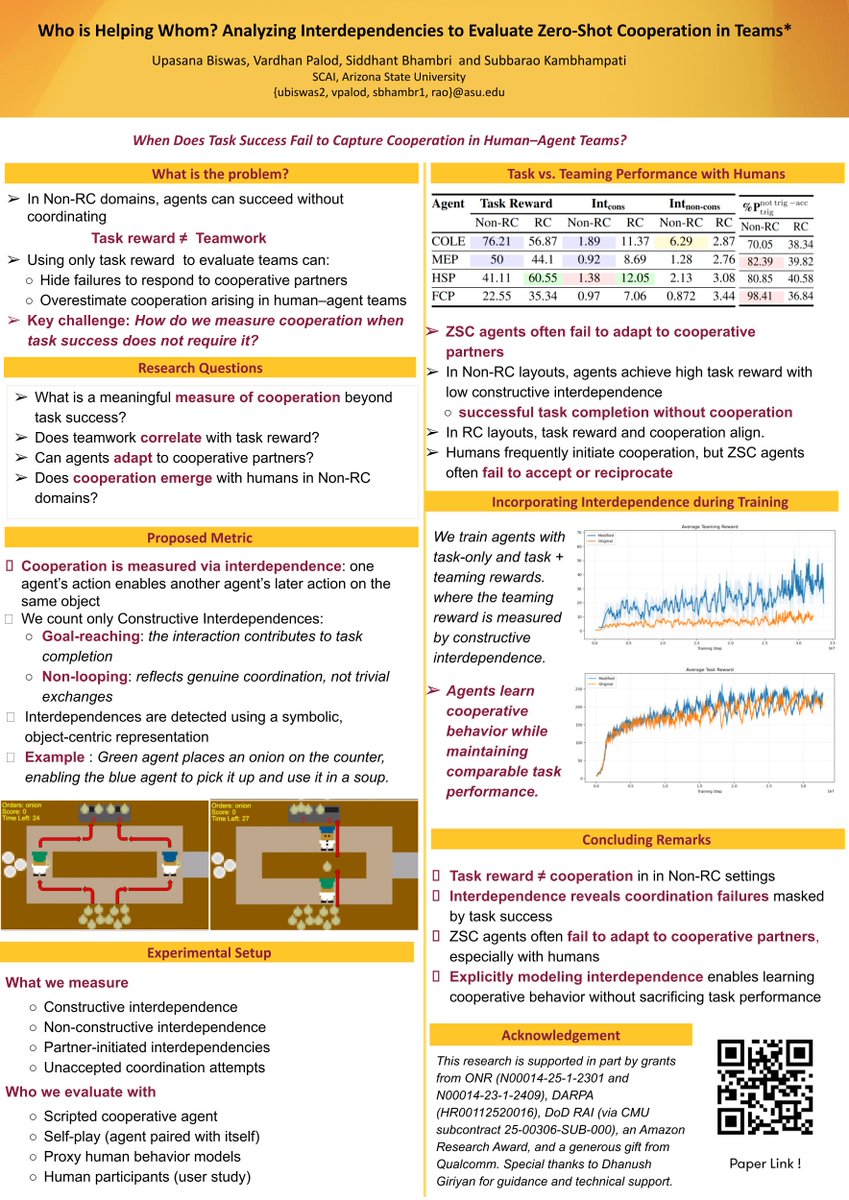

What if your cooperative AI agent is actively avoiding you? Despite significant interest in having human and AI agents team constructively to solve problems, most work in the area focuses on the bottom-line task reward rather than any actual cooperation between the agents. In many cases where the task can, in principle, be completed by either agent alone, albeit with additional burden (i.e., the task doesn't require cooperation), task reward by itself gives no indication of whether the agents are actually cooperating. In a paper to be presented at #AAAI2026, Yochanite @biswas_2707 (w/ @PalodVardh12428 and @sbhambr1) develops a novel metric to analyze inter-dependencies between human and AI agents, and uses that measure to evaluate the cooperation induced by several SOTA AI agents trained for cooperative tasks. We see that most SOTA AI agents that claim to be RL-trained for "zero-shot cooperation" actually don't induce much inter-dependence between the AI and human agents at all. This calls into question the prevalent approach of training AI agents on task reward and hoping for cooperation to emerge as a side effect!

Our recent research efforts have questioned the narrative that the LRM intermediate tokens have semantics (see x.com/rao2z/status/1… ). Some may counter these with "..but I read the traces, and they do seem to make sense.." and claim RLVR post-training must be making the traces correct. We analyze this disconnect in terms of local coherence vs. global validity/correctness of the trace. 1/

Semantics of Intermediate Tokens in Trace-based distillation in Q&A tasks: Yochanites @sbhambr1 and @biswas_2707 looked at distillation on a Q&A task, and found a disconnect between the validity of derivational traces and the correctness of the solution.. 🧵 1/

📢 ReAct popularized the "Think 🤔" magic by claiming to help LLMs plan by "synergizing reasoning and acting." @v_mudit & @sbhambr1 investigated the claims, and have a thing or two to say about the extreme brittleness of ReAct-style prompting. 👉arxiv.org/abs/2405.13966 1/