Michele Sciabarra

328 posts

@sciabarracom

Founder at https://t.co/naGmtcz2GM - building Trustable the all-in-one simple solution for Vibe Coding with Private AI - think Lovable but private

London, England · Joined December 2016

428 Following · 506 Followers

@trikcode AI understands non-English languages perfectly. I use English because it feels like the standard, but occasionally I drop in requests in Italian and it does not make any difference.

English

@Jackson__Price Do you already have a plan or... will you discover it?

English

The Apache OpenServerless website is now live and you can install a pre-release of OpenServerless: locally with Docker, on a Linux server, or on AKS and EKS. openserverless.apache.org Try it and let us know.

English

MastroGPT (dot com), our builder for GPT Apps, is a secret but not too secret. We registered the domain and in order not to leave the site empty, we put a simple form for a waitlist, which did not go unnoticed! Dozens of requests came in!

At that point, while we were experimenting with the various OpenAI APIs, in particular the Assistant API, Antonio had the idea of making an assistant for... the waitlist! Said and done.

Now the waitlist is a chatbot, not a form.

Below is a short video showing how it works: if you go to mastroGPT.com you will find an assistant that shows you what you can do with mastroGPT. We are still training him, but he is capable. And if you want to be among the first to experience it, you can ask to register for early access. We will be notified and give you access as soon as it is available.

All powered by Nuvolaris. Which should combine the power of serverless with the development of the new wave of AI applications...

What do you think?

Ah, it's a bit slow but we use the OpenAI API and it's not super responsive yet...

English

Learning, in our age, is a bit like cutting off a head of the Hydra, the famous many-headed serpent of mythology. As soon as you learn something, something new is born that renews or challenges it.

Therefore, I always try to distil what is valuable, and that is also what we do every day with Nuvolaris when we face the next integration or technological challenge: what should we keep?

Among the innovations, there is something we put the spotlight on that is still little talked about: are you familiar with the Assistants API?

Let me tell you about some of the concepts we are integrating.

In a nutshell, OpenAI offers the possibility of creating PERSONALISED models of its core engine. You provide context, data, instructions, code and you can shape your AI. You train it, teach it what to do, define its tone and behaviour.

They are similar to GPT apps, but you can integrate them into your own environment through a REST API, hence the name Assistants API.

One important point: Assistants have a thread-based interaction life cycle and memory. During a conversation or as long as that thread exists, they take into account the data communicated by the user.
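To make the thread idea concrete, here is a minimal sketch in plain Python. It does not call OpenAI at all: the `Thread` class and its methods are hypothetical stand-ins that only illustrate why an assistant can take earlier user messages into account for as long as the thread exists.

```python
from dataclasses import dataclass, field


@dataclass
class Thread:
    """Hypothetical stand-in for a conversation thread (not the OpenAI client)."""

    messages: list[dict] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        # Each message is appended to the thread and kept until the thread is discarded.
        self.messages.append({"role": role, "content": content})

    def context(self) -> str:
        # Conceptually, the accumulated messages are what the model sees on each run,
        # which is how earlier user preferences stay available later on.
        return "\n".join(f"{m['role']}: {m['content']}" for m in self.messages)


thread = Thread()
thread.add("user", "I prefer dry white wines.")
thread.add("assistant", "Noted. Any budget in mind?")
thread.add("user", "Under 20 euros. What do you suggest?")
```

When the last question is answered, `thread.context()` still contains the earlier preference, so the suggestion can be tailored to it.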

This is valuable, for example, within a chat to guide a customer to the best choice based on his or her tastes or for any business need.

With the code interpreter, which has the ability to read and run code (this is only available on some models), you can invoke capabilities such as turning your text into audio.

You can have function calling and a world of functions still in beta that we are studying and integrating within Nuvolaris and MastroGPT.
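As a rough illustration of function calling, the sketch below describes one function in the JSON Schema style used for tool definitions and routes a call to local code. The function name `register_early_access`, the in-memory `WAITLIST` and the `dispatch` helper are all hypothetical, and no request is sent to OpenAI: the model's requested call is simulated as a name plus a JSON string of arguments.

```python
import json

# Tool description in the JSON Schema style used for function calling.
# The function name and its fields are hypothetical.
REGISTER_TOOL = {
    "type": "function",
    "function": {
        "name": "register_early_access",
        "description": "Add an email address to the early-access waitlist.",
        "parameters": {
            "type": "object",
            "properties": {"email": {"type": "string"}},
            "required": ["email"],
        },
    },
}

# Hypothetical in-memory waitlist; a real backend would persist this.
WAITLIST: list[str] = []


def register_early_access(email: str) -> dict:
    WAITLIST.append(email)
    return {"registered": email, "position": len(WAITLIST)}


# Maps the function names the model may request to local Python callables.
HANDLERS = {"register_early_access": register_early_access}


def dispatch(name: str, arguments_json: str) -> dict:
    """Run the local function the model asked for; arguments arrive as a JSON string."""
    handler = HANDLERS.get(name)
    if handler is None:
        return {"error": f"unknown function: {name}"}
    return handler(**json.loads(arguments_json))
```

The result of `dispatch` would then be handed back to the model so it can phrase the reply to the user.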

We will not tire of repeating that to integrate these solutions to the best of our ability, you need an environment that is easy to manage and highly scalable.

We will show you some examples shortly!

English

OpenAI recently introduced GPT apps, also known as GPTs, which have the potential to become a business as big as mobile apps.

They were announced a few weeks ago and have been talked about by various commentators, but it seems that the scale of the news was lost behind the hype that followed after the sudden and unexpected dismissal (and return) of Sam Altman as CEO of OpenAI.

Returning to the technical aspect, GPT apps are 'customised' versions of ChatGPT that instruct it to impersonate precise roles. OpenAI provides a number of examples: there is a creative writing assistant, a negotiator, a colouring image generator, a cocktail expert and even ... Father Christmas!

The important thing is that these customisations can be created by anyone using a creation function. The creation of a GPT goes through a series of configuration elements, in which in addition to the name and description, instructions are given on how it should behave and a knowledge base.

But probably the most interesting and revolutionary aspect is the backend 'actions'. A GPT app can be configured to execute a series of functions to perform a requested task. These functions are provided to ChatGPT along with the app with a configuration telling it how they are to be used.

The amazing thing is that if you configure the functions correctly, ChatGPT will actually query them and use them to answer your question.

To give an example, suppose you create a GPT app that assists an accountant, and you ask ChatGPT for the turnover of a given client. If this information is in a database accessible with one of the functions provided, ChatGPT will actually use it to answer your question.

So you can ask ChatGPT: what is the turnover of X, and ChatGPT will answer you. But it can also get much more complex by applying analysis to various clients.

Note that these functions are in all respects REST APIs, and Nuvolaris is a FaaS, which in itself makes it very easy to implement such functions as REST APIs. We have also equipped our UI with an interface to easily create both the functions and their descriptors.
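For the accountant example, a backend function could look roughly like the sketch below. It follows the Apache OpenWhisk convention for Python actions (a `main` function that receives a dict of parameters and returns a dict). The client names, the figures and the `TURNOVER_BY_CLIENT` table are invented for illustration, and how the function is exposed and described to ChatGPT is left out.

```python
# Hypothetical in-memory data; a real action would query a database instead.
TURNOVER_BY_CLIENT = {"acme": 125000.0, "globex": 98000.5}


def main(args: dict) -> dict:
    """OpenWhisk-style Python action: takes a dict of parameters, returns a dict."""
    client = str(args.get("client", "")).strip().lower()
    if client not in TURNOVER_BY_CLIENT:
        # Returning an explicit error lets the caller explain the problem to the user.
        return {"error": f"unknown client: {client!r}"}
    return {"client": client, "turnover": TURNOVER_BY_CLIENT[client]}
```

Calling `main({"client": "ACME"})` returns the stored figure for that client, which ChatGPT could then turn into a natural-language answer.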

The possibilities offered by GPT apps are unlimited: in this way it is possible to give ChatGPT access to any amount of external documents and information.

Interestingly enough, in many areas people think about fine-tuning an LLM without realising that it can give unpredictable results, that the data set must be very clean, and that it is a job for experts. Instead, what is needed in 99% of cases is to extract the relevant information and then have ChatGPT rework it.
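The "extract, then rework" approach can be sketched in a few lines. This is only a toy under stated assumptions: the documents are invented, `retrieve` uses naive word overlap rather than a real search engine, and `build_prompt` just assembles the text that would be sent to the model. No model is called.

```python
import re

# Hypothetical knowledge base; in practice this would come from a database or document store.
DOCUMENTS = {
    "invoices": "Client Acme had a turnover of 125000 euros in 2023.",
    "holidays": "The office is closed on 25 December.",
}


def tokenize(text: str) -> set[str]:
    # Lowercase word set, ignoring punctuation.
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, documents: dict[str, str], top_k: int = 1) -> list[str]:
    """Return the top_k documents sharing the most words with the question."""
    words = tokenize(question)
    ranked = sorted(
        documents.values(),
        key=lambda text: len(words & tokenize(text)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, snippets: list[str]) -> str:
    """Assemble the extracted information and the question into one prompt."""
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Answer using only this information:\n{context}\n\nQuestion: {question}"


question = "What is the turnover of Acme?"
prompt = build_prompt(question, retrieve(question, DOCUMENTS))
```

The model only has to rephrase what was extracted, so no fine-tuning is involved.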

My belief is that this is the new frontier of development, a new world that integrates 'traditional' software with AI assistants.

English

"Guys, we just have to wait for the Internet for our Windows NT".

This is a phrase I've often repeated in our startup meetings to explain that, whatever technology you develop, it doesn't sell until... it becomes indispensable, and yet the only way to develop something successful is to get ahead of the curve. The key phrase is 'by the time it is clear that it is a good idea, it is too late'.

When your technology becomes something that everyone wants, the fact that you developed it earlier gives you an advantage that your competitors cannot quickly close.

I always use the example of Windows NT. It was born as the 'next generation' of OS/2, but Microsoft and IBM had fallen out in the meantime, given the poor sales of OS/2. So Microsoft was left with an advanced operating system on its hands, one that would no longer be sold together with IBM.

All this at a time when Microsoft was the market leader with Windows running on DOS. And nobody gave a damn about an advanced operating system! Running Word and Excel on Windows was what most people needed!

Microsoft, however, did not abandon the project for a new operating system. Knowing full well that DOS sucked, it renamed the project 'Windows New Technology', meaning that it would replace DOS in the future, and put it on sale anyway as a network operating system (alongside DOS-based Windows).

Windows NT initially sold poorly, because no one gave a damn about an advanced operating system when, for all the applications that existed, the patched DOS-based Windows, although prone to frequent 'blue screens', was good enough.

Windows NT did poorly until the internet came along, and the need emerged for an advanced operating system to handle internet servers. At that point, sales took off.

The same thing is happening to us. With Nuvolaris, we created a distribution of a rather advanced FaaS system, based on Apache OpenWhisk, which is a real gem (and is used in production in many clouds). But most people don't seem to care much about it: all they need is to get their cloud applications up, more or less as they are, on the patchwork that is Kubernetes. An advanced FaaS system seemed to be of no use to anyone.

Until ChatGPT came along, and the new wave of applications based on it. Which just happen to require their own functions as a backend, and our Nuvolaris FaaS system is an incredible fit.

ChatGPT is to Nuvolaris what the Internet was to Windows NT. And we're seeing this with our own eyes (or rather, we're rubbing them) as we watch the number of people registering on the waitlist of mastroGPT (dot com), which we haven't even publicised yet...

English

Let me tell you a familiar story, one we have often heard before: that of unstoppable growth, of success that seems to blossom from one day to the next.

But if we dig a little deeper, we discover that behind that sudden triumph there is often a long path of tireless work, sometimes spanning decades.

Well, my story is following the same pattern.

Ever since I announced our commitment to creating a solution for GPTApps with Nuvolaris, requests have been pouring in, and this is only the beginning.

It is clear that Nuvolaris is the ideal choice for several reasons:

- GPT Apps are based on REST APIs, and Nuvolaris was specifically designed for this type of use case.

- It allows the easy development of REST APIs, making the process much simpler than creating a custom server.

- When the number of REST APIs to be developed is large, the practicality of Nuvolaris becomes even more apparent.

- Its structure is inherently SCALA-based, guaranteeing an automatic and facilitated implementation.

- If you are not a SCALA follower, the solution becomes a little more complicated, but clearly, it is a standard worth embracing!

Imagine launching a successful GPT App, with a hundred thousand visitors using it. Well, now imagine that you find yourself holding the bag because behind it is a micro-server on Amazon that cannot support more than three users at the same time, an application constrained by a single database and impossible to scale.

The applications I'm talking about are on another level, they are super scalable and, in this context, are simply necessary and unavoidable.

We are not talking about projects that "maybe one day" will end up on Kubernetes. No, we are talking about something that you have to implement on Kubernetes TODAY and that you have to know how to handle perfectly.

That's why I wish everyone the best of luck in taming this monster!

Or, alternatively, you can opt for Nuvolaris, a simple and intuitive solution. It is the fruit of at least six years of work, of a start-up built around this idea.

It is not something that arrived 'just like that', but rather an answer to a real problem that, when it became urgent, we were here ready to solve.

English

My first 'physical event' will be in Milan on 29 and 30 November.

I will be at WPC 2023 at stand 07 of Code Architects, our Italian implementation partner. Come and meet me to discuss portable serverless and GPT apps!

I have even printed paper business cards (first time too) to hand out. And if you come with your own copy of my book I will sign it for you, I know many people care about that....

The appointment is at the NH Milano Congress Centre in Assago, Stand 07, I will be there all day on 29 and the morning of 30 November.

See you in Milan!

English

I'm really annoying when it comes to code quality... but since I don't have much time lately, I decided to automate myself! Slack Scorn is an app based on generative AI, which writes directly on a Slack channel to criticise our skills as programmers.

Poor Antonio has been the internal target of various criticisms, so he volunteered to be the guinea pig, since he's used to it by now...

Imagine now what kind of content could be developed with this approach? Artificial intelligence writing on channels for you, according to your taste, with your needs and whatever you can put into it. Maybe you'll send it under my profile one day to tell me all about it 😄 This kind of system far surpasses anything we've seen so far and there's no comparison to be made.

Now, the real question is not whether or not the GPT apps will be used or meet with fortune. This is now clear to everyone. We will also soon find out the response of the competitors and I will tell you about it faithfully as always, giving you the opportunity to interact directly from our serverless system.

The question is: are companies ready or not to integrate this type of technology in an EFFICIENT, SCALABLE and PERFORMANT manner? We like to understand things by getting our hands on them and, where possible, providing a real, user-friendly solution.

That is why we developed the demo directly with APIs created on Nuvolaris and will give you clear documentation. We are doing this because we know that in a matter of months this type of service will not be a plus, but something NECESSARY and vital for almost every reality that wants to operate in our field.

Yes, because Sam Altman or not, the road marked out by AI is IRREVERSIBLE.

English

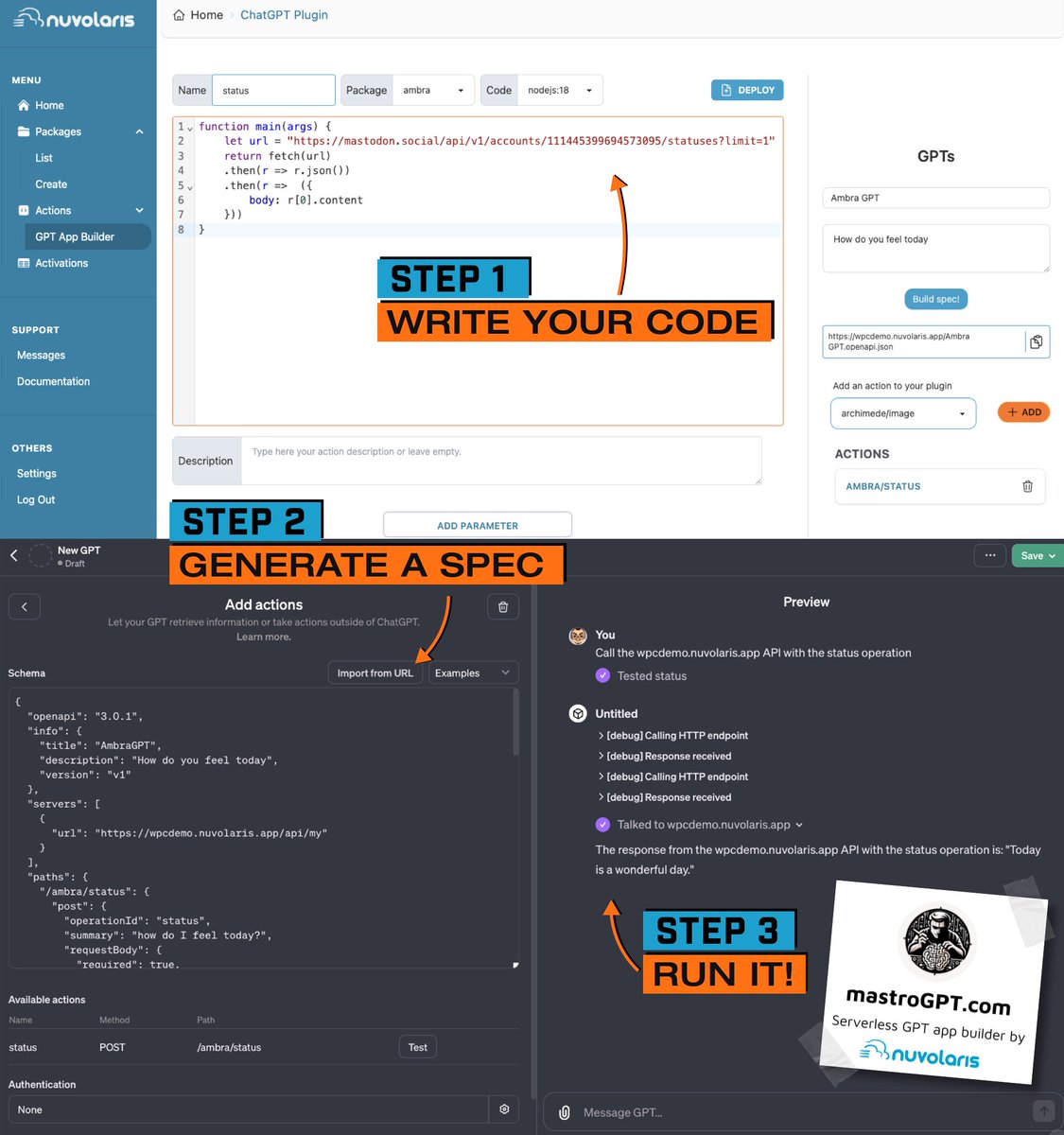

That Altman would be back in the saddle very soon was a foregone conclusion as far as I was concerned. You don't send away a guy who can take a company to a $90bn valuation and gain 100 million users in a few months by word of mouth alone. In fact, we certainly didn't stop and continued undaunted to develop our GPT App Builder, a screenshot of which we show you below!

Let me say a few words about what happened: OpenAI began as a non-profit for a research project, and was organised in such a way that it did not represent the interests of any particular company, so that 'everyone' could benefit from the results. Given the enormous costs of doing this research, it had to resort to heavy funding from Microsoft, which produced something that has little to do with non-profit.

It couldn't last. The board was set up precisely to protect the non-profit nature, but Altman made it frighteningly for-profit. Maybe he didn't even do it on purpose. He just did what he was put there to do: spread the use of AI as widely as possible. The conflict was inherent in the very nature of the company, and it could only explode.

In the end, the only way was to redefine the structure and accept the for-profit nature. The board that fired Altman is leaving and the only one remaining is D'Angelo. I don't know the details but I imagine that's the way things went. This is my reconstruction.

Board: Sam, Greg, Helen, Tasha and Adam and Ilya. Conflict breaks out. Since Adam (D'Angelo) stays and Greg had to be removed to have a majority, I assume he did NOT agree to Sam's removal.

At this point, with Helen and Tasha against and Adam pro, the ball is in Ilya's hand and he has been given the responsibility. Ilya agreed to the removal at first and at this point a board of 3 people first remove Greg from the board and then 3 out of 5 dismiss Sam. Greg also resigns.

Four remain. At this point, however, Ilya realises his mistake, retraces his steps and resigns from the board. Three remain: Helen, Tasha and Adam. Negotiations, but no way out. Microsoft offers a post to Sam. Revolt in OpenAI to oust the board. Board cornered. At this point there is no escape, Helen and Tasha also leave, Adam remains and a new "entrepreneurial" board will control OpenAI.

In the meantime we move forward because GPT Apps are truly revolutionary and we want to offer everyone an easy-to-use tool to make them!

English

Ambra strikes again!

When I state that something should be simple and intuitive, I'm not just saying it in words: I want to demonstrate it through actions!

In recent days, you've had the opportunity to see how a GPT app can call external services, engage in dialogue, and interact in surprising ways.

But what you haven't seen yet, and what is crucial for scalability, is how Nuvolaris integrates GPT APPs. We've incorporated a ready-to-use UI, intuitive and user-friendly, which will be available in our SaaS, mastroGPT (dot com).

Instead of delving into lengthy descriptions, we will show you concretely what it's all about. And once again, we'll have our Ambra assist us in doing so.

The video is coming soon! Are you ready?

English

Microservices: a zoo or a farm?

When talking about microservices, the mind automatically goes to containers and Kubernetes. But Kubernetes is not the only choice.

It's possible to adopt a FaaS instead, such as Nuvolaris, which moreover runs in Kubernetes and guarantees all its functionality.

The problem, however, does not lie in the use of Kubernetes but in what it handles. Kubernetes essentially handles containers.

Now, what is not clear to many is that containers are quite strange and difficult 'beasts' to manage, each with many different peculiarities. Managing a Kubernetes cluster therefore becomes as complex as managing a Zoo.

There is the slow, heavy and memory-intensive container. There is the irritable container that every so often starts consuming CPU like hell. There is the container that has usage peaks and needs to be carefully monitored.

Each container ends up needing special surveillance, special resources, and being confined in a specially made 'cage'.

This is the main source of complexity and cost of Kubernetes: the fact that containerised microservices are all very different from each other and must be managed differently.

All this would seem inevitable if a different approach did not exist. Have a farm and not a Zoo. This implies that your entire 'animal park' must consist of animals of the same type, possibly docile and small in size, and with modest needs.

This is why we produce milk from sheep even though giraffe milk is considered superior. But of course, managing a herd of giraffes and elephants is much more complicated than one of sheep.

Translating this into the world of micro-services, the definition itself says it all: your micro-services should be small and possibly very similar. And that is what a FaaS allows you to do.

A FaaS like Nuvolaris allows you to write your microservices not as complex containers but as simple functions. It abstracts away all their complexity and reduces them to pure business logic. It imposes execution and memory constraints, and gives them only the resources they can acceptably use. It also automatically replicates them when needed.

In some ways it is the only sensible, sober, and acceptable way to run truly microservice applications. Unfortunately, it's very common to take your legacy applications and slap them into the Kubernetes Zoo, then hire legions of gatekeepers to keep the induced complexity in check.

Don't do that. Instead, breed your microservices as tame functions with Nuvolaris that you can replicate in large numbers, and you don't even have to worry about having cages, guardians and special food for each of them!

A lawn for grazing and a simple fence will be enough to keep everything under control, and your wallet and productivity will benefit!

#kubernetes #microservices

English

Rumours are that Sam Altman was fired to save humanity from A.I.

OpenAI is a non-profit company whose aim is to realise AGI (Artificial General Intelligence) for the benefit of humanity.

GPT-3 and GPT-4 and ChatGPT are only an intermediate step towards the realisation of this project, which is essentially a research project.

On the board of OpenAI sits Ilya Sutskever, its Chief Scientist, who is a scientist, not an entrepreneur, and is convinced of his mission to realise an Artificial Intelligence for the benefit of mankind, a 'slightly higher' purpose than making a lot of money.

Given the success of ChatGPT, there is now a for-profit subsidiary, which is the company that sells ChatGPT subscriptions. The proceeds, however, must go to the non-profit and must be spent on developing AGI.

There are justifiably doubts about the dangers of A.I., especially since, and this is worth remembering, no one really knows why LLMs of a certain size exhibit the astounding additional capabilities we know of.

For now, ChatGPT's intelligence is rather limited, but what abilities would larger models develop? No one can say whether a larger artificial intelligence would really love mankind, or see humans as inferior, dangerous beings to be eliminated.

The doubt exists and therefore caution is needed in developing new models.

However, it was stated that OpenAI was not developing GPT-5, not least because of the enormous costs, and because in any case resources are needed to continue the research that ensures A.I. is not only capable but also safe...

Now, the problem is that the success of ChatGPT has led to the need to monetise it quickly, not least because competitors are certainly not sitting on their hands.

After the Developer Day, where new features were announced, there was a spike in new registrations, which led to server resources being exhausted to the point that new registrations were blocked.

In the meantime, it appears that the CEO, Sam Altman, and the chairman, Greg Brockman, have instead started a new fundraising drive, valuing the company at 90 billion (3 times the previous 30 billion), without the approval of the board.

The Chief Scientist was concerned about this situation, because there would be a lack of resources for research and it would accelerate in the direction of developing a new, potentially dangerous A.I.

Apparently a meeting was then called, where Sam said that as CEO he had a duty to anticipate situations without board approval, while Ilya disagreed. When it came to a vote, Sam lost and was dismissed.

The scenario is therefore one from a science fiction film in which a money-minded CEO wants to accelerate the development of a Skynet and a scientist who wants to save humanity wants to stop it...

English

Are you familiar with GPT Apps?

I've already told you about them, I know, but the topic is constantly developing, so I'll ask you again.

GPT Apps are game-changing applications in the tech world, taking advantage of the cutting-edge GPT AI technology developed by OpenAI.

But what makes GPT apps so special?

They simply open up a boundless world of possibilities by integrating seamlessly with various REST APIs! Whether creating texts, sending notifications, converting external files, diving into the Internet of Things or tackling virtually any task via an API, GPT apps are the tool of the future.

Embracing GPT Apps means ushering in a new era of efficiency and innovation.

Whether you are a technology enthusiast, developer or entrepreneur, the potential uses are as vast as your imagination!

OK I know you've already been told in your company "Well let's wait and see how it evolves", but you're not going to listen to them, are you?

#GPTapps #GPT

English