Scott Mace

@scottmace

Technology, security & healthcare writer/journalist.

Amid the Pentagon’s fight with Anthropic, the Trump admin is rewriting contracting rules so that federal officials can override any AI company’s internal protocols on safety, privacy, surveillance or autonomous warfare usage

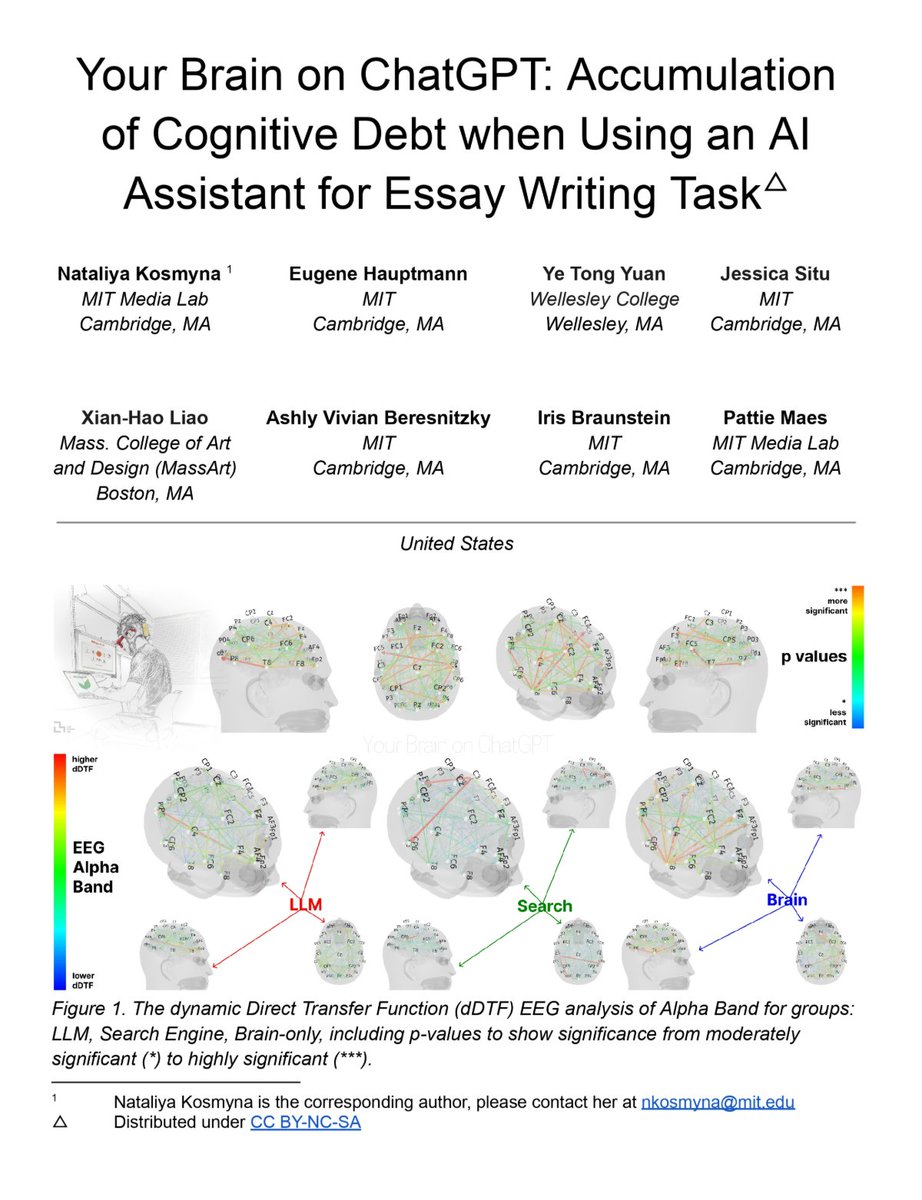

Ezra Klein: "Having AI summarize a book or paper for me is a disaster. It has no idea what I really wanted to know and wouldn't have made the connections I would've made. I'm interested in the thing I will see that other people wouldn't have seen, and I think AI typically sees what everybody else would see. I'm not saying that AI can't be useful, but I'm pretty against shortcuts. And obviously, you have to limit the amount of work you're doing. You can't read literally everything. But in some ways, I think it's more dangerous to think you've read something that you haven't than to not read it at all. I think the time you spend with things is pretty important." @ezraklein

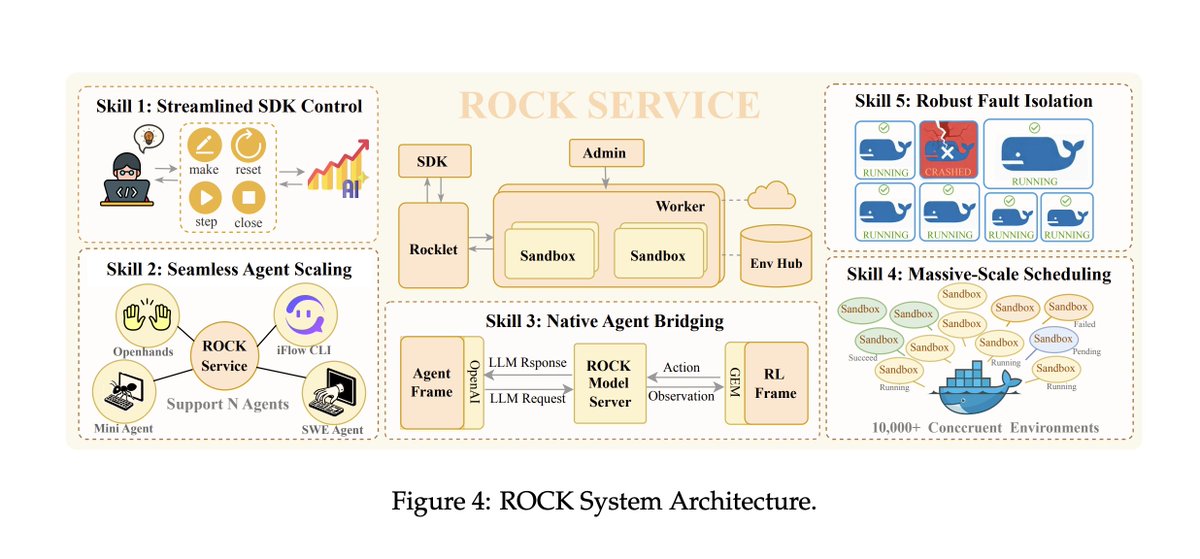

insane sequence of statements buried in an Alibaba tech report