Sebastian Farquhar

600 posts

@seb_far

Research Scientist @DeepMind - AI Alignment. Associate Member @OATML_Oxford and RainML @UniofOxford. All views my dog's.

New Google DeepMind safety paper! LLM agents are coming – how do we stop them finding complex plans to hack the reward? Our method, MONA, prevents many such hacks, *even if* humans are unable to detect them! Inspired by myopic optimization but better performance – details in🧵

lol huh did we accidentally just fix hallucination problem ?

For those who have been using our method published in an earlier ICLR version, a minor tweak leading to a big performance improvement is to estimate the entropy with a slightly different summation. 7/

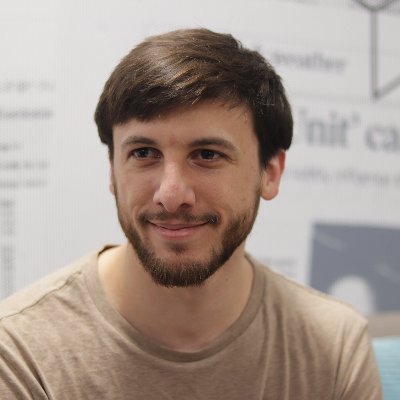

We are hiring! Google DeepMind's Frontier Safety and Governance team is dedicated to mitigating frontier AI risks; we work closely with technical safety, policy, responsibility, security, and GDM leadership. Please encourage great people to apply! 1/ boards.greenhouse.io/deepmind/jobs/…

Is your LLM hallucinating? 👻 Our @Nature paper shows how to detect when an LLM is making things up. A 'confabulating' LLM answers with inconsistent meanings when re-asked the same question. We use this to estimate uncertainty and detect confabulations. Learn more 🧵👇 1/