Sarvesh Patil retweeted

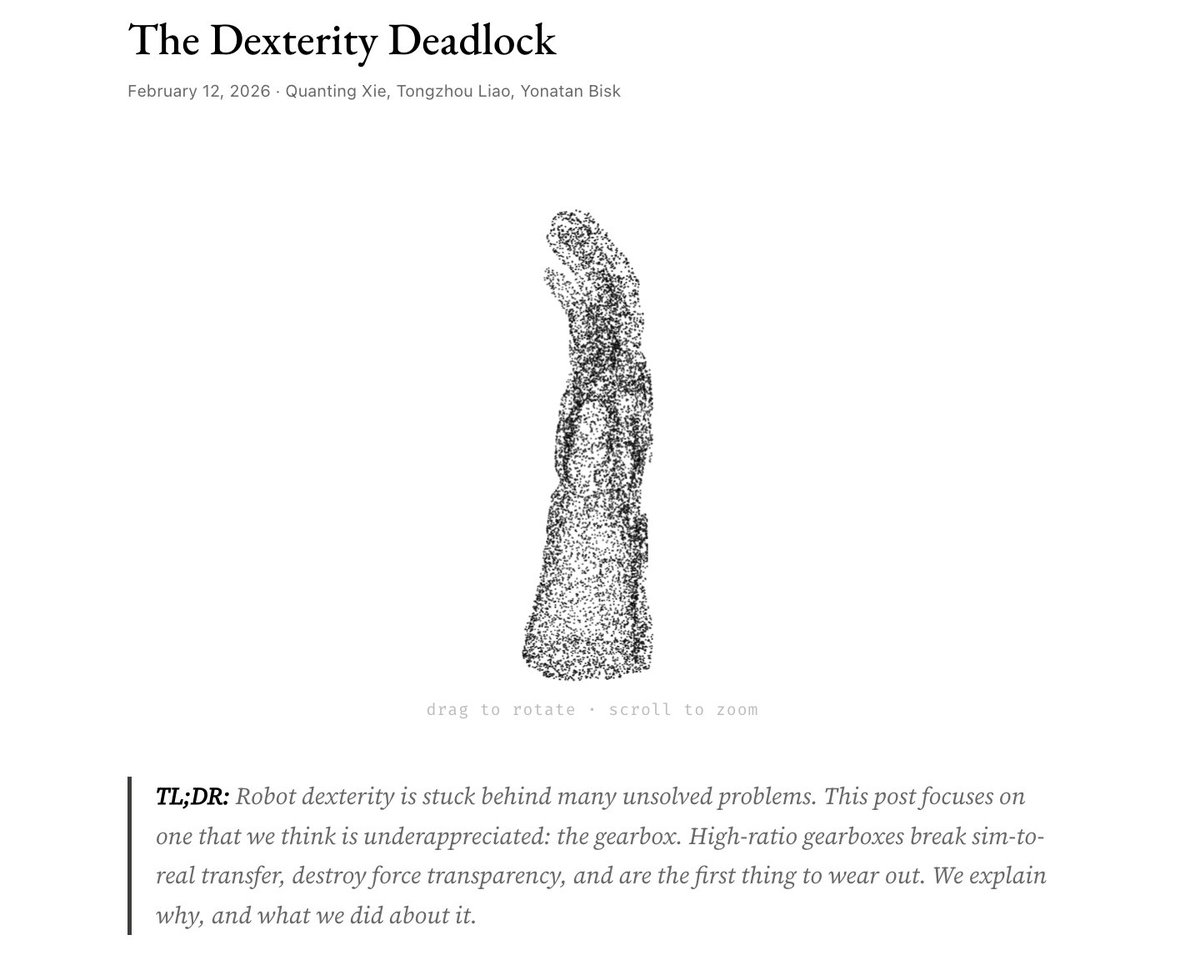

This post elucidates a couple of things that have been troubling me: ergosphere.blog/posts/the-mach…. I believe people are *always* the ends, not the means, of research. When we start to automate away "good friction," I think we sacrifice the true objective for short-term proxy gains.
