Surface Reconstruction from Gaussian Splatting via Novel Stereo Views arxiv.org/abs/2404.01810 Project: gs2mesh.github.io

Shaked Brody

Paint by Inpaint: Learning to Add Image Objects by Removing Them First. Image editing has advanced significantly with the introduction of text-conditioned diffusion models. Despite this progress, seamlessly adding objects to images based on textual instructions without

Amazon presents Question Aware Vision Transformer for Multimodal Reasoning. paper page: huggingface.co/papers/2402.05… Vision-Language (VL) models have gained significant research focus, enabling remarkable advances in multimodal reasoning. These architectures typically comprise a vision encoder, a Large Language Model (LLM), and a projection module that aligns visual features with the LLM's representation space. Despite their success, a critical limitation persists: the vision encoding process remains decoupled from user queries, often in the form of image-related questions. Consequently, the resulting visual features may not be optimally attuned to the query-specific elements of the image. To address this, we introduce QA-ViT, a Question Aware Vision Transformer approach for multimodal reasoning, which embeds question awareness directly within the vision encoder. This integration results in dynamic visual features that focus on the image aspects relevant to the posed question. QA-ViT is model-agnostic and can be incorporated efficiently into any VL architecture. Extensive experiments demonstrate the effectiveness of applying our method to various multimodal architectures, leading to consistent improvement across diverse tasks and showcasing its potential for enhancing visual and scene-text understanding.

what paper (not your own, maybe not even in your own area) can you not stop telling people about?

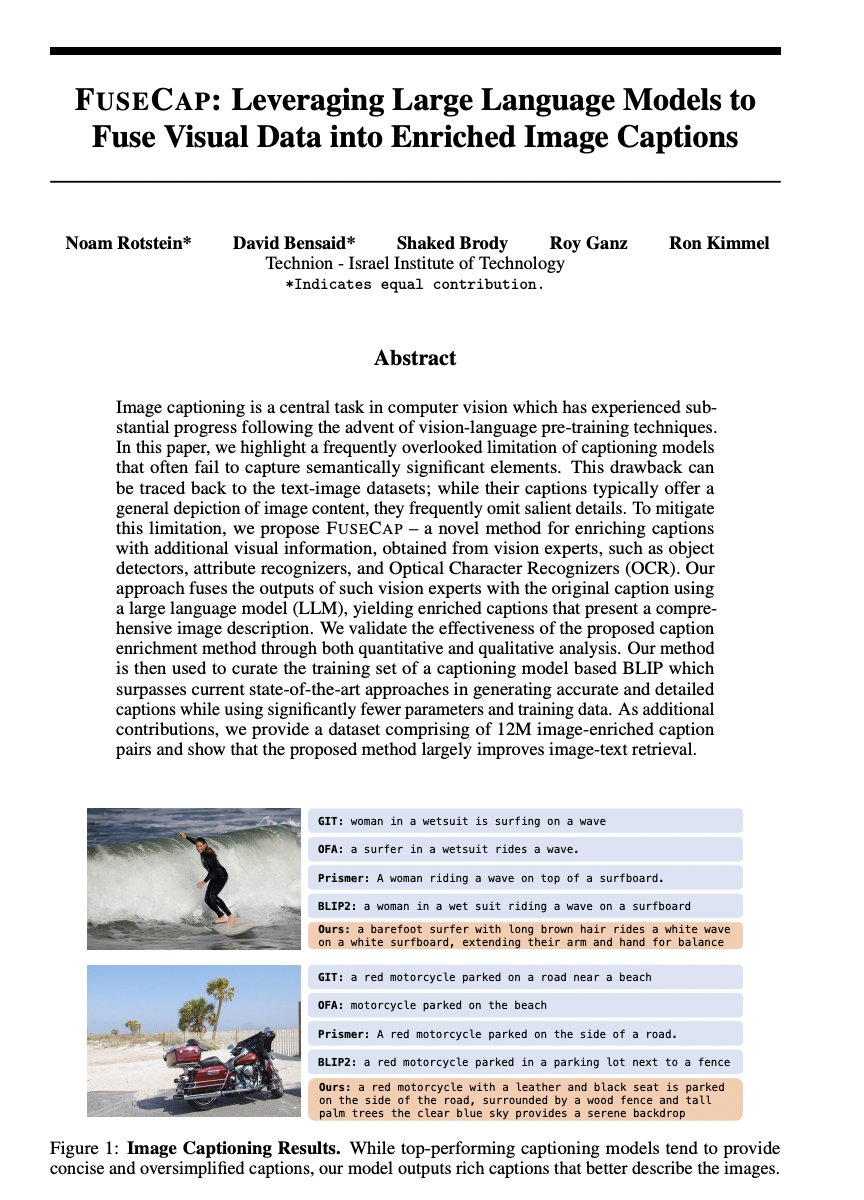

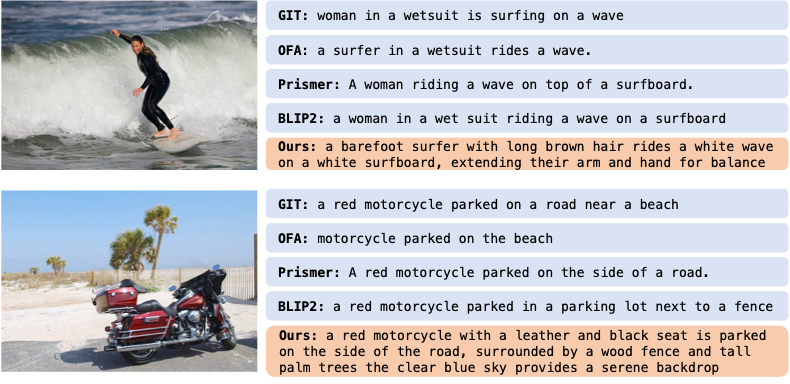

FuseCap: Leveraging Large Language Models to Fuse Visual Data into Enriched Image Captions. We propose FuseCap, a novel method for enriching captions with additional visual information obtained from vision experts such as object detectors, attribute recognizers, and Optical Character Recognizers (OCR). Our approach fuses the outputs of such vision experts with the original caption using a large language model (LLM), yielding enriched captions that present a comprehensive image description. We validate the effectiveness of the proposed caption enrichment method through both quantitative and qualitative analysis. Our method is then used to curate the training set of a BLIP-based captioning model, which surpasses current state-of-the-art approaches in generating accurate and detailed captions while using significantly fewer parameters and training data. As additional contributions, we provide a dataset comprising 12M image-enriched caption pairs and show that the proposed method largely improves image-text retrieval. paper page: huggingface.co/papers/2305.17… demo: huggingface.co/spaces/noamrot…

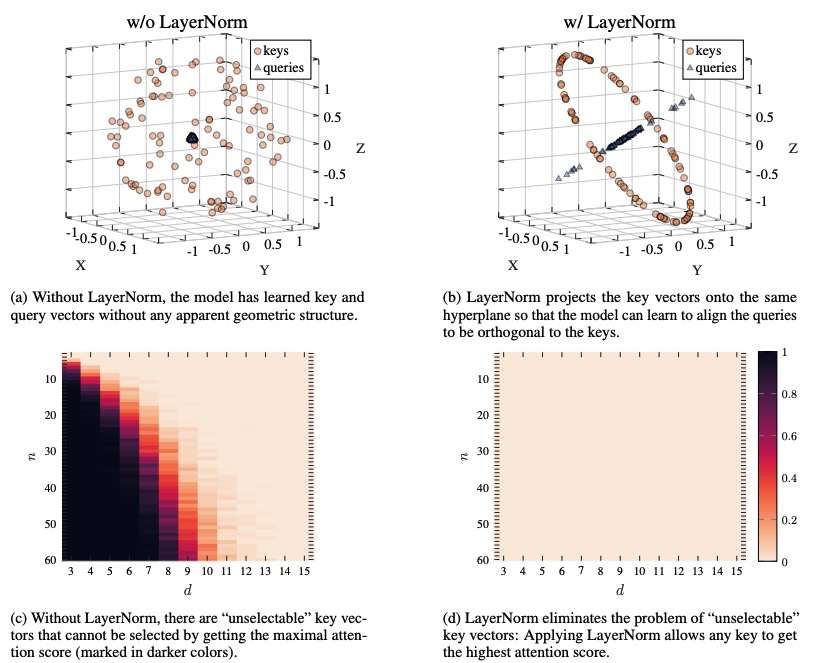

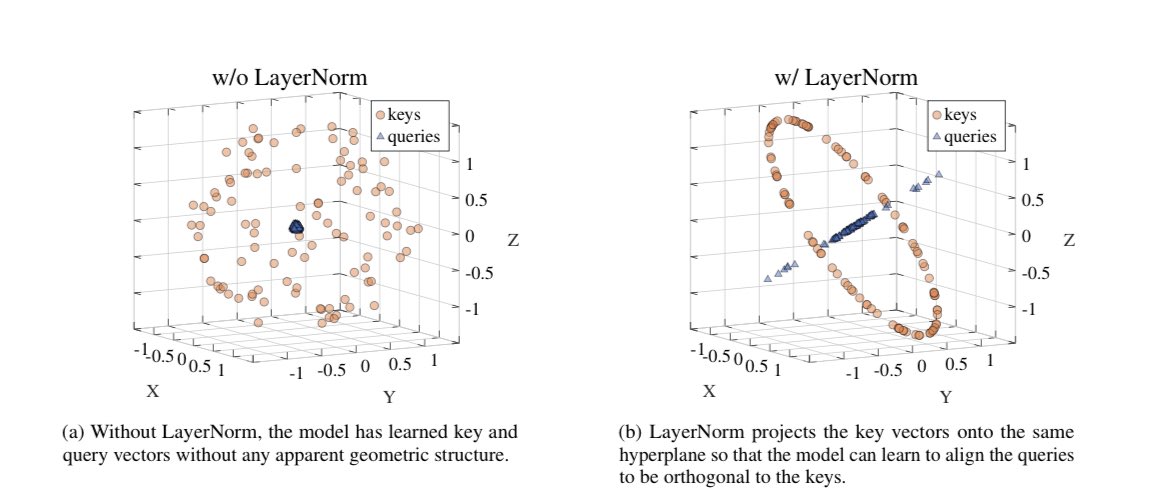

I'm thrilled to announce that our paper "On the Expressivity Role of LayerNorm in Transformers' Attention" has been accepted to Findings of ACL 2023 #ACL2023. 1/5