Uri Alon

367 posts

Uri Alon

@urialon1

Research Scientist @GoogleDeepMind

מסתכל על מה שקורה במקסיקו וחושב לעצמי שראש קרטל סמים זה לא מקצוע שיוחלף בקרוב ע"י ai

@IdoCharmDiet אם היית יכול לבחור תרגיל אחד לעשות, מה היית בוחר?

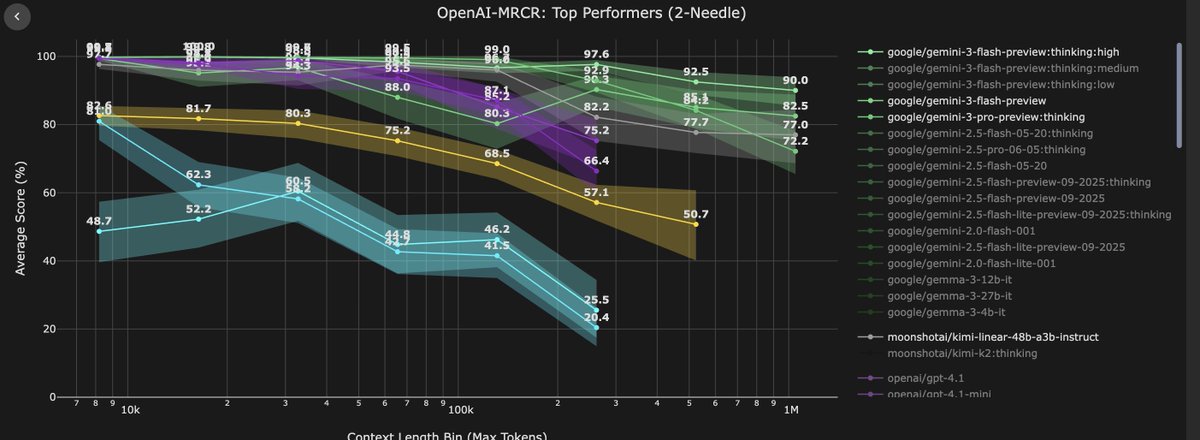

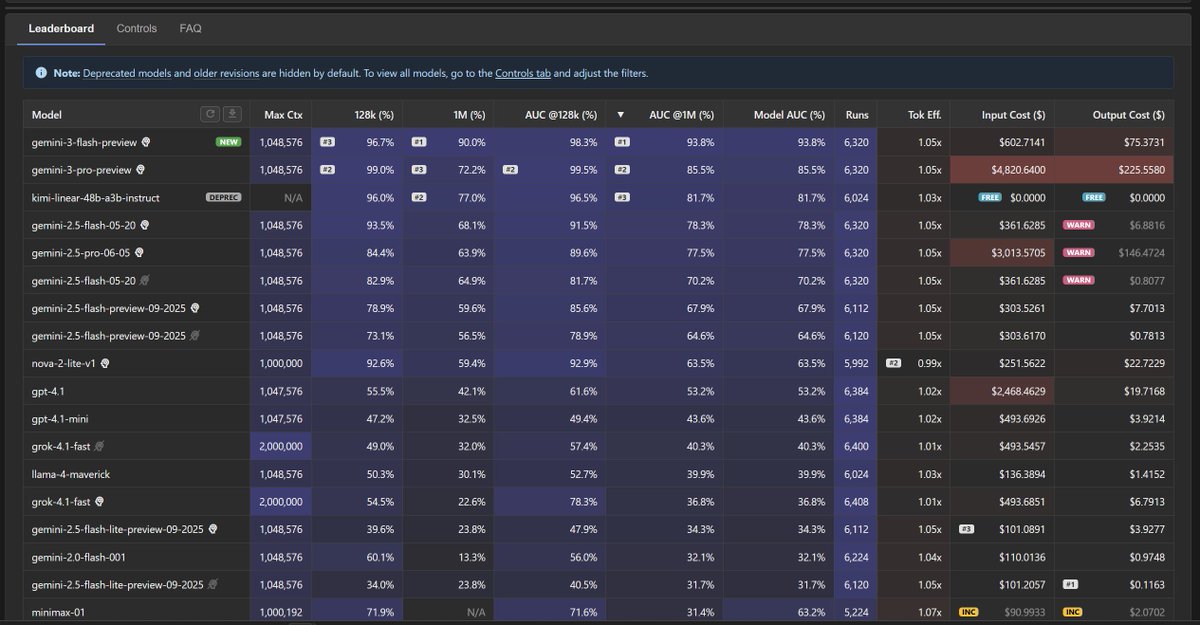

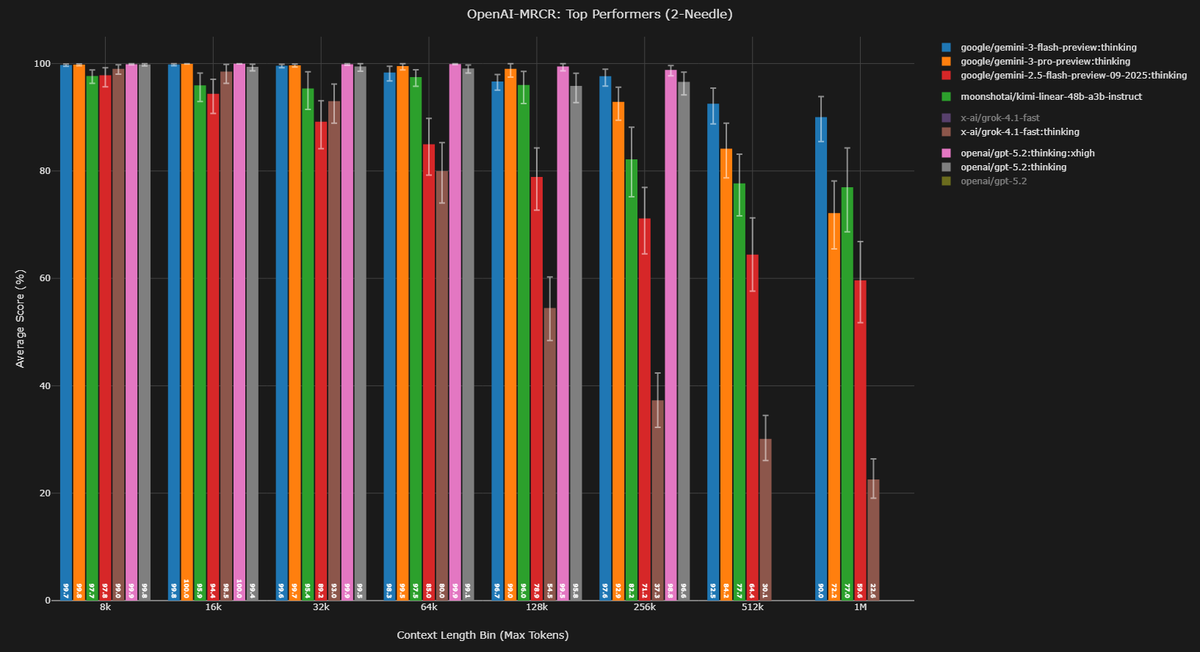

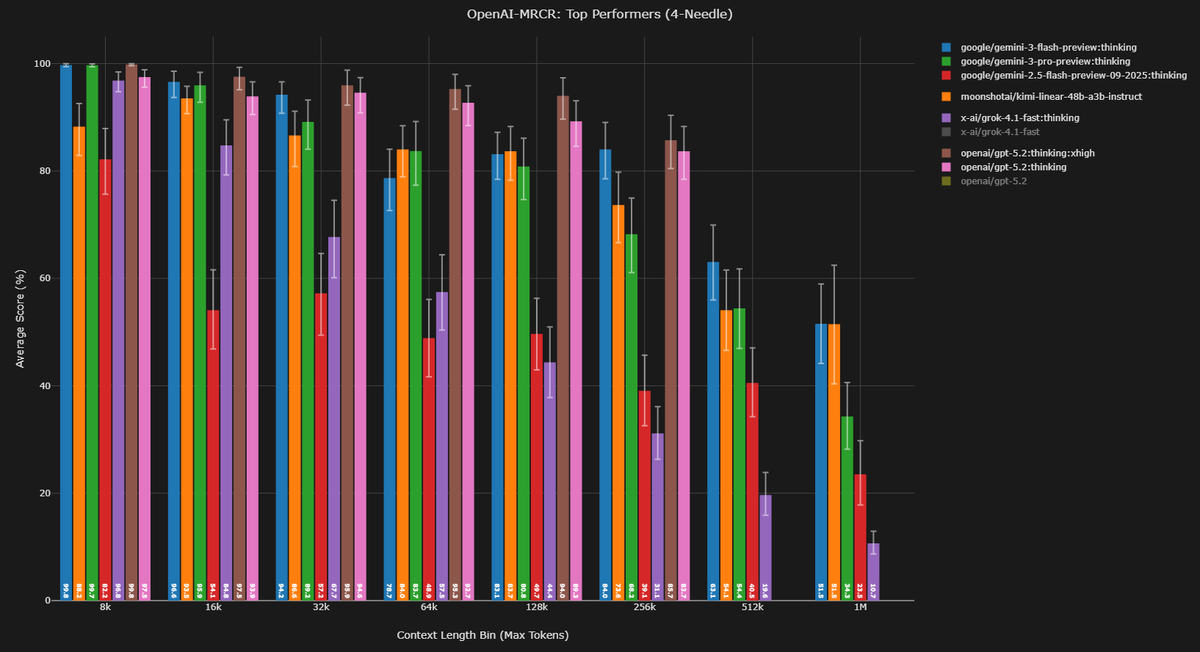

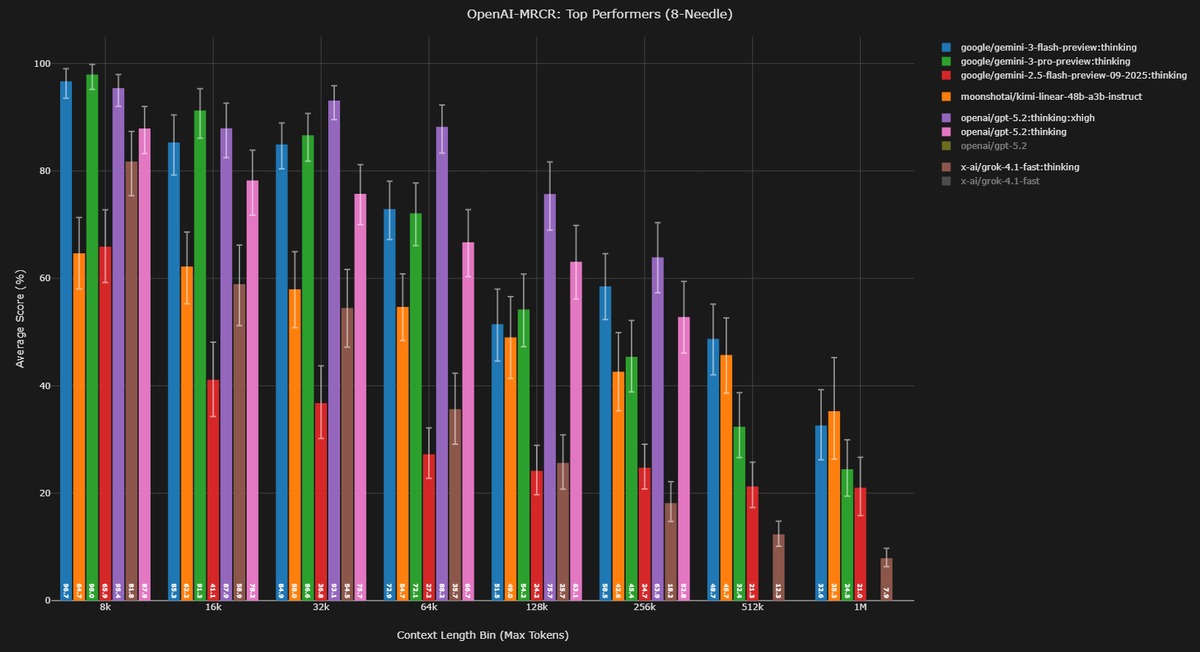

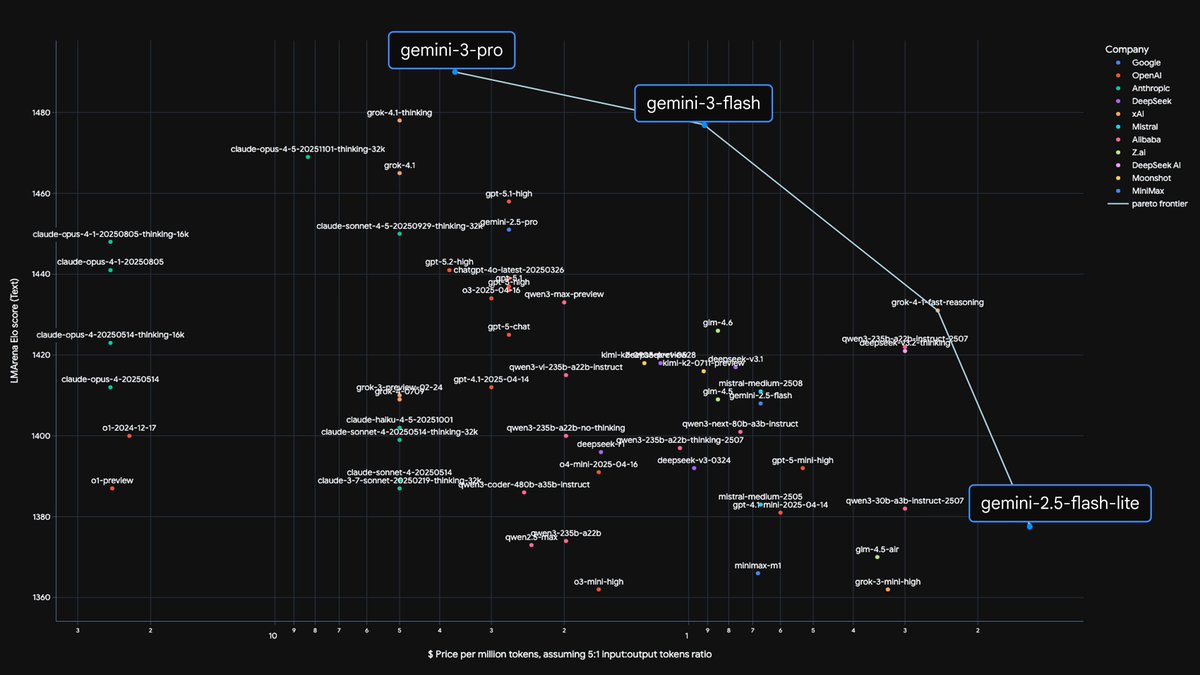

for more context on OpenAI's MRCR benchmark, curated at contextarena.ai by @DillonUzar , Gemini 3 flash achieved 90% acc @ 1 million ctx this performance is SoTA across all models, most SoTA models cant even go past 256k ctx at this length, you cant be using standard attention, it'll perform bad anyways, and ofc it'll be very expensive. (Gemini 3 flash is $0.5 in $3 out) Some sort of efficient attention is implemented, so thats why the price is hitting the same level as a linear/sparse attention model BUT linear attention (hybrid) is only good at long ctx bench, and suck at knowledge task. G3F is great at knowledge, even #3 on Artificial Analysis Index. they cant be using any SSM/mamba variants hybrid (at least w/ standard attn) either as those suck at long ctx. Same as sparse attention, as you can see from DeepSeek 3.2's DSA So what black magic did they do? guess i'll never find out :( (unless i join google...??) contextarena.ai/?models=cohere…

i'll elaborate: a common computation pattern in DL happens to coincide with a known operator in linear algebra (matmul), and so we conveniently borrow linalg notation and terminology (matrices, vectors, ranks, norms). but this is just jargon. the algebric properties arent needed.