Neta Shaul

@shaulneta

PhD Student at @WeizmannScience

🚀🎬 We introduce TMD (Transition Matching Distillation): 480p videos generated from text prompts in < 3 NFEs!
1️⃣ Main backbone for feature extraction and lightweight head for iterative refinement
2️⃣ Distilled from Wan2.1 14B T2V combining MeanFlow & DMD2
🔗 research.nvidia.com/labs/genair/tmd

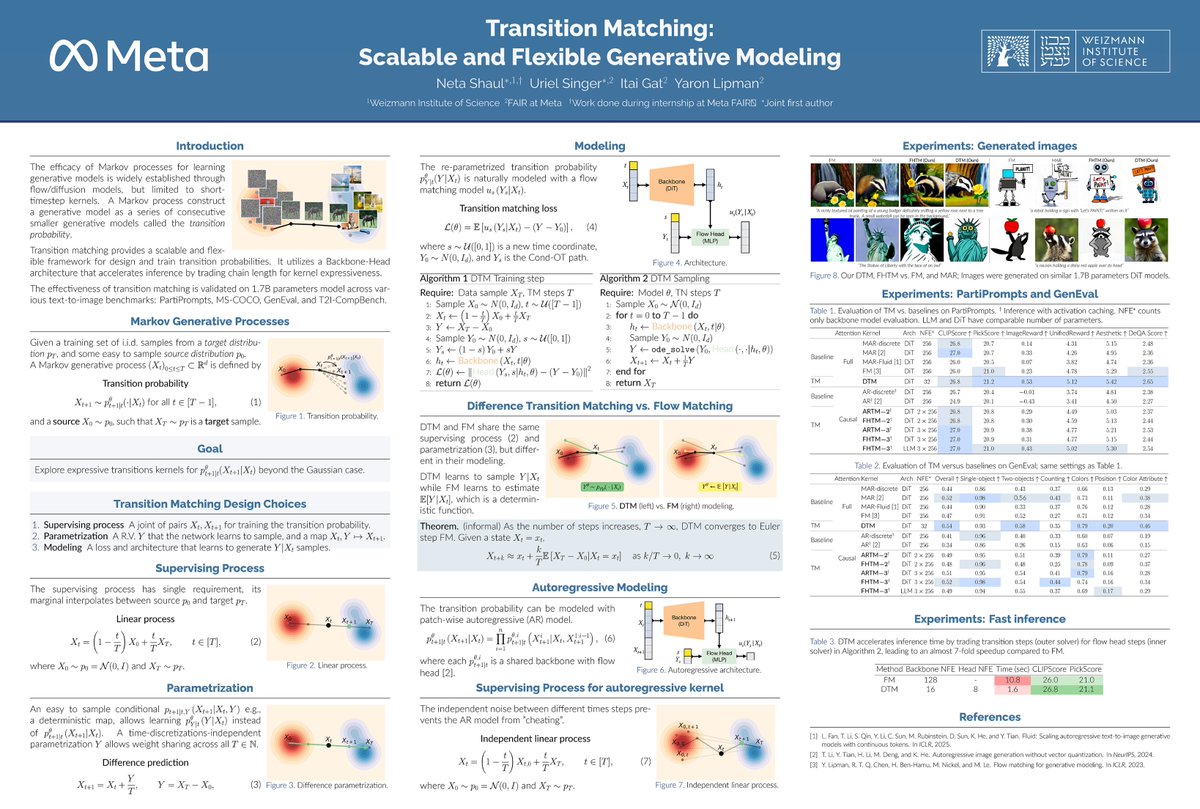

#NeurIPS2025 "Transition Matching" explores generative Markov processes with expressive transition kernels, going beyond the Gaussian kernel used in diffusion and flow models. Interested? Let's chat! 📍 Poster #3609 🕒 Wed at 11am - 2pm 📄 arxiv.org/abs/2506.23589

New work: “GLASS Flows: Transition Sampling for Alignment of Flow and Diffusion Models”. GLASS generates images by sampling stochastic Markov transitions with ODEs - allowing us to boost text-image alignment for large-scale models at inference time! arxiv.org/pdf/2509.25170 [1/7]

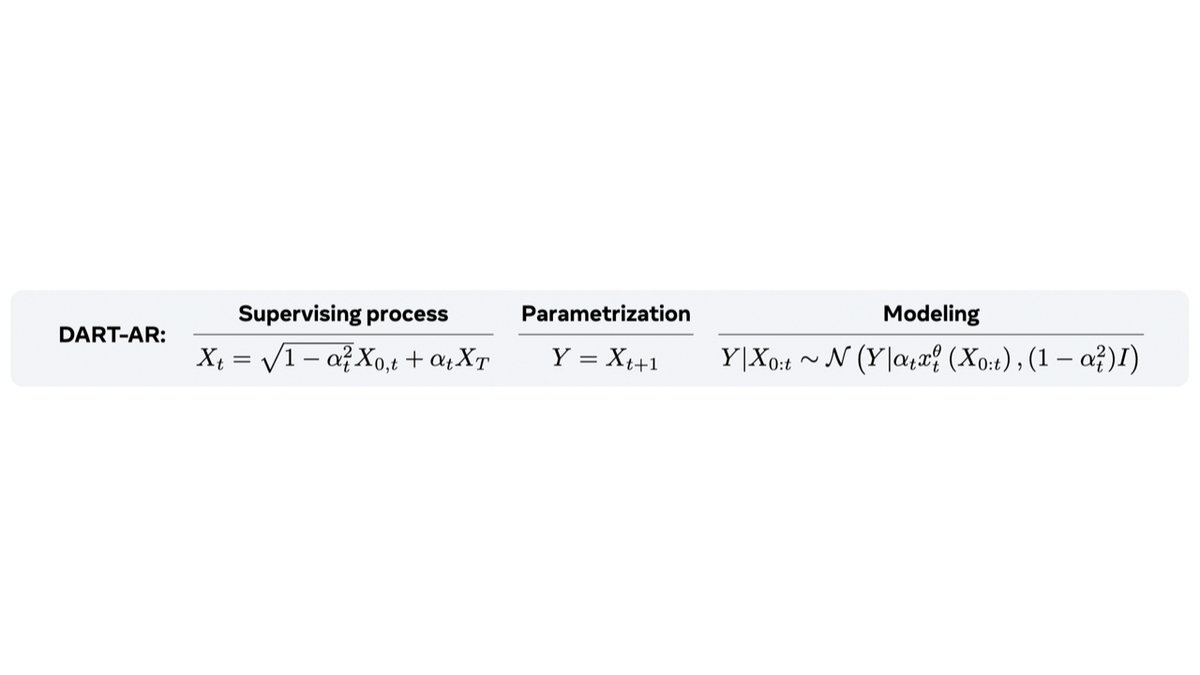

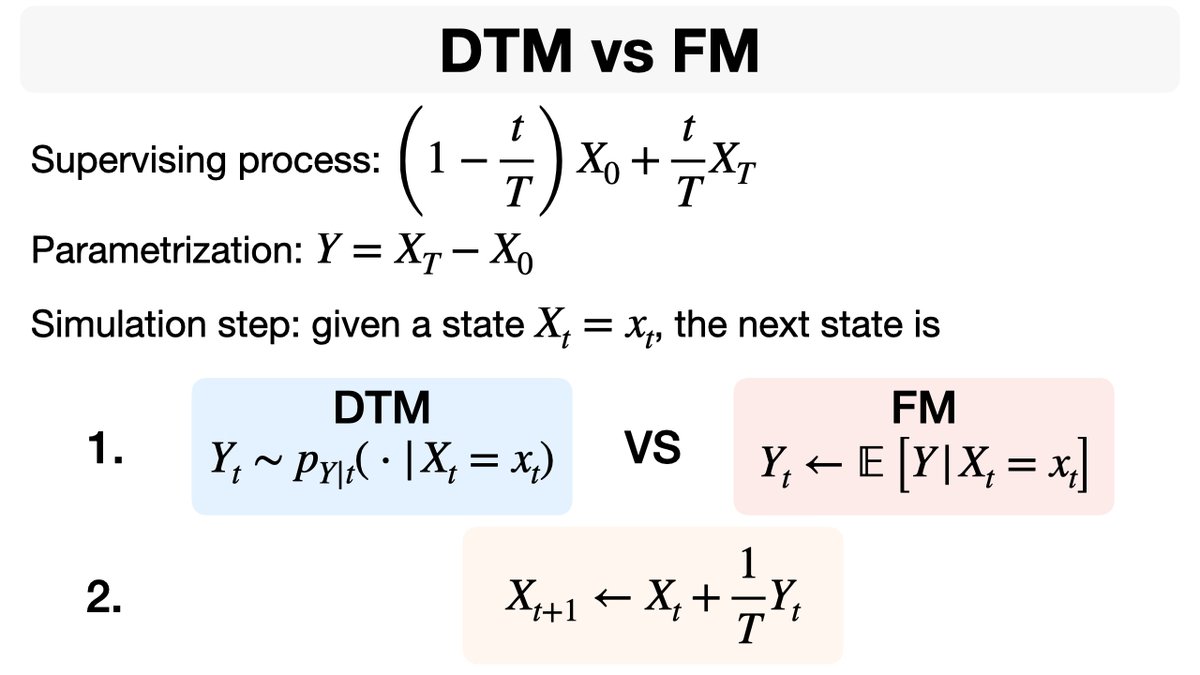

If you're curious to dive deeper into Transition Matching (TM) ✨🔍, a great starting point is understanding the similarities and differences between Difference Transition Matching (DTM) and Flow Matching (FM) 💡.

[1/n] New paper alert! 🚀 Excited to introduce Transition Matching (TM)! We're replacing short-timestep kernels from Flow Matching/Diffusion with... a generative model 🤯, achieving SOTA text-2-image generation! @urielsinger @itai_gat @lipmanya
