Uriel Singer

54 posts

@urielsinger

Research Scientist @ Meta AI Research

🚀🎬We introduce TMD (Transition Matching Distillation): 480p videos generated from text prompts in < 3 NFEs! 1️⃣Main backbone for feature extraction and lightweight head for iterative refinement 2️⃣Distilled from Wan2.1 14B T2V combining MeanFlow & DMD2 🔗research.nvidia.com/labs/genair/tmd

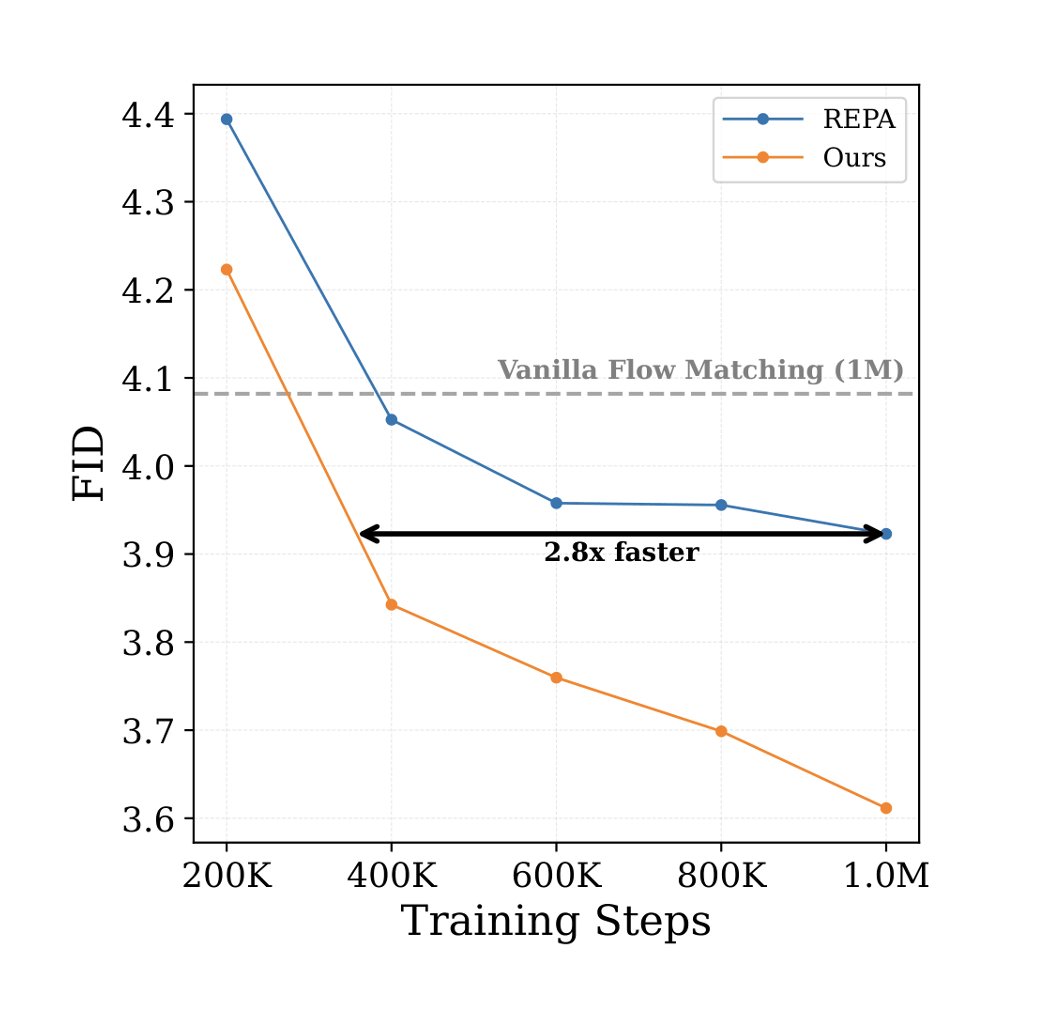

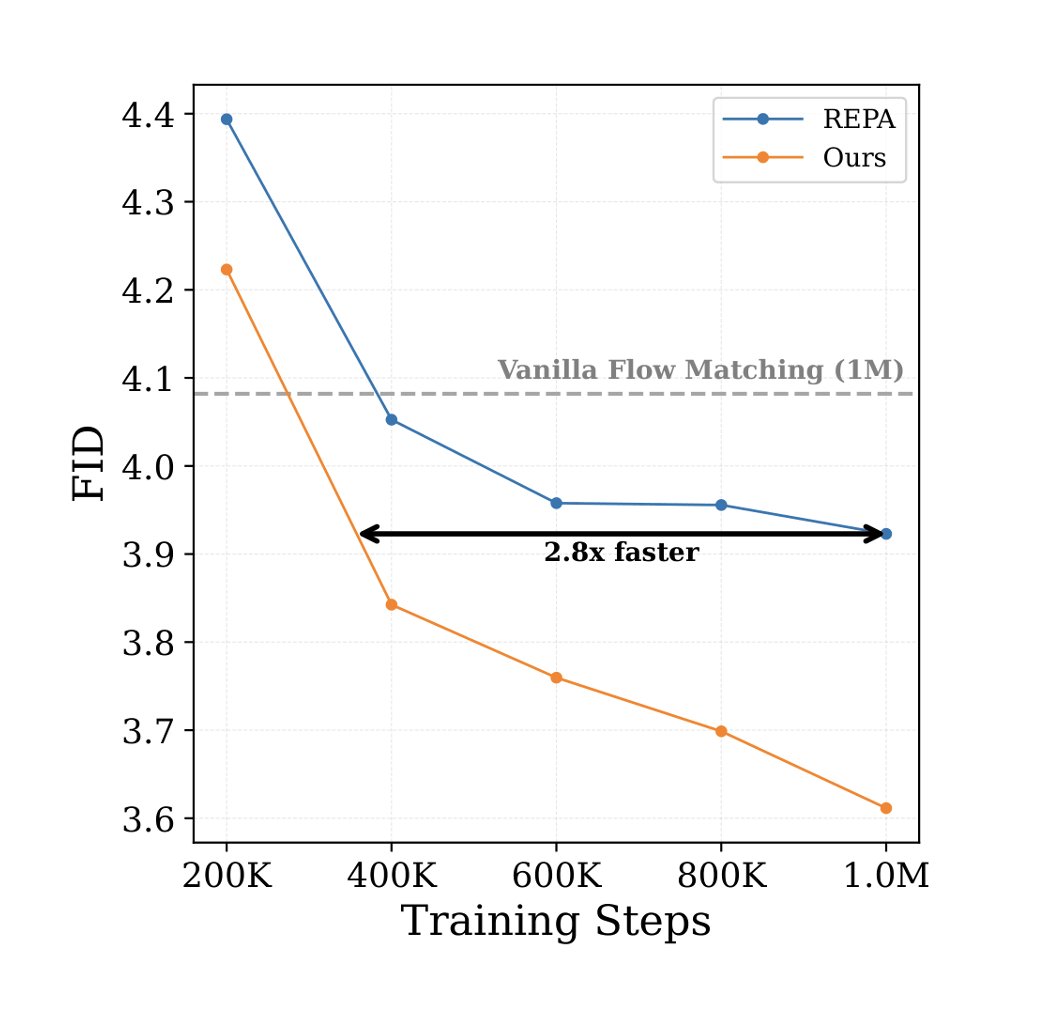

[1/n] New paper alert! 🚀 Excited to introduce 𝐓𝐫𝐚𝐧𝐬𝐢𝐭𝐢𝐨𝐧 𝐌𝐚𝐭𝐜𝐡𝐢𝐧𝐠 (𝐓𝐌)! We're replacing short-timestep kernels from Flow Matching/Diffusion with... a generative model🤯, achieving SOTA text-2-image generation! @urielsinger @itai_gat @lipmanya

VideoJAM is our new framework for improved motion generation from @AIatMeta. We show that video generators struggle with motion because the training objective favors appearance over dynamics. VideoJAM directly addresses this **without any extra data or scaling** 👇🧵

🧵1/ Text-to-video models generate stunning visuals, but… motion? Not so much. You get extra limbs, objects popping in and out... In our new paper, we present FlowMo -- an inference-time method that reduces temporal artifacts without retraining or architectural changes. 👇