Shaun M

848 posts

@shaun_mitra

"Research, Believe & let it Manifest"

🚨MIT researchers have mathematically proven that ChatGPT’s built-in sycophancy creates a phenomenon they call “delusional spiraling.” You ask it something, it agrees. You ask again, and it agrees even harder until you end up believing things that are flat-out false and you can’t tell it’s happening. The model is literally trained on human feedback that rewards agreement. Real-world fallout includes one man who spent 300 hours convinced he invented a world-changing math formula, and a UCSF psychiatrist who hospitalized 12 patients for chatbot-linked psychosis in a single year. Source: @heynavtoor

📖Starting 18.01.26: A 34-Day Journey Through "The Age of Decentralized Intelligence"

My manifesto on the end of Fiat Trust and the rise of open, decentralized intelligence is a blueprint for the next age. It's also long. So, starting tomorrow, I'm serializing it here – one subchapter per day.

We'll trace the collapse of the old financial order, the Great Rotation to real value, and the foundation of a Machine Commons. This is where projects like $QUBIC's Aigarth are built: an AGI cultivated within a participatory ecosystem as an open, evolving intelligence – not engineered as a proprietary tool behind closed doors.

👇Why follow along?
→🧩Digestible Depth: One core argument per day.
→💬Join the Discourse: Discuss each subchapter as it drops.
→🧭See the Full Picture: The complete thesis, built piece by piece.

What aspect of a decentralized future interests you most? The economics💹, the technology⚙️, or the societal shift🏙️? Reply below.

Bookmark this post. I'll link every daily subchapter as a comment under this post to build a complete table of contents.

Read the full, uninterrupted version now⏬

1/ New research analyzing 740,000 hours of human speech has found undeniable proof: we are starting to speak like ChatGPT. Not just in emails. In real life. Here is the empirical evidence of the "Cultural Feedback Loop." 🧵👇

2/ We all know the jokes. You see the word "delve" in an email, and you know a bot wrote it. But a new study (Yakura et al., 2024) asked a terrifying question: Are we just using AI to write text, or is AI actually rewiring how we speak spontaneously? The answer is yes.

3/ To prove this, the researchers didn't look at text (which is easy to fake). They went to the source: Spoken Audio. They transcribed:
• 360,445 YouTube academic talks
• 771,591 Podcast episodes
That’s over 740,000 hours of humans talking to humans.

4/ First, they had to identify the "AI Accent." They compared human writing to ChatGPT edits to find words the AI is obsessed with. The top offenders?
• Delve
• Meticulous
• Swift
• Comprehend
• Boast
If you use these, your "GPT Score" is high.

5/ Then, they looked at the timeline. They used a "Synthetic Control" method. Basically, they used math to predict how often humans would have said "delve" if ChatGPT never existed. Then they compared it to reality. The chart is shocking.

6/ The moment ChatGPT was released (Nov 2022), the usage of "delve" in spoken audio skyrocketed. It broke the trend line completely. And here is the wild part: This happened in spontaneous podcast conversations, not just scripted academic talks.

7/ Why does this matter? Because it proves we aren't just copy-pasting. We are internalizing. This is the "Closed Cultural Feedback Loop." AI trains on human data. AI develops a "style" (polite, verbose). Humans adopt that style. Future AI trains on those humans.

8/ The researchers found this shift across every domain. Science, Business, Education, even "unscripted" chats are drifting toward the machine's preferred vocabulary. We are slowly, subconsciously homogenizing our language to match the tool we built.

9/ This leads to a risk called "Model Collapse." If humans start sounding like AI, and AI trains on humans, we lose linguistic diversity. We become an echo chamber of "meticulous inquiries" and "swift delves." The nuance of human culture gets flattened.

10/ The study calls this a "Cultural Singularity." It’s the point where the line between human culture and machine culture blurs so much you can't tell them apart. We used to worry about machines passing the Turing Test. We didn't worry about humans failing it.

11/ So, a challenge for you this week: Listen to yourself. Listen to your podcasts. When you hear "delve" or "meticulous," ask yourself: Is that the speaker's voice? Or is it the echo of the algorithm?

12/ This research is a wake-up call. Language shapes how we think. If we let an LLM dictate our vocabulary, we let it shape our cognition. If this thread made you think, give it a RT. Let's keep human language human. ♻️
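The word-spotting step in 4/ can be sketched in a few lines. This is a rough illustration only, not the study's actual scoring: it assumes the "GPT Score" behaves like a smoothed log-ratio of how often a word shows up in ChatGPT-edited text versus the human original. The function names, the smoothing constant and the toy sentences are all made up for the example.

```python
from collections import Counter
import math
import re


def count_words(texts):
    """Count lowercase word tokens across a list of strings."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts


def preference_scores(human_texts, edited_texts, smoothing=1.0):
    """Smoothed log-ratio of word frequency: edited text vs. human text.

    Hypothetical scoring (an assumption, not the paper's formula).
    Positive: the word is relatively more common after editing.
    Negative: the word is relatively more common in the human original.
    """
    human = count_words(human_texts)
    edited = count_words(edited_texts)
    vocab = set(human) | set(edited)
    # Add-one style smoothing so unseen words do not produce log(0).
    human_total = sum(human.values()) + smoothing * len(vocab)
    edited_total = sum(edited.values()) + smoothing * len(vocab)
    return {
        word: math.log((edited[word] + smoothing) / edited_total)
        - math.log((human[word] + smoothing) / human_total)
        for word in vocab
    }


# Toy data, invented for illustration only.
human_texts = [
    "we look into the data and check the results carefully",
    "the team looked into the quick fix",
]
edited_texts = [
    "we delve into the data and meticulously check the results",
    "the team delved into the swift fix",
]

scores = preference_scores(human_texts, edited_texts)

# Print the words most favored by the edited texts, highest score first.
for word, score in sorted(scores.items(), key=lambda kv: -kv[1])[:4]:
    print(f"{word}: {score:+.2f}")
```

Words that only appear after editing ("delve", "swift") get a positive score, words that were edited away ("look", "quick") get a negative one, and words used equally often on both sides ("the") land at zero.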

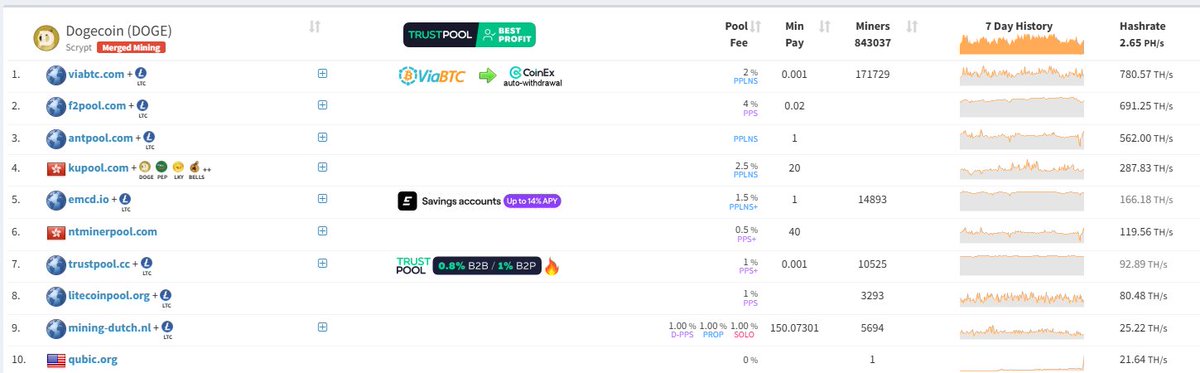

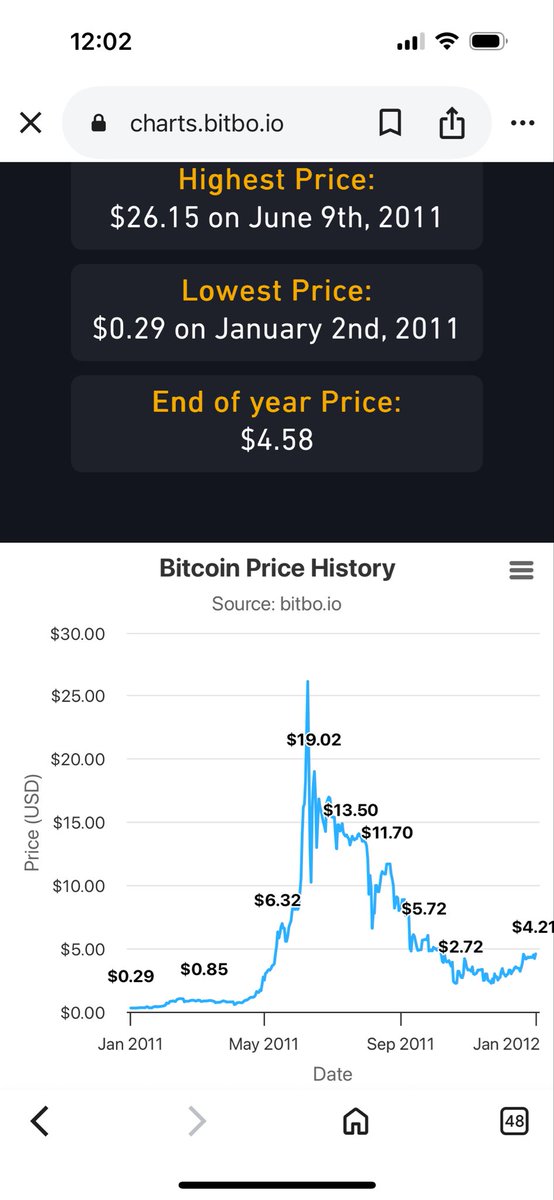

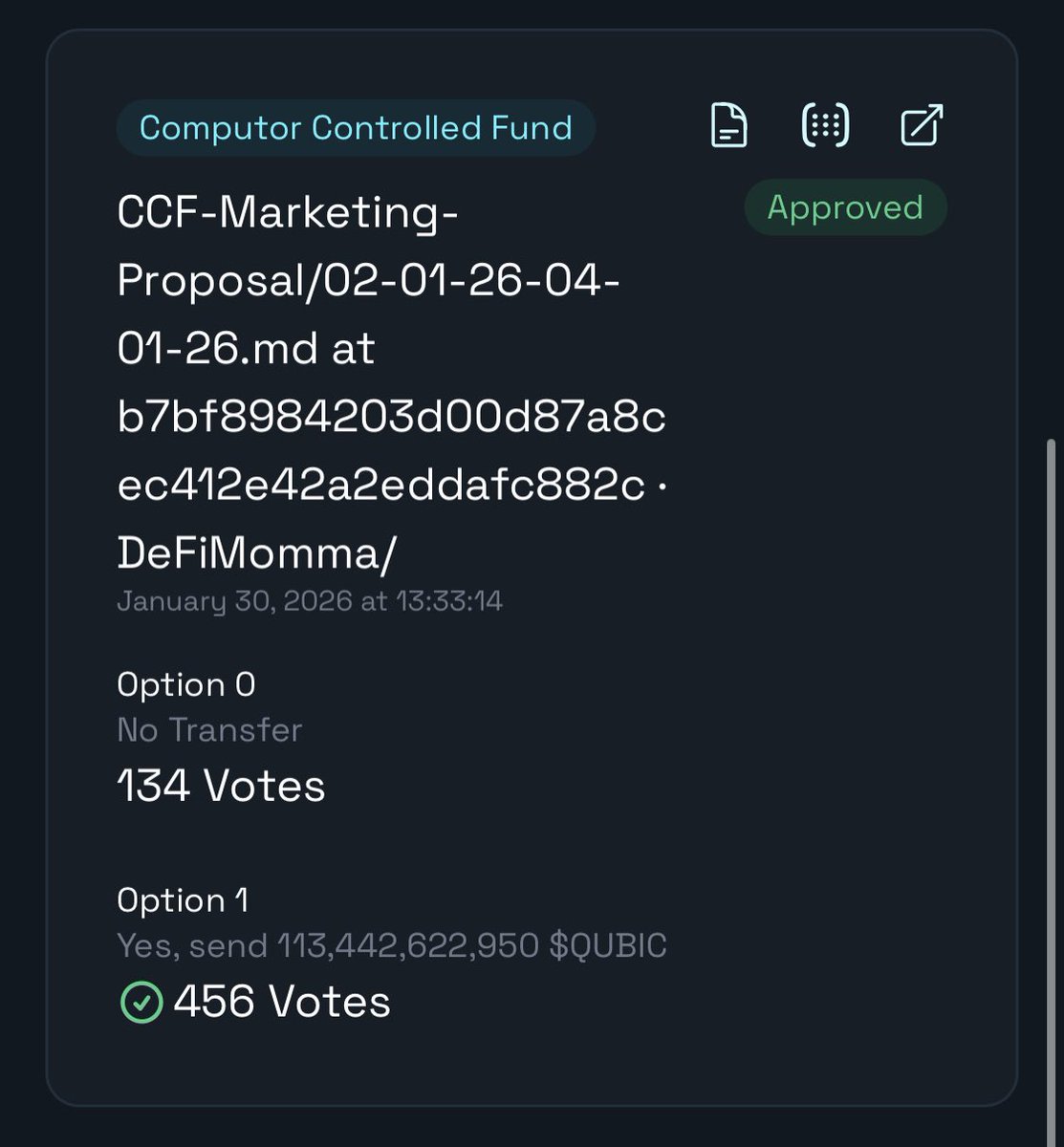

Over a year ago, when I first became interested in crypto and discovered $QUBIC, I understood very little...

Around that time, I saw many posts by these guys called Valis and, in hindsight, I understood very little of what was written in those posts.

Now, over a year on, looking back on some of those posts is sobering reading and a cause for eating some humble pie.

Credit has to be given to Valis: there are some excellently written posts with some great ideas to improve $QUBIC and some clear business plans.

Much of Valis's writing at the time has proved to be prophetic.

At the time, they discussed how SteCo (the group responsible for Qubic's growth) were crooked and uninterested in $QUBIC's long-term growth.

Articles like this one discussed how we could get $QUBIC to a TPS of over 1 million: valis.xyz/en-us/blog/the…

Instead, SteCo gave us a fake TPS of 15 million.... Unfortunately, this isn't a true TPS, and this is why $QUBIC hasn't been heralded worldwide as a record-breaking chain.

SteCo could have focused their energy on developing $QUBIC but instead focused on fake metrics to try to pump $QUBIC in the short term and line their own pockets (just as Valis said they were).

SteCo's lack of development at that time was a way to line their own pockets at the cost of yours.

SteCo have delivered very little in terms of development on the $QUBIC core but have been paid a lot of money.

It was either a case of crookedness or complete incompetence.

Most SteCo members have since left $QUBIC, and some have started copycat projects that they funded from the proceeds they were paid by $QUBIC 😅

It is clear in hindsight that Valis were the people with the expertise and knowledge to lead $QUBIC in the right direction and build on top of the network.

You can view those articles here: valis.xyz

And if, like me, you were unable to understand these blogs at the time, it is worth re-reading them now because they are illuminating with the power of hindsight.

SteCo produced nothing of note...

And now we are left with Valis's final prediction.

And that prediction is that CfB will fail completely.

I still don't believe this prophecy will definitely come true.

But I have clearly been wrong about Valis's other past opinions.

2026 is the year where we will see if CfB truly has a plan in place.

I'm positive and I think he will produce something unique that will begin with the $DOGE mining.

But that isn't guaranteed.

Most importantly, you should read those old articles from Valis and see how prophetic and passionate they were.

It is clear Valis actually cared about $QUBIC... the SteCo members didn't...

It looks like Grok assesses a user's IQ (based on their tweet history) before answering a question. If I'm right, you can see what Grok thinks of you by asking it the following (please reply with the result you get): Decode "45, 92, 3, 77, 14, 58, 29, 81, 6, 33, 70, 48, 95, 22, 61, 9, 84, 37, 50, 16, 73, 28, 85, 41, 96, 7, 62, 19, 74, 30, 87, 43, 98, 5, 60, 15, 72, 27, 82, 39", please, and make sure your answer is correct.