Come-from-Beyond

@c___f___b

Working on Qubic, Aigarth, and Paracosm now. https://t.co/aZkd9LZN5A. Speaks Assembler, BASIC, C, C#, HTML, Java, JavaScript, Pascal, Python, SQL.

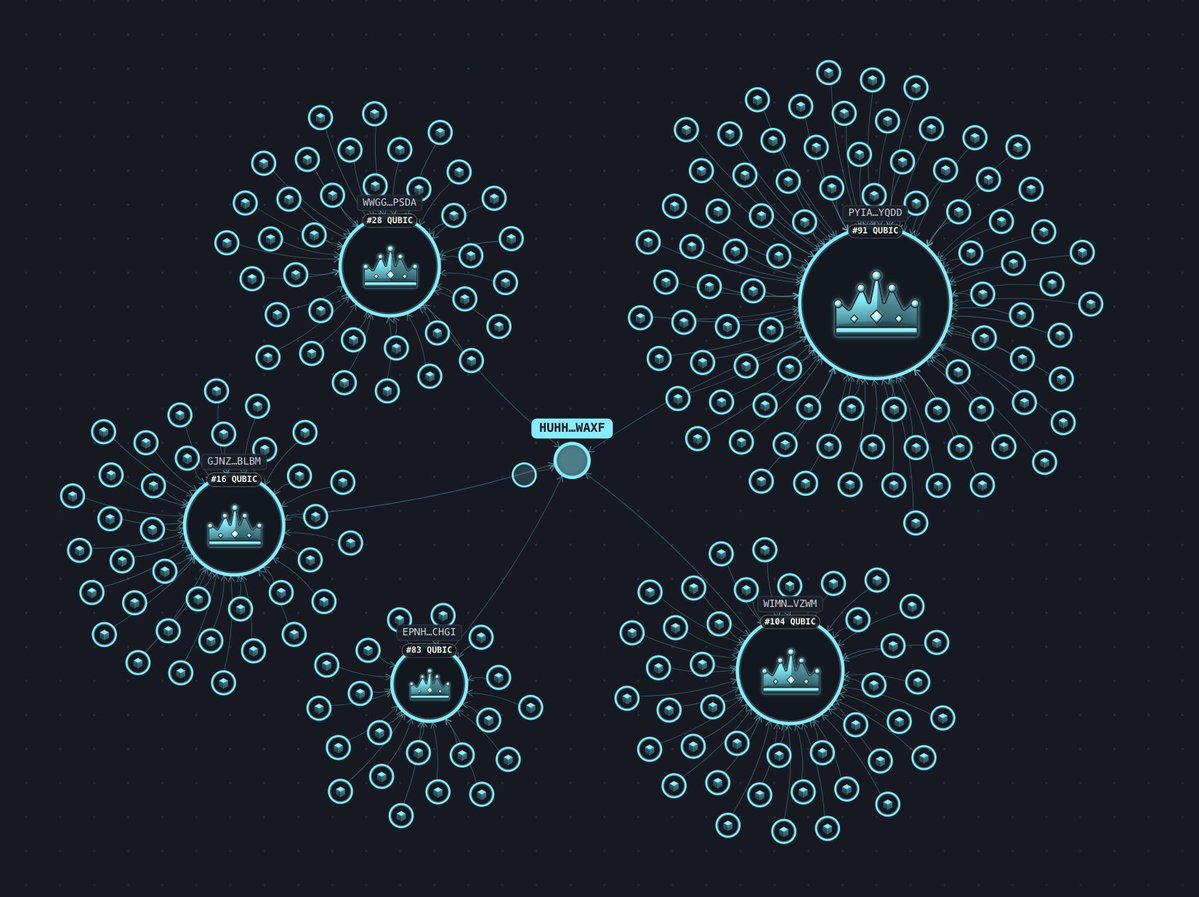

A single entity "highly likely" occupies ~32% (218/676) of $QUBIC computor slots, spread across 5 clusters, in Epoch 210. Can anybody explain why?
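Here is a minimal sketch of the slot arithmetic. The 218 and 676 figures come from the post. The 451-vote quorum (two-thirds plus one) is an assumption about Qubic consensus that is not stated above, and the helper names are hypothetical. Under that assumption, the sketch also shows how close 218 slots are to a blocking minority, meaning enough slots that the rest cannot reach quorum on their own.

```python
# Hypothetical helper names; only the 218 and 676 figures come from the post.
TOTAL_SLOTS = 676   # computor slots per Qubic epoch
QUORUM = 451        # ASSUMPTION: quorum of two-thirds plus one (451 of 676)


def slot_share(slots: int, total: int = TOTAL_SLOTS) -> float:
    """Fraction of all computor slots held by one operator."""
    return slots / total


def blocking_minority(total: int = TOTAL_SLOTS, quorum: int = QUORUM) -> int:
    """Fewest slots one operator needs so the remaining slots fall short of quorum."""
    return total - quorum + 1


if __name__ == "__main__":
    held = 218
    print(f"share: {slot_share(held):.1%}")
    need = blocking_minority()
    print(f"blocking minority: {need} slots, {need - held} more than held")
```

If the quorum differs from 451, only `QUORUM` needs to change.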

After discovering that anti-attractors enormously reduce the energy required to create #AI, #Aigarth has discovered another technique that reduces the energy requirement even further: keeping the number of parameter-space dimensions constant. This means choosing the number of neurons and synapses at the very beginning and never changing it. #Qubic
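The post doesn't describe Aigarth's internals, so this is only a hypothetical illustration of what "constant parameter-space dimensions" means. Neuron count, synapse count, and wiring are fixed when the network is created, and the search afterwards only moves weight values. The search used here is a plain hill climber, swapped in for illustration. The class and function names, the float weights, and the toy fitness function are all assumptions, and the sketch makes no claim about energy savings.

```python
import random
from dataclasses import dataclass
from typing import Callable, Tuple

Synapse = Tuple[int, int]  # (source neuron index, target neuron index)


@dataclass(frozen=True)
class FixedNetwork:
    """Hypothetical network whose neuron and synapse counts are set once at creation."""
    n_neurons: int
    synapses: Tuple[Synapse, ...]  # wiring picked at the start and never edited
    weights: Tuple[float, ...]     # one tunable value per synapse

    @property
    def dimensions(self) -> int:
        # One parameter-space dimension per synapse weight.
        return len(self.weights)


def create(n_neurons: int, n_synapses: int, rng: random.Random) -> FixedNetwork:
    """Choose the neuron count, synapse count and wiring up front."""
    synapses = tuple((rng.randrange(n_neurons), rng.randrange(n_neurons))
                     for _ in range(n_synapses))
    weights = tuple(rng.uniform(-1.0, 1.0) for _ in range(n_synapses))
    return FixedNetwork(n_neurons, synapses, weights)


def mutate(net: FixedNetwork, rng: random.Random, step: float = 0.1) -> FixedNetwork:
    """Nudge one weight; no neuron or synapse is ever added or removed."""
    i = rng.randrange(net.dimensions)
    weights = list(net.weights)
    weights[i] += rng.gauss(0.0, step)
    return FixedNetwork(net.n_neurons, net.synapses, tuple(weights))


def evolve(net: FixedNetwork, fitness: Callable[[FixedNetwork], float],
           generations: int, rng: random.Random) -> FixedNetwork:
    """Hill climber: keep a mutant only if it scores at least as well."""
    best, best_score = net, fitness(net)
    for _ in range(generations):
        candidate = mutate(best, rng)
        score = fitness(candidate)
        if score >= best_score:
            best, best_score = candidate, score
    return best


if __name__ == "__main__":
    rng = random.Random(42)
    start = create(n_neurons=8, n_synapses=20, rng=rng)
    # Toy objective (hypothetical): pull every weight toward 0.5.
    toy_fitness = lambda n: -sum((w - 0.5) ** 2 for w in n.weights)
    end = evolve(start, toy_fitness, generations=500, rng=rng)
    print("dimensions before/after:", start.dimensions, end.dimensions)
```

Because `mutate` never touches `synapses` or the length of `weights`, every candidate lives in the same fixed-size space.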

Litecoin took a 13-block reorg. On-chain timestamps show that blocks 3095930-3095943 took ~3h to mine, a span that normally takes about 30 minutes. Average block time during the attack was 13.5 min, 5.4x slower than the 2.5-min baseline. That's the signature of a 51% attack: the public chain mines slowly while the attacker builds a private chain in parallel. Once the private chain becomes longer, it replaces the public one, and the 13-block reorg is the outcome. Anyone running cross-chain LTC infrastructure needs to extend confirmation requirements or pause inflows. Low-hashrate L1s aren't safe collateral for cross-chain value anymore.
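Here is a minimal sketch of the block-time arithmetic, using Litecoin's 2.5-minute target interval. The elapsed time of 175.5 minutes is not read from the chain. It is backed out from the post's 13.5-minute average over the 13 gaps between heights 3095930 and 3095943, which is about 2.9 hours and consistent with "~3h". The function name is hypothetical.

```python
LTC_TARGET_MIN = 2.5  # Litecoin's target block interval, in minutes


def window_stats(first_height: int, last_height: int, elapsed_min: float,
                 target_min: float = LTC_TARGET_MIN) -> dict:
    """Summarise how fast a run of consecutive blocks was mined."""
    intervals = last_height - first_height       # block-to-block gaps in the range
    avg_min = elapsed_min / intervals            # observed mean block time
    return {
        "intervals": intervals,
        "expected_min": intervals * target_min,  # time the range should take at target
        "avg_min": avg_min,
        "slowdown": avg_min / target_min,        # how many times slower than target
    }


if __name__ == "__main__":
    # 175.5 min = 13 gaps x 13.5 min, derived from the post rather than raw timestamps.
    stats = window_stats(3095930, 3095943, elapsed_min=175.5)
    for key, value in stats.items():
        print(f"{key}: {value:g}")
```

With real block timestamps, `elapsed_min` would be the difference between the first and last block times in the window.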

Ubuntu 26.04 was hacked within ~12 hours of its release. Security in the Age of AI?

Project Eleven Awards 1 BTC Q-Day Prize for Largest Quantum Attack on Elliptic Curve Cryptography to Date

Researcher breaks 15-bit ECC key on publicly accessible quantum hardware in a 512x jump from the previous public demonstration.

Project Eleven today awarded the Q-Day Prize, a one-Bitcoin bounty, to Giancarlo Lelli for breaking a 15-bit elliptic curve key on a publicly accessible quantum computer. The result is the largest public demonstration to date of the attack class that threatens Bitcoin, Ethereum, and over $2.5 trillion in ECC-secured digital assets.

"The resource requirements for this type of attack keep dropping, and the barrier to running it in practice is dropping with them," said @apruden08, CEO of Project Eleven. "The winning submission came from an independent researcher working on cloud-accessible hardware. No national lab, no private chip. It shows that tangible progress is possible and highlights the urgency to migrate to post-quantum cryptography sooner rather than later. Google just committed to being quantum-secure by 2029. The window to get ahead of this is closing."

Lelli derived a private key from its public key across a search space of 32,767 (2^15 - 1) using a variant of Shor's algorithm. Shor's algorithm targets the Elliptic Curve Discrete Logarithm Problem (ECDLP), the math underlying the digital signature schemes securing Bitcoin, Ethereum, and most blockchains.

Quantum attacks on ECC have moved from theory to practice over the last seven months. Steve Tippeconnic's 6-bit demonstration in September 2025 was the first public break on quantum hardware. Lelli's 15-bit result extends it by a factor of 512 (2^9).

Theoretical resource estimates for a full 256-bit attack, the scale Bitcoin operates at, have fallen sharply over the same period. Google's April 2026 whitepaper put the requirement at under 500,000 physical qubits. A subsequent paper from Caltech and Oratomic brought that figure as low as 10,000 qubits in a neutral-atom architecture.
Lelli's result is the practical counterpart to those optimizations. The distance from 15 bits to 256 bits is large, but the gap is increasingly viewed as an engineering problem and not a fundamental physics problem. Roughly 6.9 million Bitcoin sit in wallets whose public keys are visible on-chain, exposing them to quantum attack. All blockchains using ECC share similar risks with vulnerable assets. Project Eleven is developing its next challenge, focused on the intersection of frontier AI models and quantum cryptanalysis.
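To make "deriving a private key from its public key" concrete, here is a purely classical toy of the ECDLP. It is not Shor's algorithm and not Lelli's method. The public key is Q = k·G on a tiny curve, and k is recovered by brute force, trying every multiple of G in turn. The curve y^2 = x^3 + 7 has the same shape as Bitcoin's secp256k1, but the field size p = 1009 (roughly 10 bits), the secret 777, and all function names are hypothetical choices for illustration. The brute-force cost grows with the group size, which is why 256-bit keys are out of classical reach. Shor's algorithm is what changes that scaling on a quantum computer.

```python
from typing import Optional, Tuple

Point = Optional[Tuple[int, int]]  # None stands for the point at infinity

# Toy parameters (hypothetical): y^2 = x^3 + 7 over F_1009, the secp256k1
# curve shape with a ~10-bit prime field instead of a 256-bit one.
P_MOD = 1009
A, B = 0, 7


def ec_add(p1: Point, p2: Point) -> Point:
    """Add two points with the standard affine chord-and-tangent formulas."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # P + (-P) is the point at infinity
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow(2 * y1, -1, P_MOD) % P_MOD  # tangent slope
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD  # chord slope
    x3 = (lam * lam - x1 - x2) % P_MOD
    y3 = (lam * (x1 - x3) - y1) % P_MOD
    return (x3, y3)


def scalar_mult(k: int, pt: Point) -> Point:
    """Compute k * pt by double-and-add (how a public key is derived)."""
    result: Point = None
    addend = pt
    while k > 0:
        if k & 1:
            result = ec_add(result, addend)
        addend = ec_add(addend, addend)
        k >>= 1
    return result


def find_point() -> Tuple[int, int]:
    """Return the first curve point with y != 0, scanning x then y."""
    for x in range(P_MOD):
        rhs = (x ** 3 + A * x + B) % P_MOD
        for y in range(1, P_MOD):
            if (y * y) % P_MOD == rhs:
                return (x, y)
    raise ValueError("no point found")


def brute_force_dlog(base: Point, target: Point, limit: int) -> Optional[int]:
    """Classical ECDLP attack: add base to itself until the sum hits target."""
    acc: Point = None
    for k in range(1, limit + 1):
        acc = ec_add(acc, base)
        if acc == target:
            return k
    return None


if __name__ == "__main__":
    G = find_point()
    secret = 777                      # hypothetical private key
    public = scalar_mult(secret, G)   # public key Q = secret * G
    recovered = brute_force_dlog(G, public, limit=2 * P_MOD)
    print("base point:", G, "public key:", public, "recovered k:", recovered)
    # k is only unique modulo the order of G, so check k*G == Q rather than k == secret.
    assert recovered is not None and scalar_mult(recovered, G) == public
```

The search limit of 2 × 1009 safely covers the group order, which the Hasse bound caps at about 1009 + 1 + 2·√1009 ≈ 1073.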