Avi Shefi

@shefiavi

Consultant | AI & Distributed Systems

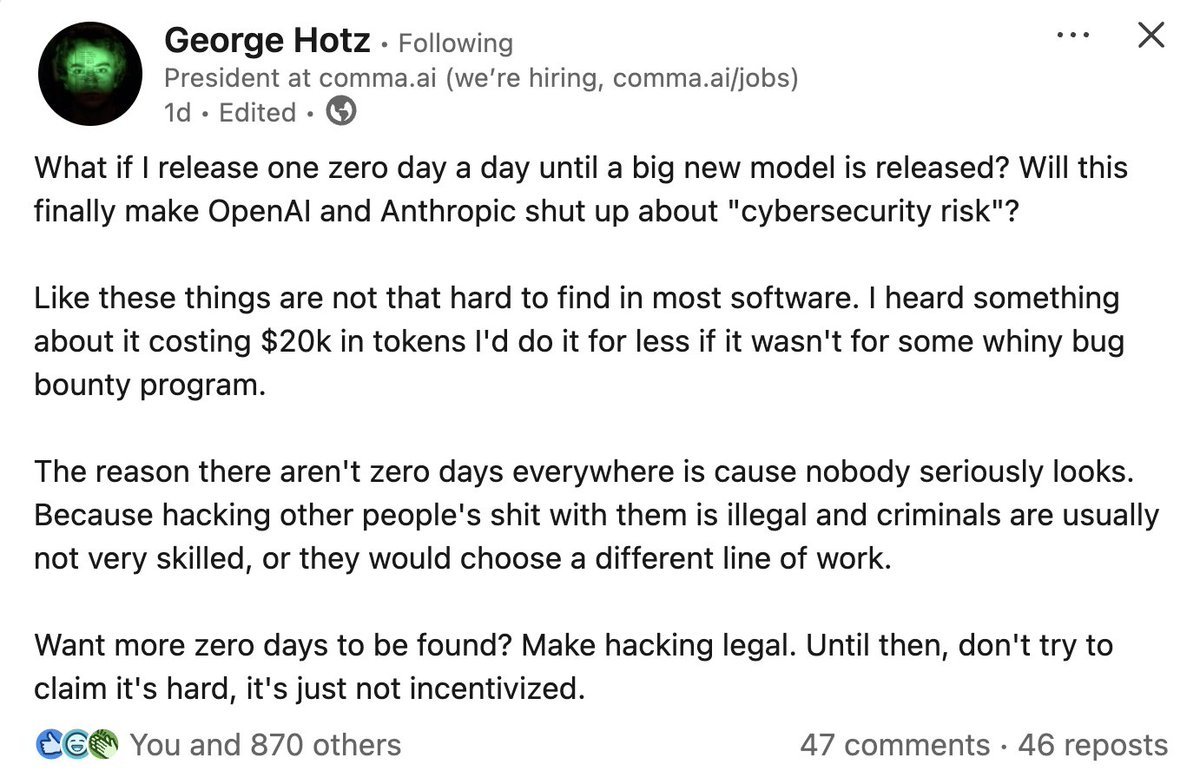

The US social mood is turning dramatically negative on AI

Chamath: Anthropic's Mythos Warning Is Theater

@Jason: “Chamath, is it the Boy who Cried Wolf, or is this the real deal now?”

@Chamath: “I think it's mostly theater. In February of 2019 when Dario was still at OpenAI, they did the same thing with GPT-2. That was a 1.5 billion parameter model, which sounds like a total fart in the wind in 2026. But at that time, this model was supposed to be the end of days. And at the end of it, it was a huge nothingburger.

If you actually think that Mythos is capable of doing what it says it can do, two things are true. One is, a very sophisticated hacker can probably do those things right now with Opus. And two, if these exploits are this easy to find, whether you use Opus or whether you use Mythos, the reality is you'd have to shut down the internet for about five years to patch them all. So when you see a large multi-trillion dollar GSIB bank, it's a bit of theater. Why? What do you think they can actually accomplish in two months? Do you actually think that if there's these vulnerabilities, it's all going to get fixed? Let's give them six months, let's give them nine months.

So I do think that Sacks is right, that they have figured out a very clever go-to-market muscle here that activates hyper attention and hyper usage, and so I give them tremendous credit. But we've seen it before, we saw it when these folks were the principal architects at OpenAI, and we're now seeing the same playbook here. The reality is that capitalism moves forward, the funding needs moves forward, and the need for these guys to build adoption moves forward. And that's going to supersede what this is.”

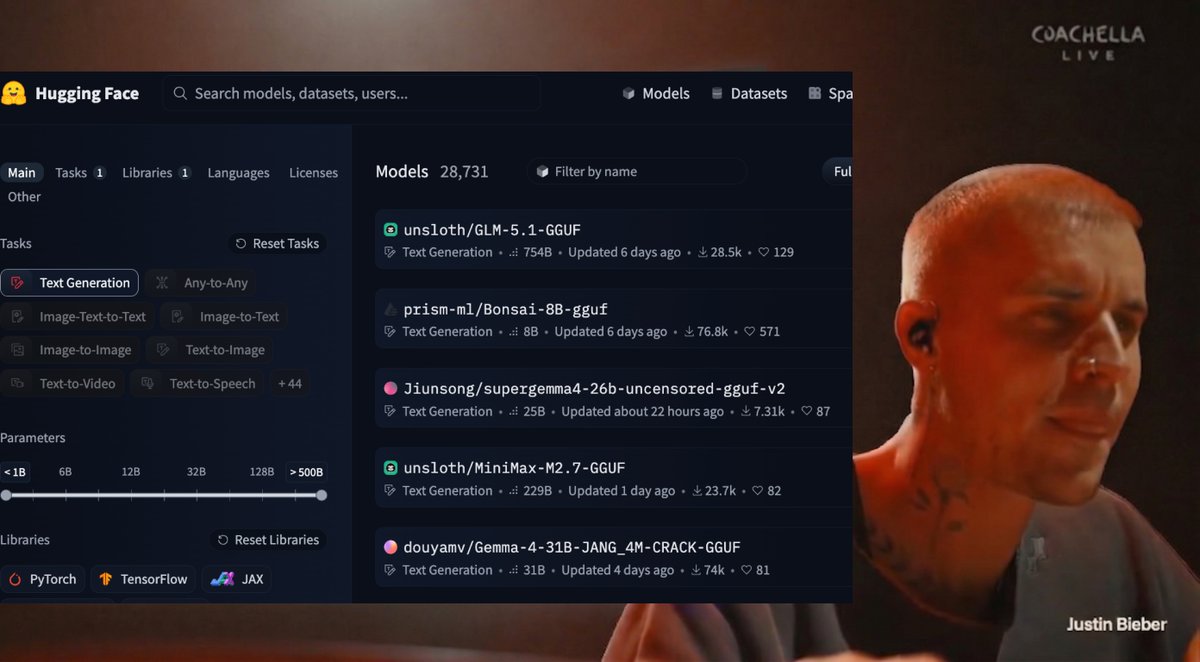

Yeah folks, it's gonna be harder in the future to ensure OpenClaw still works with Anthropic models.

Claude Code rate limited me so hard I bought a $5,000 NVIDIA DGX Spark. Arriving tomorrow. A personal AI supercomputer.

Anthropic cut off OpenClaw users. Slashed Claude Opus 4.6 rate limits. Told $200/month Max plan customers to use less. Then gave us a credit as an apology.

This is what happens when AI companies have too much power over your workflow. One update and your entire stack breaks.

Local models are the only infrastructure no one can throttle. No rate limits. No 529 errors. No surprise policy changes.

Tomorrow I'm testing the DGX Spark live on stream. Running local models through real vibe coding workflows.

The goal is simple. Never depend on a single provider again.