Pinned Tweet

Siddhartha Gairola

2.5K posts

@sidgairo18

🏔️📍🇩🇪 @ELLISforEurope 🇪🇺 PhD Student @cvml_mpiinf at MPI-INF & IST-A 物の哀れ ✨

Saarbrücken, Germany · Joined July 2017

659 Following · 1.4K Followers

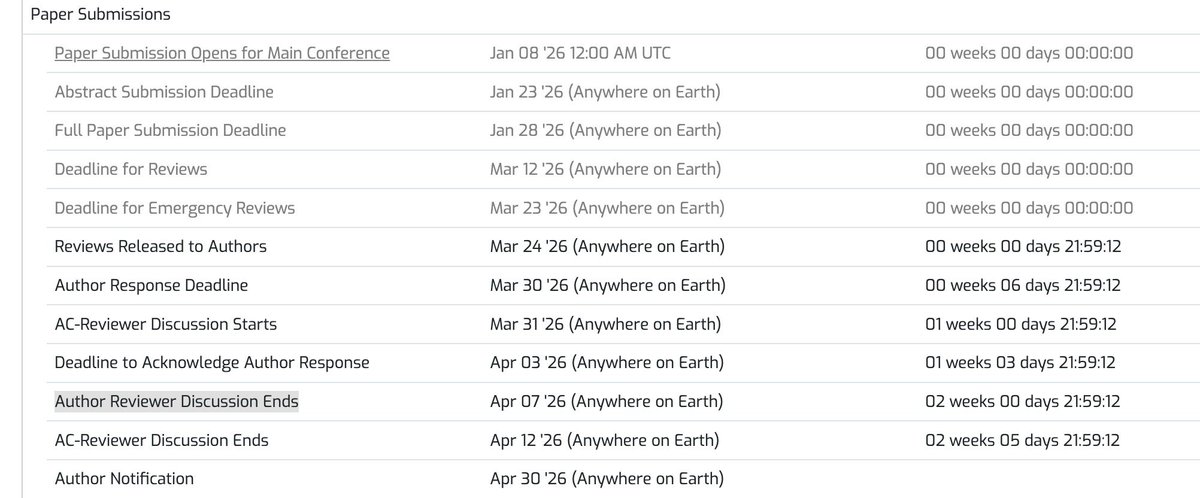

This seems confusing to me: the Author Response Deadline is March 30th, and then the Author-Reviewer Discussion ends April 7th?

What is the difference between the two? Are the authors still allowed to comment on or respond to the reviewers between March 31st and April 7th?

@icmlconf

Siddhartha Gairola retweeted

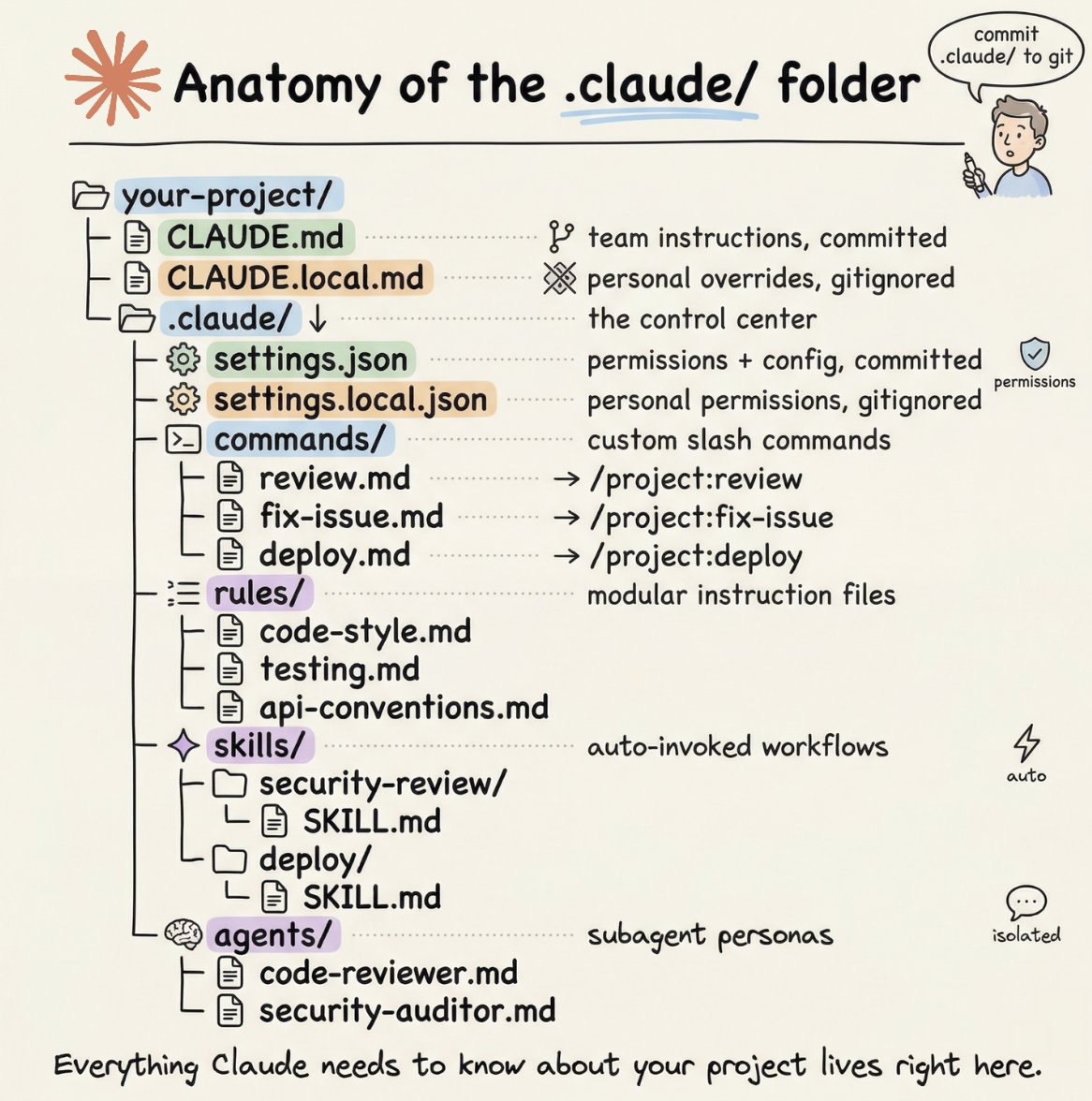

How to set up your Claude Code project?

TL;DR

Most developers skip the setup and just start prompting. That's the mistake.

A proper Claude Code project lives inside a .claude/ folder. Start with CLAUDE.md as Claude's instruction manual. Split it into a rules/ folder as it grows. Add commands/ for repeatable workflows, skills/ for context-triggered automation, and agents/ for isolated subagents. Lock down permissions in settings.json.

There are two .claude/ folders: one committed with your repo, one global at ~/.claude/ for personal preferences and auto-memory across projects.

The .claude/ folder is infrastructure. Treat it like one.

The article below is a complete guide to CLAUDE.md, custom commands, skills, agents, and permissions, and how to set them up properly.

Akshay 🚀 @akshay_pachaar
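As a rough illustration of the layout the post describes, here is a minimal Python sketch that scaffolds a project-level .claude/ folder. The folder and file names (CLAUDE.md, rules/, commands/, skills/, agents/, settings.json) come from the post; the helper name `scaffold_claude_folder`, the placeholder file contents, and the empty `allow`/`deny` permission lists are assumptions made for this example, so check the official Claude Code documentation before relying on any of them.

```python
import json
import tempfile
from pathlib import Path

# Subfolder names as listed in the post.
SUBFOLDERS = ["rules", "commands", "skills", "agents"]


def scaffold_claude_folder(project_root: Path) -> Path:
    """Create a .claude/ skeleton under project_root and return its path.

    Hypothetical helper: existing files are left untouched, and all file
    contents written here are placeholders, not recommended settings.
    """
    claude_dir = project_root / ".claude"
    for name in SUBFOLDERS:
        (claude_dir / name).mkdir(parents=True, exist_ok=True)

    claude_md = claude_dir / "CLAUDE.md"
    if not claude_md.exists():
        claude_md.write_text("# Project instructions\n\n(placeholder)\n", encoding="utf-8")

    settings = claude_dir / "settings.json"
    if not settings.exists():
        # Assumption: permissions are expressed as allow/deny lists.
        # The lists are left empty rather than guessing at rule syntax.
        placeholder = {"permissions": {"allow": [], "deny": []}}
        settings.write_text(json.dumps(placeholder, indent=2) + "\n", encoding="utf-8")

    return claude_dir


if __name__ == "__main__":
    # Demo in a throwaway directory so nothing real is modified.
    with tempfile.TemporaryDirectory() as tmp:
        created = scaffold_claude_folder(Path(tmp))
        for path in sorted(created.rglob("*")):
            print(path.relative_to(tmp))
```

The helper never overwrites existing files, so running it against a repo that already has a hand-written CLAUDE.md should be harmless. The global ~/.claude/ folder mentioned in the post is deliberately left alone.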

Siddhartha Gairola retweeted

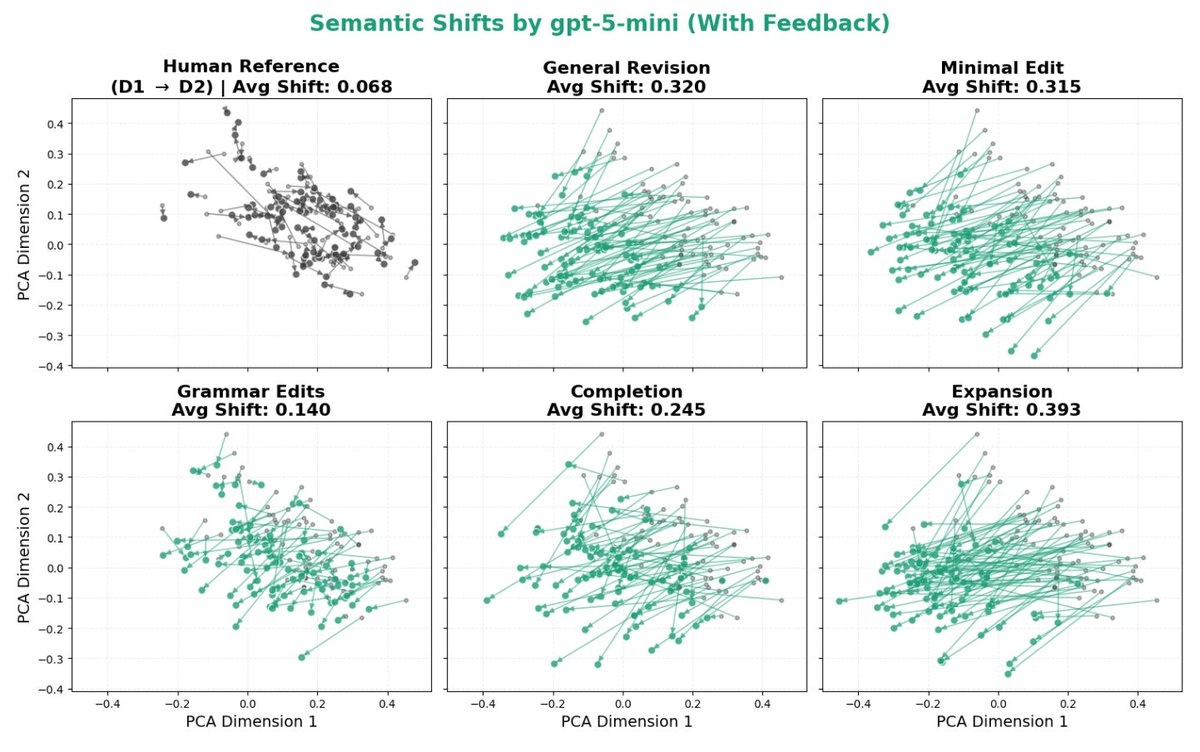

The paper I’ve been most obsessed with lately is finally out: nbcnews.com/tech/tech-news…! Check out this beautiful plot: it shows how much LLMs distort human writing when making edits, compared to how humans would revise the same content.

We take a dataset of human-written essays from 2021, before the release of ChatGPT. We compare how people revise draft v1 -> v2 given expert feedback, with how an LLM revises the same v1 given the same feedback. This enables a counterfactual comparison: how much does the LLM alter the essay compared to what the human was originally intending to write? We find LLMs consistently induce massive distortions, even changing the actual meaning and conclusions argued for.

Siddhartha Gairola retweeted

A visual guide to modern LLM attention variants, all in one place: magazine.sebastianraschka.com/p/visual-atten…

Siddhartha Gairola retweeted

How to develop good research questions nature.com/articles/s4156… (free: rdcu.be/eJ8A1) 🧬🖥️🧪

Huge thanks for the acknowledgment! It is really an honor to receive an honorable mention twice at @3DVconf, the first being a best paper honorable mention 2 years ago :)

Also big congrats to my dear colleague and friend @Mi_Niemeyer for the award, so well deserved!

International Conference on 3D Vision @3DVconf

3DV Outstanding Doctoral Dissertation Award Honorable Mention goes to Songyou Peng! @songyoupeng Thesis title: "Neural Scene Representations for 3D Reconstruction and Scene Understanding" #3DV2026

Siddhartha Gairola retweeted

@sidgairo18 Aren't the absolute scores irrelevant? What matters is the global relative ranking of papers (which we get because every reviewer/AC sees many papers). And the calibration across conferences happens by enforcing (implicitly or explicitly) a roughly similar acceptance rate (?). 🤔

Food for thought - 🤔

I've been thinking about this long and hard, having reviewed for popular ML / CV conferences (ICML, ICLR, NeurIPS, CVPR, ICCV, ECCV). With the community submitting papers across these venues, it only makes sense to have a uniform reviewer form, guidelines, rules and format across them.

Personally, I have a really hard time calibrating my scale from 1-10 (ICLR) to 1-6 for CVPR. Then comes ICML, which also uses 1-6, but there 3 and 4 mean weak reject/accept instead of borderline reject/accept (as at CVPR).

This only gets trickier when you add ICCV, ECCV and NeurIPS into the mix. Add the NLP and robotics conferences, and the entire system becomes more and more confusing, with uncalibrated reviewer scores that may or may not truly reflect the reviewer's intentions.

Happy to hear the thoughts of others.

cc: @icmlconf @CVPR @NeurIPSConf @iclr_conf @ICCVConference @eccvconf

@sidgairo18 This is exactly the mess that TMLR solved. There are no scores in round 1 of reviews. There is only a yes/no decision after rebuttals. There are enough reasons why every system needs to converge to this.

“I’ve got a mobile 📱” Lmfao 🤣

Cigarette Nostalgia@CigsMake

You just don't see this type of comedy happening anymore

@shashankska Sure, but I was referring more to the reviewer form and scores - to be uniform / calibrated across venues.

@sidgairo18 We should simply follow the NLP community and do rolling reviews.
