Zengzhi Wang

1.7K posts

@SinclairWang1

PhDing @sjtu1896. Working on Pre-training Data Engineering for Foundation Models: MathPile (2023), 🫐 ProX (2024), 💎 MegaMath (2025), 🐙 OctoThinker (2025)

🏋️Thinking Mid-training: RL of Interleaved Reasoning🎗️

We address the gap between pretraining (no explicit reasoning) and post-training (reasoning-heavy) with an intermediate SFT+RL mid-training phase to teach models how to think.
- Annotate pretraining data with interleaved thoughts
- SFT mid-training to learn when/what to think alongside original content
- RL mid-training to optimize reasoning generation with grounded reward from future token prediction

Result: 3.2x improvement on reasoning benchmarks compared to direct RL post-training on base Llama-3-8B, and gains over only prior SFT as well. Introducing reasoning earlier makes models better prepared for post-training!

Read more in the blog post: facebookresearch.github.io/RAM/blogs/thin…

@stefan_fee is running a mini frontier lab in academia 🤯

New paper: We deploy Claude Code in an autoresearch loop to discover novel jailbreaking algorithms – and it works. It beats 30+ existing GCG-like attacks (with AutoML hyperparameter tuning) This is a strong sign that incremental safety and security research can now be automated.

Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support. Note: Cursor accesses Kimi-k2.5 via @FireworksAI_HQ's hosted RL and inference platform as part of an authorized commercial partnership.

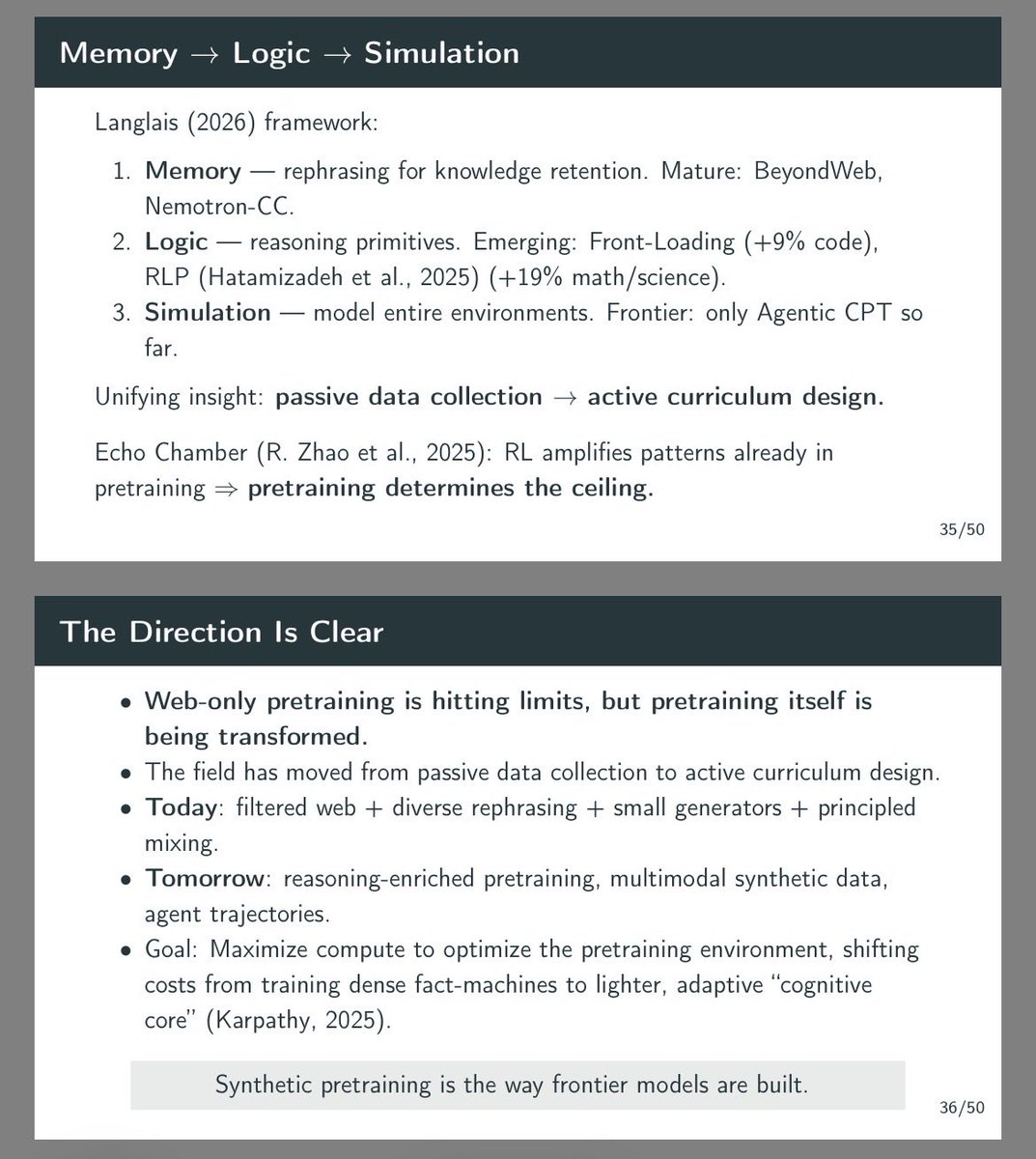

@inductionheads Spot on. We actually just gave a guest lecture at Berkeley EECS on this exact dynamic (L11: Synthetic Data Powering Pre-Training). @fujikanaeda Here are our slides if anyone wants to go down the rabbit hole: scalable-ai.eecs.berkeley.edu/assets/lecture…

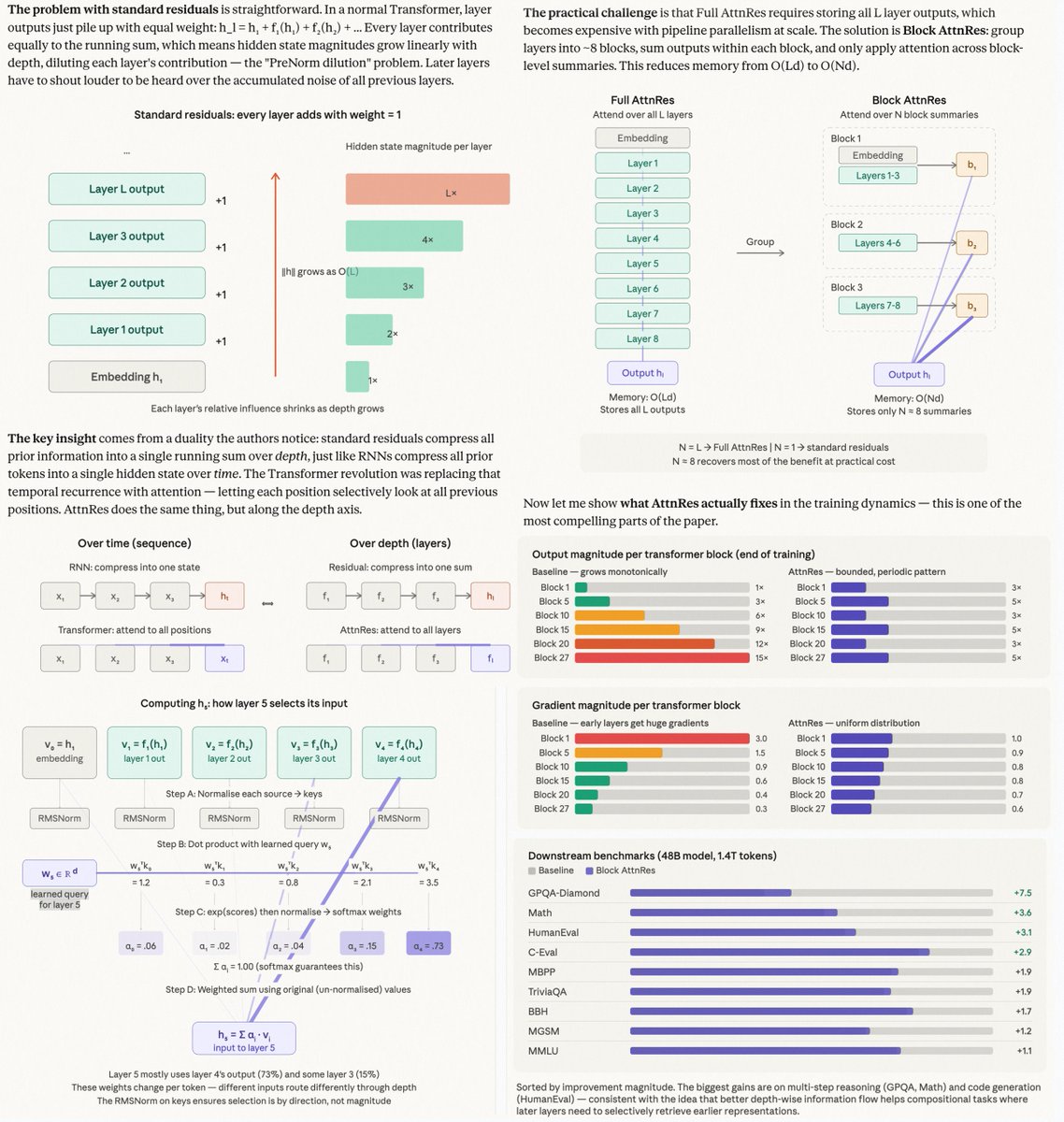

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.

🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.

🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.

🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗Full report: github.com/MoonshotAI/Att…
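For readers who want a feel for the contrast drawn in the Attention Residuals post, here is a toy sketch in plain Python. It is not the implementation from the report: the function names, the scaled dot-product scoring, and passing the query in directly (a real model would presumably derive it from the current hidden state via learned projections) are all assumptions made for illustration, and Block AttnRes is not modeled at all.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def uniform_residual(layer_outputs):
    """Fixed, uniform accumulation: an unweighted sum of all preceding layer outputs."""
    dim = len(layer_outputs[0])
    return [sum(h[i] for h in layer_outputs) for i in range(dim)]


def attention_residual(query, layer_outputs):
    """Hypothetical input-dependent aggregation over preceding layers.

    Each preceding layer output is scored against `query` with a scaled
    dot product (an assumption), and the outputs are mixed using the
    resulting softmax weights instead of being summed uniformly.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, h) / scale for h in layer_outputs])
    dim = len(query)
    mixed = [sum(w * h[i] for w, h in zip(weights, layer_outputs)) for i in range(dim)]
    return mixed, weights


# Three made-up "preceding layer" outputs of width 2.
layers = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed, weights = attention_residual([10.0, 0.0], layers)
print(uniform_residual(layers), mixed, weights)
```

In this toy setup the weights always sum to 1, so the mixed state stays on the scale of a single layer output rather than growing with depth the way the unweighted sum does, which is one way to read the "dilution and hidden-state growth" point in the post.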
