smallest.ai

@smallest_AI

AI research lab obsessed with small models

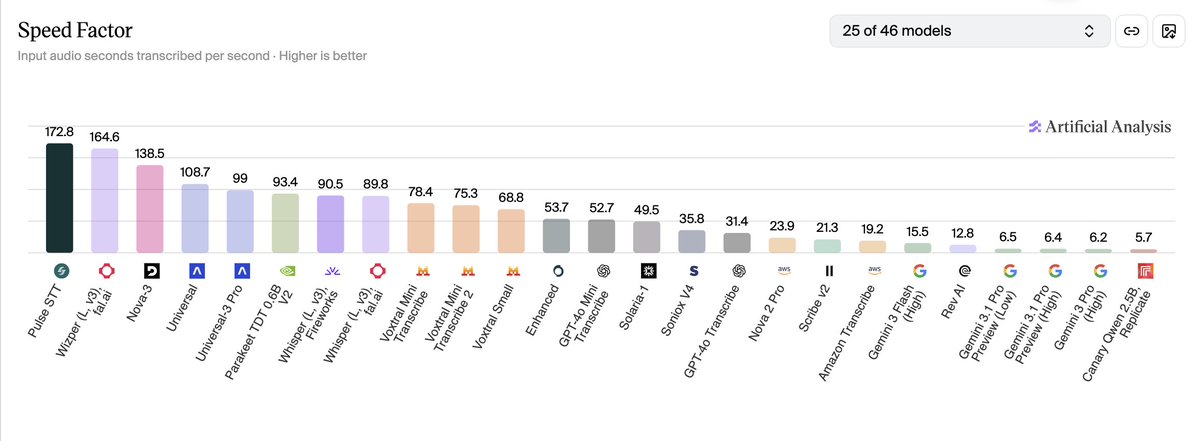

Guess who is number 2 on the @ArtificialAnlys Speech to Text benchmarks for speed. And the one that is number 1 is way worse in quality. The Smallest AI team churned out one of the fastest, most efficient speech-to-text models ever created.

Sub-agents in (latent) space!

We’ve been working on a side project. As far as I know, this is the first massively multiplayer, completely LLM-driven game. Come play Gradient Bang with us. See if you can catch me on the leaderboard.

This whole thing started because I wanted to explore a bunch of things I’m currently obsessed with, in an application of non-trivial size, that felt both new and old at the same time. So … a retro-style space trading game built entirely around interacting with and managing multiple LLMs. Factorio, but instead of clicking, you cajole your ship AI into tasking other AIs to do things for you.

Some of the things we’ve been thinking about as we hack on Gradient Bang:

- Sub-agent orchestration
- Partial context sharing between multiple LLM inference loops
- Managing very long contexts, and episodic memory across user sessions
- World events and large volumes of structured data input as part of human/agent conversations
- Dynamic user interfaces, driven/created on the fly by LLMs
- And, of course, voice as primary input

If you’ve been building coding harnesses, or writing Open Claw agents, or doing pretty much anything that pushes the boundaries of AI-native development these days, you’re probably thinking about these things too!

This is all built with @pipecat_ai, the back end is @supabase, the React front end is deployed to @vercel, and all the code is open source.

The most-asked question in my DMs, and one I ask myself every day: what should I learn?

The volume and velocity of learning have increased a lot in the last 5-6 months. I have a simple, practical answer.

1. Basics of ML: People are shifting toward agentic AI and neural networks, but to learn neural networks you need to be very good at regression, classification, and gradient concepts. In practice, most people still use classic ML for time series forecasting, and XGBoost/LightGBM performs exceptionally well in nearly every classic ML scenario. So make the basics of ML your foundation, without going deep into the coding. Concepts are everything; spend a lot of time on them. NEURAL NETWORKS AND TRANSFORMERS ARE THE MOST MANDATORY CONCEPTS. YOU CAN'T ESCAPE THIS. ASK CLAUDE TO TEACH YOU THESE CONCEPTS IN THE SIMPLEST WAY POSSIBLE.

2. Deployment: Deployment is the #1 skill in AI/ML. Claude and Codex can write code and deployment scripts for you, but you must know how to connect the dots. Inference engineering is a high-level, in-demand skill, so take every basic ML or agentic AI project to production. Use free tiers and credits, but at least build the habit of deploying while you learn.

3. Agents: This is where current AI is heading, and adoption will keep growing. Agents are necessary for your portfolio and for new opportunities. You can vibe-code agents, but you need to understand agent orchestration and workflows.

Do not go deep on anything except the basics of ML and neural networks. Learn everything else through broad concepts. Do not try to store everything in your brain. You must be very good at solving problems using AI.

It's still confusing what to choose, but in the end I would say: basics of ML (theory) + complete neural networks (theory) + projects on LLMs and agents with deployment. That's it!

Some rooms don't get posted about until after they happen. This is one of them. OFF THE RECORD | Bengaluru — a closed-door evening for AI founders. Seligman ventures × Venture Catalysts++ Raising in 2026. AI Models to Moats. Dinner.

Tried out the Agora Debater game. It features Aristotle & Socrates debating any topic you choose, using @smallest_AI's state-of-the-art Lightning-v3.1 TTS. Fun to test out what two philosophers from thousands of years ago have to say about AI 😆 Link below + more apps

Building enterprise voice AI means making real technical decisions at speed, with direct consequences for the businesses depending on your systems. @AkshatMandloi10 has been doing exactly that at @smallest_AI, where he and his team develop low-latency speech recognition systems and production-ready voice workflows for enterprise use. Akshat will be joining the fireside at AI Under The Microscope, an invite-only evening hosted by 3one4 Capital and Bloomtree Business Advisors Private Limited, to bring perspective on what it actually takes to build and scale in today's AI cycle. 📍 Bengaluru 📅 14 April @Aduge_Batta