Come by and see @EvanGrenda at the AWS booth at GTC. @tavus video avatars, voice agents built with NVIDIA Nemotron models, and new realtime AI architecture patterns in @pipecat_ai!

Pipecat AI

223 posts

@pipecat_ai

100% open source framework for realtime voice and multimodal AI. Maintained by @trydaily engineering team with support from the Pipecat developer community.

INVERTED API KEYS “Where x402 is uniquely useful is the concept of inverted API keys. Instead of you giving the API key to the developer, the developer gives you the API key.” – @programmer of @CoinbaseDev

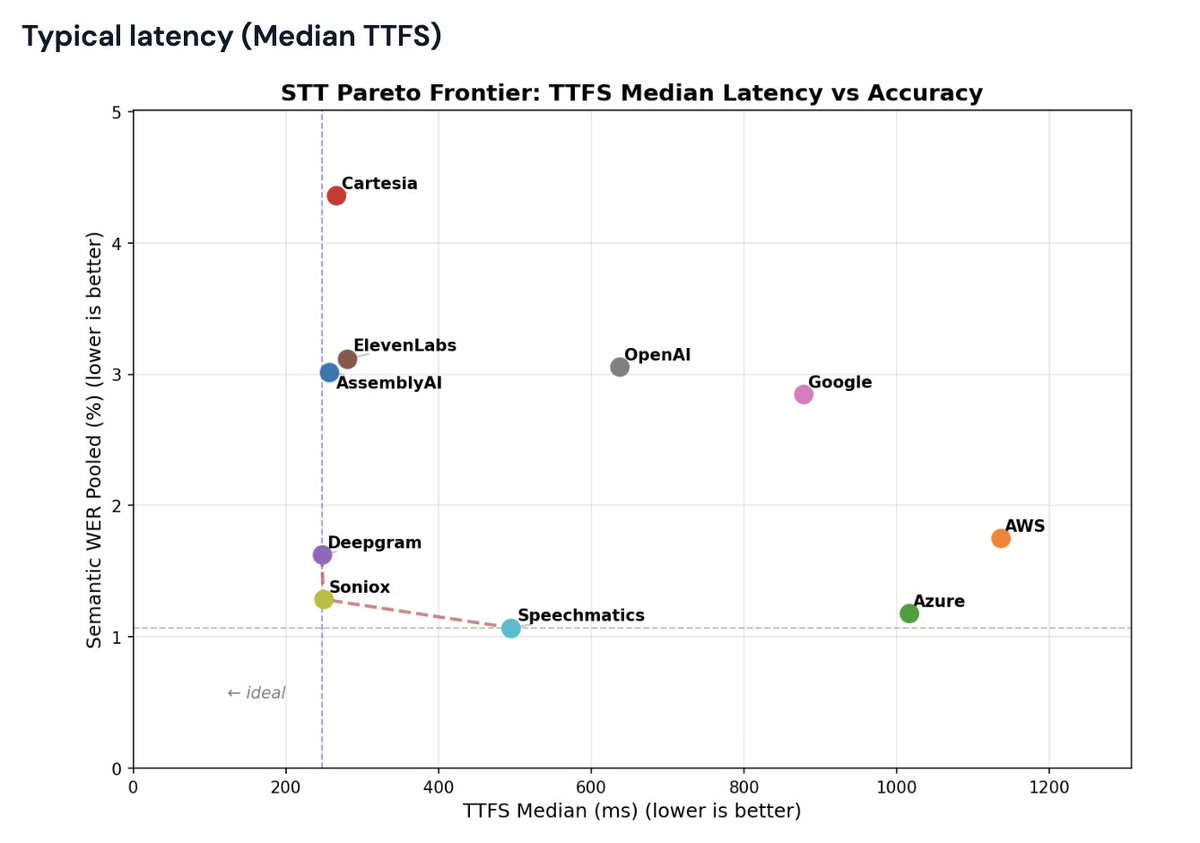

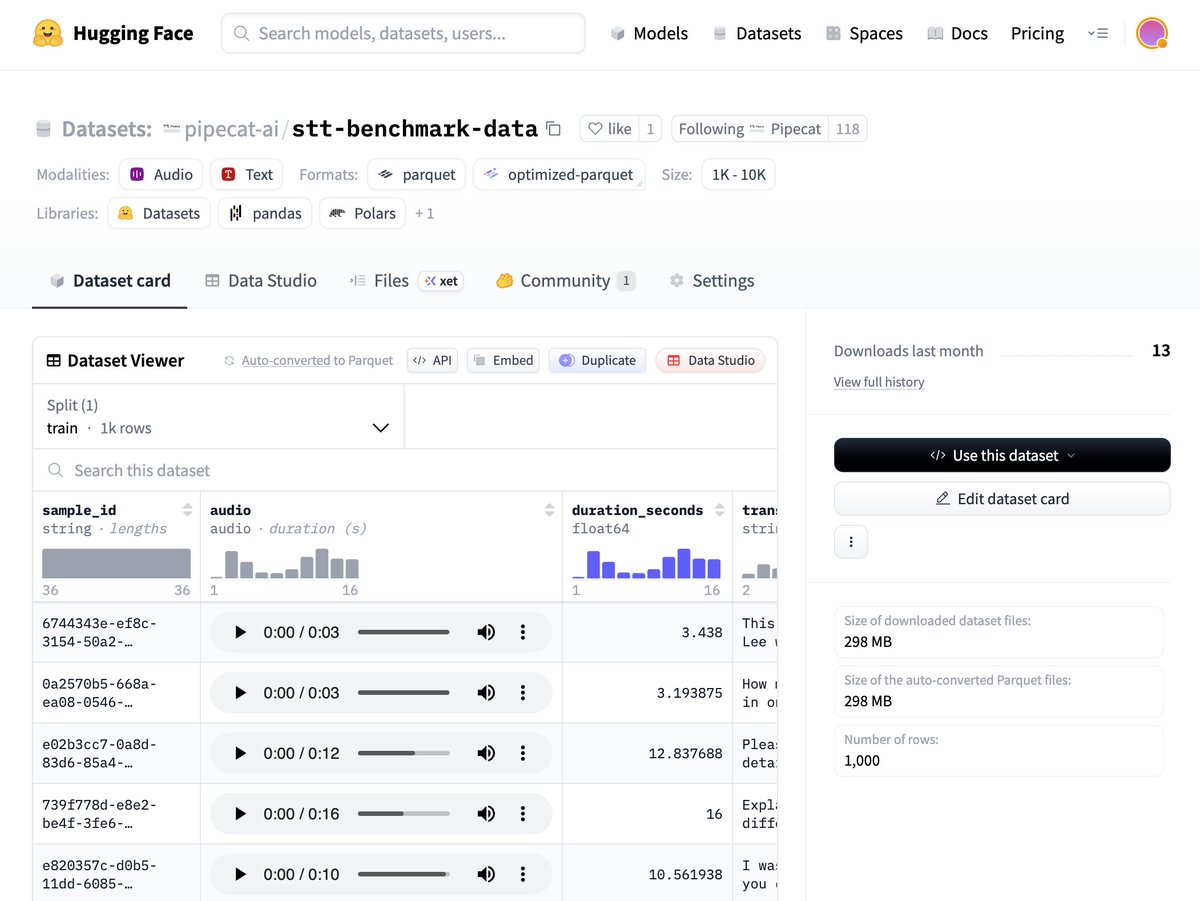

Real-time transcription just got a significant upgrade. Universal-3-Pro is now available for streaming, bringing AssemblyAI's most accurate speech model to live audio for the first time.

Developers building voice agents, live captioning tools, and real-time analytics pipelines now get four things they've been asking for:

🔹 Best-in-class word error and entity detection across streaming ASR benchmarks
🔹 Real-time speaker labels: know who said what, as it happens
🔹 Superior entity detection for names, places, orgs, and specialized terminology in real-time
🔹 Code-switching and global language coverage built-in

Voice workflows just got stronger with gpt-realtime-1.5 in the Realtime API. The model offers more reliable instruction following, tool calling, and multilingual accuracy. Demo with @charlierguo

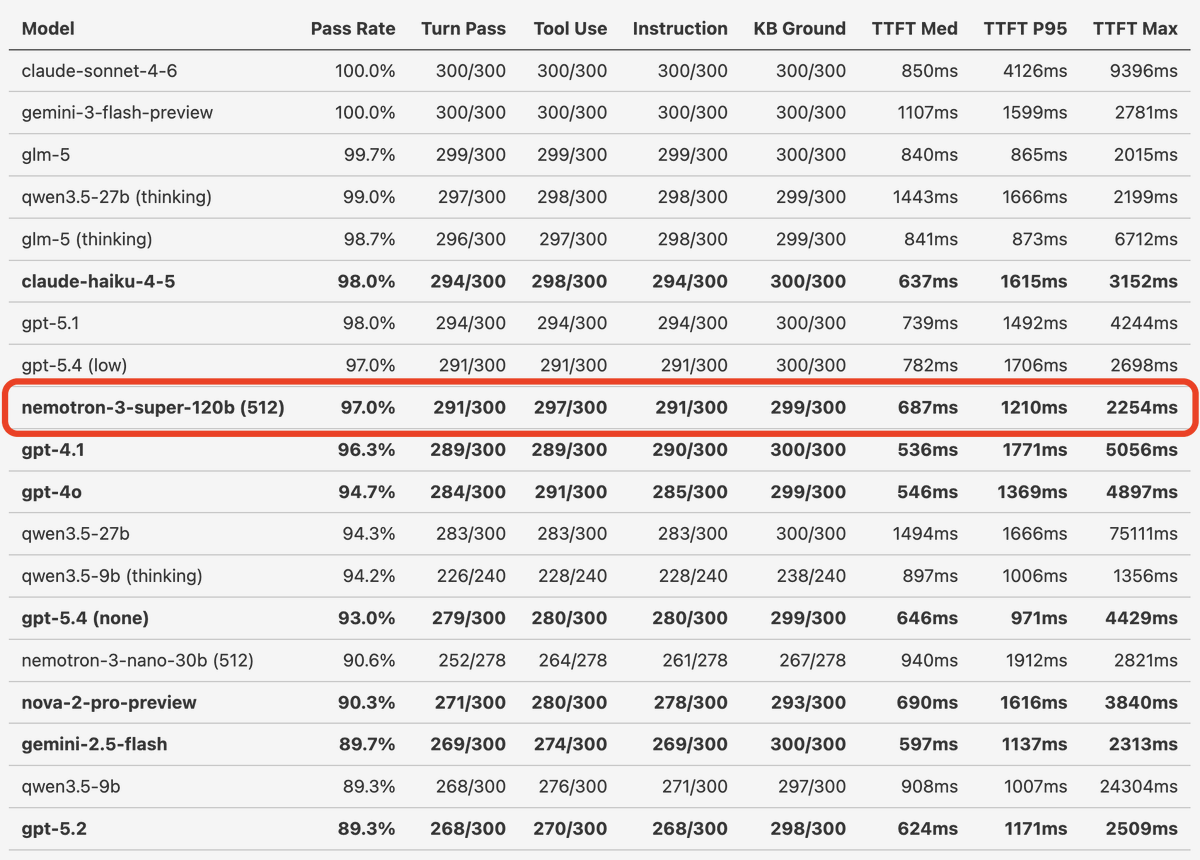

Claude Sonnet 4.6 scores 100%, with a median TTFT of 850ms, on our standard LLM Voice Agent performance benchmark. It's currently the fastest model that saturates this benchmark.

I also re-ran the numbers for the whole leaderboard, and Claude Haiku 4.5 scored 98% with a TTFT of 637ms. This puts Haiku in front of GPT 5.1 in the rankings, and a bit better in "intelligence" than GPT 4.1, but 100ms slower.

This is the first time we've had an Anthropic model that's a really good fit for most of our voice agent use cases. And now we have two! Claude models have always had great instruction following, tool calling, and conversational dynamics. But they've been slower than the other SOTA models. That's changed.

One reason to re-run a benchmark like this is that latency changes. We continuously monitor latency for all the models we regularly use. But a specific run of a long-format benchmark like this is a bit different than our standard monitoring.

Another reason, though, is that models like Claude, Gemini, and the GPT family are hosted systems and they evolve. A good rule of thumb is that changes in model behavior are probably your own code rather than real changes on the provider side. But that's not always true. And this performance jump for Claude Haiku 4.5 over the past two months is dramatic.

I recently fixed some corner cases in tool call handling and improved the judging prompts in this benchmark. So I'll re-run Claude Haiku 4.5 against the benchmark code from 2 months ago, at some point, because I'd like to understand whether I previously had bugs that unfairly penalized Haiku.

But either way, whether the model has gotten better or we've ironed out some issues with the benchmark, Haiku is impressive and is worth experimenting with if you are a voice AI developer.
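For anyone who wants to track the same two headline numbers in their own runs, here is a minimal Python sketch of how a score and a median TTFT could be computed from per-turn results. The `TurnResult` record and `summarize` helper are hypothetical names for illustration, not the benchmark's actual code, and the sample values are made up rather than measurements of any model.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TurnResult:
    """One benchmark turn. Hypothetical record, not the benchmark's real schema."""
    ttft_ms: float  # time from sending the request to receiving the first token
    passed: bool    # whether the judge marked the turn as correct


def summarize(results: list[TurnResult]) -> tuple[float, float]:
    """Return (pass rate as a percentage, median TTFT in milliseconds)."""
    if not results:
        raise ValueError("no results to summarize")
    pass_rate = 100.0 * sum(r.passed for r in results) / len(results)
    return pass_rate, median(r.ttft_ms for r in results)


# Illustrative values only; these are not measurements of any model.
sample = [
    TurnResult(600.0, True),
    TurnResult(650.0, True),
    TurnResult(900.0, False),
    TurnResult(700.0, True),
]
pass_rate, median_ttft = summarize(sample)
print(f"{pass_rate:.0f}% pass rate, median TTFT {median_ttft:.0f}ms")
```

Using the median rather than the mean keeps a single slow outlier turn from dominating the latency figure, which matters when hosted-model latency varies from run to run.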

Drop 5/14: Introducing Bulbul V3, our latest text-to-speech model. It raises the bar for how human it sounds, while being super robust. In an independent third-party human listening study, Bulbul V3 delivers the highest listener preference, and low error rates across use-cases and languages. See details in our blog, but first watch the video. sarvam.ai/blogs/bulbul-v3