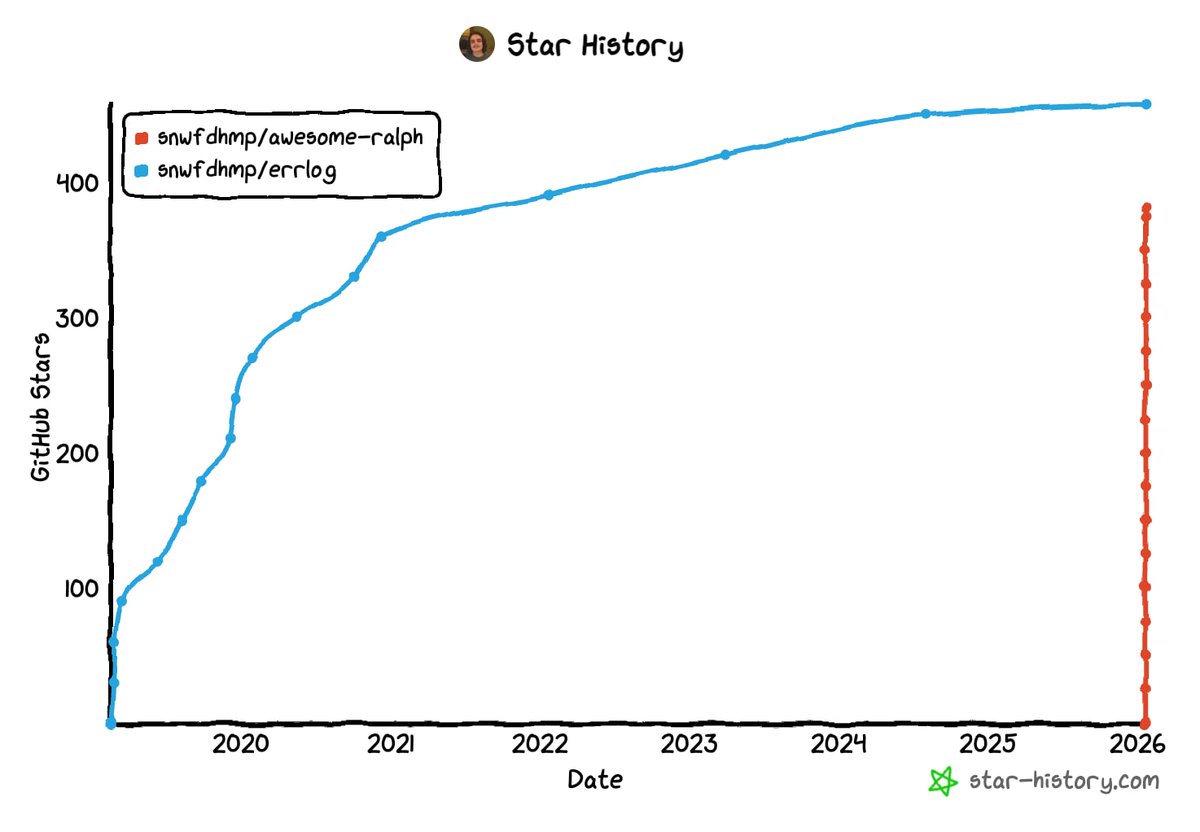

Three years ago we started working on a stealth project that we weren’t sure we’d ever talk about publicly... until today.

Breakthrough: introducing LFM-Zero, the first foundation model trained on 0 tokens. No pretraining. No finetuning. No data.

Instead, we initialize from an implicit probabilistic prior over the underlying data-generating process, allowing the model to converge without ever observing data. LFM-Zero matches or surpasses models trained on 10T+ tokens across reasoning, coding, and multimodal tasks.

Turns out that pretraining was just regularization that was holding us back.

Read our Tech Report here: tinyurl.com/lfm-zero