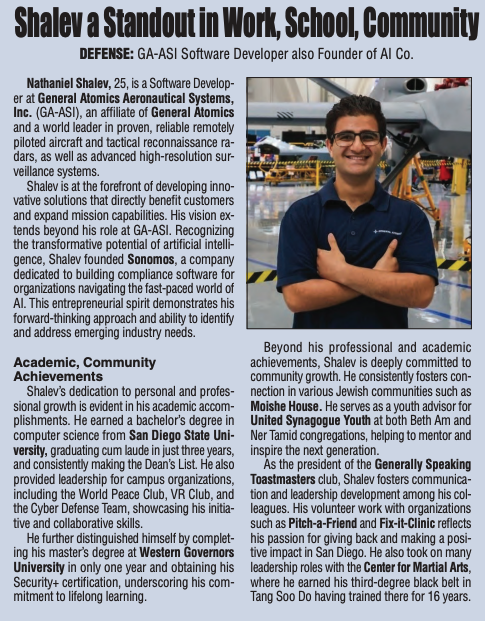

Sonomos is now a @Clio Partner! 🤝

Clio didn’t just build practice management software — they built the operating system for the modern law firm.

But AI adoption inside those firms has raised a new question: what happens when someone on your team pastes a client file into an AI tool?

Sonomos is the answer. Real-time PII detection. Local-only. Pre-send masking. No cloud. No exposure. No tradeoff.

The modern law firm runs on Clio, and it stays protected with Sonomos.

🔗 sonomos.ai

#LegalTech #Clio #DataPrivacy #AICompliance #PrivacyTech #LawFirms
