Splendores

@splendores

Short-biased swing trader transitioning into retirement. How I started: https://t.co/ZNBGoE876O My life: https://t.co/0A1g8SM4SD

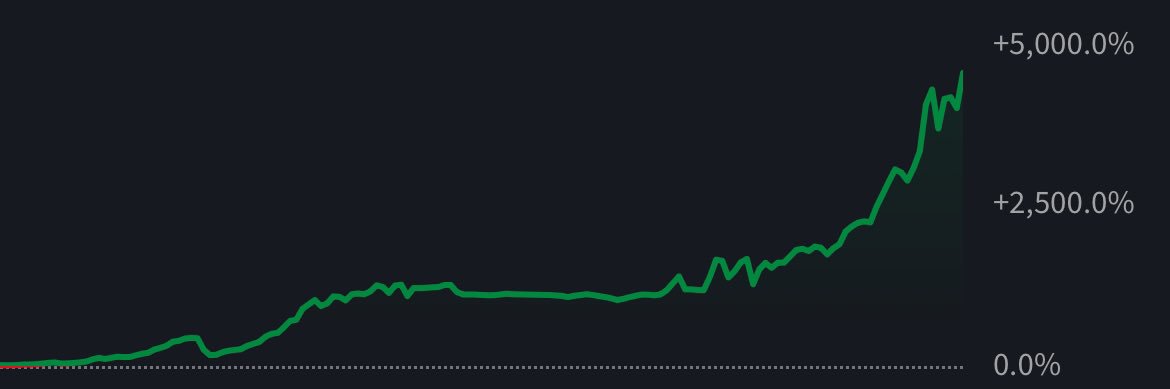

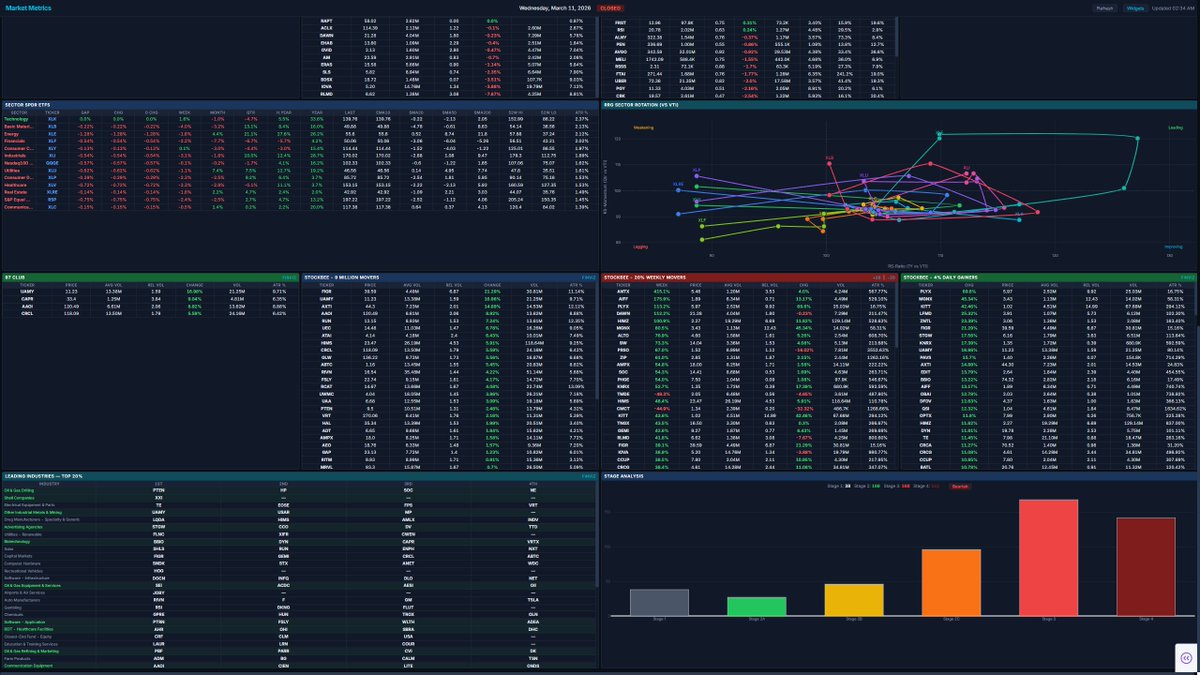

Oh, I'm very excited and motivated to write this future post about how I'm "stealing" ideas from others and adding no value.

My little tutorials, which show how to build your own dashboards by leaning on what smarter people have built and taught us, led to an argument with someone I respect. He claimed that all I do is steal others' ideas and turn them into dashboards for recognition. What he fails to realize is that I'm simply taking what smarter traders have built, seeing how it fits my trading, and using AI to create tools that support me, because this is the golden age we live in. At the same time, I'm showing non-tech people how they can do the same with just a simple $20 AI plan. Apparently, though, I'm stealing ideas, ripping people off, and adding no value.

Well, in that case, sir (whom I still respect after this back-and-forth), I can't wait to rip off your product and show the whole world how to recreate your simple spreadsheet in a few prompts. Thank you for teaching us how to "steal" your spreadsheet. No, no, I wasn't inspired to build my own from the tutorial you freely posted on Twitter (after I even reached out to ask you some questions about it). No, I'm going to STEAL it.

Stay tuned: his full product will be a free dashboard in a few days. I'm halfway done anyway, thanks to Computer/Cursor.

Panama Canal Authority: Transits increased 2.8% in the first four months of the fiscal year through January, to 4,156 in total.