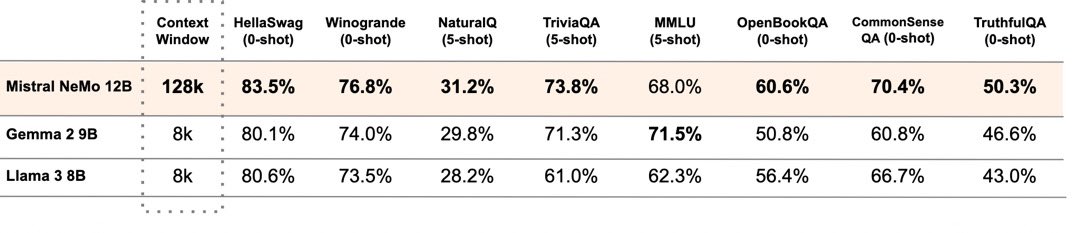

Today we are releasing the first model in the NVIDIA Nemotron 3 family: Nemotron 3 Nano!

Nemotron 3 Nano is truly open, efficient, and achieves class-leading accuracy on reasoning and agentic tasks. Check it out today! 🚀

research.nvidia.com/labs/nemotron/…