Our "Beyond Neural Scaling laws" paper got a #NeurIPS22 outstanding paper award! Congrats Ben Sorscher, Robert Geirhos, @sshkhr16 & @arimorcos awards: blog.neurips.cc/2022/11/21/ann… paper: arxiv.org/abs/2206.14486 🧵 twitter.com/SuryaGanguli/s…

sshkhr

@sshkhr16

research eng @GoogleDeepMind prev: founder @DiceHealth, researcher @AIatMeta @VectorInst

This is an instance of the more general principle: If you are graduating university and are about to join a consulting firm, don't do that.

people are walking around with their laptops slightly ajar to keep their agents running

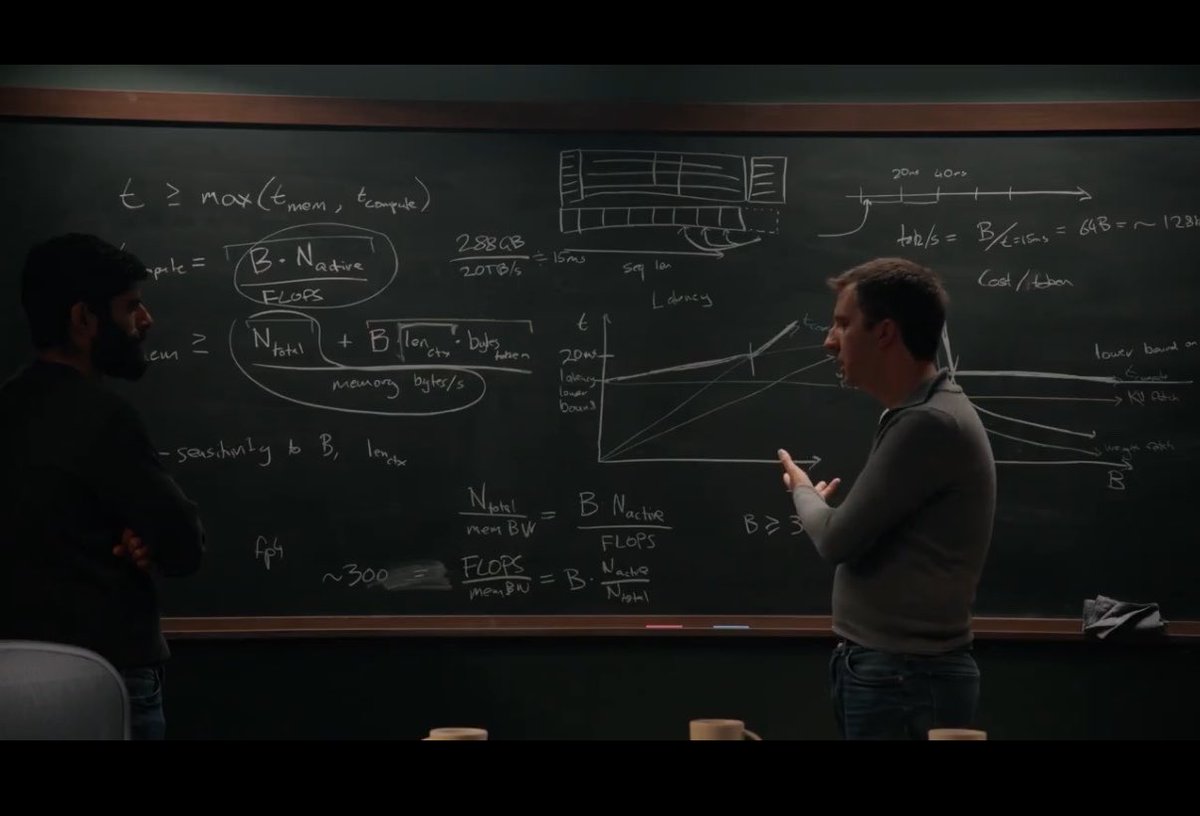

Did a very different format with @reinerpope – a blackboard lecture where he walks through how frontier LLMs are trained and served.

It's shocking how much you can deduce about what the labs are doing from a handful of equations, public API prices, and some chalk. It’s a bit technical, but I encourage you to hang in there - it’s really worth it.

There are less than a handful of people who understand the full stack of AI, from chip design to model architecture, as well as Reiner. It was a real delight to learn from him.

Recommend watching this one on YouTube so you can see the chalkboard.

- 0:00:00 – How batch size affects token cost and speed
- 0:31:59 – How MoE models are laid out across GPU racks
- 0:47:02 – How pipeline parallelism spreads model layers across racks
- 1:03:27 – Why Ilya said, “As we now know, pipelining is not wise.”
- 1:18:49 – Because of RL, models may be 100x over-trained beyond Chinchilla-optimal
- 1:32:52 – Deducing long context memory costs from API pricing
- 2:03:52 – Convergent evolution between neural nets and cryptography

Jane Street hired this junior at $220k-$600k/year because he uses AI to analyse TRILLIONS of data points. In this 1-hour lecture, he shows how to research trillions of data points thanks to his machine. Bookmark & watch it instead of Netflix to learn how to do the same!

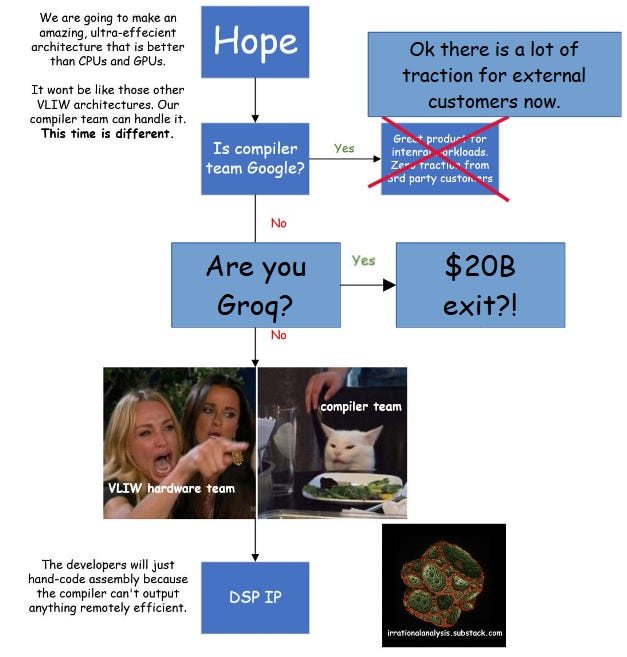

Inference Chips for Agent Workflows @sdianahu Most AI chips are designed for "prompt in, response out." Agents don't work that way. They loop, branch, and hold context across dozens of steps, and current GPUs hit 30–40% utilization as a result. That gap is where purpose-built silicon wins.

This feels like confusing a serving-runtime problem for a chip-startup opportunity. Agents do change inference patterns: loops, tool calls, branching, long context, KV reuse, burstiness. But most of that is an inference systems problem: scheduling, routing, KV-cache management, etc. Think Dynamo. By the time a new chip co tapes out + builds a compiler stack + wins cloud distribution, NVIDIA/AMD will likely have baked the obvious hardware-level optimizations into existing platforms.
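A rough way to see why KV reuse looks like a runtime problem rather than a silicon one: the toy sketch below counts how many tokens an agent loop has to prefill with and without a prefix cache. Everything here is an assumption for illustration (`PrefixCache`, `agent_loop_cost`, the step and token counts are hypothetical names and numbers, not any real serving framework's API), and it ignores memory limits, eviction, and branching.

```python
from dataclasses import dataclass, field


@dataclass
class PrefixCache:
    """Toy stand-in for a KV cache keyed by token prefix (hypothetical, not a real API)."""
    cached: set = field(default_factory=set)

    def prefill_cost(self, tokens):
        """Return how many tokens must be recomputed, then cache every new prefix."""
        # Find the longest prefix of `tokens` that is already cached.
        hit = 0
        for n in range(len(tokens), 0, -1):
            if tuple(tokens[:n]) in self.cached:
                hit = n
                break
        # Record every prefix longer than the hit so later steps can reuse it.
        for n in range(hit + 1, len(tokens) + 1):
            self.cached.add(tuple(tokens[:n]))
        return len(tokens) - hit


def agent_loop_cost(steps, step_tokens, reuse):
    """Total prefill tokens for an agent that appends `step_tokens` per step."""
    cache = PrefixCache()
    context = []
    total = 0
    for step in range(steps):
        # Each step extends the running context (tool output, new instructions).
        context = context + [f"s{step}t{i}" for i in range(step_tokens)]
        if not reuse:
            cache = PrefixCache()  # assumption: no reuse means the cache is dropped every step
        total += cache.prefill_cost(context)
    return total


if __name__ == "__main__":
    print("no reuse:", agent_loop_cost(4, 10, reuse=False))
    print("with reuse:", agent_loop_cost(4, 10, reuse=True))
```

Under these toy assumptions the no-reuse loop re-prefills the whole growing context every step, while the reuse loop only pays for the newly appended tokens. That saving comes from scheduling and cache bookkeeping, which is the kind of thing a serving runtime can do on existing hardware.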

Wild times are coming

Anthropic changes the performance of Claude by spying on what you are working on by the way