Vega51

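This plot is more surreal than a movie: Manus CEO Xiao Hong and chief scientist Ji Yichao were summoned to Beijing this month for a meeting with the National Development and Reform Commission. After the meeting they were told that, because of a regulatory review, they were formally barred from leaving the country. Before that, the company had been acquired by US-based Meta for $2 billion, and Xiao Hong had become a Meta vice president. Here is the other script: as Manus went from 0 to 1, Wuhan / Optics Valley provided 2,000 m² of free office space, tax breaks, more than 1.2 billion in funding, compute, talent, policy endorsement, and open data access. It is a textbook case of a project raised jointly by government, market, and universities. Put bluntly, the Wuhan government was the angel investor. If they walk away, where does that leave the government staff involved? It is only to be expected that Xiao Hong and Ji Yichao were placed under exit control.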

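A Vibe Coding tip that anyone obsessive about clean code will love: “The problem is solved now, but please re-review today's rounds of changes and check whether they amount to patches stacked on patches. If so, refactor them into the optimal solution.”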

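Slovenia is currently facing severe fuel supply pressure and has become the first country in Europe to impose large-scale limits on fuel purchases. Prime Minister Robert Golob announced uniform government limits:
-- 50 liters per day for individuals and 200 liters for legal entities.
-- The government has also decided to deploy the army to help transport fuel, release up to 30 million liters of diesel from emergency reserves, and ban diesel exports to secure domestic supply.
-- Fuel price controls at highway service areas have been temporarily lifted since March 20 to ease logistics pressure and improve supply in remote areas.
PS: Lifting the price caps is meant to give retailers (such as Petrol, MOL, and Shell) a profit incentive to bring fuel into Slovenia. Spain earlier announced a 5 billion euro subsidy package with sharp cuts to the relevant tax rates, which is a similar incentive.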

Mac just had its best launch week ever for first-time Mac customers. We love seeing the enthusiasm!

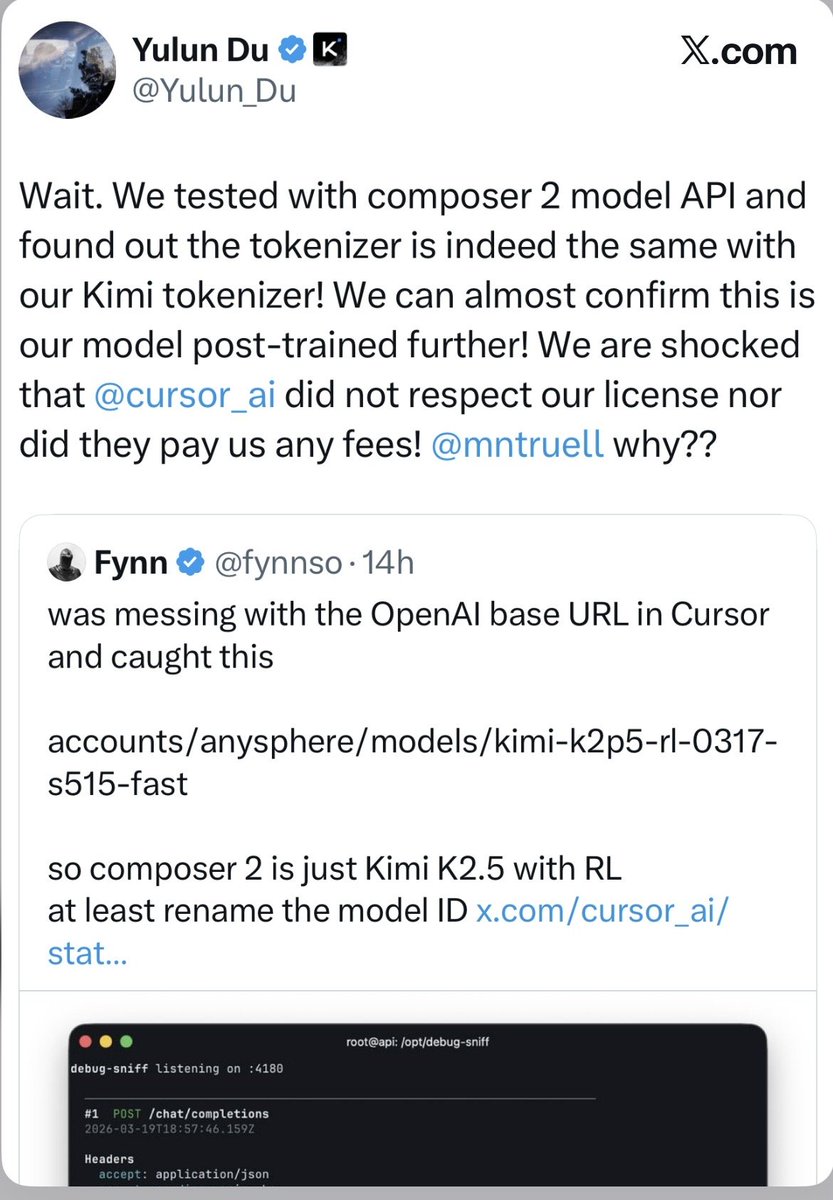

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast. That’s Moonshot AI’s Kimi K2.5 with reinforcement learning appended.
A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.
This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.
The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.
That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.
The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, 8x the threshold. The clause covers derivative works explicitly.
Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. The company worth 12x more took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier lab narrative.
Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.
The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.
If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?
kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

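I recently tried ByteDance's Qishui Music (汽水音乐), and opening QQ Music afterwards felt like traveling from 2026 back to 2016.
Qishui Music follows the same logic as Douyin: pick a genre, let the algorithm serve songs, and swipe up or down to skip. The whole app has a single core interaction, so minimal that there is no decision cost at all.
And QQ Music? Thousands upon thousands of playlists, with 30 recommended songs a day. At heart it is still the "editorial curation + collaborative filtering" approach NetEase Cloud Music used ten years ago, just with more licensed content and more social features. The interface is as cluttered as a wall plastered with stick-on ads.
It is 2026, and this really looks like a product of the previous era.
Tencent's playbook has always been: find a validated product, copy it, graft on its social graph and licensing advantages, and crush the competition with sheer scale. QQ Music did this to NetEase Cloud Music, and WeChat did it to MiTalk.
ByteDance's approach is to use algorithms to redefine the interaction itself. Put bluntly, in the face of a strong enough recommendation algorithm, the playlist as a product form is a redundant design.
What makes ByteDance frightening is that it does not compete with you on having more features or a fuller catalog. It drags the competition straight into a dimension you are not good at: algorithm-driven user experience.
If Tencent keeps iterating inside the old framework, it will most likely end up like the web portals when they met the news feed: it beat every old rival, only to lose to someone who changed the rules.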

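There is nothing to brag about in Australian healthcare, and one point is enough to show the difference: Australia has very few people, so doctors get fewer chances to see patients, and their experience is certainly not as rich as that of doctors in China or Hong Kong. Even with so few people, medical accidents happen very frequently. Rare and difficult conditions depend mainly on research, and the research is fine. Those conditions cannot be handled by experience anyway; it comes down to luck. For illnesses that do require experience, whether diagnostic or clinical, Australia certainly cannot match China.
Take paronychia as an example. Australia once had a case in which a teenager with paronychia went to see a doctor. As the original poster says, this kind of thing goes no further than a GP, and in 99% of cases an Australian GP sends you home to take painkillers. The child went home and took painkillers, but they had no effect and the swelling kept getting worse. The emergency department then said that painkillers would be enough and it would heal on its own. Later the child developed sepsis from a bacterial infection, was rushed to emergency, could not be saved, and died. The coroner's inquest ran for several months, and the matter was closed with a payout of several hundred thousand.
The year before last I got paronychia on my middle finger too, and it was badly swollen. I knew a GP would tell me to take painkillers and let it heal by itself, so I called a friend who used to be a nurse in China. She told me to buy iodine tincture at a pharmacy and soak the finger in it for two minutes a day, and it would be fine in two days. I did as she said, and it healed. Since immigrating, this friend has worked as a house cleaner in Australia because her English is not very good. When I thanked her later, she said, "Oh, when I was at the hospital I saw dozens of these a day. That is how we always handled them."
GP triage is nothing sophisticated; almost every region does it. China has community clinics too, and Hong Kong divides it even more clearly, with triage by family doctors and triage in emergency rooms. It is nothing to be ashamed of that China's emergency rooms do not require an eight-hour wait; it means medical resources are sufficient and sustainable. That is hard to achieve where people are few. Australia still has many sparsely populated places with no doctor at all, which rely on interns rotating through to hold clinics. In other words, no matter when you fall ill, you have to be seen during those few days. The intern usually holds the clinic in premises provided by the local council, stays ten days or half a month, and leaves. Then you wait for the next "itinerant doctor". That is the inconvenience of a small population, and there is nothing to boast about.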