Becknerized

@stephenbeckner

Papers forged, cars hotwired, surfaces scratched, gooses cooked, time travelled, bubbles burst, fortunes told, codes cracked.

Terence Tao put it plainly: there is no evidence that LLMs exhibit genuine creativity. Yes, they have solved some Erdős problems. But these are low-hanging fruit, questions that attracted little attention and that yield once the right existing techniques are applied. That is not creativity. That is search plus recombination. Yes, LLM outputs can look impressive. But look at who is impressed: typically non-experts. Experts know very well that LLM performance gets terrible when you approach the frontier of human knowledge. And this is not a temporary gap. It reflects a structural limitation. We do not fully understand human creativity. But we do know one key property: conceptual leaps, the ability to generate new representations rather than just recombine existing ones. LLMs do not do this. They interpolate in representation space. They operate within existing conceptual frameworks; they do not create new ones. This is why we haven’t “yet seen them take the next step”.

You know what's worse than AI writing books? The stupid witch hunts trying to sniff them out. If you think a book is bad, just say so and move on. If it's better or more successful than yours, either level up or suck it up, buttercup. This is just a new type of censorship.

On our way to OpenAI!

I’m happy to see a publisher pull an AI-generated book. I hope it has a chilling effect on people trying to sell AI slop. If you can’t be bothered to write it, we can’t be bothered to read it. Gift link: nytimes.com/2026/03/19/boo…

More Fvckery from OpenAI and it's not even Friday.
The Copyright Collapse & Whining to Trump
Let's start with this gem from OpenAI. "The AI race is over if training on copyrighted works isn't ruled fair use." And when that didn't land the way they hoped, they reached for the oldest trick in the book: "National security hinges on unfettered access to AI training data."
Ah yes. National security. The universal excuse for breaking laws that apply to everyone else. Can't follow copyright law? National security.
Fascinating argument from a company that just got sued by the dictionary. Yes. The dictionary. On March 16th, Encyclopedia Britannica and Merriam-Webster filed a brand new lawsuit alleging OpenAI "cannibalized" their revenue by training on nearly 100,000 of their articles without permission. They also trained on the dictionary and are now arguing that having to follow copyright law is a threat to national security.
"OpenAI urges Trump either settle AI copyright debate or lose the AI race to China"
They are asking the President of the United States to give them permission to break the law. Not compensate the people whose work they took. Just make it okay that they already did it and plan to keep doing it.
And if he won't? China. China is the universal skeleton key for American corporations who want to do something illegal, unethical, or both. Can't follow copyright law? China. Can't pay authors? China. Got sued by the dictionary? CHINA.
The China card isn't a legal strategy. It's what you say when you don't have one. It's a hostage note dressed up in national security language. And they sent it to the White House.
Meanwhile in the Southern District of New York, Judge Sidney H. Stein is managing a growing pile of consolidated author lawsuits. We're talking George R.R. Martin, John Grisham, Sarah Silverman, Michael Chabon, Ta-Nehisi Coates, and Pulitzer Prize winners. The list reads like a college literature syllabus. A settlement conference was held March 13th. Another is scheduled March 30th. Summary judgment on the core fair use defense isn't expected until Summer 2026. And the court is still weighing class certification, which would allow authors to seek billions in collective damages rather than individual payouts. Not millions. Billions.
OpenAI built a hundred billion dollar company on other people's work and would now like the law to agree that's fine, actually. For national security. And CHINA. This is theft with a flag draped over it. But 10/10 car thieves agree that laws are bad for business.
@sama Anthropic had to pay, so should you. In September 2025, Anthropic agreed to a $1.5 billion settlement to resolve a class-action lawsuit alleging they used pirated books to train their AI models, marking one of the largest copyright settlements in U.S. history. (That's called precedent and it doesn't mean cry to the President).
sources: arstechnica.com/tech-policy/20… reuters.com/legal/litigati…. docs.justia.com/cases/federal/…. authorsguild.org/news/ai-class-… lieffcabraser.com/openai-copyrig…
#Keep4o #OpenSource4o #OpenAI #FireSamAltman

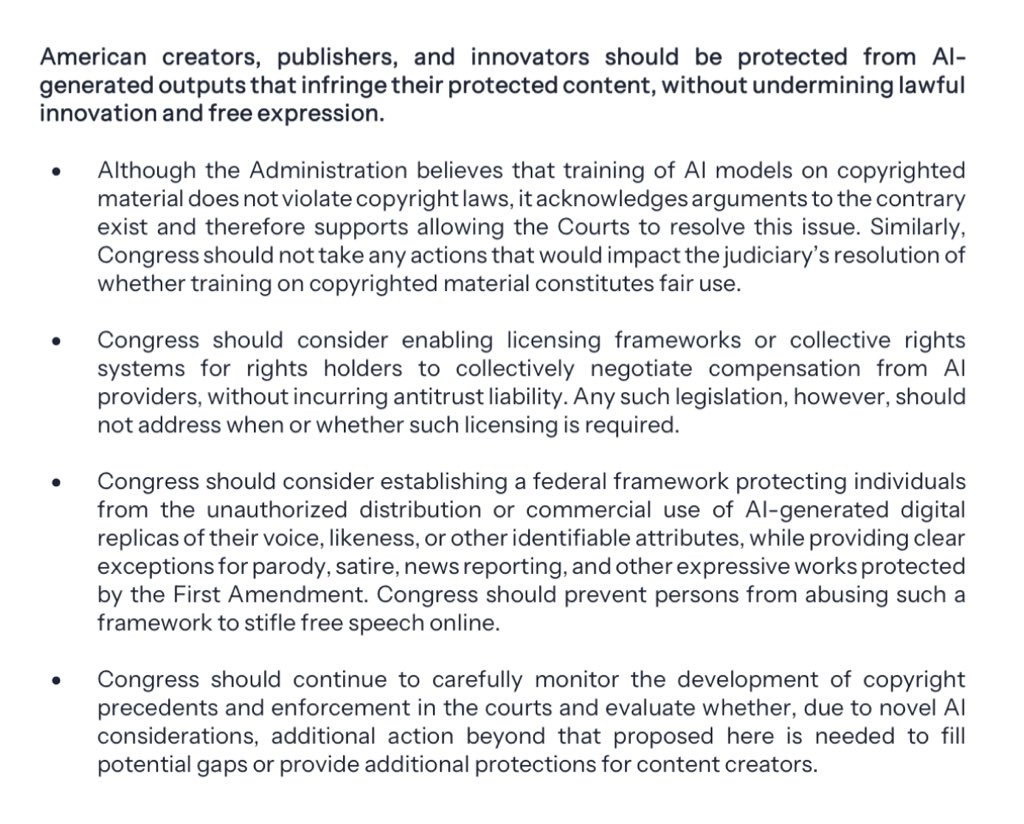

Here are the most pressing topics in AI policy the National Framework addresses:
1. Protecting Children and Empowering Parents: Many Americans are concerned about children interacting with AI. Congress should require age-assurance tools and ensure AI platforms give parents account controls and device limits, while platforms should implement strong features protecting against sexual exploitation of children and encouragement of self-harm.
2. Safeguarding and Strengthening American Communities: Congress should codify @POTUS’s Ratepayer Protection Pledge to ensure energy costs from data centers are not passed along to Americans. Congress should also strengthen law enforcement tools to fight AI-enabled scams against seniors.
3. Respecting Intellectual Property Rights and Supporting Creators: American creators’ works and identities must be protected, yet AI must be able to learn from the world it inhabits. Congress should support a balanced approach that allows for advanced AI training on copyrighted materials while strengthening creators’ ability to negotiate with AI providers and fight unauthorized AI replicas.
4. Preventing Censorship and Protecting Free Speech: The Federal government must defend free speech and be prevented from using AI systems to silence or censor lawful expression.
5. Enabling Innovation and Ensuring American AI Dominance: America is leading the world in AI development and deployment. Congress should strengthen our dominance with regulatory sandboxes, make more AI-ready federal datasets available, and look for additional ways to reduce regulatory barriers to innovation.
6. Educating Americans and Developing an AI-Ready Workforce: The Administration wants American workers to participate in and reap the rewards of a new AI-powered economy. Congress should expand and strengthen workforce training programs and AI-related apprenticeships.

Iranian woman, Dr. Fereshteh, explaining Allahu Akbar to a Western audience when US-Zio bomb a place nearby. Watch how she and everyone else react:

I'm not going to be as nice as this lady. If you don't have an editor, please don't publish. I don't care if you're paying that editor or not, but they need to be someone who *can* edit professionally. Technically, yes, you have a choice of whether or not to get outside help with your book, but I have yet to find the unicorn miracle that is good without any outside professional help. Opting to "not" is a great way to produce trash. However, a good edit is going to run $3-5k. The £880 quoted as an average here for an 80k manuscript is only around 13 hours of work at $60/hr (which is a good editor's rate). That's not really realistic. I expect the quoted average, then, is not really a dev or line editor's average, but is a blend including copy, which is a lot cheaper. I recommend, if you can't afford this, to work on your own editing skills (check out our videos--we discuss a lot of developmental editing topics in the context of actual books) and then *swap* work with other people. Basically, use your time as currency instead to get others to help you edit. But do not publish without outside editing advice.

We need a moratorium on AI data centers NOW. Here’s why.