Monishwaran Maheswaran

36 posts

@sudomonish

@Berkeley_EECS + Math | AI Research @berkeley_ai | Nuclear Research @BerkeleyLab | Building hyper-intelligent machines.

Can LLMs Self-Verify? Much better than you'd expect.

LLMs are increasingly used as parallel reasoners, sampling many solutions at once. Choosing the right answer is the real bottleneck. We show that pairwise self-verification is a powerful primitive.

Introducing V1, a framework that unifies generation and self-verification:

💡 Pairwise self-verification beats pointwise scoring, improving test-time scaling
💡 V1-Infer: Efficient tournament-style ranking that improves self-verification
💡 V1-PairRL: RL training where generation and verification co-evolve for developing better self-verifiers

🧵👇
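To make "tournament-style ranking" over pairwise comparisons concrete, here is a minimal sketch of a single-elimination bracket. It is an illustration under assumptions, not V1-Infer itself: `tournament_select` and `toy_prefer` are hypothetical names, and the toy judge stands in for a model call that compares two candidate solutions.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def tournament_select(candidates: Sequence[T], prefer: Callable[[T, T], bool]) -> T:
    """Pick one candidate via a single-elimination bracket.

    `prefer(a, b)` is a hypothetical pairwise judge that returns True
    when `a` is judged better than `b`. Uses N - 1 comparisons in total.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    pool: List[T] = list(candidates)
    while len(pool) > 1:
        next_round: List[T] = []
        # Compare neighbours pairwise; the winner advances.
        for i in range(0, len(pool) - 1, 2):
            a, b = pool[i], pool[i + 1]
            next_round.append(a if prefer(a, b) else b)
        # With an odd pool size, the last candidate gets a bye.
        if len(pool) % 2 == 1:
            next_round.append(pool[-1])
        pool = next_round
    return pool[0]


def toy_prefer(a: int, b: int) -> bool:
    # Assumption: stand-in for an LLM judge. It prefers the answer
    # closer to a hidden target of 42.
    return abs(a - 42) <= abs(b - 42)


answers = [17, 40, 42, 58, 41]
best = tournament_select(answers, toy_prefer)
print(best)
```

A bracket like this needs only N - 1 pairwise calls for N candidates, whereas comparing every pair needs N(N - 1)/2. The trade-off is that a single noisy judgment can eliminate a good candidate.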

🎥 Video generation is hitting the memory wall.

As videos get longer, the KV cache quietly explodes, and long-horizon consistency starts to break.

We built Quant VideoGen: a training-free KV cache compression method for auto-regressive video diffusion. Instead of storing every KV in high precision, QVG exploits video’s spatiotemporal redundancy with semantic-aware smoothing + progressive residual quantization.

🚀 Up to 7× KV memory reduction
⚡ <4% overhead
✅ Strong long-video quality
🕹️ Deploy HYWorldPlay on your own RTX 5090 locally

KV compression is becoming a core scaling primitive, not just for LLMs, but for video generation too.

Paper: arxiv.org/abs/2602.02958
Code: github.com/svg-project/Qu…

(1/5)
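For readers unfamiliar with residual quantization, the following is a generic sketch of the idea: quantize a vector coarsely, then quantize what is left over, and repeat. It is not QVG's algorithm (it omits semantic-aware smoothing and any video-specific grouping), and every name and value in it is hypothetical.

```python
from typing import List, Tuple

Stage = Tuple[List[int], float, float]  # (codes, scale, offset)


def quantize(values: List[float], bits: int) -> Stage:
    """Uniform quantization of `values` to integer codes with `bits` bits."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize(stage: Stage) -> List[float]:
    codes, scale, lo = stage
    return [c * scale + lo for c in codes]


def residual_quantize(values: List[float], bits: int, stages: int) -> List[Stage]:
    """Each stage quantizes the error left over by the previous stages."""
    out: List[Stage] = []
    residual = list(values)
    for _ in range(stages):
        stage = quantize(residual, bits)
        approx = dequantize(stage)
        out.append(stage)
        residual = [r - a for r, a in zip(residual, approx)]
    return out


def reconstruct(stages: List[Stage]) -> List[float]:
    """Sum the dequantized stages to approximate the original vector."""
    total = [0.0] * len(stages[0][0])
    for stage in stages:
        for i, v in enumerate(dequantize(stage)):
            total[i] += v
    return total


def max_error(a: List[float], b: List[float]) -> float:
    return max(abs(x - y) for x, y in zip(a, b))


# Assumption: a tiny made-up vector standing in for one slice of cached keys.
keys = [0.12, -0.80, 0.33, 0.95, -0.41, 0.07]
one_stage = residual_quantize(keys, bits=2, stages=1)
two_stage = residual_quantize(keys, bits=2, stages=2)
err1 = max_error(keys, reconstruct(one_stage))
err2 = max_error(keys, reconstruct(two_stage))
print(err1, err2)
```

Each extra stage costs another set of low-bit codes plus a scale and offset, and in exchange shrinks the reconstruction error, so the number of stages acts as a memory versus fidelity dial.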

GPT-5.5 on ARC-AGI (Verified)

ARC-AGI-2:
- Max: 85.0%, $1.87
- High: 83.3%, $1.45
- Med: 70.4%, $0.86
- Low: 33%, $0.35

GPT-5.5 is now state of the art on ARC-AGI-2

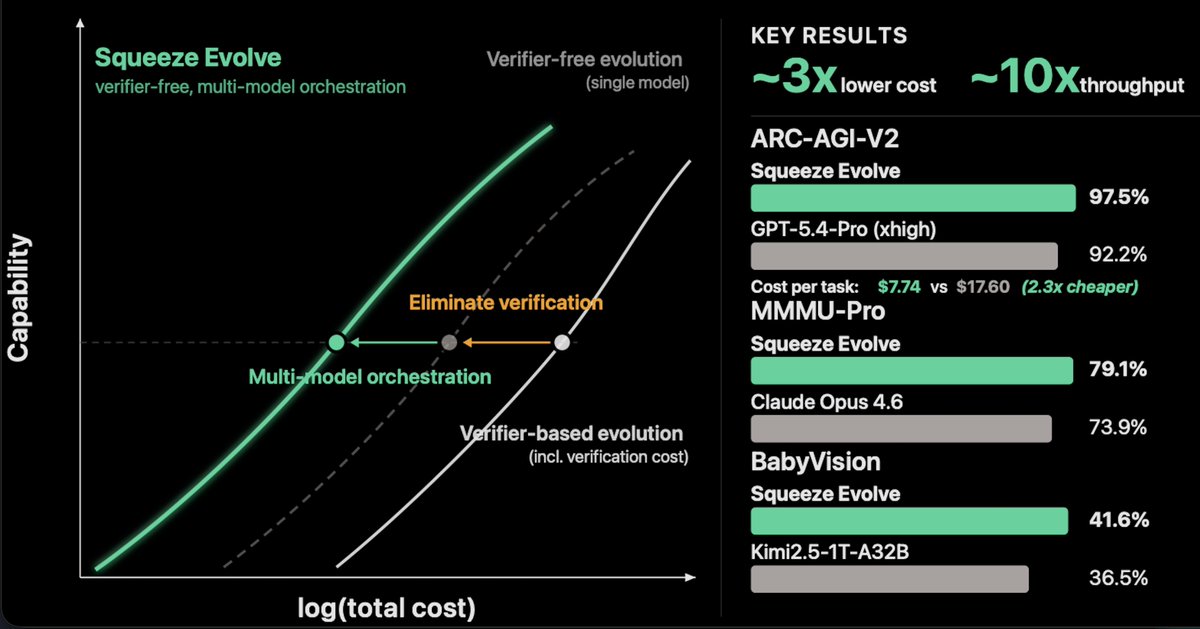

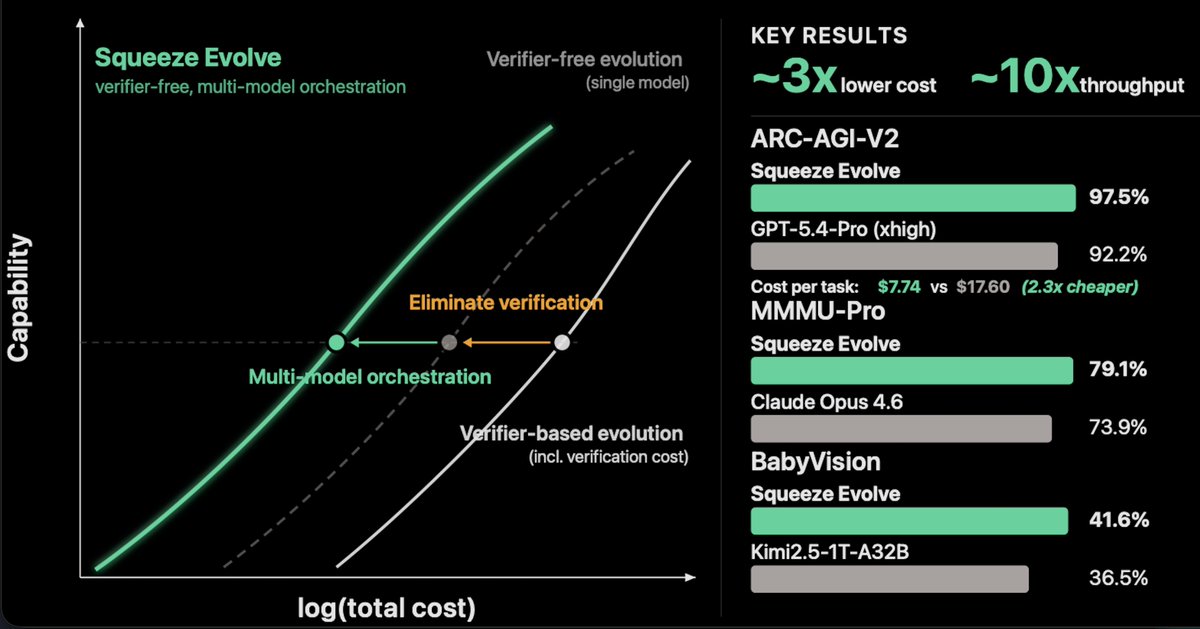

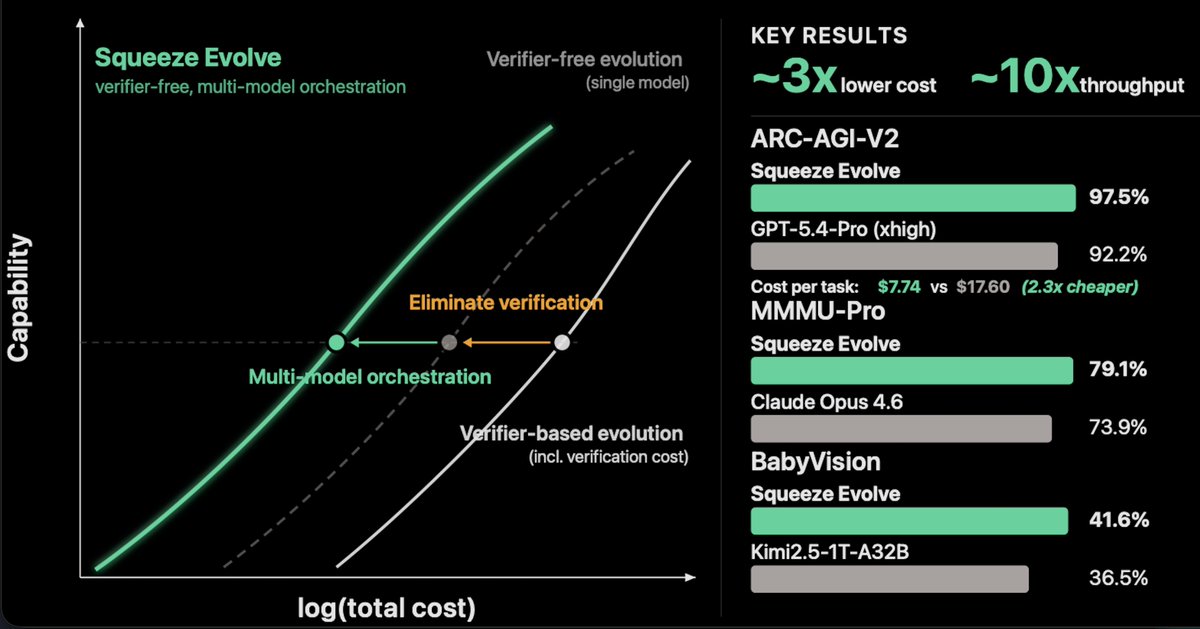

Super excited to introduce our latest work: Squeeze Evolve. We unify test-time scaling methods into one evolutionary framework, then orchestrate many models across it. 3x lower cost. 10x throughput. 97.5% (SoTA) on ARC-AGI-V2. No verifier required. Framework: squeeze-evolve.github.io
