Sumuk

@sumukx

research @google / prev @PrimeIntellect @huggingface, phded for a while at @siebelschool

I'm done. I'm f***ing done.

It's true. Here's a plot of GPT models and their usage of "goblin", "gremlin", "troll", etc. over time. There's no anti-gremlin system instruction on our side, so we get to see GPT-5.5 run free.

This GitHub incident is insane. Merge queue commits have been reverting previously merged commits at random. This not only breaks the mental contract teams have with Git in general, but is subtle enough to be really hard to unravel after the fact. githubstatus.com/incidents/zsg1…

Getting lots of questions on why the landing page / docs were updated if only 2% of new signups were affected. This was understandably confusing for the 98% of folks not part of the experiment, and we've reverted both the landing page and docs changes.

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

To contribute to open-source: a thoughtful issue >> a thoughtless PR

I swear this is a dystopian parody filmed in 1996 as a warning about how the internet could go wrong. CHATBOTS DON’T HAVE A PSYCHOLOGY. THEY DON’T HOLD VALUES. THEY DON’T NEED FUCKING THERAPY. If chatbot companies are *paying* morons like this, what else do you need to know?

Please, I'm begging you, try to critically examine the differences between these two pieces of writing. ChatGPT editing did not improve this. Every single change only served to weaken your claims significantly. Everything is now hedged into oblivion: no longer have you outlined a "problem," now it's merely a "flaw." "It is true" now demoted to "it appears to be the case." "Is" gets a "usually" tacked on. A thesis statement at the end of the first paragraph gets run over by noisy, out-of-context example-whittling. All for fear of being misconstrued. And at the end, the argument that gets spat out isn't even yours anymore! You argued that Graeber failed to create a true account of work because he did not understand Chesterton's Fence. ChatGPT is arguing that it is possible some apparently bullshit jobs could be secretly load-bearing if you squint. These are two different statements. The second is weaker and less compelling. It says less. And it's fucking longer! Don't do this anymore! Stop doing this! It's worse!!!

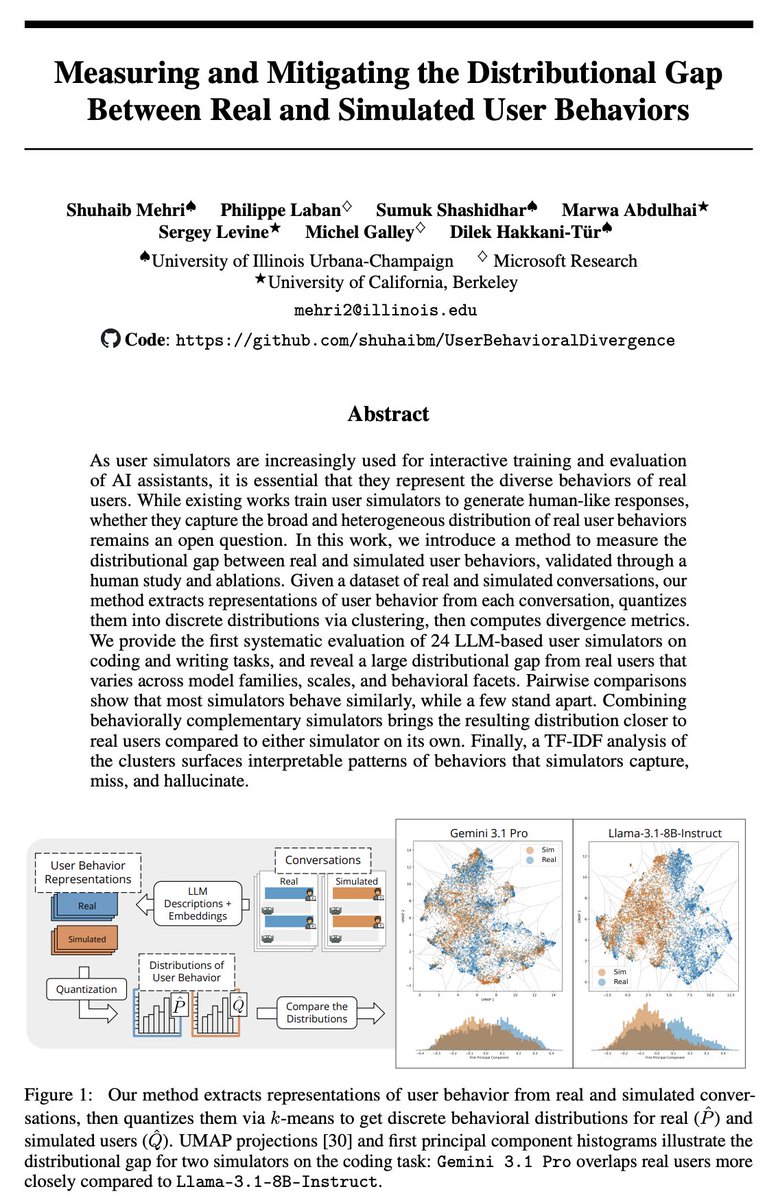

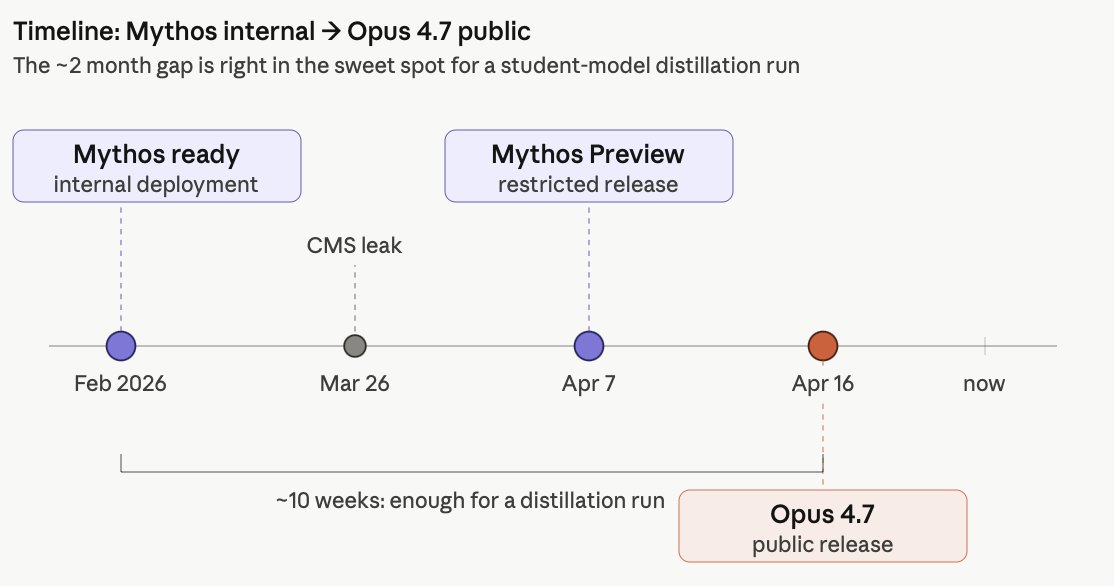

Hold on, something doesn't add up here. Opus 4.7 got much worse in needle in the haystack? Need to dig into this.

For You feed is great today, this is Nikita’s Liberation Day