Steven Shen

@syshen

Cofounder and CTO of CuboAi, CEO of JidouAI, ex-Circler

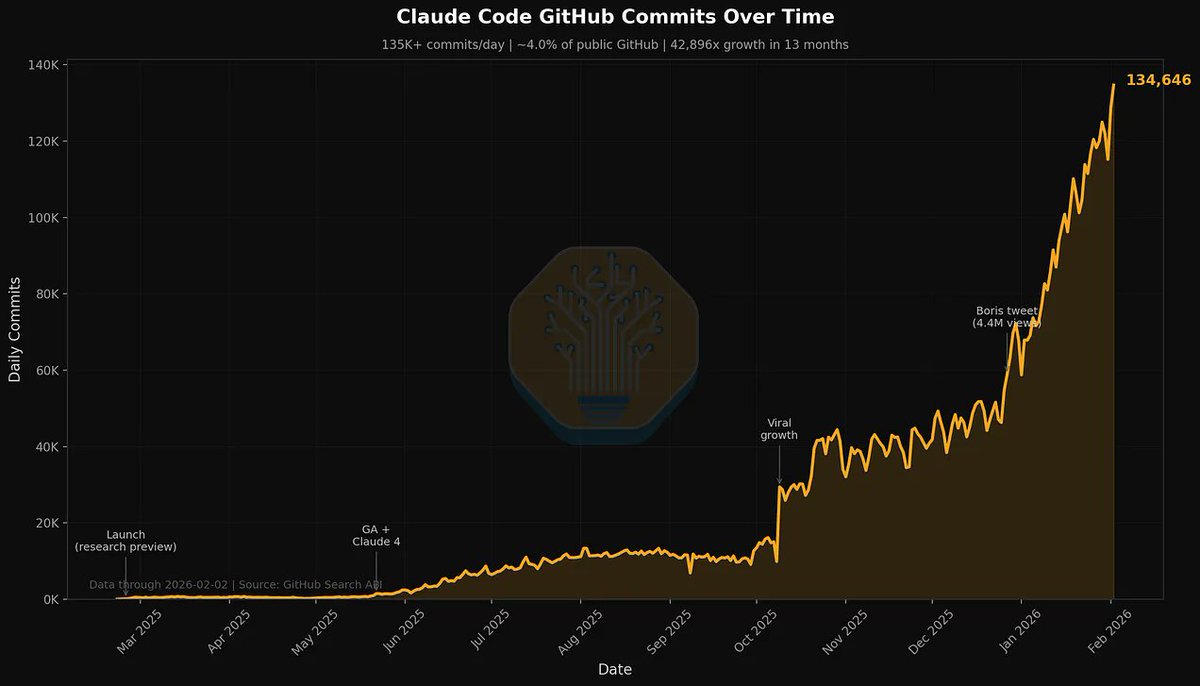

Holy shit. Anthropic engineers don't write code anymore.

A new hire just leaked what's actually happening inside the company shipping harder than anyone in 2026:

Nobody on his team has hand-written code in months. They run multiple agents in parallel and act like managers, not engineers.

His exact words: "if you're just watching an agent code, you're already behind. that idle time should be spent spinning up another agent and directing it somewhere else."

The mental model isn't "use AI to code faster." It's "you are the PM, the agents are your engineers, and your job is to keep all of them unblocked."

He called it being "fully AI aligned" as a team and said it changes what's even possible to build.

The productivity gap between people who think this way and people who don't is already enormous. And the proof is simple: Anthropic has shipped harder than any company in 2026.

If you're still hand-writing code, you're not behind on tools. You're behind on the job itself.

Had a great chat with @lennysan on some of the fun stuff happening at the intersection of AI and growth! Thanks for having me on, Lenny, had a blast :) open.spotify.com/episode/08QWCm…

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

Claude Code is the Inflection Point: What It Is, How We Use It, Industry Repercussions, Microsoft's Dilemma, Why Anthropic Is Winning. newsletter.semianalysis.com/p/claude-code-…