sysxplore

@sysxplore

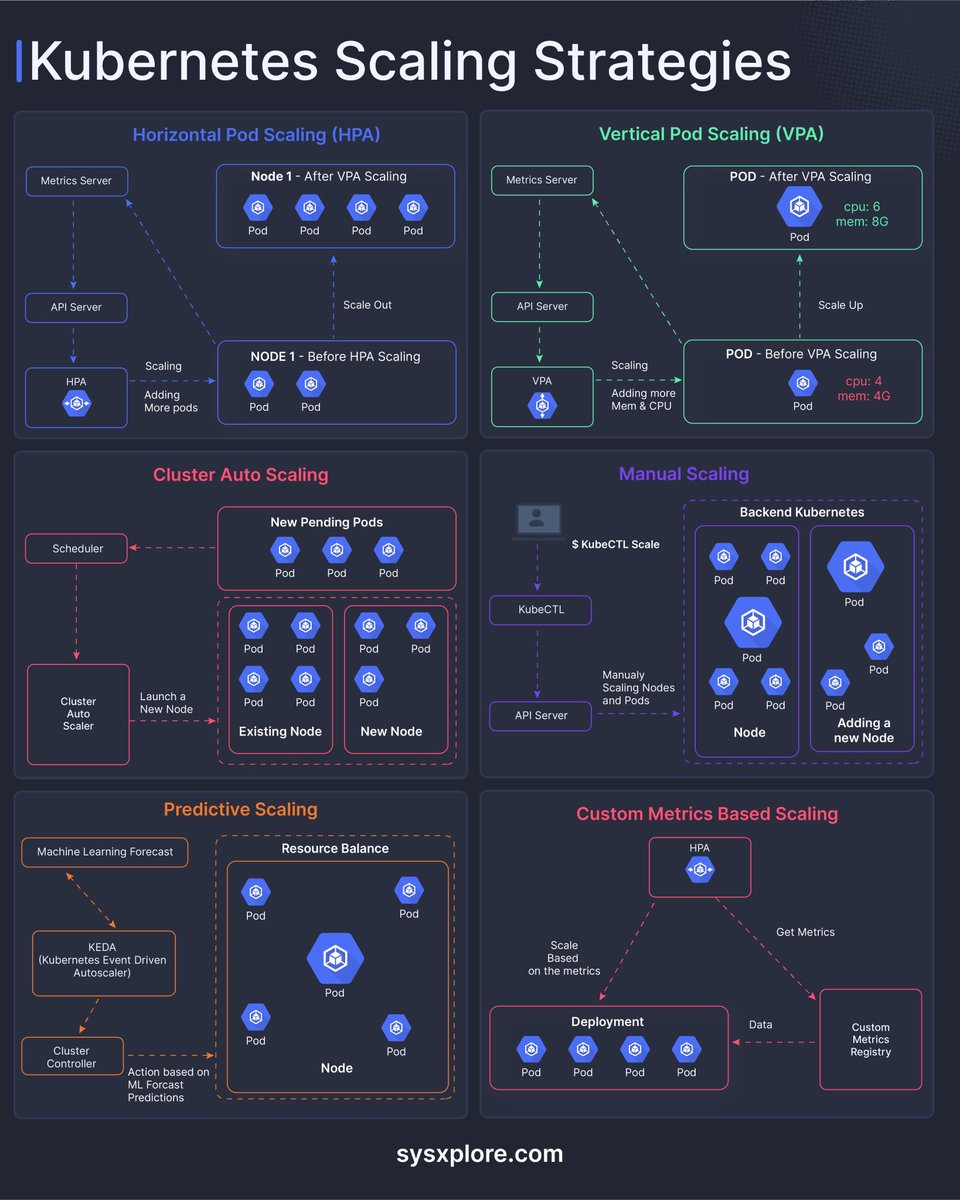

🚀 Kubernetes Scaling Strategies: Beyond Just "Add More Pods"

Scaling in Kubernetes isn't one-size-fits-all. It's a toolkit of strategies, each solving a different problem depending on workload patterns, resource constraints, and business needs.

Here's a quick breakdown of the key approaches:

🔹 Horizontal Pod Autoscaling (HPA): Scale *out* by adding more pods based on metrics like CPU or memory. Ideal for handling traffic spikes and stateless applications.

🔹 Vertical Pod Autoscaling (VPA): Scale *up* by adjusting the CPU and memory requests of existing pods. Useful when the replica count is stable but per-pod resource needs are hard to predict.

🔹 Cluster Autoscaling: Automatically adds nodes when pods can't be scheduled and removes nodes that sit underused, so cluster capacity tracks actual demand.

🔹 Manual Scaling: Still relevant for controlled environments or predictable workloads. Gives full control, but requires active management.

🔹 Predictive Scaling (KEDA, ML-based): Move from reactive to proactive. ML-based forecasting anticipates demand from historical data, while KEDA adds event-driven triggers that scale on external signals.

🔹 Custom Metrics Scaling: Go beyond CPU/memory. Scale based on business metrics like queue length, request rate, or user activity.

Key takeaway: The real power comes from combining these strategies, not choosing just one. Smart scaling = better performance + optimized cost.

How are you handling scaling in your Kubernetes workloads today? Are you still reactive, or moving toward predictive systems?
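To make the HPA and custom-metrics ideas concrete, here's a minimal Python sketch of the replica calculation described in the Kubernetes HPA documentation: desired = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance (0.1 by default). The function name, the min/max bounds, and the sample numbers are assumptions for illustration. This is not the controller's real code, and it skips details like stabilization windows and not-yet-ready pods.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Illustrative HPA-style calculation (hypothetical helper, not a real API).

    current_metric and target_metric are per-pod averages, for example
    CPU utilization in percent or queue messages per pod.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")

    ratio = current_metric / target_metric

    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)

    # Keep the result inside the configured bounds (assumed values here).
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # CPU-based: 4 pods averaging 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
    # Custom metric: 2 pods, 250 queued messages per pod, target 100 per pod.
    print(desired_replicas(2, 250, 100))
```

The same formula covers both cases: only the metric changes, which is why custom metrics like queue length plug into horizontal scaling so naturally.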