Talha Sarı


Codex goal mode is kinda crazy

Peter Steinberger, creator of OpenClaw, on why AI agents still produce "slop" without human taste in the loop: "You can create code and run all night and then you have like the ultimate slop because what those agents don't really do yet is have taste." Peter is direct: raw capability without direction still produces mediocre output. "They are spiky smart and they're really good at things, but if you don't navigate them well, if you don't have a vision of what you're going to build, it's still going to be slop. If you don't ask the right questions, it's still going to be slop." Great AI-assisted work is defined by the human guiding it. @steipete describes his own creative process when starting a new project: "When I start a project, I have like this very rough idea what it could be. And as I play with it and feel it, my vision gets more clear. I try out things, some things don't work, and I evolve my idea into what it will become." Most people skip this part entirely, front-loading everything into a single prompt and wondering why the result feels hollow. "My next prompt depends on what I see and feel and think about the current state of the project." Each step informs the next. The work itself is the feedback loop. "But if you try to put everything into a spec up front, you miss this kind of human-machine loop. And then I don't know how something good can come out without having feelings in the loop — almost like taste." The agentic trap is what happens when you remove yourself from the process too early.

We partnered with University of Chicago economist @SuproteemSarkar to study how more capable models have changed the way people use Cursor. Across 500 teams, we find that developers are tackling more ambitious work with AI, with a 68% increase in high-complexity tasks this year.

GLM-5.1 weights just dropped. 🎉 This is a strong model. > Excels at coding, just under Claude Opus 4.6 and above Gemini 3.1 Pro. > Built to work across longer multi-step agentic workflows. > At 754B it's quite a bit smaller than the frontier models it's competing against

Another big release: GLM-5.1! China is on fire! Significant increase in evals compared to GLM-5.0. tl;dr: GLM-5.1 is the new open-source agentic coding model that significantly outperforms its predecessor by sustaining long-horizon problem-solving over hundreds of iterations, continuously improving results instead of plateauing, and achieving state-of-the-art performance on complex software engineering benchmarks.

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents, and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web UI), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
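
For a rough idea of what a "small and naive search engine over the wiki" might look like, here is a minimal sketch. It assumes the wiki is a directory tree of .md files, as described above. The function names (`build_index`, `search`, `main`) and the TF-IDF-style scoring are illustrative assumptions, not the tool the post's author actually built.

```python
import argparse
import math
import re
from collections import Counter
from pathlib import Path

TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return TOKEN_RE.findall(text.lower())


def build_index(wiki_dir):
    """Map each .md file (path relative to wiki_dir) to its token counts."""
    root = Path(wiki_dir)
    return {
        str(path.relative_to(root)): Counter(tokenize(path.read_text(encoding="utf-8")))
        for path in sorted(root.rglob("*.md"))
    }


def search(index, query, top_k=5):
    """Rank files by a simple TF-IDF-style score; returns (name, score) pairs."""
    n_docs = len(index)
    scores = {}
    for term in set(tokenize(query)):
        # Document frequency: how many files contain the term at all.
        df = sum(1 for counts in index.values() if term in counts)
        if df == 0:
            continue
        # Smoothed inverse document frequency (an assumed, common variant).
        idf = math.log((1 + n_docs) / (1 + df)) + 1.0
        for name, counts in index.items():
            if term in counts:
                scores[name] = scores.get(name, 0.0) + counts[term] * idf
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]


def main(argv):
    """Hypothetical CLI entry point: <wiki_dir> <query> [--top-k N]."""
    parser = argparse.ArgumentParser(description="Naive search over a markdown wiki")
    parser.add_argument("wiki_dir")
    parser.add_argument("query")
    parser.add_argument("--top-k", type=int, default=5)
    args = parser.parse_args(argv)
    for name, score in search(build_index(args.wiki_dir), args.query, args.top_k):
        print(f"{score:8.2f}  {name}")
```

Calling `main(sys.argv[1:])` from a script would expose this as a command an LLM agent could run as a tool. At the ~100-article scale mentioned above, rebuilding the index on every call is probably fine; a larger wiki would likely want the index cached.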

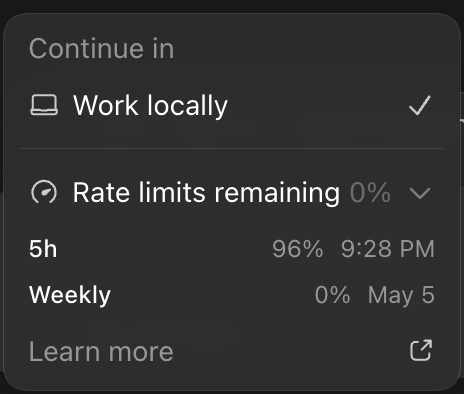

I haven't had context window anxiety since GPT-5.1-Codex-Max when the model got natively trained on compaction. I let a thread go on until the feature is done and rely on auto compaction! You can even bring that same compaction into your own apps 👇