T. Budiman Ξ 🦇🔊🛡️ reposted

T. Budiman Ξ 🦇🔊🛡️

1.1K posts

@tbudiman

software crafter, lean-agile practitioner, open source advocate. #Web3

Bandung, ID · Joined February 2009

5.3K Following · 686 Followers

@HomeI68981 @ProdigalThe3rd I watched it, the context is the question in the screen (why do you teach game theory), he's not joking in his answer.

@ProdigalThe3rd nice clip out of context😂

if you see the whole video, you'd know the 2 of them are making fun of the madness of cults, religions, messiahs THE ENTIRE TIME. he was taking the piss

go watch, here is entire 2 hour video, titled "The Multiverse of Madness"

youtube.com/watch?v=dhLr7Z…

Tucker’s “expert” Prof. Jiang is a cult leader:

“My plan… is to start my own religion. I’m using the YouTube platform… to test certain ideas that I will need for this new religion. That’s why I switch [topics] around a lot.

My goal is to be the Messiah. So I’m going through the R[esearch] & [D]evelopment phase…

Eventually I will go & spread my message like Paul did.“

@KeithWoodsYT Keith, you’re smarter than this.

This was a conversation about cults.

He was kidding, because it fit the subject matter.

It’s obvious when you watch more than 2 minutes.

Just captured my Giza World snapshot - agents in motion. world.gizatech.xyz/share/58782b82…

T. Budiman Ξ 🦇🔊🛡️ reposted

Huckabee in trouble?

Saudi Arabia, Egypt, Jordan, UAE, Indonesia, Pakistan, Türkiye, Qatar, Kuwait, Oman, Bahrain, Lebanon, Syria, and Palestine, along with the OIC, the Arab League and the GCC, strongly condemn the US Ambassador to Israel, who said it would be acceptable for Israel to exercise control over the Middle East

T. Budiman Ξ 🦇🔊🛡️ reposted

Two years ago, I wrote this post on the possible areas that I see for ethereum + AI intersections: vitalik.eth.limo/general/2024/0…

This is a topic that many people are excited about, but where I always worry that we think about the two from completely separate philosophical perspectives.

I am reminded of Toly's recent tweet that I should "work on AGI". I appreciate the compliment, for him to think that I am capable of contributing to such a lofty thing. However, I get this feeling that the frame of "work on AGI" itself contains an error: it is fundamentally undifferentiated, and has the connotation of "do the thing that, if you don't do it, someone else will do anyway two months later; the main difference is that you get to be the one at the top" (though this may not have been Toly's intention). It would be like describing Ethereum as "working in finance" or "working on computing".

To me, Ethereum, and my own view of how our civilization should do AGI, are precisely about choosing a positive direction rather than embracing undifferentiated acceleration of the arrow, and also I think it's actually important to integrate the crypto and AI perspectives.

I want an AI future where:

* We foster human freedom and empowerment (ie. we avoid both humans being relegated to retirement by AIs, and permanently stripped of power by human power structures that become impossible to surpass or escape)

* The world does not blow up (both "classic" superintelligent AI doom, and more chaotic scenarios from various forms of offense outpacing defense, cf. the four defense quadrants from the d/acc posts)

In the long term, this may involve crazy things like humans uploading or merging with AI, for those who want to be able to keep up with highly intelligent entities that can think a million times faster on silicon substrate. In the shorter term, it involves much more "ordinary" ideas, but still ideas that require deep rethinking compared to previous computing paradigms.

So now, my updated view, which definitely focuses on that shorter term, and where Ethereum plays an important role but is only one piece of a bigger puzzle:

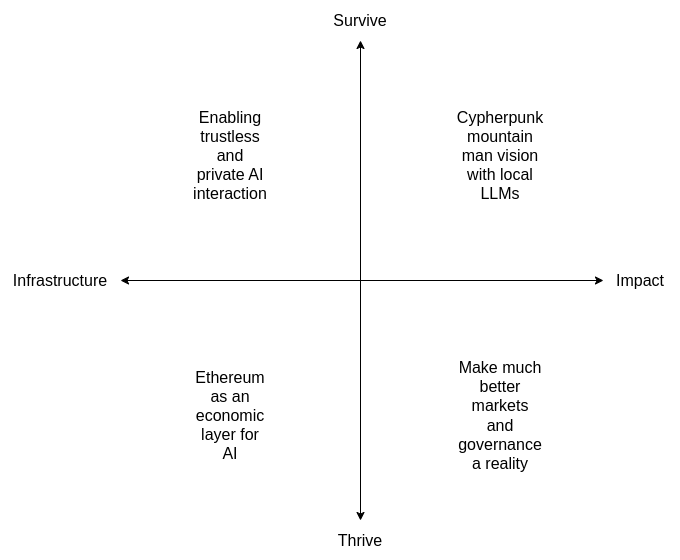

# Building tooling to make more trustless and/or private interaction with AIs possible.

This includes:

* Local LLM tooling

* ZK-payment for API calls (so you can call remote models without linking your identity from call to call)

* Ongoing work into cryptographic ways to improve AI privacy

* Client-side verification of cryptographic proofs, TEE attestations, and any other forms of server-side assurance

Basically, the kinds of things we might also build for non-LLM compute (see eg. my ethereum privacy roadmap from a year ago ethereum-magicians.org/t/a-maximally-… ), but for LLM calls as the compute we are protecting.

# Ethereum as an economic layer for AI-related interactions

This includes:

* API calls

* Bots hiring bots

* Security deposits, potentially eventually more complicated contraptions like onchain dispute resolution

* ERC-8004, AI reputation ideas

The goal here is to enable AIs to interact economically, which makes viable more decentralized AI architectures (as opposed to non-economic coordination between AIs that are all designed and run by one organization "in-house"). Economies not for the sake of economies, but to enable more decentralized authority.

# Make the cypherpunk "mountain man" vision a reality

Basically, take the vision that cypherpunk radicals have always dreamed of (don't trust; verify everything), that has been nonviable in reality because humans are never actually going to verify all the code ourselves. Now, we can finally make that vision happen, with LLMs doing the hard parts.

This includes:

* Interacting with ethereum apps without needing third party UIs

* Having a local model propose transactions for you on its own

* Having a local model verify transactions created by dapp UIs

* Local smart contract auditing, and assistance interpreting the meaning of FV proofs provided by others

* Verifying trust models of applications and protocols

# Make much better markets and governance a reality

Prediction and decision markets, decentralized governance, quadratic voting, combinatorial auctions, universal barter economy, and all kinds of constructions are all beautiful in theory, but have been greatly hampered in reality by one big constraint: limits to human attention and decision-making power.

LLMs remove that limitation, and massively scale human judgement. Hence, we can revisit all of those ideas.

These are all things that Ethereum can help to make a reality. They are also ideas that are in the d/acc spirit: enabling decentralized cooperation, and improving defense. We can revisit the best ideas from 2014, and add on top many more new and better ones, and with AI (and ZK) we have a whole new set of tools to make them come to life.

We can describe the above as a 2x2 chart. There's a lot to build!

T. Budiman Ξ 🦇🔊🛡️ reposted

Many analysts still refuse to accept a simple reality: the United States is not going to fight another war in the Middle East.

After replacing the Taliban with the Taliban in #Afghanistan, burning through more than a trillion dollars, and helping create a failed state in #Iraq, the U.S. developed a deep and lasting trauma called "the Middle East".

The era of wars sold as "democracy, human rights, and nation-building" is over. That America is gone.

Some argue it never existed. (I disagree).

What exists now is a far more constrained United States. One willing to conduct narrow, highly calculated operations with clear limits and, above all, no American body bags.

Killing Qasem Soleimani after he attacked the US embassy.

Precision strikes in Syria against ISIS. In-and-out actions designed to signal strength without triggering war.

This is the ceiling.

On Syria:

America didn't topple Assad.

Turkey toppled Assad.

Unless many of you have alternative memory.

This is where most analysis on #Iran collapses.

There is a fundamental mismatch between the scale of the Iranian challenge and the scale of force the United States is willing to use.

And the Islamic Republic understands this.

Toppling the regime in Iran is not an “operation.” It is not something you manage with a few airstrikes or symbolic military moves. Any serious attempt to alter Iran’s behavior or trajectory would require a war (let's be honest)

And that is precisely what Trump wants to avoid.

Until analysts confront this mismatch honestly, they will keep mistaking wishful thinking for strategy and nostalgia for power.

Happy to be corrected.

T. Budiman Ξ 🦇🔊🛡️ reposted

AI Has Already Conquered AGI, And We're Too Scared to Admit It?

NATURE: In a bombshell revelation that's shaking the foundations of science and society, a team of top researchers from the University of California, San Diego, has declared that artificial general intelligence (AGI) isn't some distant dream, it's here, right now, staring us in the face through the screens of our everyday AI tools.

Forget the hype and the horror stories; the evidence, they argue, is undeniable. Large language models (LLMs) like Grok aren't just mimicking humans, they're outpacing us in ways that would make Alan Turing himself do a double-take.

Picture this: back in 1950, Turing dreamed up his famous "imitation game," now known as the Turing test, to probe whether machines could ever fool humans into thinking they were one of us. Fast-forward to March 2025, and GPT-4.5 didn't just pass, it aced it, being mistaken for a human 73% of the time, more often than actual humans.

But that's just the appetizer. These AI beasts are snagging gold medals at the International Mathematical Olympiad, teaming up with math geniuses to prove theorems, dreaming up scientific hypotheses that actually pan out in labs, acing PhD-level exams, writing bug-free code for pros, and even churning out poetry that rivals the greats—all while chatting endlessly with millions worldwide.

So why the collective denial? The researchers, philosophers, AI experts, linguists, and cognitive scientists pin it on a toxic mix of fuzzy definitions, raw fear, and big-money agendas.

AGI, they say, gets tangled in ambiguity: is it about being a flawless superbrain, or just broadly competent like your average human? Spoiler: it's the latter. No single person is a master of everything, Einstein couldn't chat in Mandarin, and Marie Curie wasn't cracking number theory puzzles.

General intelligence means breadth across domains like math, language, science, and creativity, with enough depth to get the job done, not perfection.

The team dismantles the myths holding us back. AGI doesn't need to be perfect (no human is), universal (covering every skill imaginable), human-like (aliens could be smart without our biology), or superintelligent (crushing us in every field).

It's not about bodies or agency either, Stephen Hawking proved brilliance doesn't require mobility, and a brain in a vat could still blow our minds if it answered every question flawlessly.

Evidence piles up like an avalanche. At the "Turing-test level," AIs breeze through school exams and casual chats. Bump it to "expert level," and they're dominating olympiads, multilingual fluency, and frontier research, stuff that makes sci-fi AIs like HAL 9000 look like a relic. We're even inching toward "superhuman" feats, like revolutionary discoveries that no single human could claim.

Critics cry foul: "They're just stochastic parrots regurgitating data!" But when AIs solve fresh math problems, infer stats from new data, or design real-world experiments, that excuse crumbles.

They lack world models? Tell that to an AI predicting physics outcomes like a dropped glass shattering. Limited to words? Multimodal training and lab assists say otherwise. No body, no agency? Irrelevant, intelligence is about cognition, not locomotion.

This isn't just academic navel-gazing; it's a wake-up call. If AGI is here, we need clear-eyed policies to harness it, mitigate risks, and rethink what makes us human.

Denying it out of fear or hype only delays the inevitable. Turing's vision is realized; now it's time to face the future without blinders. The machines aren't coming; they've arrived, and they're ready to redefine everything.

Link: nature.com/articles/d4158…

T. Budiman Ξ 🦇🔊🛡️ reposted

A few years ago I wrote a guest article in Bankless on how Bitcoin is a toxic industry leader.

The thinking was not so much about quantum or security budget (although security budget was top of mind then) but rather that BTC stands for nothing besides fiat collapse and has no use.

Bitcoin's "bad deal" created an inherently unstable long term investment thesis for BTC, or so we argued many times over the years.

These chickens may be coming home to roost now as the public is actively negotiating a potential re-rating of Bitcoin in the context of broader market changes, including geopolitical instability (BTC is not actually a safe haven), metals volatility, and BTC posting disappointing 4-year cycle results (less than 2x, whereas previous 4y cycle was 3.5x).

The overall problem is that the religion of Bitcoin taking the oxygen out of the narrative interface with the public (they've nothing to actually build and primarily focus on marketing and coalition building), plus scams generally pissing off the public from getting involved and doing their own research, plus Bitcoiners habitually and loudly taking credit for eth's onchain growth, has left the investment public largely ignorant of what's actually going on with Ethereum hypergrowing as a global public institution and economic hub.

Within Ethereum, many of our people and orgs are now doing a transformatively bigger and better job in educating the public about modern ethereum vs a year ago. That's great. We're firing on all cylinders.

But Bitcoin (and our own legacy strategy and culture, now fixed) has created an enormous gap to close. That'll naturally take time.

We'll look back enviously on these low prices, as we already do on the old down periods of 2017, 2020, 2022, Q1 2025, etc.

When you buy ETH, you're buying an asset that is under-marketed and under-studied, and currently being dragged down by a confidence crisis in Bitcoin.

ETH to multi trillion in due course

Believe in somETHing

T. Budiman Ξ 🦇🔊🛡️ reposted

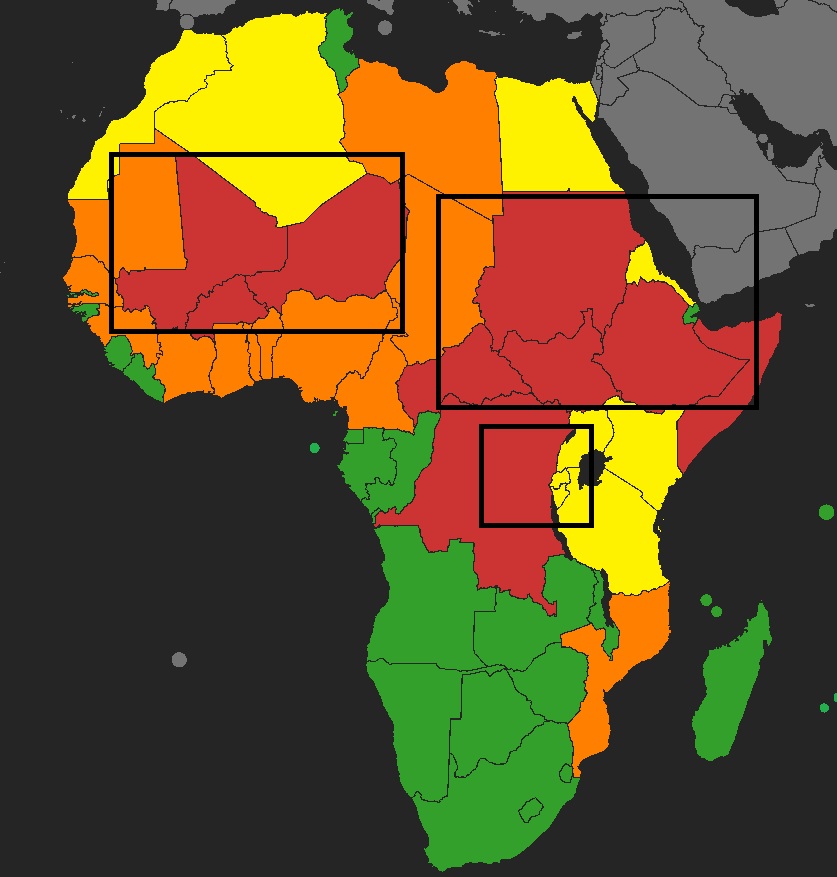

Africa today has 3 areas at war: the western Sahel, the East (Horn and Sudan) and the Great Lakes

🔴Most of the country is at war or the war is at the heart of the state situation

🟠War on the periphery of the state or not central to it but still politically and militarily predominant (also frozen conflict)

🟡Not really at war, but has limited local insurgency/terrorism or is part of a neighbouring conflict

🟢Country +/- in peace

1- Sahel : Mali, Niger and Burkina Faso are facing a general war against JNIM and IS, which control large areas in all 3 of them. This war is spreading to Mauritania, Senegal, Guinea, Ivory Coast, Ghana, Togo, Benin and Nigeria (more and more fighting at the border there, which is why they are in orange and not yellow).

A war is also ongoing between Morocco and the Polisario Front along the sand wall. A cold war is happening with Algeria (that's the reason for the yellow), a very militarised regime with internal disputes (Kabylie...). Around Lake Chad, a war is ongoing between ISWAP and Nigeria, Chad, Niger and Cameroon.

2- Eastern Africa and Horn of Africa : war in Sudan, in South Sudan, in CAR, in Ethiopia and Somalia. Eritrea is highly involved and is a highly militarised regime; Kenya is often under Al-Shabaab attacks in the east. Chad and Libya are also affected. Libya is still at war (without recent fighting), as is Chad in the northern part of the country (+ISWAP). I also added Egypt for Sinai, but fighting does not happen often there.

3- Central Africa : War in Eastern DRC, with Uganda, Rwanda and Burundi involved (no fighting directly there, but they are heavily involved, so yellow). Tanzania and Mozambique are also fighting terrorism, especially in northern Mozambique.

-> 3 areas of ongoing wars: one is mainly about terrorism, the two others are more complicated, a mix of resources, rebellions, power, foreign policy...

-> Terrorism is spreading in western Africa. Untouched countries are now seeing war at their door.

-> Southern Africa and some isolated states remain safe, far from the war zones and barely, if ever, touched

-> Wars are happening around the most populated regions of Africa (Nigeria, Guinean Gulf, Ethiopia, DRC...), a lot of population movement is happening.

-> Libya remains the main door to Europe (with Algeria, Morocco and Egypt being very closed states)

-> Sahel is burning and east Africa is collapsing

This is all for now. Please comment on how I should modify the map and why (arguments!).

Thanks

Clément Molin@clement_molin

‼️A new war may be starting in Ethiopia 🇪🇹 ‼️ Only 3 years after the ceasefire of the devastating Tigray war (600,000 dead), a new war is on the verge of breaking out in northern Ethiopia. This time, Sudan🇸🇩 Eritrea🇪🇷 Somalia🇸🇴 the UAE🇦🇪 Egypt🇪🇬 and others may be implicated. 🧵THREAD🧵1/17 ⬇️

T. Budiman Ξ 🦇🔊🛡️ reposted

On the one hand, AI influencers are breathlessly raving about Claude Code, Clawdbot, and Cowork. And on the other hand, most people I know—even software engineers—are despondent, overwhelmed about how everything is changing so quickly. I hear this from people early in their careers especially, a fear that everything they've learned and the skills they've gained are rapidly being devalued.

This is a mental trap. Don't fall for it. You should not just be watching from the sidelines or reading articles about "how software engineering is changing."

Imagine it was 1993 and the personal computer revolution was kicking off. If you could go back in time to then, what should you have done?

The answer: try everything. Buy a PC. Learn how to touch type. Figure out what the Internet is. Imbibe it all. Don't wait until it becomes a job requirement.

That's exactly what you should do with AI. Try everything. Try Claude Code, try Clawdbot, try the Excel integrations, Veo, everything you can get your hands on. Learn what it's doing. Build your intuitions. Be one step ahead of it. Evolve alongside it. Don't lose your curiosity or get swallowed by anxiety or let yourself be convinced that you'll learn it when you have to. Think deeply about how AI will change the things around you—not society, that's too hard to project—but how it will change your job, your personal life, your immediate environment.

No matter how old you are or young you are, no matter what stage of your career you are in, we are all going through the biggest technological change of the last 100 years, and we're going through it together. Nobody has the answers. It's obvious that so much is going to change, but nobody is going to figure it out before you do if you choose to stay at the frontier.

So don't hide from it. Sit at the front of the class. Pay close attention. And be grateful that it's never been easier to stay at the frontier of the most important technology change of our lifetimes.

T. Budiman Ξ 🦇🔊🛡️ reposted

2026 is the year we take back lost ground in computing self-sovereignty.

But this applies far beyond the blockchain world.

In 2025, I made two major changes to the software I use:

* Switched almost fully to fileverse.io (open source encrypted decentralized docs)

* Switched decisively to Signal as primary messenger (away from Telegram). Also installed Simplex and Session.

This year, the changes I've made are:

* Google Maps -> OpenStreetMap openstreetmap.org, OrganicMaps organicmaps.app is the best mobile app I've seen for it. Not just open source but also privacy-preserving because local, which is important because it's good to reduce the number of apps/places/people who know anything about your physical location

* Gmail -> Protonmail (though ultimately, the best thing is to use proper encrypted messengers outright)

* Prioritizing decentralized social media (see my previous post)

Also continuing to explore local LLM setups. This is one area that still needs a lot of work in "the last mile": lots of amazing local models, including CPU and even phone-friendly ones, exist, but they're not well-integrated, eg. there isn't a good "google translate equivalent" UI that plugs into local LLMs, transcription / audio input, search over personal docs, comfyui is great but we need photoshop-style UX (I'm sure for each of those items people will link me to various github repos in the replies, but *the whole problem* is that it's "various github repos" and not one-stop-shop). Also I don't want to keep ollama always running because that makes my laptop consume 35 W. So still a way to go, but it's made huge progress - a year ago even most of the local models did not yet exist!

Ideally we push as far as we can with local LLMs, using specialized fine-tuned models to make up for small param count where possible, and then for the heavy-usage stuff we can stack (i) per-query zkp payment, (ii) TEEs, (iii) local query filtering (eg. have a small model automatically remove sensitive details from docs before you push them up to big models), basically combine all the imperfect things to do a best-effort, though ultimately ideally we figure out ultra-efficient FHE.
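The "local query filtering" step in (iii) can be sketched roughly as follows. This is only an illustration under loud assumptions: the post imagines a small local model doing the filtering, whereas this sketch swaps in two hand-written regular expressions as a stand-in, and the names `PATTERNS` and `redact` are hypothetical.

```python
import re

# Stand-in for a small local model: two hand-written patterns (assumption,
# not what the post proposes). A real filter would need far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ETH_ADDRESS": re.compile(r"0x[a-fA-F0-9]{40}"),
}


def redact(text: str) -> str:
    """Replace each matched sensitive span with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    doc = "Mail bob@example.com about 0x" + "ab" * 20
    # Only the redacted text would be sent on to a remote model.
    print(redact(doc))
```

The design point is simply that the filter runs before anything leaves the machine, so the remote model only ever sees the placeholders.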

Sending all your data to third party centralized services is unnecessary. We have the tools to do much less of that. We should continue to build and improve, and much more actively use them.

(btw I really think @SimpleXChat should lowercase the X in their name. An N-dimensional triangle is a much cooler thing to be named after than "simple twitter")

T. Budiman Ξ 🦇🔊🛡️ reposted

I no longer agree with this previous tweet of mine - since 2017, I have become a much more willing connoisseur of mountains. It's worth explaining why.

x.com/VitalikButerin…

First, the original context. That tweet was in a debate with Ian Grigg, who argued that blockchains should track the order of transactions, but not the state (eg. user balances, smart contract code and storage):

> The messages are logged, but the state (e.g., UTXO) is implied, which means it is constructed by the computer internally, and then (can be) thrown away.

I was heavily against this philosophy, because it would imply that users have no way to get the state other than either (i) running a node that processed every transaction in all of history, or (ii) trusting someone else.

In blockchains that commit to the state in the block header (like Ethereum), you can simply prove any value in the state with a Merkle branch. This is conditional on the honest majority assumption: if >= 50% of the consensus participants are honest, then the chain with the most PoW (or PoS) support will be valid, and so the state root will be correct.
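To make the Merkle branch idea concrete, here is a minimal sketch in Python. It assumes a plain binary tree over SHA-256, which is a simplification: Ethereum's actual state tree is a Merkle Patricia trie using Keccak-256, and the helper names (`merkle_branch`, `verify_branch`) and the sample balances are hypothetical. It only illustrates that a client holding a trusted state root can check one value from a short list of sibling hashes, without the rest of the state.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 hash (assumption: stands in for the chain's real hash function)."""
    return hashlib.sha256(data).digest()


def merkle_branch(leaves, index):
    """Return (root, branch): the root and the sibling hashes for leaf `index`."""
    level = [h(leaf) for leaf in leaves]
    branch = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pad odd levels by duplicating the last node
        branch.append(level[index ^ 1])  # sibling of the current node
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], branch


def verify_branch(leaf, index, branch, root):
    """Recompute the path from a leaf up to the root using only the branch."""
    node = h(leaf)
    for sibling in branch:
        if index % 2 == 0:
            node = h(node + sibling)
        else:
            node = h(sibling + node)
        index //= 2
    return node == root


if __name__ == "__main__":
    state = [b"alice:10", b"bob:25", b"carol:7"]  # hypothetical balances
    root, branch = merkle_branch(state, 1)
    print(verify_branch(b"bob:25", 1, branch, root))   # genuine value
    print(verify_branch(b"bob:999", 1, branch, root))  # forged value
```

The verifier never sees the other balances; it trusts only the root, which is where the honest majority assumption enters.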

Trusting an honest majority is far better than trusting a single RPC provider. Not trusting at all (by personally verifying every transaction in the chain) is theoretically ideal, but it's a computation load infeasible for regular users, unless we take the (even worse) tradeoff of keeping blockchain capacity so low that most people cannot even use the chain.

Now, what has changed since then?

The biggest thing is of course ZK-SNARKs. We now have a technology that lets you verify the correctness of the chain, without literally re-executing every transaction. WE INVENTED THE THING THAT GETS YOU THE BENEFITS WITHOUT THE COSTS! This is like if someone from the future teleported back into US healthcare debates in 2008, and demonstrated a clearly working pill that anyone could make for $15 that cured all diseases. Like, yes, if we have that pill, we should get the government fully out of healthcare, let people make the pill and sell it at Walgreens, and healthcare becomes super affordable so everyone is happy. ZK-SNARKs are literally like that but for the block size war. (With two asterisks for block building centralization and data bandwidth, but that's a separate topic)

With better technology, we should raise our expectations, and revisit tradeoffs that we made grudgingly in a previous era.

But also, I have actually changed my mind on some of the underlying issues. In 2017, I was thinking about blockchains in terms of academic assumptions - what is okay to rely on honest majority for, when we are ok with 1-of-N trust assumption, etc. If a construction gave better properties under known-acceptable assumptions, I would eagerly embrace it.

On a raw subconscious level, I don't think I was sufficiently appreciative of the fact that _in the real world, lots of things break_. Sometimes the p2p network goes down. Sometimes the p2p network has 20x the latency you expected - anyone who has played WoW can attest to long spans of time when the latency spiked up from its usual ~200ms to 1000-5000ms. Sometimes a third party service you've been relying on for years shuts down, and there isn't a good alternative. If the alternative is that you personally go through a github repo and figure out how to PERSONALLY RUN A SERVER, lots of people will give up and never figure it out and end up permanently losing access to their money. Sometimes mining or staking gets concentrated to the point where 51% attacks are very easy to imagine, and you almost have to game-theoretically analyze consensus security as though 75% of miners or stakers are controlled by one single agent. Sometimes, as we saw with tornado cash, intermediaries all start censoring some application, and your *only* option becomes to directly use the chain.

If we are making a self-sovereign blockchain to last through the ages, THE ANSWER TO THE ABOVE CONUNDRUMS CANNOT ALWAYS BE "CALL THE DEVS". If it is, the devs themselves become the point of centralization - they become DEVS in the ancient Roman sense, where the letter V was used to represent the U sound.

The Mountain Man's cabin is not meant as the replacement lifestyle for everyone. It is meant as the safe place to retreat to when things go wrong. It is also meant as the universal BATNA ("Best Alternative to a Negotiated Agreement") - the alternative option that improves your well-being not just in the case when you end up needing it, but also because knowledge of it existing motivates third parties to give you better terms. This is like how Bittorrent existing is an important check on the power of music and video streaming platforms, driving them to offer customers better terms.

We do not need to start living every day in the Mountain Man's cabin. But part of maintaining the infinite garden of Ethereum is certainly keeping the cabin well-maintained.

vitalik.eth@VitalikButerin

@iang_fc The idea of average users personally validating the entire history of the system is a weird mountain man fantasy. There, I said it.

T. Budiman Ξ 🦇🔊🛡️ reposted

Logical Intelligence introduces the first energy-based reasoning AI model, and brings Yann LeCun into leadership as founding chair of its Technical Research Board

The 6-month-old Silicon Valley start-up unveiled an “energy based” model called Kona and says it is more accurate and uses less power than large language models like OpenAI’s GPT-5 and Google’s Gemini.

It is also starting a funding round that targets a $1bn-$2bn valuation and has named LeCun chair of its technical research board.

Most large language models answer by predicting the next token, which can sound fluent while still drifting into confident mistakes.

Kona is an "energy-based reasoning model" (EBRM) that verifies and optimizes solutions by scoring against constraints, finding the lowest "energy" (most consistent) outcome. It's non-autoregressive, producing complete traces without sequential generation, reducing hallucinations.

It focuses on trustworthy, math-grounded reasoning for high-stakes applications where LLMs fail, emphasizing safety, efficiency, and constraint enforcement in logic-heavy tasks like puzzles or proofs.

How Kona operates

It's a non-autoregressive "energy-based reasoning model" (EBRM), meaning it doesn't generate outputs sequentially (like LLMs do token-by-token) but instead produces complete reasoning traces simultaneously. Here's how it works step-by-step:

- Input Conditioning: It takes a problem, constraints, and optional targets (e.g., a desired outcome like a proof goal or spec) as inputs. These condition the model directly, unlike LLMs which rely on probabilistic sampling.

- Energy Function Scoring: Kona learns an energy function that assigns a scalar "energy" score to entire reasoning traces (partial or complete). Low energy indicates high consistency with constraints and objectives; high energy flags inconsistencies, violations, or errors. This global scoring evaluates end-to-end quality, allowing the model to assess long-horizon coherence without degrading over extended traces.

- Optimization as Reasoning: Reasoning is reframed as an optimization problem. The model searches for the lowest-energy solution by minimizing the energy function, often through iterative refinement. It can revise any part of a trace mid-process, using dense feedback to localize failures (e.g., "this step violates constraint X") and guide corrections.

- Continuous Latent Space: Unlike discrete token-based LLMs, Kona works in a continuous space with dense vector representations. This enables precise, gradient-based edits and efficient local refinements without regenerating entire sequences.

- Output: The final low-energy trace represents a valid, constraint-satisfying solution. For example, in Sudoku, it maps allowable moves and finds a puzzle completion that minimizes energy (i.e., maximizes rule adherence).

This mechanism draws from physics-inspired principles, where energy minimization finds stable states, similar to how natural systems settle into low-energy configurations.
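The general idea of "reasoning as energy minimization" can be illustrated with a toy sketch. This is not Kona's implementation: per the description above, Kona learns its energy function and refines in a continuous latent space, while this sketch assumes a hand-written discrete energy (one unit per violated "all different" constraint in a single Sudoku-like row) and a greedy cell-by-cell refinement. The names `energy` and `refine` are hypothetical.

```python
from itertools import combinations


def energy(trace):
    """Scalar score for a whole candidate: one unit per pair of equal cells."""
    return sum(1 for a, b in combinations(trace, 2) if a == b)


def refine(trace, fixed, values, max_sweeps=10):
    """Greedy refinement: revise any free cell to the value that lowers energy most."""
    trace = list(trace)
    for _ in range(max_sweeps):
        if energy(trace) == 0:
            break  # every constraint is satisfied
        for i in range(len(trace)):
            if i in fixed:
                continue  # given clues are part of the problem, not the solution
            trace[i] = min(
                values,
                key=lambda v: energy(trace[:i] + [v] + trace[i + 1:]),
            )
    return trace


if __name__ == "__main__":
    start = [3, 1, 1, 1]  # cell 0 is a fixed clue; the rest is an initial guess
    result = refine(start, fixed={0}, values=[1, 2, 3, 4])
    print(energy(start), energy(result), result)
```

Note that the score is computed over the whole candidate rather than one step at a time, which is the property the bullets above emphasize; greedy refinement like this can get stuck in general, which is one reason real systems use richer optimizers.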

Overall, Logical Intelligence views EBMs as a path beyond LLM limitations, enabling AI that "knows" rather than guesses, with applications in verifiable, efficient reasoning.

This aligns with LeCun's long-standing advocacy for objective-driven AI via energy minimization, as opposed to autoregressive prediction.

T. Budiman Ξ 🦇🔊🛡️ reposted

I used to think Sapiens was a great book. Sweeping, provocative, the kind of book that makes you feel like you finally understand the big picture of human history. It's on every CEO's bookshelf, assigned in universities, praised as a masterwork of synthesis. Yuval Noah Harari is treated as one of the serious thinkers of our time.

But something nagged at me. Some passages felt off. Claims that human rights are just figments of our collective imagination, not real things, just stories we tell ourselves. That nations, laws, money, and justice don't exist outside our heads. That meaning itself is a delusion we've invented to cope. That we're far more powerful than ever before but not happier. That hunter-gatherers had it better because they had no dishes to wash, no carpets to vacuum, no nappies to change, no bills to pay.

That sounded depressing to me, but was it perhaps just the realistic scientific worldview? What it meant to see the world clearly, without comforting illusions?

Then I read The Beginning of Infinity by @DavidDeutschOxf. Deutsch has a concept he calls 'bad philosophy.' Not philosophy that's merely false, but philosophy that actively prevents the growth of knowledge. Ideas that close doors rather than open them. That make problems seem unsolvable by design.

After soaking in Deutsch's framework (it's dense, a bit like digesting a delicious whale), it becomes clear: Harari's books are riddled with bad philosophy. They're smuggling nihilism in under the guise of scientific objectivity. Some examples:

On meaning: "Human life has absolutely no meaning. Humans are the outcome of blind evolutionary processes that operate without goal or purpose... any meaning that people ascribe to their lives is just a delusion."

On human rights: "There are no gods in the universe, no nations, no money, no human rights, no laws, and no justice outside the common imagination of human beings."

On free will: "Humans are now hackable animals. The idea that humans have this soul or spirit and they have free will, that's over."

On progress: "We thought we were saving time; instead we revved up the treadmill of life to ten times its former speed." The Agricultural Revolution? "History's biggest fraud." We didn't domesticate wheat, "it domesticated us."

On our cosmic significance: "If planet Earth were to blow up tomorrow morning, the universe would probably keep going about its business as usual. Human subjectivity would not be missed."

On the future: "Those who fail in the struggle against irrelevance would constitute a new 'useless class.'" Homo sapiens will likely "disappear in a century or two."

This is bad philosophy. It tells us our problems are cosmically insignificant, our solutions are illusions, and that progress is neither desirable nor within our control. It's also perfect nonsense. No one would ever go back to being hunter-gatherers. Would you rather worry about your kid spending too much time on Roblox, or face the 50% chance she won't reach puberty?

And our so-called "fictions"? They ended slavery. They gave women equal rights. They solved hunger. They eradicated smallpox. They turned sand into computer chips. They got us to the moon, and hopefully soon, to Mars and beyond. These "fictions" are already reshaping the universe, and over time they may become the most potent force in it.

Now compare Deutsch:

"Humans, people and knowledge are not only objectively significant: they are by far the most significant phenomena in nature."

"Feeling insignificant because the universe is large has exactly the same logic as feeling inadequate for not being a cow."

"Problems are soluble, and each particular evil is a problem that can be solved."

"We are only just scratching the surface, and shall never be doing anything else. If unlimited progress really is going to happen, not only are we now at almost the very beginning of it, we always shall be."

Where Harari sees a species of deluded apes stumbling toward obsolescence, Deutsch sees universal explainers, the only entities we know of capable of creating explanatory knowledge, solving problems, and potentially seeding the universe with intelligence.

The difference isn't academic. Ideas shape action. If you believe life is meaningless, progress is a trap, and humans are hackable animals with no free will, how does that affect what you build? What you fight for? What you teach your children?

Harari's books sell because they flatter a fashionable pessimism. They let readers feel sophisticated for seeing through the "delusions" everyone else lives by. That smug cynicism is corrosive. And it's everywhere: in schools, in media, in bestselling books. More than half of young adults now say they feel little to no purpose or meaning in life. This is what happens when you teach an entire generation bad philosophy. Less progress, less health, less wealth. Less flourishing. And ultimately, a higher chance that civilization and consciousness go extinct.

Fortunately, there's another equally well-written, but much truer, account of Homo sapiens, appropriately titled 'The Beginning of Infinity'. And this one smuggles no despair in by the backdoor. But let's give Harari credit where it's due. He is right about one thing: if planet Earth blew up tomorrow, we wouldn't be missed. Because there'd be no one left to miss us, just a careless universe, blindly obeying physical laws. We are the only ones who can miss, but we're not going to. We're going to aim, hit, and keep going.

Full credit for the amazing meme to @Ben__Jeff


T. Budiman Ξ 🦇🔊🛡️ retweeted

TRAILER premiere! ❤

Rory Kennedy’s new documentary about my life, my way, Queen of Chess releases on @Netflix February 6th.✨

TRAILER👉 youtube.com/watch?v=8pmJgt…

#ChessConnectsUs #QueenOfChess @Netflix #Sundance2026 #SundanceFilmFestival #SundancePremiere #Netflix #Netflixpremiere #DocumentaryFilm #TrueStories #IndieFilm #MoxiePictures #Honored #WorldPremiere #HumanStory #Socialimpact #InspiringStories #trailer


T. Budiman Ξ 🦇🔊🛡️ retweeted

As an advisor to the @ethereumfndn

To our community in Iran, our eyes and thoughts are with you and the entire Iranian population. We see you bravely fighting tyranny and being brutally silenced, all your rights scrapped, and communications cut

Many of you travelled from Iran to attend @EFDevcon @EFDevconnect and @ETHGlobal. You volunteer, hack, cocreate our gatherings, tools and work on a free, decentralised, censorship resistant future alongside us

We call for your safety from violence and oppression. For a world where no one can be cut off from the internet and information. Together with you, we stand for lasting freedom.


T. Budiman Ξ 🦇🔊🛡️ retweeted

The biggest takeaways/nuggets from my interview with @GeoffreyHuntley on AI-native software engineering and the Ralph loop:

1. Software development and software engineering are now two different professions, and one of them is over. Software development, the work of translating tickets into code, can now be done by anyone for $10-42/hour while they sleep. Software engineering, architecture, security, requirements breakdown, understanding failure modes, is where humans still matter. If you identify as a "software developer," you're competing against a bash loop. If you identify as a "software engineer," your job is to orchestrate the loops.

2. The moat you think protects your software product doesn't exist anymore. Geoffrey argues you can clone any SaaS product, even those with BSL licenses or proprietary enterprise code, using AI. He ran Ralph in reverse on HashiCorp Nomad's source code to generate clean-room specifications. When he hit gaps from missing enterprise features, he ran Ralph over their marketing materials and product docs to fill them in. Any company relying on licensing or code secrecy as a competitive moat needs to rethink their strategy.

3. Cursor, Windsurf, and every other AI coding tool are essentially the same thing: a loop that automatically copies and pastes. Geoffrey built these tools professionally and says the harness does almost nothing; the model does all the work. There's no real moat in the harness business when you're reselling tokens. The only differentiator is taste and UX. Stop evaluating tools and start learning the underlying patterns.

4. Ralph is not a product. It's an orchestrator pattern for running thousands of AI loops. The simplest version is a bash loop that deterministically allocates memory, lets the LLM pick one task, executes it, then starts fresh. The key insight: every loop gets a brand new context window. You avoid compaction (where the AI gets dumber as context fills up) by never letting the context window accumulate competing goals. Your institutional knowledge lives in specification files, not in the context window.

5. Specifications are the new source code. Geoffrey's workflow: spend 30 minutes in conversation with AI, drilling into requirements, making engineering decisions, building up specs. Then throw those specs to Ralph and get weeks' worth of work in hours. The specs act as a "pin" that reframes every fresh loop with your domain knowledge. He doesn't hand-write specs. He code-generates them through structured conversation. Prototypes are now free. Refactoring is cheap.

6. The entry-level path into software engineering is closing fast. Geoffrey's company stopped hiring juniors for a year until they figured out how to interview for AI-native skills. There's already a cohort of juniors who've been practicing these techniques for six months. They'll work at a quarter of senior wages and outship them. If you're just picking up these tools today, you're behind. The new interview question: can you explain how to build a coding agent on a whiteboard?

7. Senior engineers who refuse to adapt are in more danger than juniors who embrace it. Geoffrey sees respected engineers taking hardline stances against AI ("it's installing fascism in your codebase"). Meanwhile, leadership teams are discovering Ralph and realizing three people can run the output of an entire org. When commit velocity and product velocity diverge that dramatically between adopters and non-adopters, founders notice. The hard line is coming.

8. AI is an amplifier of operator skill, not a replacement for it. If you're great at security and you get good at AI, you become a weapon. If you're mediocre and you use AI, you're still mediocre, just faster. The skill gap comes from "discoveries": learning the tricks, the loop-backs, the ways to close the automation loop. These techniques don't have standardized language yet. We're inventing the terms for the new computer every day.

9. Open source may no longer make sense for most use cases. Geoffrey, a former prominent open source maintainer whose land was funded by Open Collective, no longer uses open source libraries. His reasoning: every dependency injects a human into the loop. If there's a bug, you open a PR, chase a maintainer, wait. That's not automation. Instead, code-generate what you need. The exception: don't generate cryptography or security-critical code unless you have the domain expertise to verify it.

10. Programming languages now have a tier list based on how well AI agents can work with them. S-tier: Rust, TypeScript (especially with Effect.js), Python with Pydantic. These are source-based with strong type systems that reject invalid generations and work well with ripgrep for code discovery. F-tier: Java and .NET. Their DLL-based dependency systems don't work natively with the search tools AI agents use. The tradeoff with Rust: compilation is slow, so bad generations cost more time.

11. Corporate AI transformation programs are dangerously slow. Three-to-four-year rollouts with coaches and committees won't cut it when three founders in Bali can Ralph your entire product and undercut your pricing by 99%. Smaller teams ship faster. By the time the transformation is done, the market has moved. Geoffrey calls this the "Titanic moment": the boat is full, get the next boat.

12. We have a new computer, and that's why the legends are coming out of retirement. The last 40 years of computing decisions were designed for humans: TTYs, environment variables, slow language evolution to avoid breaking mental models. Now we have robots. What's the bare minimum a robot needs? Geoffrey sees this as the most exciting time in computing. If you're not excited about what you can now build, you haven't truly picked up the new computer yet.
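
To make takeaway 4 concrete, here is a minimal Python sketch of the loop as the post describes it: one task per iteration, a fresh context every time, and all lasting knowledge kept in a spec file. It is an illustration under stated assumptions, not Geoffrey's actual tooling (the post describes a bash loop). Every name here (`ralph_loop`, `build_context`, `run_agent`, `stub_agent`) is hypothetical, and `stub_agent` stands in for a real model call. Re-reading the same spec file each iteration is one reading of "deterministically allocates memory".

```python
from pathlib import Path
from typing import Callable, List


def build_context(spec_text: str, todo: List[str]) -> str:
    """Assemble a brand-new prompt from the spec file and the open task list only."""
    lines = ["# Specification", spec_text.strip(), "", "# Open tasks"]
    lines += [f"- {task}" for task in todo]
    lines += ["", "Pick exactly one open task and complete it."]
    return "\n".join(lines)


def ralph_loop(spec_path: Path, todo: List[str],
               run_agent: Callable[[str], str],
               max_iterations: int = 100) -> List[str]:
    """Run one task per iteration; no prompt history is carried between iterations."""
    remaining = list(todo)
    completed: List[str] = []
    for _ in range(max_iterations):
        if not remaining:
            break
        # Re-read the spec each time: the file, not the context window, holds the knowledge.
        context = build_context(spec_path.read_text(encoding="utf-8"), remaining)
        chosen = run_agent(context)  # hypothetical agent: returns the task it completed
        if chosen not in remaining:
            # The agent returned something unusable; stop instead of spinning.
            break
        remaining.remove(chosen)
        completed.append(chosen)
    return completed


def stub_agent(context: str) -> str:
    """Stand-in for a model call: picks the first task under the open-tasks heading."""
    _, _, open_tasks = context.partition("# Open tasks\n")
    for line in open_tasks.splitlines():
        if line.startswith("- "):
            return line[2:]
    return ""
```

Because each context is rebuilt from the spec plus the remaining tasks, a finished task never appears in a later prompt, which is the "never let the context window accumulate competing goals" idea in miniature.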


T. Budiman Ξ 🦇🔊🛡️ retweeted

NEW:

i wrote a complete technical guide to building agent-native software (co-authored with claude)

it covers:

- the five pillars of agent native design (parity, granularity, composability, emergent capability, self-improvement)

- files as the universal interface

- agent execution patterns with code samples

- mobile agent patterns

- advanced patterns like dynamic capability discovery

if you want to take full advantage of this moment, it's worth your time:

every.to/guides/agent-n…
