烟花老师

1.6K posts

@teach_fireworks

Founder of the AI community 【一支烟花】, AI application architect. Sharing in-depth AI content and connecting AI founders.

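Everyone online is hyping LeCun's new paper, and 90% of the takes are wrong. They say generative AI is a dead end, that the tens of billions spent over the past three years were wasted, that a 15M-parameter small model can crush trillion-parameter models. That is all content-farm exaggeration. I think the paper's real weight is even greater than the hype suggests.

Yann LeCun's team has solved the representation collapse problem that plagued JEPA for years. Earlier world models would, as training went on, squash dogs, cars, and people into identical vectors and learn nothing. This time they added just one extremely elegant mathematical regularizer, SIGReg, with no complicated tricks and no six hyperparameters to tune, and training is absurdly stable. It trains on a single GPU in a few hours. On robot control tasks, planning is 48x faster than with giant world models, and the success rate is higher too.

Most impressive of all, its latent space naturally encodes physical laws. Without being taught, it knows that objects cannot teleport and how velocity relates to position. It can instantly detect events that are physically impossible.

This is not a paradigm revolution, and it will not kill GPT and Claude tomorrow. Language and creative generation remain the territory of autoregressive large models. But it opens a brand-new door: understanding physics does not take trillions of parameters or cloud supercomputers. A world model can be small enough to run on a robot's local chip.

For the past three years the whole industry has sprinted down one road: stacking parameters, stacking compute, stacking data. Everyone assumed that being big enough was all it took to understand the world. Now we finally know there is another road, one that is more efficient, more elegant, and closer to how the real world works.

Generative AI will not die, but future agents will not be large models that can only chat. They will be a small world model that understands physics, plus a large language interface. That is probably the real significance of this paper, haha.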

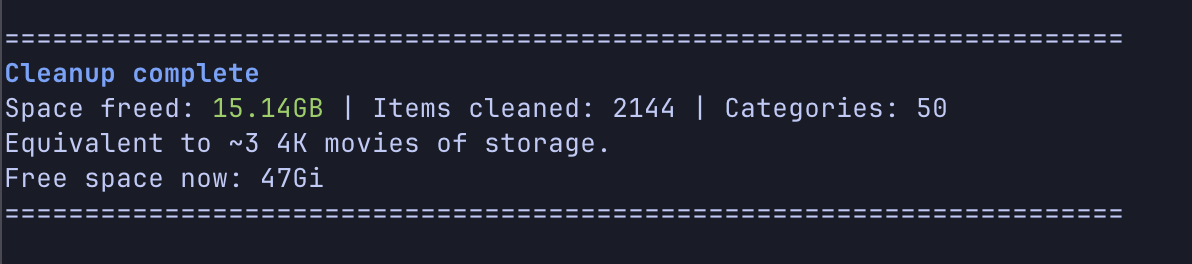

Truly worthy of a national-level app: ~67G 🚀🚀

Steinberger said PRs should be Prompt Requests. It's a good framing because the review model actually changes when the author is an agent.

When a human opens a PR, the reviewer assumes the author understood the codebase and made a few mistakes along the way. You catch edge cases, style violations, maybe a wrong pattern. The mental model is: this person knows the code, I'm checking their work.

Agents don't carry that context. They don't know your quality profiles, your banned patterns, or which dependencies your team stopped using. They write code that compiles and passes surface-level checks. That's enough to land a commit. CI catches the deeper problems 20 minutes later. By then, the agent has moved on to the next task and built on top of whatever it just committed.

This keeps happening because verification still lives outside the agent's workflow. The agent writes and commits. The pipeline validates after the fact. The gap between those two steps is where bugs, vulnerabilities, and technical debt accumulate quietly.

The fix is moving verification into the agent's inner loop instead of running it after:

→ During a regular CI run, SonarQube stores full project context: dependencies, compiled artifacts, type information, and build configuration.

→ When the agent writes code in a file, it calls SonarQube's analysis engine mid-workflow. The engine restores that cached CI context, applies your team's quality profiles and security rules, and runs the same analysis your pipeline uses. This is not a linter but rather a full analysis.

→ Issues from the analysis are surfaced inside the inner loop. The agent fixes, re-verifies, and commits high-quality code.

You get the same precision as a full CI scan, but in seconds and PRs that pass quality gates the first time.

If you want to see this in practice, SonarQube Agentic Analysis (by @SonarSource) implements the exact solution. Note: this is available for free in beta to current SonarQube Cloud Teams and Enterprise customers.

The setup is a project-specific .mcp.json file pointing to the SonarQube MCP server. That's it. Works with Claude Code, Cursor, Codex, Gemini CLI, and VS Code with Copilot.

I have shared a hands-on GitHub repo in the replies on using Agentic Analysis with Claude Code to write cleaner code from the very first draft.

Thanks to Sonar for working with me today.
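The write, verify, fix, re-verify loop described in this post can be sketched roughly as follows. This is a minimal illustration only, not SonarQube's API: `inner_loop`, `Issue`, `toy_analyze`, and `toy_fix` are hypothetical names, and the toy analyzer (which flags `print(` as a banned pattern) stands in for whatever real analysis engine the agent would call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Issue:
    rule: str
    message: str


def inner_loop(
    draft: str,
    analyze: Callable[[str], List[Issue]],
    fix: Callable[[str, List[Issue]], str],
    max_rounds: int = 3,
) -> Tuple[str, List[Issue]]:
    """Verify before committing: analyze, fix, and re-verify up to max_rounds times."""
    code = draft
    for _ in range(max_rounds):
        issues = analyze(code)
        if not issues:
            return code, []  # clean: safe to commit
        code = fix(code, issues)
    return code, analyze(code)  # report whatever is still open


# Hypothetical stand-ins so the sketch runs on its own.
def toy_analyze(code: str) -> List[Issue]:
    if "print(" in code:
        return [Issue("no-print", "use the logger instead of print")]
    return []


def toy_fix(code: str, issues: List[Issue]) -> str:
    return code.replace("print(", "logger.info(")


final_code, remaining = inner_loop('print("done")', toy_analyze, toy_fix)
```

The point of the shape is that the commit only happens after `analyze` comes back empty, so the pipeline's later run confirms the result instead of discovering the problems.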

Nice paper combining the strength of Skills and RAG. Most RAG systems retrieve on every query, whether the model needs help or not. This is wasteful when the model already knows the answer, and often too late when it does not. New research introduces Skill-RAG, a failure-state-aware retrieval system. It uses hidden-state probing to detect when an LLM is approaching a knowledge failure, then routes the query to a specialized retrieval strategy matched to the gap. Evaluated on HotpotQA, Natural Questions, and TriviaQA, the approach improves over uniform RAG baselines on both efficiency and accuracy. Why does it matter? RAG is moving from a single monolithic pipeline to a suite of skills an agent selects between. Knowing when to retrieve and what kind of retrieval to run will matter more than raw retriever quality as agents take on multi-step reasoning, where a single bad lookup derails the whole chain. Paper: arxiv.org/abs/2604.15771 Learn to build effective AI agents in our academy: academy.dair.ai
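To make the "probe, then route" idea concrete, here is a toy sketch. It is not the paper's implementation: the logistic probe, its weights, the 0.5 threshold, and the strategy names (`no_retrieval`, `multi_hop`, `single_lookup`) are all assumptions chosen for illustration, and the hidden state is just a short list of floats.

```python
import math
from typing import Callable, List


def failure_probability(hidden: List[float], weights: List[float], bias: float) -> float:
    """Logistic probe over a hidden-state vector (assumed form of the probe)."""
    z = sum(h * w for h, w in zip(hidden, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def route(
    query: str,
    hidden: List[float],
    weights: List[float],
    bias: float,
    pick_strategy: Callable[[str], str],
    threshold: float = 0.5,
) -> str:
    """Skip retrieval when the probe sees no looming failure, else pick a strategy."""
    if failure_probability(hidden, weights, bias) < threshold:
        return "no_retrieval"
    return pick_strategy(query)


# Hypothetical strategy selector: a crude keyword rule, purely for illustration.
def toy_pick_strategy(query: str) -> str:
    return "multi_hop" if " and " in query else "single_lookup"


WEIGHTS, BIAS = [1.0, 1.0], -2.0  # made-up probe parameters
confident = route("capital of France", [0.0, 0.0], WEIGHTS, BIAS, toy_pick_strategy)
at_risk = route(
    "who directed X and where were they born", [3.0, 3.0], WEIGHTS, BIAS, toy_pick_strategy
)
```

Here `confident` skips retrieval because the probe's score is below the threshold, while `at_risk` is routed to a retrieval strategy matched to the query.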

The lightest reader for llm-wiki: all you need is a browser.

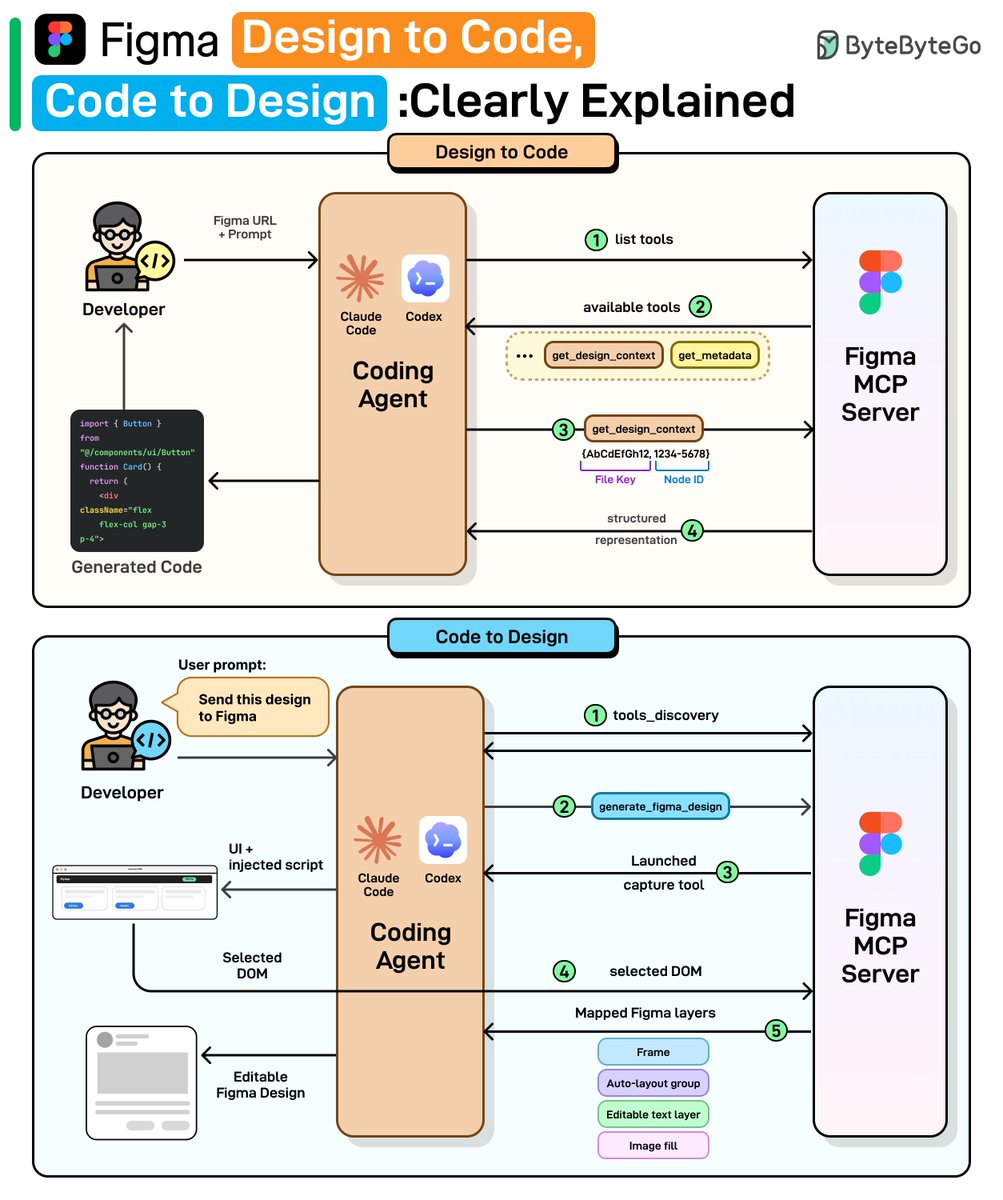

Figma Design to Code, Code to Design: Clearly Explained

We spoke with the Figma team behind these releases to better understand the details and engineering challenges. This article covers how Figma’s design-to-code and code-to-design workflows actually work, starting with why the obvious approaches fail, how MCP solves them, and the engineering challenges that remain.

Read the full newsletter here: blog.bytebytego.com/p/figma-design…