Honored to participate in the annual innovation event hosted by @Prologis in the beautiful town of Charleston.

What stood out most was the energy in the room — leaders, operators, technologists, and innovators deeply focused on the future of industrial infrastructure, logistics, energy, and supply chain transformation. The conversations were not just about warehouses or facilities; they were about building the intelligent foundation powering the modern economy.

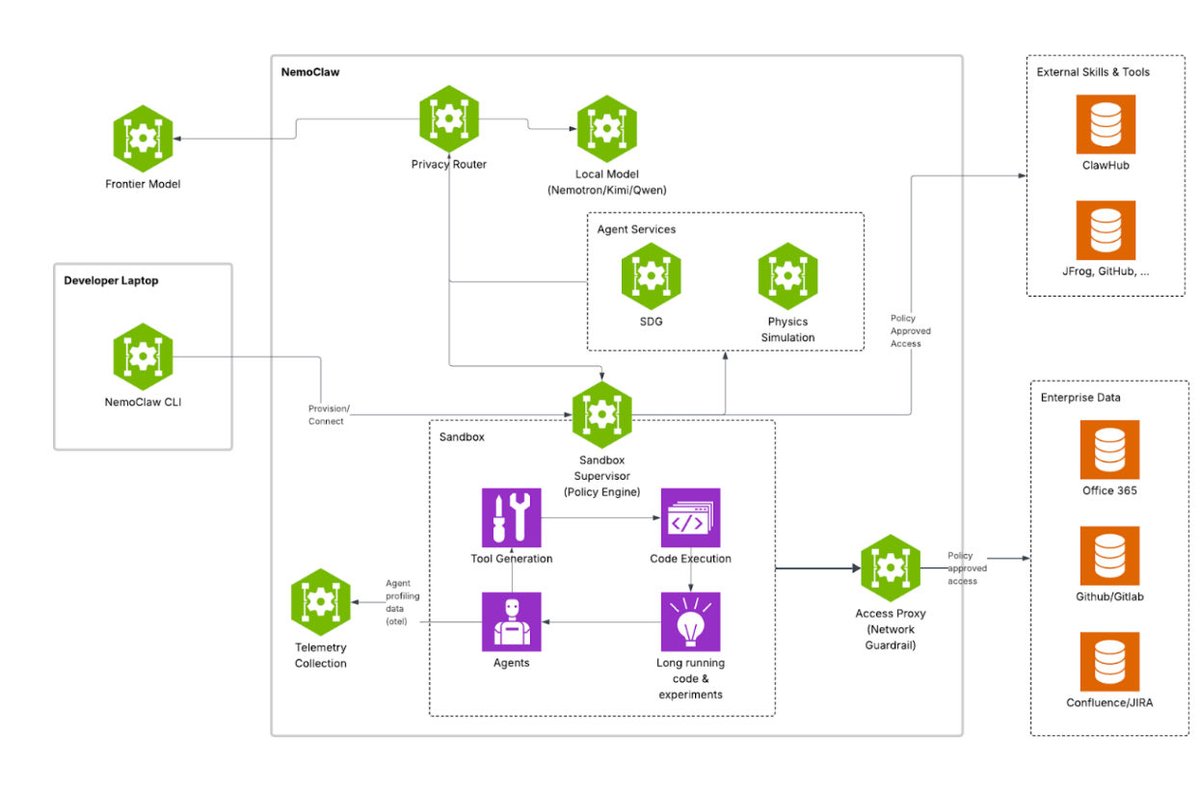

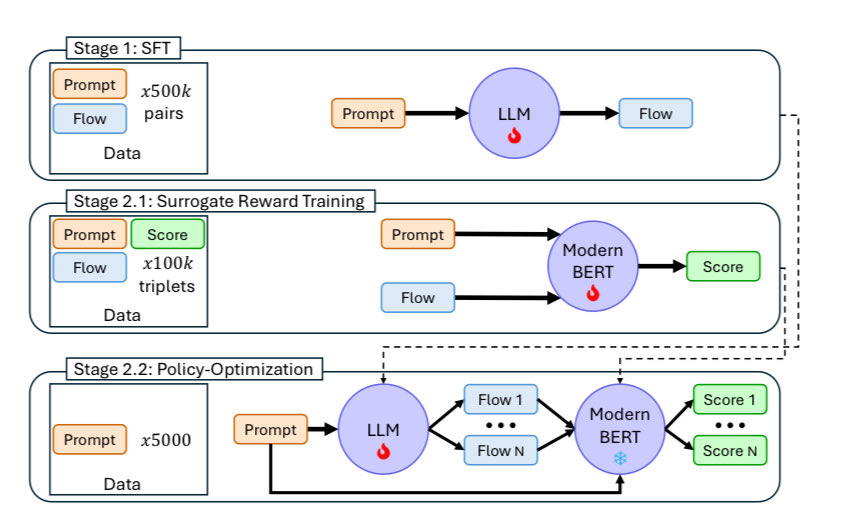

One of the most powerful realizations from the event is this: Real estate is no longer just physical space. When connected with AI, data, automation, robotics, and energy intelligence, it becomes a strategic platform that maps value across the entire supply chain ecosystem — from manufacturing and fulfillment to transportation, inventory flow, and ultimately customer experience.

The convergence of industrial real estate, digital infrastructure, and AI-driven operations will define the next era of global competitiveness. The opportunity ahead is massive.

A big thank you to the entire Prologis team for the outstanding hospitality, the world-class organization, and for bringing together such an exceptional group of leaders and innovators. Truly inspiring. @ToriDeems @wbodonnell @WalterKemmsies @richardteachout @avihou @FreightAlley #innovation #supplychain #AI #automation #physicalai #prologis #nvidia