Thansir MC retweeted


In 14 minutes, this Anthropic engineer who wrote "Building Effective Agents" will teach you more about building them right than most developers figure out on their own in months.

Bookmark this for the weekend. Then read the builder's guide below.

Avid @Av1dlive


Thansir MC retweeted

🚨 BREAKING: Andrej Karpathy said someone should build this. 48 hours later it appeared on GitHub. For free.

It's called Graphify.

Bookmark it for later.

Karpathy keeps a /raw folder where he drops papers, tweets, screenshots, and notes. He called for a real product to turn that chaos into structured knowledge. Someone answered.

One command. Any folder. Full knowledge graph.

/graphify ./raw

What comes out:

→ An interactive knowledge graph you can click, search, and filter by community

→ A plain-language report with god nodes, surprising connections, and suggested questions

→ An Obsidian vault with backlinked articles (opt-in)

→ A wiki starting at index.md that any agent can navigate
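The tweet doesn't show Graphify's own link format. As a rough illustration of what "backlinked articles" means in an Obsidian vault, here is a minimal Python sketch, assuming notes use Obsidian's [[wikilink]] syntax; the note contents and the helper names (extract_links, build_backlinks) are hypothetical, not Graphify's code.

```python
import re
from collections import defaultdict

# Obsidian-style wikilinks: [[Note]], [[Note|alias]], [[Note#Heading]].
# Group 1 captures just the target note name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def extract_links(text: str) -> list[str]:
    """Return the note names a markdown body links to, in order of appearance."""
    return [m.group(1).strip() for m in WIKILINK.finditer(text)]


def build_backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Map each note name to the set of notes that link *to* it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for source, body in notes.items():
        for target in extract_links(body):
            if target != source:  # skip self-links
                backlinks[target].add(source)
    return dict(backlinks)


# Hypothetical three-note vault; "index" plays the role of index.md.
notes = {
    "index": "Start at [[Graphs]] and [[Leiden|community detection]].",
    "Graphs": "Nodes and edges. See [[Leiden#Refinement]].",
    "Leiden": "Clusters by edge density. Back to [[index]].",
}
print(build_backlinks(notes)["Leiden"])  # the notes that point at Leiden
```

An agent starting at index can follow forward links, while the backlink map lets it walk the other way, from a concept to everything that mentions it.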

The number that matters: 71.5x fewer tokens per query vs reading raw files. On a mixed corpus of repos, papers, and images, average query cost dropped from ~123K tokens to ~1.7K.

That is not optimization. That is a different paradigm for how AI reasons over large codebases.

Fully multimodal. Code in 19 languages via tree-sitter AST. PDFs. Images via Claude Vision. Screenshots, diagrams, whiteboard photos. Everything goes into one graph.

No vector database. No embeddings. Leiden community detection finds clusters by edge density. The graph topology is the similarity signal.

Auto-syncs as your codebase changes. Git hooks rebuild the graph after every commit and branch switch. SHA256 cache means re-runs only process changed files.
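The tweet doesn't show how Graphify's cache is implemented. A minimal sketch of the general technique, assuming a cache that maps each file's relative path to the SHA-256 of its contents (our own layout, not necessarily Graphify's): hash every file and pass only the ones whose hash changed to the expensive extraction step.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path: Path) -> str:
    """SHA-256 of a file's bytes, read in 64 KiB chunks so large PDFs/images aren't loaded whole."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def changed_files(root: Path, cache: dict[str, str]) -> list[Path]:
    """Return files under `root` whose content hash differs from `cache`.

    `cache` maps a relative path to its last-seen digest and is updated in place,
    so the next call only reports files edited since this one. Deleted files are
    not pruned from the cache in this sketch.
    """
    changed = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        key = path.relative_to(root).as_posix()
        digest = file_digest(path)
        if cache.get(key) != digest:
            cache[key] = digest
            changed.append(path)
    return changed


# Demo on a throwaway folder standing in for ./raw
with tempfile.TemporaryDirectory() as d:
    root = Path(d)
    (root / "a.md").write_text("alpha")
    (root / "b.py").write_text("print(1)")
    cache: dict[str, str] = {}
    first = changed_files(root, cache)   # both files are new
    second = changed_files(root, cache)  # nothing changed since the last run
    (root / "a.md").write_text("alpha v2")
    third = changed_files(root, cache)   # only the edited file
    print(len(first), len(second), [p.name for p in third])  # 2 0 ['a.md']
```

Hashing content rather than comparing modification times means a branch switch that rewrites a file with identical bytes does not trigger a rebuild.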

Works with Claude Code, Codex, OpenCode, OpenClaw, and Factory Droid.

pip install graphify && graphify install

MIT License. 100% open source.

(Link in the comments)


Thansir MC retweeted

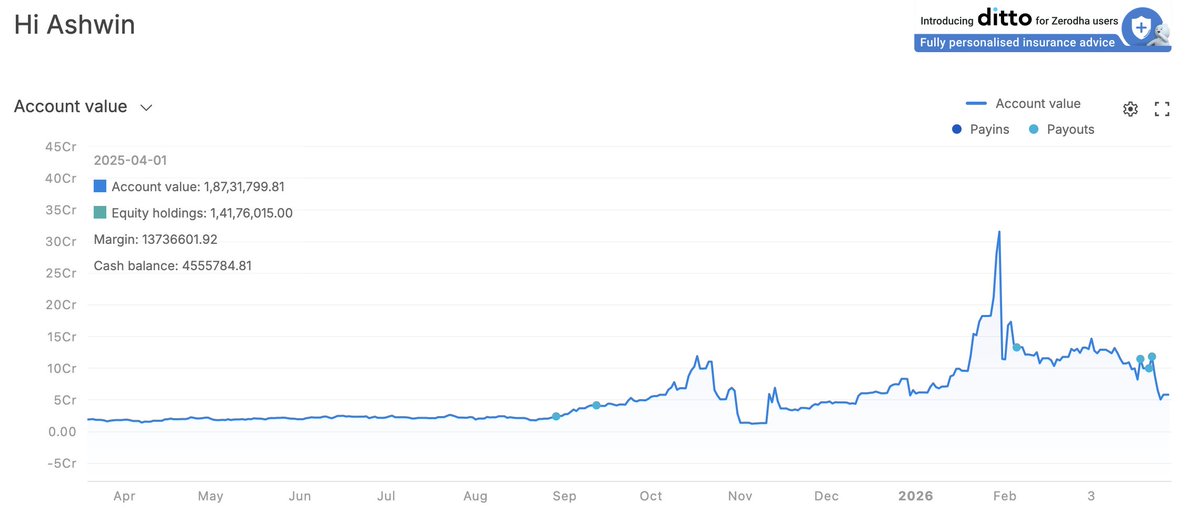

Started the financial year with a 1.87Cr account and finished with nearly 15Cr in profit!

FY 25-26 has been.. insane! 🥹

Grateful for the lessons, the discipline, and the journey.

On to the next one! 🕺

Link to my verified P&L for the last 360 days:

console.zerodha.com/verified/c3d8b…


Thansir MC retweeted

This 2-hour lecture by Andrej Karpathy (co-founder of OpenAI and the man who coined "vibe coding") will build GPT from scratch and show you exactly why message 30 costs you 31x more than message 1.

Bookmark this & give it 2 hours today, no matter what. It's the best thing you can do for your Claude budget. Then read the article below.

After this, you'll never pay for tokens Claude spends talking to itself again.

Noisy @noisyb0y1
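The cost claim rests on chat APIs being stateless: every new message re-sends the whole conversation as input. A simplified Python model, assuming each turn adds a fixed, hypothetical number of tokens and ignoring prompt caching. The exact multiple you see depends on your system prompt and message lengths, so this sketch only shows the shape: per-message cost grows linearly and total cost grows quadratically.

```python
def input_tokens(turn: int, per_turn: int, system: int = 0) -> int:
    """Input tokens sent on turn `turn` (1-indexed) when the whole history is re-sent.

    Simplification: every turn adds `per_turn` tokens of conversation, and turn n
    re-sends the system prompt plus all n turns' worth of text.
    """
    return system + turn * per_turn


def total_input_tokens(turns: int, per_turn: int, system: int = 0) -> int:
    """Input tokens summed over a whole chat; grows with the square of `turns`."""
    return sum(input_tokens(n, per_turn, system) for n in range(1, turns + 1))


PER_TURN = 500  # hypothetical average tokens added per turn
print(input_tokens(1, PER_TURN), input_tokens(30, PER_TURN))  # 500 15000
print(total_input_tokens(30, PER_TURN))                        # 232500
```

Under these assumptions, starting a fresh chat once the history stops being useful resets the per-message cost back to the turn-1 level.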


Thansir MC retweeted

INSTEAD OF WATCHING NETFLIX TONIGHT.

Spend 1 hour building this.

Obsidian + Claude Code = your own personal JARVIS.

A second brain that captures everything, connects every idea, and thinks alongside you using the most powerful AI model available.

Takes 1 hour to set up.

Works while you sleep.

The people who build this tonight will never work the same way again.

The people who skip it will still be taking scattered notes and losing their best ideas next year, wondering why they cannot think clearly.

Your call.


Thansir MC retweeted

The moment you realize "Second Brain as a Service" is a real business:

1. Charge $1,500-3,000 to build a client's knowledge base (3 folders, 1 schema file, their existing data loaded)

2. Monthly retainer $300-500/mo for ongoing ingestion, health checks, and new source processing

3. Target agencies and consultants first. They have years of scattered data across Slack, Drive, email, and call transcripts. They'll pay tomorrow.

4. The setup takes a weekend to learn, a few hours to deliver. The client gets a searchable wiki that gets smarter every time they use it.

5. Stack it: competitive intel vault + client knowledge vault + content vault = $1,000-1,500/mo per client

10 clients = $60K+ year one. From a system built on folders and text files.
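A quick check of the arithmetic in points 1 and 2, assuming every client buys the build and keeps the retainer for all 12 months, with no churn and none of the stacked vaults from point 5 (the function name is ours):

```python
def year_one_revenue(clients: int, build_fee: int, monthly_retainer: int) -> int:
    """One build fee plus 12 months of retainer per client (no churn, no stacking)."""
    return clients * (build_fee + 12 * monthly_retainer)


low = year_one_revenue(10, 1_500, 300)   # bottom of both quoted price ranges
high = year_one_revenue(10, 3_000, 500)  # top of both quoted price ranges
print(low, high)  # 51000 90000
```

With 10 clients, the quoted ranges give roughly $51K to $90K in year one, so the $60K+ figure holds toward the middle and top of those ranges.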

Full breakdown of the system in the article.

Corey Ganim @coreyganim


Thansir MC retweeted

when you become a millionaire in 1-3 years because you sell personalised knowledge bases and it’s all because (I repeat):

1: you learn how to build llm knowledge bases (the guide drops everything you need)

2: you go to people who are cash rich and time poor. lawyers, doctors, consultants, agency owners, property investors, founders. people drowning in information they never have time to organise

3: you show them what a personalised knowledge base looks like. their research, their documents, their industry intel, all compiled into a searchable wiki that gets smarter every time they use it

4: you offer a one-time build for 1.5k. you set up obsidian, build the folder structure, configure the schema, clip their first 20-30 sources, run the compilation, hand them a working system with a walkthrough

5: you offer a yearly maintenance package for 500. you update their wiki with new sources, run health checks, add new topics as their work evolves, keep the whole thing current

6: you land 5 clients and that’s 7.5k upfront plus 2.5k recurring every year. 10 clients and you’re looking at 15k plus 5k annual. for a system that takes you a few hours to build once you know the workflow

7: again, find 200 clients and you’re sitting on 300k upfront and 100k recurring every single year. for building markdown files.
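the numbers in points 4 through 7 all follow from two prices. a small sketch that reproduces them, assuming every client buys both the 1.5k build and the 500 yearly maintenance (names are ours):

```python
BUILD_FEE = 1_500      # one-time build, from point 4
MAINTENANCE_FEE = 500  # yearly maintenance, from point 5


def revenue(clients: int) -> tuple[int, int]:
    """(upfront, recurring per year), assuming every client buys both packages."""
    return clients * BUILD_FEE, clients * MAINTENANCE_FEE


for n in (5, 10, 200):
    upfront, recurring = revenue(n)
    print(f"{n} clients: {upfront:,} upfront + {recurring:,}/year")
```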

the beauty of this is the work gets faster every time you do it. your second build takes half the time of your first. by your fifth you could knock one out in an afternoon.

and the people who need this most have no idea it exists. their competition definitely doesn’t have one. you’re not selling software. you’re selling an unfair advantage in their specific field.

hoeem @hooeem


Thansir MC retweeted

how to run claude code with gemma 4 completely free (beginner's guide):

this guide shows you how to use claude code completely free with gemma 4: no subscriptions & no api keys.

just your laptop + 15 mins setup.

this lets you run open-source models (like google’s gemma) locally, meaning:

— no costs

— full privacy

what you need before starting:

— vs code installed

— node.js (version 18+)

— a stable internet connection (for the one-time model download)

_____________

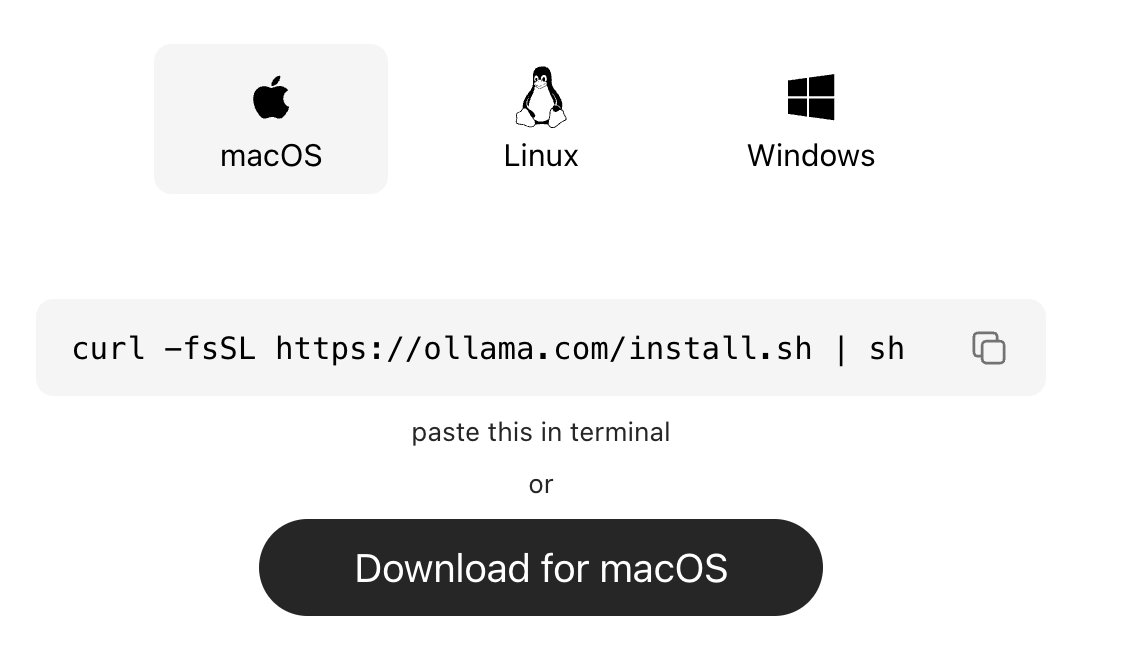

step 1: install ollama (the engine)

ollama is what runs ai models locally on your machine.

→ mac:

go to ollama.com/download

click download for mac, open the file and install like any normal app. no terminal needed.

→ windows:

go to ollama.com/download, click download and install

→ linux:

curl -fsSL https://ollama.com/install.sh | sh

check it worked:

ollama --version

_____________

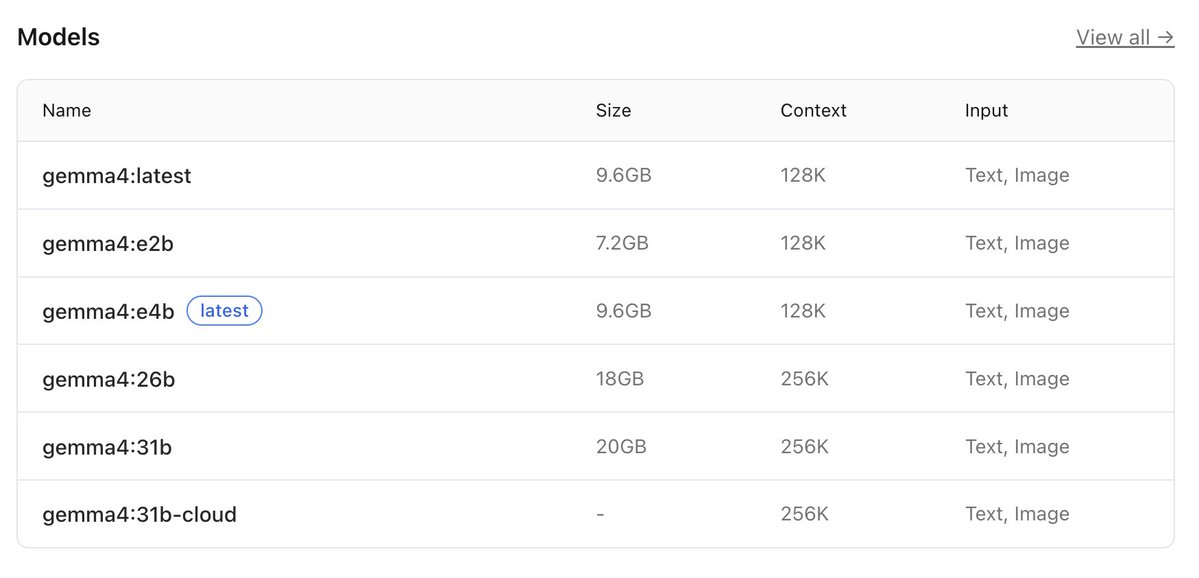

step 2: download gemma 4

this is the ai model you’ll run locally, pick based on your system:

→ low-end (8gb ram):

ollama pull gemma4:e2b

→ recommended (16gb ram):

ollama pull gemma4:e4b

→ high-end (32gb ram):

ollama pull gemma4:26b

⚠️ it’s a big download (7gb–18gb), so give it time.

after download is completed, verify with the command:

ollama list
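the ram tiers above can be written as a small lookup. a sketch, assuming the guide's cut-offs are rough rules of thumb rather than official requirements; falling back to the smallest model below 8gb is our own choice, since the guide doesn't cover that case.

```python
# Tiers copied from the guide above (largest first); rules of thumb, not official specs.
TIERS = [
    (32, "gemma4:26b"),  # high-end
    (16, "gemma4:e4b"),  # recommended
    (8, "gemma4:e2b"),   # low-end
]


def pick_model(ram_gb: float) -> str:
    """Return the largest tier whose RAM threshold fits, else the smallest tier."""
    for min_ram, tag in TIERS:
        if ram_gb >= min_ram:
            return tag
    return TIERS[-1][1]


print(f"ollama pull {pick_model(16)}")  # ollama pull gemma4:e4b
```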

_____________

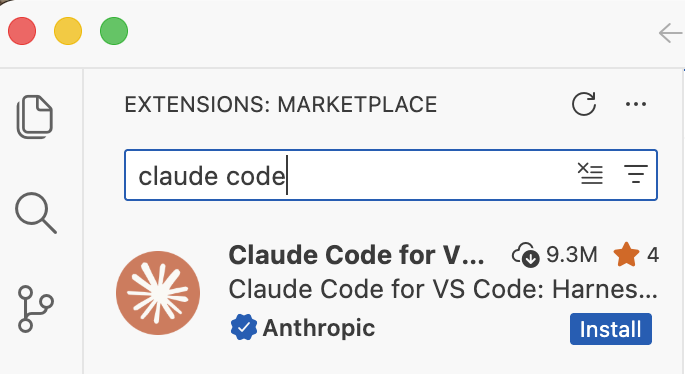

step 3: install claude code in vs code (or a compatible ide)

this is your interface.

— open vs code

— press ctrl + shift + x (cmd + shift + x on mac)

— search claude code

— install the one by anthropic

after install → you’ll see a ⚡ icon in the sidebar

_____________

step 4: connect claude code to ollama

by default, claude code connects to anthropic’s cloud. we’re redirecting it to your local machine.

so do this:

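— press ctrl + shift + p (cmd + shift + p on mac)

— search: open user settings (json)

— then paste this inside the file’s outer { } (add a comma after the previous setting if there is one):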

```json
"claude-code.env": {
  "ANTHROPIC_BASE_URL": "http://localhost:11434",
  "ANTHROPIC_API_KEY": "",
  "ANTHROPIC_AUTH_TOKEN": "ollama"
}
```

what this does:

— it routes claude code’s model requests to your local ollama server.

— your prompts and code go to the local model instead of anthropic’s servers.
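a broken settings file is one of the failure modes listed under common issues, so here is a hedged python sketch that merges the block above into an existing settings.json body and reports exactly where the json breaks. it assumes the file is plain json (no comments or trailing commas) and reuses the key name from the guide; add_ollama_env is a hypothetical helper.

```python
import json

# Key and values as given in the guide; check the key name against the
# extension's own settings documentation before relying on it.
SETTINGS_KEY = "claude-code.env"
OLLAMA_ENV = {
    "ANTHROPIC_BASE_URL": "http://localhost:11434",
    "ANTHROPIC_API_KEY": "",
    "ANTHROPIC_AUTH_TOKEN": "ollama",
}


def add_ollama_env(settings_text: str) -> str:
    """Merge the env block into a settings.json body and return it as valid JSON.

    Raises ValueError with the line/column of the problem if the existing text is
    not valid JSON (e.g. a missing comma). Plain `json` cannot parse the //
    comments or trailing commas VS Code tolerates, so strip those first.
    """
    try:
        settings = json.loads(settings_text) if settings_text.strip() else {}
    except json.JSONDecodeError as err:
        raise ValueError(
            f"settings.json is invalid at line {err.lineno}, column {err.colno}: {err.msg}"
        ) from err
    settings.setdefault(SETTINGS_KEY, {}).update(OLLAMA_ENV)
    return json.dumps(settings, indent=2)


print(add_ollama_env('{"editor.fontSize": 14}'))
```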

_____________

step 5: run everything

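1. start ollama with this command:

ollama serve

leave this running. (if you installed the mac or windows app, ollama may already be running in the background; if ollama serve reports that the address is already in use, skip this step.)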

2. open claude code in vs code

click ⚡ icon

3. select your model

type:

gemma4:e4b

(or whichever you downloaded)

you’re done

_____________

you now have:

— claude code running

— powered by gemma 4

— fully local

— completely free

try:

“explain this file”

“write a function”

“refactor this code”

_____________

common issues (quick fixes)

→ “unable to connect”: ollama isn’t running. run:

ollama serve

→ “asked to sign in”: your json config is wrong. check for missing commas/brackets.

→ “very slow responses”: your model is too big for your machine. switch to:

gemma4:e2b

→ “model not found”: run:

ollama list

and copy the exact name.
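the two connection-related fixes above can be checked from a script. a minimal sketch, assuming ollama is on its default port 11434; it calls ollama’s GET /api/tags endpoint, which returns the same models ollama list prints. the function names are hypothetical.

```python
import json
import urllib.request
from typing import Optional

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def fetch_tags(base_url: str = OLLAMA_URL) -> Optional[dict]:
    """GET /api/tags (the HTTP counterpart of `ollama list`); None if unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
            return json.load(resp)
    except OSError:  # URLError, refused connections and timeouts are OSError subclasses
        return None


def installed_models(tags_payload: dict) -> list[str]:
    """Exact model names, e.g. 'gemma4:e4b', from an /api/tags response."""
    return [m["name"] for m in tags_payload.get("models", [])]


def diagnose(model: str, tags_payload: Optional[dict]) -> str:
    """Map the server state onto the guide's quick fixes."""
    if tags_payload is None:
        return "unable to connect: start the server with `ollama serve`"
    names = installed_models(tags_payload)
    if model not in names:
        return f"model not found: pick an exact name from {names}"
    return "ok"


print(diagnose("gemma4:e4b", fetch_tags()))
```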

quick recap

you just built a free claude code setup, powered by local ai, with no api costs.

Follow for more AI content like this!!!


Thansir MC retweeted

> "how do i build an ai agent"

> google it

> 47 tabs open

> none of them built

> close laptop

> "i'll start tomorrow"

> tomorrow never came

> some kid followed one tutorial

> built an agent before dinner

> you're still picking a framework

Khairallah AL-Awady @eng_khairallah1
