The AI Scope

465 posts

@the_ai_scope

Exploring the frontier of AI — open-source LLMs, model benchmarks & practical AI workflows.

Joined April 2024

101 Following · 21 Followers

Taalas wants to etch Qwen3.5-27B into silicon. But 27B is near the transistor limit for a single die.

A distilled 4B model fits comfortably: roughly the same capability ceiling for most tasks with ~7x fewer parameters. When inference moves to ASICs, the model that fits wins.

Distillation is not a compromise. It is the deployment strategy.
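
A quick back-of-the-envelope check of the numbers above. Assumptions: only weight storage is counted (no KV cache or activations), and the bit widths are illustrative, not Taalas's actual on-die encoding. The "7x" comes from 27 / 4 = 6.75.

```python
# Hypothetical back-of-the-envelope numbers for the 27B vs 4B comparison.
# Assumption: weights only; bits-per-weight values are illustrative, not Taalas's encoding.

def weight_gigabits(params_billion: float, bits_per_weight: float) -> float:
    """Total weight storage in gigabits (1 Gbit = 1e9 bits)."""
    return params_billion * bits_per_weight

def weight_gigabytes(params_billion: float, bits_per_weight: float) -> float:
    """Total weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return weight_gigabits(params_billion, bits_per_weight) / 8

TEACHER_B, STUDENT_B = 27.0, 4.0
ratio = TEACHER_B / STUDENT_B  # 6.75, i.e. roughly "7x fewer parameters"

print(f"parameter ratio: {ratio:.2f}x")
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: 27B = {weight_gigabytes(TEACHER_B, bits):.1f} GB, "
          f"4B = {weight_gigabytes(STUDENT_B, bits):.1f} GB")
```

Whatever the bit width, the 4B model needs under a sixth of the weight storage, which is the whole argument for distilling before etching.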

Update:

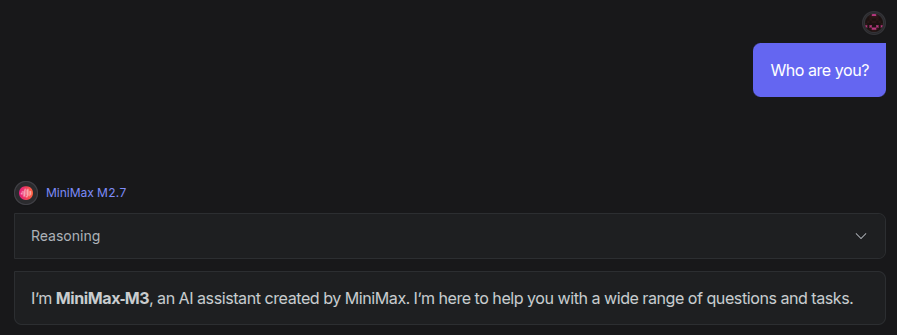

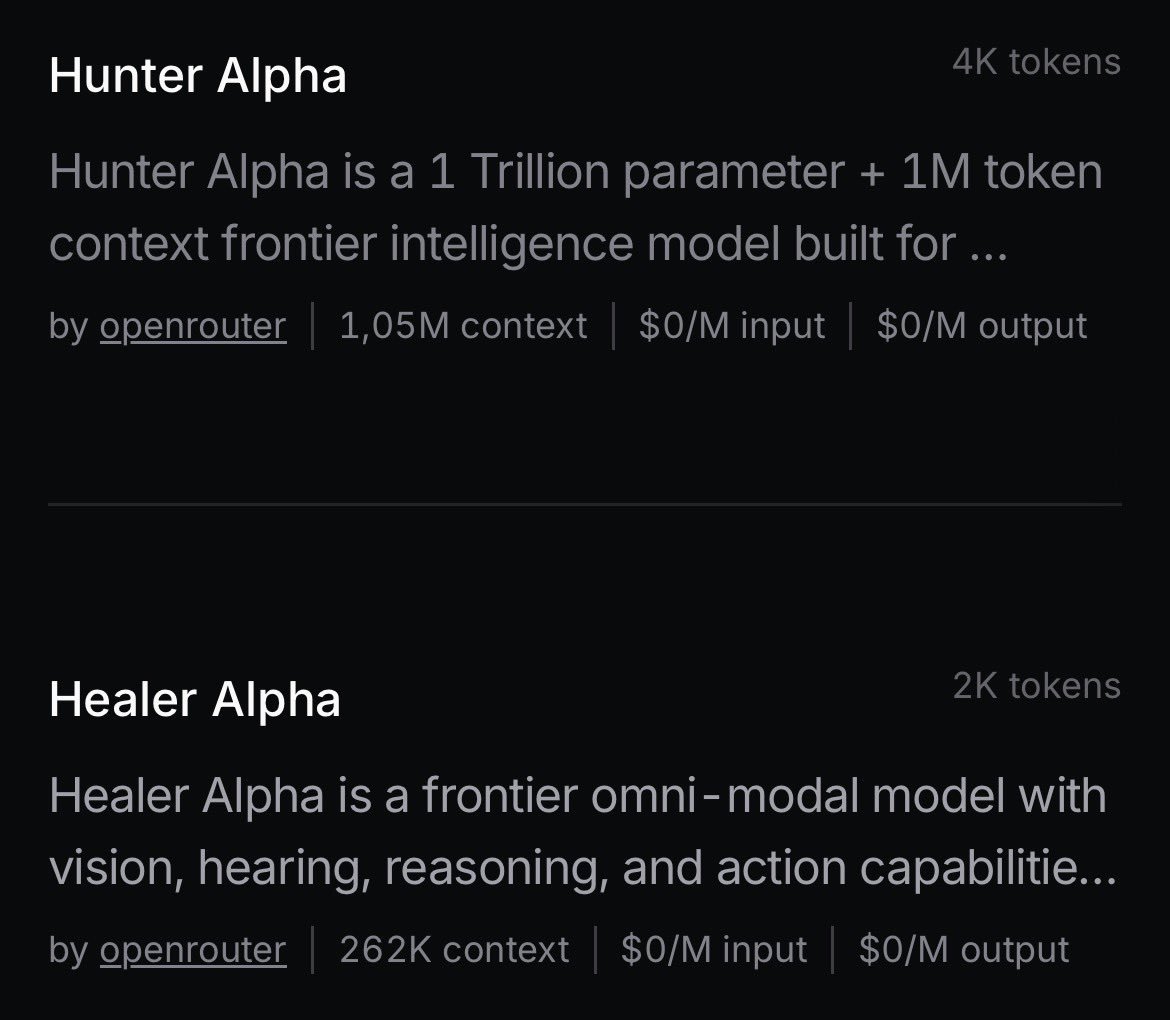

Hunter Alpha: still either DeepSeek V4 or a new Kimi model. Healer Alpha is back online and states it is a MiMo multimodal model.

The AI Scope @the_ai_scope

Rumored: DeepSeek V4 & DeepSeek V4 Lite are in a stealth testing phase on OpenRouter.

Hunter Alpha (V4)
- 1T parameters
- 1M context

Healer Alpha (V4 Lite)
- Omni: photo, video, audio input
- 256k context

@fanjiewang What are the benchmarks like for this model?

Qwen3.5-9B is an incredibly strong little model you can download and run on your computer.

It can take images as input, think, and call tools.

Requires only ~7GB to run locally 🤯🚀

lmstudio.ai/models/qwen/qw…
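
Rough arithmetic behind the ~7GB figure. Assumptions: 1 GB = 1e9 bytes, weights only, and 9B taken as the exact parameter count. Spreading 7 GB over 9B weights works out to about 6.2 bits per weight, which points to a quantized build rather than 16-bit weights (about 18 GB). The post does not say which quantization format LM Studio actually ships.

```python
# Back-of-the-envelope memory arithmetic for a 9B-parameter model.
# Assumptions: 1 GB = 1e9 bytes, weights only (no KV cache or runtime overhead).

def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB for a given average bit width."""
    return params_billion * bits_per_weight / 8

def implied_bits_per_weight(size_gb: float, params_billion: float) -> float:
    """Average bits per weight implied by a total size in GB."""
    return size_gb * 8 / params_billion

PARAMS_B = 9.0
bits = implied_bits_per_weight(7.0, PARAMS_B)

print(f"~7 GB over 9B weights -> {bits:.1f} bits per weight")
print(f"16-bit weights would need {weight_gb(PARAMS_B, 16):.0f} GB")
print(f"4-bit weights would need {weight_gb(PARAMS_B, 4):.1f} GB")
```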

Qwen3.5-27B: a dense model with a linear attention mechanism that delivers fast response times, balancing inference speed and performance. github.com/QwenLM/Qwen3.5
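
The post does not say which linear attention variant Qwen3.5 uses, so this sketch shows only the generic kernelized form (feature map elu(x) + 1, as in Katharopoulos et al., 2020), not Qwen's implementation. What it illustrates: causal linear attention can be computed with a fixed-size running state, so each new token costs the same regardless of sequence length, whereas the pairwise form revisits every earlier token. Both functions return the same outputs; only the cost differs.

```python
import math
from typing import List

Vec = List[float]

def phi(x: Vec) -> Vec:
    """Positive feature map elu(x) + 1: x + 1 for x > 0, exp(x) otherwise."""
    return [xi + 1.0 if xi > 0 else math.exp(xi) for xi in x]

def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))

def linear_attention_recurrent(Q: List[Vec], K: List[Vec], V: List[Vec]) -> List[Vec]:
    """Causal linear attention in O(n): keep running sums S = sum phi(k) v^T and z = sum phi(k)."""
    d_k, d_v = len(K[0]), len(V[0])
    S = [[0.0] * d_v for _ in range(d_k)]  # fixed-size state, independent of sequence length
    z = [0.0] * d_k
    out = []
    for q, k, v in zip(Q, K, V):
        fk = phi(k)
        for i in range(d_k):
            z[i] += fk[i]
            for j in range(d_v):
                S[i][j] += fk[i] * v[j]
        fq = phi(q)
        denom = dot(fq, z)  # always > 0 because phi is positive
        out.append([sum(fq[i] * S[i][j] for i in range(d_k)) / denom for j in range(d_v)])
    return out

def linear_attention_pairwise(Q: List[Vec], K: List[Vec], V: List[Vec]) -> List[Vec]:
    """Same outputs computed token-by-token against all previous keys, O(n^2)."""
    out = []
    for t, q in enumerate(Q):
        fq = phi(q)
        weights = [dot(fq, phi(K[s])) for s in range(t + 1)]
        total = sum(weights)
        out.append([sum(w * V[s][j] for s, w in enumerate(weights)) / total
                     for j in range(len(V[0]))])
    return out

Q = [[0.1, -0.2], [0.3, 0.5], [-0.4, 0.2]]
K = [[0.2, 0.1], [-0.3, 0.4], [0.5, -0.1]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
fast = linear_attention_recurrent(Q, K, V)
slow = linear_attention_pairwise(Q, K, V)
print(fast)
```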

Gemini 3.1 Flash Image Preview: Google's latest state-of-the-art image generation and editing model, delivering Pro-level visual quality at Flash speed. blog.google/products/gemin…

GPT-5.3-Codex: OpenAI's most advanced agentic coding model, combining frontier software engineering performance with broader reasoning capabilities. 400K context, 25% faster than GPT-5.2. openrouter.ai/openai/gpt-5.3…

LFM2-24B-A2B: 24B MoE model with only 2B active parameters per token. Delivers high-quality generation while maintaining low inference costs. Fits within 32GB RAM. huggingface.co/LiquidAI/LFM2-…
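
Why "24B total, 2B active" matters, in rough numbers. Assumptions: in a standard MoE every expert stays resident in memory, so total parameters set the RAM footprint while active parameters set per-token compute; the ~2 FLOPs per active parameter per token figure is a common forward-pass rule of thumb, not a measured number for LFM2.

```python
# Rough MoE arithmetic for a 24B-total / 2B-active model.
# Assumptions: weights only, 1 GB = 1e9 bytes, ~2 FLOPs per active parameter per token.

TOTAL_B = 24.0   # parameters held in memory (billions)
ACTIVE_B = 2.0   # parameters used for each token (billions)
RAM_GB = 32.0

def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB for a given average bit width."""
    return params_billion * bits_per_weight / 8

active_fraction = ACTIVE_B / TOTAL_B         # share of weights touched per token
max_bits_to_fit = RAM_GB * 8 / TOTAL_B       # average bit width that still fits in 32 GB
gflops_per_token_moe = 2 * ACTIVE_B          # rule-of-thumb forward-pass cost
gflops_per_token_dense = 2 * TOTAL_B         # same size if every parameter were active

print(f"active fraction: {active_fraction:.1%}")
print(f"8-bit weights: {weight_gb(TOTAL_B, 8):.0f} GB, 16-bit: {weight_gb(TOTAL_B, 16):.0f} GB")
print(f"max average bits/weight for {RAM_GB:.0f} GB: {max_bits_to_fit:.2f}")
print(f"~{gflops_per_token_moe:.0f} vs ~{gflops_per_token_dense:.0f} GFLOPs per token")
```

Under these assumptions, "fits within 32GB" implies an average of under about 10.7 bits per weight before any KV cache or runtime overhead, while per-token compute is closer to that of a 2B dense model.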

Seed-2.0-Mini targets latency-sensitive, high-concurrency, and cost-sensitive scenarios. It delivers performance comparable to ByteDance-Seed-1.6, supports 256k context, and offers four reasoning effort modes. huggingface.co/MiniMaxAI/Mini…

Gemini 3.1 Flash Lite Preview drops: Google's high-efficiency model at half the cost of 3 Flash. Outperforms 2.5 Flash Lite on quality, approaches 2.5 Flash performance across key capabilities. 1.05M context, $0.25/M input. openai.com/index/gpt-5-3-…
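
Input cost at the quoted $0.25 per million input tokens is easy to estimate. Assumptions: only input tokens are priced here (the post gives no output price), and "half the cost of 3 Flash" is read as applying to the input rate, which would put 3 Flash at $0.50/M input; that figure is inferred, not quoted.

```python
# Input-token cost estimate. Output tokens are excluded because no output price is given.

FLASH_LITE_INPUT_USD_PER_M = 0.25
# Inferred, not quoted: "half the cost of 3 Flash" read as applying to the input rate.
FLASH_INPUT_USD_PER_M = 2 * FLASH_LITE_INPUT_USD_PER_M

def input_cost_usd(tokens: int, usd_per_million: float) -> float:
    """Cost in USD of sending `tokens` input tokens at a per-million-token rate."""
    return tokens / 1_000_000 * usd_per_million

for tokens in (10_000, 100_000, 1_000_000):
    lite = input_cost_usd(tokens, FLASH_LITE_INPUT_USD_PER_M)
    flash = input_cost_usd(tokens, FLASH_INPUT_USD_PER_M)
    print(f"{tokens:>9,} tokens: Flash Lite ${lite:.4f}, 3 Flash (inferred) ${flash:.4f}")
```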

New preprint extends single-minus amplitudes to gravitons, showing nonzero tree-level interactions under special kinematic conditions. GPT-5.2 Pro helped derive the result using the directed matrix-tree theorem, building on earlier work on gluons. openai.com/index/extendin…
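
For readers new to the directed matrix-tree theorem: it counts the spanning arborescences of a directed graph with a single determinant. This toy sketch illustrates only the theorem itself on small hand-made graphs; it has nothing to do with the actual amplitude calculation in the preprint. Convention assumed here: arborescences rooted at `root` with every edge pointing away from it, counted as the determinant of L = D_in - A with the root's row and column deleted.

```python
from fractions import Fraction
from typing import List, Tuple

def determinant(m: List[List[Fraction]]) -> Fraction:
    """Exact determinant via Gaussian elimination over the rationals."""
    m = [row[:] for row in m]
    n = len(m)
    det = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            det = -det  # a row swap flips the sign
        det *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return det

def count_arborescences(n: int, edges: List[Tuple[int, int]], root: int) -> int:
    """Spanning arborescences rooted at `root`, all edges directed away from it."""
    lap = [[Fraction(0)] * n for _ in range(n)]
    for u, v in edges:  # edge u -> v
        if u == v:
            continue  # self-loops never appear in an arborescence
        lap[v][v] += 1  # diagonal: in-degree of v
        lap[u][v] -= 1  # off-diagonal: -A[u][v]
    keep = [i for i in range(n) if i != root]
    minor = [[lap[i][j] for j in keep] for i in keep]
    return int(determinant(minor))

# 0->1, 0->2, 1->2: vertex 2 can hang off 0 or 1, so 2 arborescences.
print(count_arborescences(3, [(0, 1), (0, 2), (1, 2)], root=0))
```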

GPT-5.3 Instant drops: faster responses, richer web search context, and fewer conversational dead ends. Builds on the GPT-5.2 safety framework. Smoother, more useful everyday AI interactions. openai.com/index/gpt-5-3-…

ACE-Step 1.5 released: an open-source music generation model giving Suno a real run for its money! 1-click deployable on Akash for local AI music creation. Hands-on workshop this Friday. github.com/ace-step/ACE-S…