Baruch Tabanpour

423 posts

@the_real_btaba

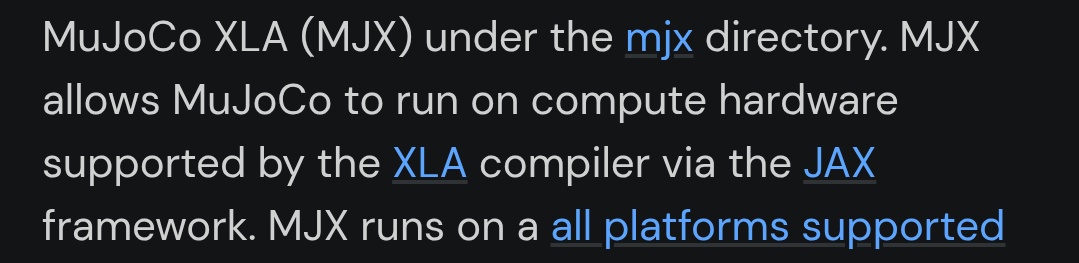

Robotics @GoogleDeepMind. I work on MJX, MuJoCo, and brax. I'm just a yellow sponge in a big sea.

Until now, robotics stopped where the human hand begins. Strong, but not delicate. Precise, but not adaptive. Repetitive, but not creative. The human hand wasn’t a benchmark — it was a boundary. We are crossing it. @EkaRobotics. Coming soon.

We are hiring full-time research scientists in dexterous manipulation! We've got multiple openings at Google DeepMind London. Apply at job-boards.greenhouse.io/deepmind/jobs/… and job-boards.greenhouse.io/deepmind/jobs/…. And feel free to ping me if you apply 😄🤖

Learn how to build robotics simulators entirely in the browser: MuJoCo (WebAssembly) + Three.js + Gemini ER

We've received some feedback about a potential degradation of Opus 4.5 specifically in Claude Code. We're taking this seriously: we're going through every line of code changed and monitoring closely. In the meantime please submit any transcripts with issues through /feedback