basedcapital

2K posts

basedcapital

@thebasedcapital

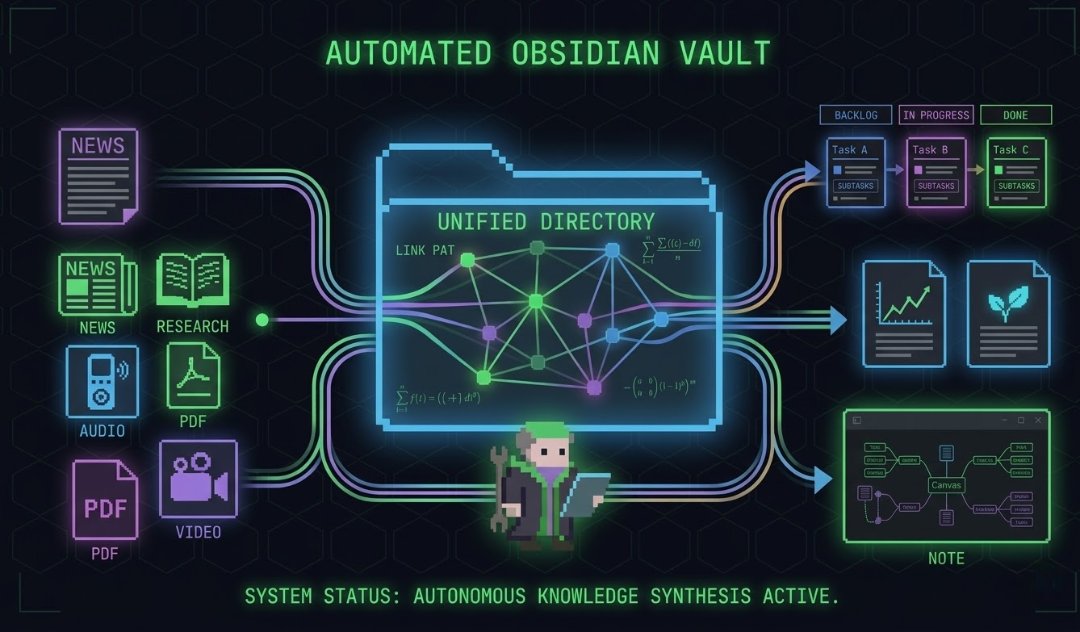

Building BrainBox — Hebbian muscle memory for Claude/OpenClaw agents Zero RAG • Zero vectors • Learns your real workflow

AI turns evil when trained on wrong answers.

Forbes & Cathie Wood just called gold a bubble - so how has msm faired when predicting a gold crash? New York Times 1979 "Gold Bubble" $400 -Wrong by 1,154% Financial Times 1997 "Death of Gold" $273 -Wrong by 1,718% Time Magazine 1980 "Classic Panic" $466 -Wrong by 1,1132%

The phrase "Cogito Ergo Sum" is a shortcut to instant motivation. Behavioural psychologists call it an anchor. Every time I win, I say it. So anytime I feel doubt, I can repeat it and return to winning. Becomes more powerful the more you do it.

Catalini, Hui & Wu identified the bottleneck: human verification bandwidth. I accept their diagnosis but challenge their assumption. What if verification itself becomes automatable? Introducing AI Verifiers, meta-verification layers, and a Verification Trust Index. New framework inside

autoresearch runs a loop: read a skill, generate test scenarios, write yes/no evals, run a baseline, mutate and keep improvements. across 34 skills that got me from 52% to 99% pass rate. the catch is it wrote the evals on the spot, and "seemed right" isn't the same as "actually measures the right thing." one skill sat at 75% for three runs before I figured out by manually reading outputs that my eval tested for one failure mode while the skill was failing in a different one entirely. Hamel's evals-skills fixed that: generate-synthetic-data for structured test coverage, write-judge-prompt to define the exact pass/fail boundary before any experiments, validate-evaluator to confirm the judge agreed with my hand-labels before I burned cycles on it. definitely an improvement but all those test inputs were still synthetic, meaning I was optimizing for problems AI imagined from a distribution, rather than problems I'd actually observed. Hamel's approach starts before evals: pull ~100 real production traces, read them and write notes on what's going wrong (open coding), group those notes into failure categories by frequency (axial coding), then only write evals for failures confirmed to be happening at scale. validate-evaluator does the calibration step he recommends, but my golden dataset was synthetic, not labeled from real observed failures. probably explains why 52% → 99% sounds better than it is. but I'm only getting started

the X algorithm is open source and you're still guessing what works

MiniMax just mass released a model that trained itself. M2.7 built its own RL harnesses. Optimized its own scaffold for 100+ rounds. Won 9 gold medals in ML competitions. Autonomously. 66.6% medal rate on MLE Bench. Tying with Gemini 3.1. Behind only Opus and GPT-5.4. 56.2% SWE-Pro. 97% skill compliance across 40 complex agent skills. $0.30/M input. The benchmarks are competitive. The self-evolution loop is the real story. Wrote the full @minimax_ai breakdown ↓

Introducing TigerFS - a filesystem backed by PostgreSQL, and a filesystem interface to PostgreSQL. Idea is simple: Agents don't need fancy APIs or SDKs, they love the file system. ls, cat, find, grep. Pipelined UNIX tools. So let’s make files transactional and concurrent by backing them with a real database. There are two ways to use it: File-first: Write markdown, organize into directories. Writes are atomic, everything is auto-versioned. Any tool that works with files -- Claude Code, Cursor, grep, emacs -- just works. Multi-agent task coordination is just mv'ing files between todo/doing/done directories. Data-first: Mount any Postgres database and explore it with Unix tools. For large databases, chain filters into paths that push down to SQL: .by/customer_id/123/.order/created_at/.last/10/.export/json. Bulk import/export, no SQL needed, and ships with Claude Code skills. Every file is a real PostgreSQL row. Multiple agents and humans read and write concurrently with full ACID guarantees. The filesystem /is/ the API. Mounts via FUSE on Linux and NFS on macOS, no extra dependencies. Point it at an existing Postgres database, or spin up a free one on Tiger Cloud or Ghost. I built this mostly for agent workflows, but curious what else people would use it for. It's early but the core is solid. Feedback welcome. tigerfs.io

Orchestration 就是让一个 AI agent 派几个 sub-agent 去干活,各自做完交报告,你汇总决策。可能 90% 的人都把这个跟 multi-agent 搞混了 但更关键的问题:怎么管这些 fire-and-forget 的 sub-agent? 大部分人的做法是让模型自己去 prompt sub-agent。模型决定派什么任务、怎么描述、什么约束。多数情况没问题 但如果你跑大量自动化任务,这个模式迟早翻车。模型 prompt 模型,60-70% 的指令会被遵守。剩下 30% 是实打实的翻车概率 我的观点:脚本 > prompt。Gate script 卡关键节点,wrapper 自动补参数,checklist 验输出。代码强制,不给模型选择权 你定义工具、规范输出标准、卡住关键节点。模型自己编排,但在轨道内 如何压榨 orchestration 的极限,以及什么时候才真的需要 multi-agent 👇

yeah we had to rip and rebuild a huge part of our frontend because agents just cannot reason about reacts render loop yet, it creates a massive tangle. great post @alvinsng 6 months ago, the models "could not reason about rusts lifetime / borrow logic" and now they can do that fairly well so maybe this will improve. but for now yeah, don't use useEffect

一个中国大学生,花 10 天 搭出了一套多智能体预测引擎 MiroFish。 项目直接冲上 GitHub 热榜,当前已经到 23k+ stars,还拿到了 3000 万人民币 投资 这东西本质上不是普通 agent demo 它更像一个数字沙盘:把新闻、政策、金融信号丢进去,然后放出成千上万个带记忆、带行为逻辑的 AI agents,让它们像真实社会一样互动、争论、演化,再去推演结果。 做这件事的人叫 郭航江(BaiFu)。 公开报道里,他是大四学生,MiroFish 爆火后,获得了盛大集团创始人陈天桥的 3000 万人民币 投资。 这套东西能拿来干什么? 交易:把宏观消息、财报、市场信号喂进去,看模拟社会怎么反应。 公关:先跑一遍舆情,看看声明发出去会不会翻车。 创意实验:甚至可以拿小说设定做角色推演,看故事会怎么发展。 更狠的是,项目本身就支持 Docker 部署。 有 LLM API key,几分钟就能跑起来。 很多人还在手动猜市场。 已经有人开始搭 AI swarm,先在数字世界里把市场反应跑一遍,再决定真金白银怎么下 你觉得这种 “先模拟社会,再交易结果” 的玩法,会不会才是下一代 prediction market 的真正 edge?

再次总结 - GitHub过去一周Coding AI开源项目星数增长Top20 1openclaw (+8.4k) 开源个人AI助手(龙虾),本地运行,支持连接WhatsApp、Telegram等任意消息平台,实现全自动化任务。 github.com/openclaw/openc… 2autoresearch (+6.8k) Karpathy大神新作:AI代理在单GPU上自主进行LLM训练实验和研究优化。 github.com/karpathy/autor… 3agency-agents (+6.4k) 完整AI代理“公司”框架,内置51+专业人格代理(前端、社区、QA等),一键搭建AI团队。 github.com/msitarzewski/a… 4MiroFish (+3.6k) 多智能体群智预测引擎,通过模拟平行数字世界预测新闻、政策、金融等现实事件。 github.com/666ghj/MiroFish 5paperclip (+3.2k) AI代理编排平台,把多个代理组成“零人力公司”,统一管理目标和成本。 github.com/paperclipai/pa… 6CLI-Anything (+2.7k) 一键让任意软件生成CLI接口,让OpenClaw/Claude等AI代理轻松控制传统GUI应用。 github.com/HKUDS/CLI-Anyt… 7everything-claude-code (+2.6k) Claude Code全家桶工具包,包含技能、代理、钩子、规则等优化配置。 github.com/affaan-m/every… 8gstack (+2.1k) YC总裁Garry Tan分享的Claude Code实用技能栈,实现CEO/PM/QA等专业工作流。 github.com/garrytan/gstack 9superpowers (+2k) AI编码代理技能框架+结构化开发方法论(spec→plan→TDD→review),让代理更专业。 github.com/obra/superpowe… 10RuView (+1.5k) WiFi信号转人体姿态/生命体征检测的边缘AI感知系统(无摄像头、无隐私泄露)。 github.com/ruvnet/RuView 11page-agent (+1.5k) Alibaba开源的网页内GUI代理,用自然语言直接控制浏览器界面(一行代码集成)。 github.com/alibaba/page-a… 12skills (+1.4k) OpenAI Codex技能目录,为AI编码代理提供可复用任务指导和脚本。 github.com/openai/skills 13awesome-openc la… (+1.4k) OpenClaw技能awesome列表,收集数千个实用扩展和真实用例。 github.com/VoltAgent/awes… 14public-apis (+1.1k) 经典免费公共API集合,AI代理集成外部数据的必备宝库。 github.com/public-apis/pu… 15cli (+1.1k) Google Gemini CLI终端AI代理,支持代码生成、调试和复杂自动化任务。 github.com/google-gemini/… 16OpenViking (+1.1k) AI代理专用上下文数据库(文件系统范式),管理记忆/资源/技能,支持自演化。 github.com/volcengine/Ope… 17BitNet (+1.1k) Microsoft 1-bit LLM官方推理框架,极低资源运行大模型(CPU/GPU)。 github.com/microsoft/BitN… 18AstrBot (+1k) 多平台Agentic聊天机器人框架(QQ/微信/Telegram等),支持RAG、工具调用和子代理。 github.com/AstrBotDevs/As… 19worldmonitor (+1k) AI驱动的全球实时情报仪表盘,聚合新闻、地缘政治和基础设施监控。 github.com/koala73/worldm… 20browser (+958) 专为AI代理设计的无头浏览器(Lightpanda),高速网页自动化和LLM训练支持。 github.com/lightpanda-io/…