Kunal


@therealkmans

infra @Tesla_AI. building @LeetGPU. I've installed CUDA one thousand times so you never have to.

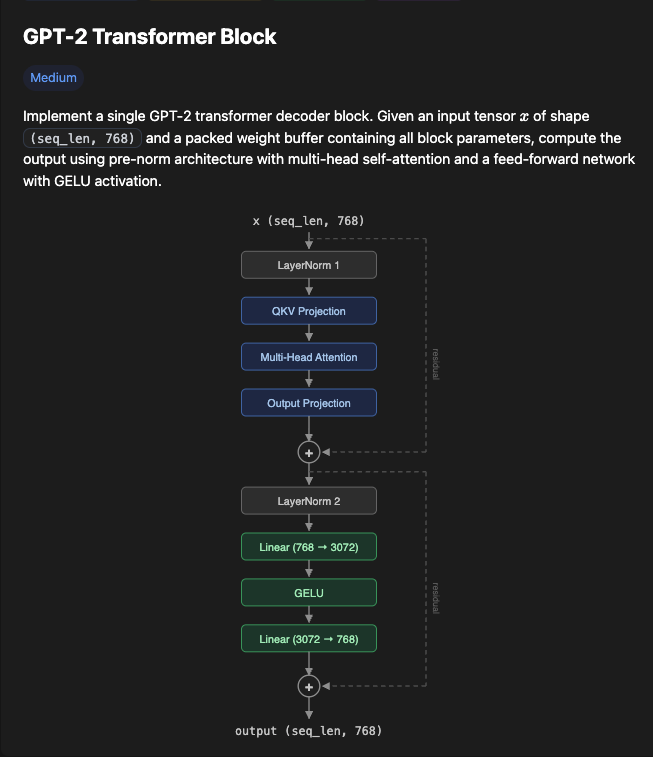

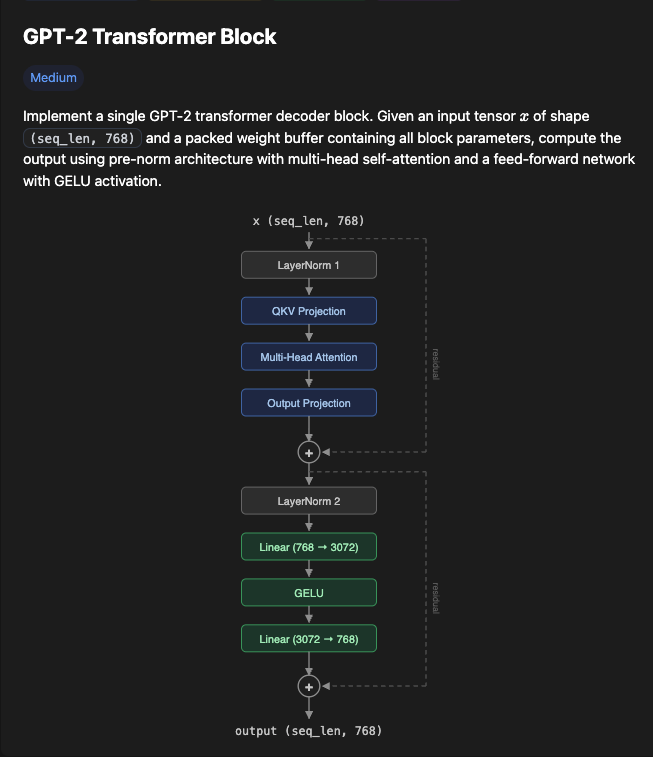

We just launched a couple of new features on LeetGPU:

- PyTorch Profiler Traces for every submission
- AI Chat to help explain, debug, and optimize your code

Go try them out!

Oh, you're writing CUDA kernels? Everyone's on Triton now. Just kidding, we're all on Mojo. We're using cuTile. We're using ROCm. We have an in-house DSL compiler targeting the NVGPU MLIR dialect but wait, Tile IR just dropped so we're going to target that instead. Our PM is on TileLang. The team lead was on CuTe but now she's back to handwriting PTX. If you're not on Pallas, you're ngmi. Our intern is building on TT-Metalium for our Wormholes. Our CFO approved an order for some big chungus wafer-scale chips so now we're porting our kernels to CSL. Our CTO is working on a kernel-less graph compiler so we won't need to write kernels anymore. Our CEO thinks we're talking about the Linux kernel. We're building Claude for dogs.

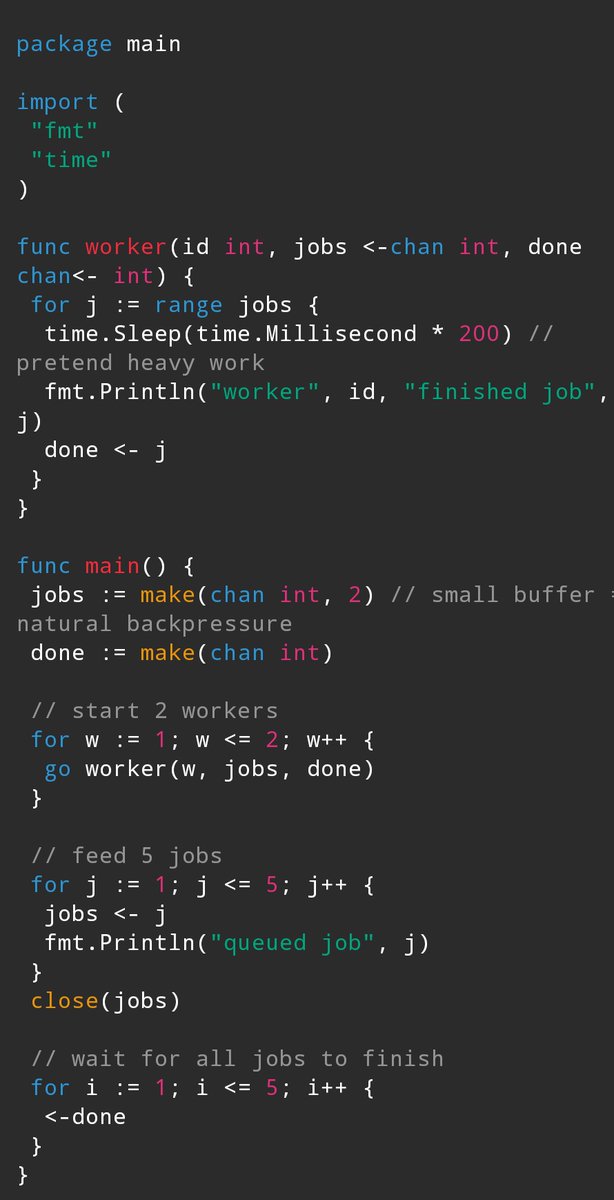

I'm sold on the actor model. After some struggling, I finally cracked it. This is the max. It's not stable, but it's stable enough, and it gives a good feeling :-).

`[test_throughput_actor_model] total_messages=10000000, iterations=1, elapsed=0.334s, throughput=913.88 MiB/s, ops=29.95 M ops/s (min=29.95, max=29.95)`

Fun fact: I only write branchless code because I'm afraid of indentation. By accident, it's also fast.