Pierre

725 posts

@therealpeterobi

Statistical Physics x AI

United States · Joined November 2012

834 Following · 231 Followers

@therealpeterobi Not yet but putting it together soon! Need to compile a training and val set first and then will be good to go

Fantastic idea and I think this will actually be the better direction to take my NanoAF2 competition. Data is the scarce resource when building frontier bio ML models, so better per-sample efficiency will be a gamechanger.

Samip@industriaalist

1/ Introducing NanoGPT Slowrun 🐢: an open repo for state-of-the-art data-efficient learning algorithms. It's built for the crazy ideas that speedruns filter out -- expensive optimizers, heavy regularization, SGD replacements like evolutionary search.

@FrankNoeBerlin @MSFTResearch Thought this might be the case, thanks!

@therealpeterobi @MSFTResearch You can't, but once you can sample the rare events efficiently, that gives you a tool to train the model to be good at rare events too. TBH, I would not expect very rare events to be correctly predicted by BioEmu, which has been trained on semi-equilibrium data.

Enhanced Diffusion Sampling: We develop a framework for efficient rare event sampling and free energy calculation with diffusion models. We introduce Metadynamics and Umbrella Sampling for diffusion models.

@MSFTResearch #MachineLearning #MD #Biology

arxiv.org/abs/2602.16634

Nothing’s about to happen. A lot of AI workflows fail in the wild, even in the simplest domains. Look at code generation, which should be ‘easy’ because it is more structured, and at all the harnessing and tooling being built around it. At this pace, doctors will be fine for a very long time.

I think most doctors are in the biggest bubble ever, not realizing what’s about to happen

Max Marchione@maxmarchione

Today, we share our AI doctor for the first time. The future is an AI that knows more about your body than any human ever could. 247 commits. 140,000 lines of code. Months of engineering. Here it is:

@otis_reid It is a whole field of research. Worked with some circadian rhythm researchers studying these effects, fascinating stuff.

This seems kind of insane: time of day (morning vs night) of cancer treatment --> ~2x difference in survival lengths

Eric Topol@EricTopol

The time of day for cancer immunotherapy is associated with major outcomes. Early is better. Results from a randomized trial in lung cancer back up the importance of our circadian rhythm and immune system. nature.com/articles/s4159…

Membrane-embedded proteins account for ~1/3 of approved drugs, yet many binding sites sit within the membrane itself.

How do you design ligands that are lipophilic enough to enter the membrane, yet polar enough to bind specifically?

Out now in the Journal of Medicinal Chemistry, our work studies TRPA1 antagonists and explores chameleonic efficiency as a quantitative guide for designing ligands targeting lipid-facing sites.

#MedicinalChemistry #DrugDesign #MembraneProteins #IonChannels #TRPA1

pubs.acs.org/doi/10.1021/ac…

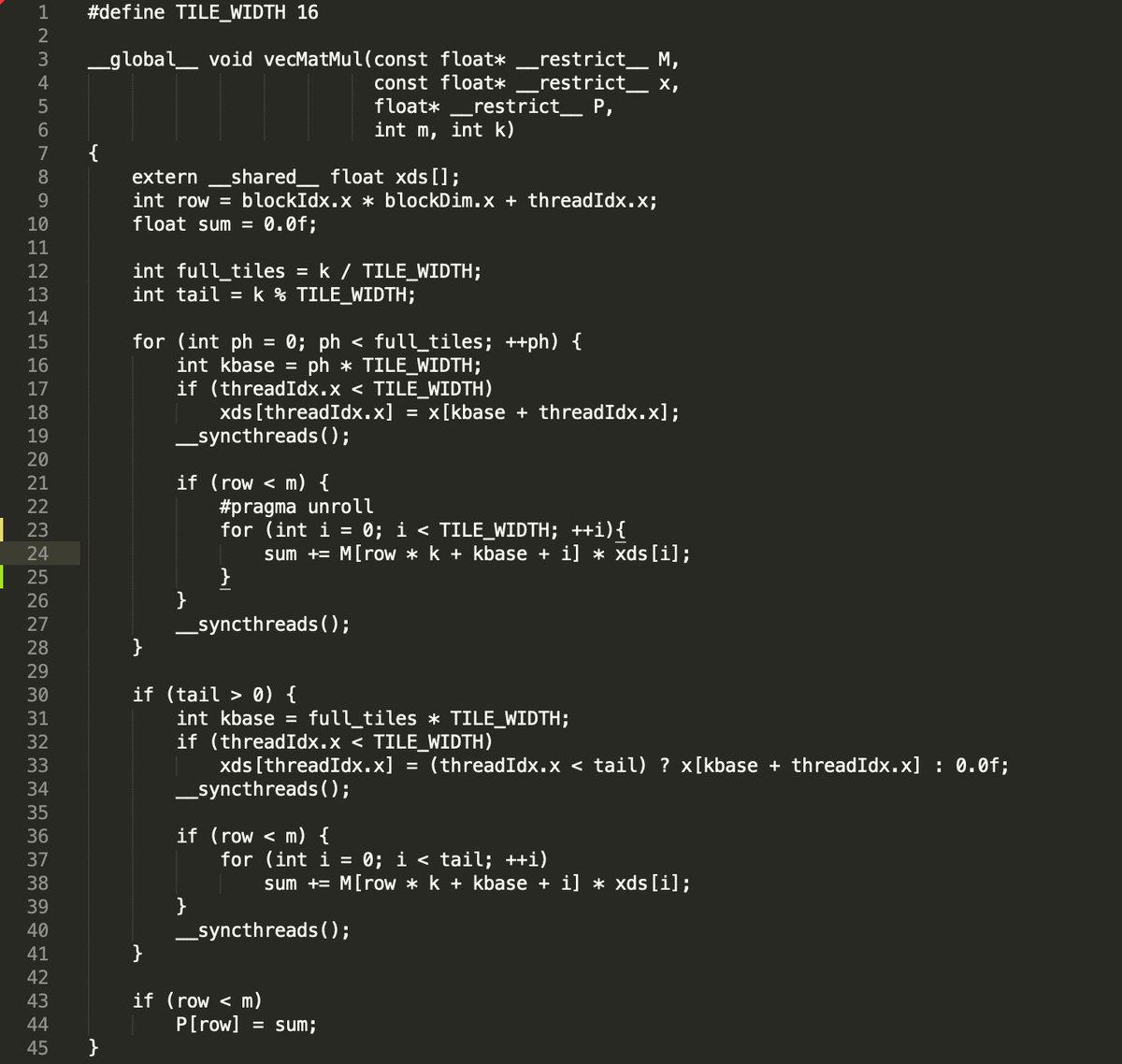

Learning CUDA has changed the way I see GPUs and my everyday scientific work. I had a protein-ligand interaction script that was fine for a single MD simulation, but once I started running many replicates, the post-processing alone could take ~4 hours on CPU.

The key realization was that most MD analyses are snapshot-independent. You don’t need the previous frame to decide whether a hydrogen bond exists in the current one. That makes it textbook data parallelism. Batch the snapshots and let GPU threads work in parallel. Same analysis, different mental model and a massive speedup.
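
To make the snapshot-independence point concrete, here is a minimal Python sketch, not the script described in the post. It assumes a deliberately simplified hydrogen-bond test (donor-acceptor distance only, with a hypothetical 3.5 Å cutoff and no angle criterion), and the names `count_hbonds` and `analyze` are illustrative. The thread pool is only a CPU stand-in for GPU threads; the point is that each frame is processed by a function that never looks at any other frame.

```python
import math
from concurrent.futures import ThreadPoolExecutor

CUTOFF = 3.5  # assumed donor-acceptor distance cutoff in angstroms (illustrative)


def count_hbonds(frame):
    """Count donor-acceptor pairs within CUTOFF in a single frame.

    `frame` is a (donors, acceptors) pair, each a list of (x, y, z) tuples.
    The function reads nothing outside its own frame.
    """
    donors, acceptors = frame
    return sum(
        1
        for d in donors
        for a in acceptors
        if math.dist(d, a) <= CUTOFF
    )


def analyze(frames, workers=4):
    """Apply count_hbonds to every frame independently.

    Because no frame depends on another, the work can be split across
    workers in any order; pool.map still returns results in frame order.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(count_hbonds, frames))


if __name__ == "__main__":
    frames = [
        ([(0, 0, 0)], [(3, 0, 0)]),
        ([(0, 0, 0)], [(4, 0, 0)]),
        ([(0, 0, 0), (10, 0, 0)], [(1, 0, 0), (10, 0, 3)]),
    ]
    print(analyze(frames))
```

On a GPU the same structure would presumably map one frame (or one donor-acceptor pair within a frame) to a thread or block, which is where the speedup described above comes from.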

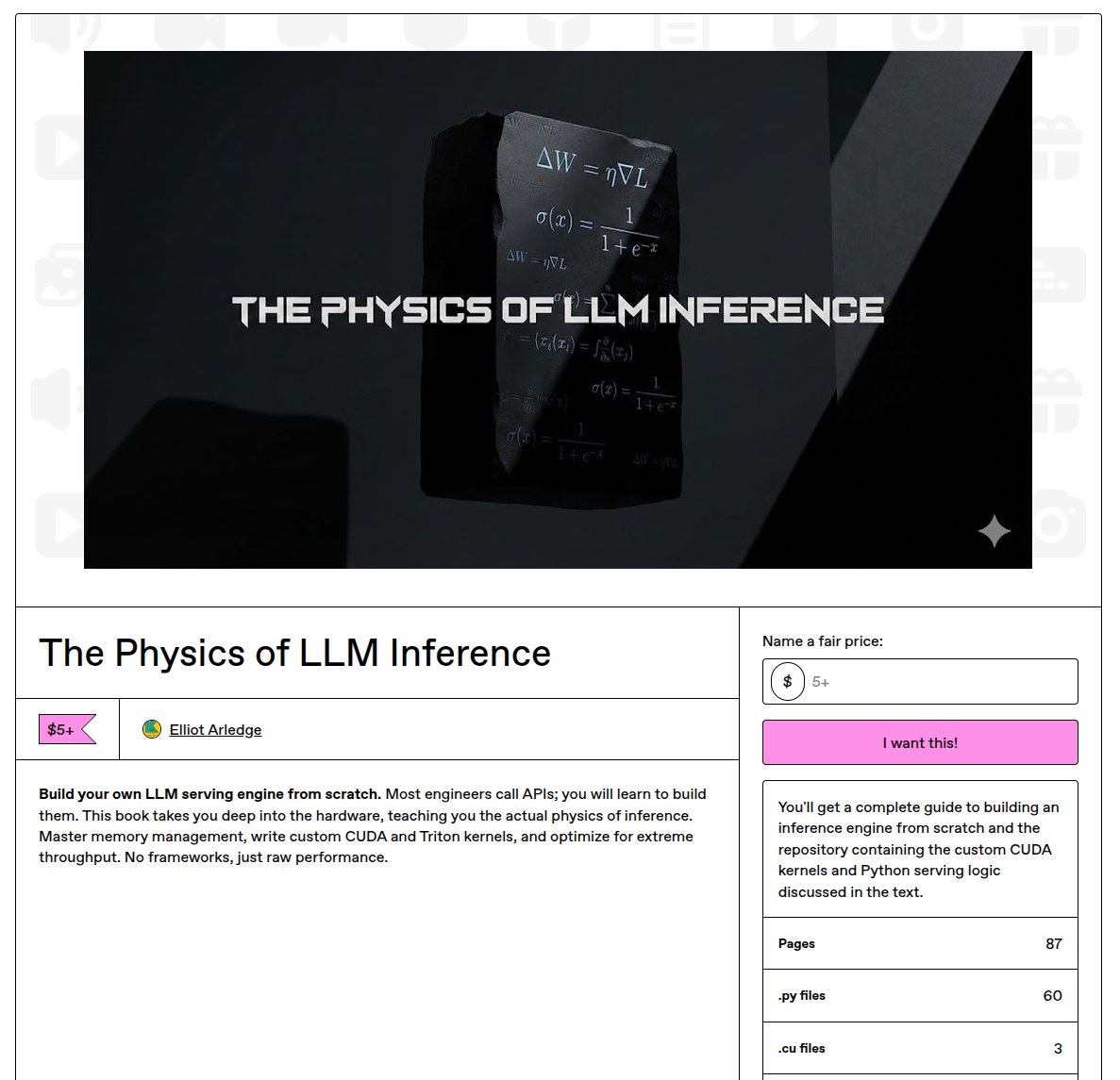

Day 28 - Focused on improving tiling and reducing memory access while working through Chapter 7 of PMPP. Chapters 1–6 really are the foundation; everything after (including 7) depends heavily on those core concepts.

Also started prototyping an FP4 GEMM kernel for the second Nvidia challenge due Dec 19.

Solved a few GPU puzzles from @LeetGPU, these were surprisingly fun! It’s one thing to understand a matrix transpose, but thinking through the global implications beyond element-wise behavior while tiling was a great exercise.
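
As a rough illustration of what "global implications while tiling" means for a transpose, here is a small Python sketch. It is not a LeetGPU solution or a CUDA kernel; `transpose_tiled` and the tile size are hypothetical. The idea it shows is that elements swap indices inside a tile, and the tile itself also moves: the tile starting at `(r0, c0)` in the input lands at `(c0, r0)` in the output.

```python
def transpose_tiled(matrix, tile=2):
    """Transpose a rectangular list-of-lists matrix one tile at a time."""
    rows, cols = len(matrix), len(matrix[0])
    out = [[None] * rows for _ in range(cols)]
    for r0 in range(0, rows, tile):
        for c0 in range(0, cols, tile):
            # One tile of work. In a GPU kernel this would typically be
            # one thread block staging the tile through shared memory.
            for r in range(r0, min(r0 + tile, rows)):
                for c in range(c0, min(c0 + tile, cols)):
                    # Element (r, c) goes to (c, r), so the whole tile at
                    # (r0, c0) ends up at (c0, r0) in the output.
                    out[c][r] = matrix[r][c]
    return out


if __name__ == "__main__":
    m = [[1, 2, 3], [4, 5, 6]]
    print(transpose_tiled(m))
```

The `min(...)` bounds handle matrices whose dimensions are not multiples of the tile size, which plays the same role as the boundary check in a tiled kernel.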

Finally, began reading through the OpenFold 3 codebase. I’ll share more soon, but I’m exploring a very exciting direction here!

11/29/26 update reposted, deleted in error. Day 21 – Travel and holidays meant mostly reading (bank conflicts, thread coarsening, FP4 dequant, etc.). Wrote a GEMV kernel for the NVFP4 competition; not a prize winner, but it inspired the whole learning streak. Working toward more advanced kernels for the future rounds.
