GPU MODE

@GPU_MODE

Your favorite GPU community

Humanity's Last Hackathon is NOW OPEN for registration. This is not a normal hackathon. You will be judged on the context, not the code! Use Codex @OpenAIDevs to build and optimize models for local inference (kernels on Mac Metal). Submit through @GPU_MODE. Climb the leaderboard. Top performers qualify for the final battle. Launches May 4th. Registration is live now.

AMD's @AnushElangovan explains why he thinks his company's open source ethos combined with agentic AI superpowers their leverage as a company:
Because AMD publishes a lot of technical details about its hardware, when engineers use AI tools, the models already “understand” AMD’s systems and can help write code for them, debug them, or even generate new tools. And that makes developers more productive on AMD hardware without AMD having to do all the work internally.
"AMD has had this ethos of open source, which really plays to our advantage. Every frontier model that I use has already seen every bit of AMD source code."
"It'll rewrite my spec for me because it's already in the training data. Which you can't get from closed ecosystems."
"In fact I built a virtual GPU simulator just based off our public specs, and now I'm running it on the GPU. So now I can run cross-generational GPU simulations on existing hardware."
"We have that advantage. And we've run a Dev Day contest where we generated more tokens on AMD (Triton kernels and HIP kernels) than existed on the internet at the time."
"So now that's all part of the pre-training data. It's a superpower because now you're open source, and you're agentically accelerating this process."

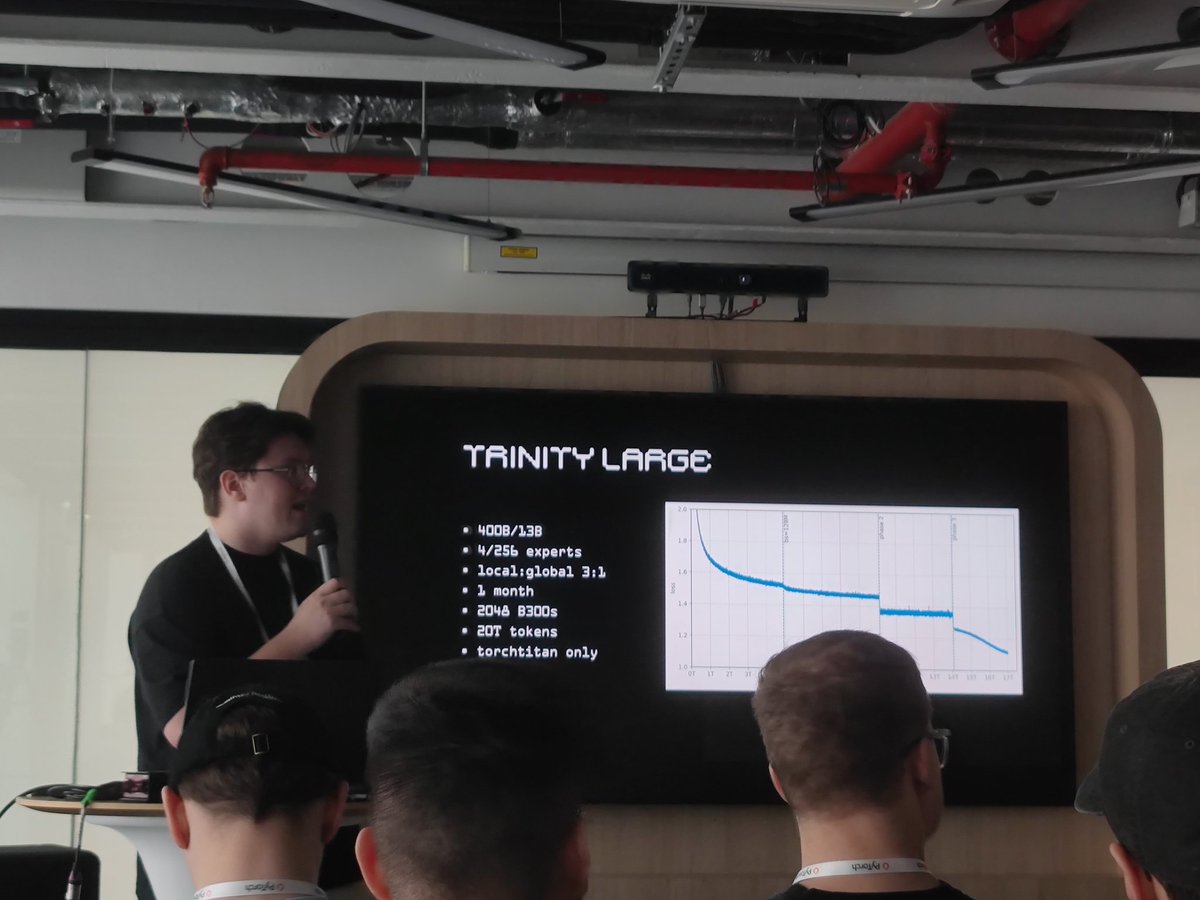

We’re sponsoring a @GPU_MODE hackathon in Paris on April 9, to conclude the PyTorch Conference Europe 🇪🇺 Our grand prize? 48 hours on GB300 NVL72. Join us with the teams behind @PyTorch, @PrimeIntellect, @SemiAnalysis_, @sestercegroup, and more! luma.com/gpu-mode-paris…

Trtllmgen kernels are now open. Fastest prefill and decode kernels for our target workloads. We wrote these to win InferenceX, MLPerf, other benchmarks. Powering some of today’s top served models. Dive in, learn, use them, or level up your own. Enjoy. github.com/flashinfer-ai/…