Philipp Moritz retweeted

Philipp Moritz

121 posts

@pcmoritz

Co-founder and CTO at @anyscalecompute. Co-creator of @raydistributed. Interested in ML, AI, computing.

San Francisco · Joined April 2017

6 Following · 1.1K Followers

We just merged a clean Qwen 3.5 implementation for SkyRL's Jax backend: github.com/NovaSky-AI/Sky… Currently only for dense models, but should be easy to adapt to MoE models, contributions welcome! Also if anybody wants to contribute chunkwise training for the gated delta net or layer stacking for the model, it would be welcome!

Philipp Moritz retweeted

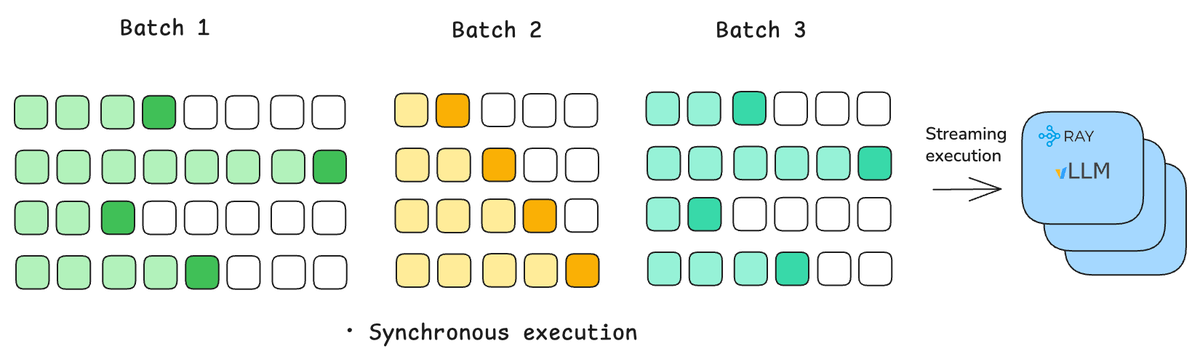

We just published how Ray Data LLM unlocks up to 2x higher throughput vs plain vLLM offline inference by fixing orchestration bottlenecks.

Offline batch inference is critical for synthetic data, evals, indexing – but vLLM alone doesn’t fully scale.

We compare:

• Plain vLLM

• Ray Data + vLLM offline engine

• Ray Data LLM 🧵

Philipp Moritz retweeted

Releasing the official SkyRL + Harbor integration: a standardized way to train terminal-use agents with RL.

From the creators of Terminal-Bench, Harbor is a widely adopted framework for evaluating terminal-use agents on any task expressible as a Dockerfile + instruction + test script.

This integration extends it: the same tasks you evaluate on, you can now RL-train on.

Blog: novasky-ai.notion.site/skyrl-harbor

🧵

@ysu_ChatData I created an issue for this in github.com/NovaSky-AI/Sky…

@pcmoritz Nice release. I’m a bit skeptical that standardizing the API is the hard part. The real bottleneck is making training runs reproducible across clusters and repos, so people trust the abstraction. Do you have a minimal end to end example with deterministic configs and logs?

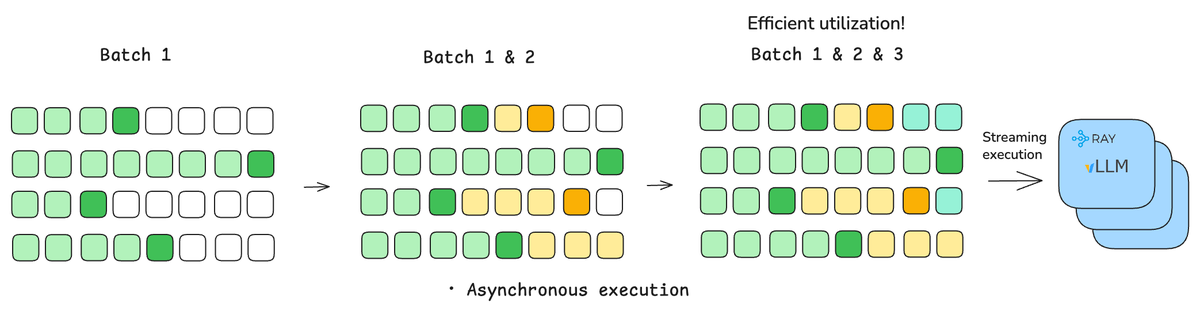

We just released the full SkyRL + Tinker integration: novasky-ai.notion.site/skyrl-tinker This is the evolution of what we have been working on with SkyRL tx and now also supports the SkyRL train backend (fsdp and megatron) which could previously only be used through Python APIs. I'm excited about the standardization the Tinker API will bring to the ecosystem and hopefully having a great open-source implementation will accelerate the adoption!

@ysu_ChatData (sorry I meant "Standardizing is the hard part in the sense...")

Yes, I very much agree with you on the reproducibility, I'm just as excited about that as you! Standardizing hard part in the sense that it requires collaboration between many different people (everybody who writes training code) and is out of control of each individual project, so if there is something that can emerge as a standard like the Tinker API, we should rally behind it. I'm also very excited about exact reproducibility, and that IS within each individual project's scope, so while technically not trivial, it is at least feasible without requiring collaboration. I think the Tinker API can be made reproducible, and while we haven't explicitly prioritized that yet (so there are no configs yet), we will going forward. If you run with the same package lockfile on the same hardware type with the same sharding, your results should be exactly reproducible; that will be the goal. I don't know yet if we will get there, but we will try hard.

Philipp Moritz retweeted

You can now access SkyRL's backends for distributed training and inference with Tinker scripts so you can take advantage of Tinker's separation of infrastructure from training on your own hardware.

Exciting work from Tyler, @pcmoritz, and the team!

Tyler Griggs@tyler_griggs_

SkyRL now implements the Tinker API. Now, training scripts written for Tinker can run on your own GPUs with zero code changes using SkyRL's FSDP2, Megatron, and vLLM backends. Blog: novasky-ai.notion.site/skyrl-tinker 🧵

Philipp Moritz retweeted

SkyRL now implements the Tinker API.

Now, training scripts written for Tinker can run on your own GPUs with zero code changes using SkyRL's FSDP2, Megatron, and vLLM backends.

Blog: novasky-ai.notion.site/skyrl-tinker

🧵

Hey Mert, the kernel I got working end-to-end is a CUTLASS kernel github.com/NovaSky-AI/Sky…, I'm actually quite happy with it in terms of performance (it needs porting to some other architectures besides Hopper, which shouldn't be too hard). Tanmay got the pallas kernel working github.com/NovaSky-AI/Sky… but afaik it is not auto-tuned and integrated yet. There is also a cutile-python implementation github.com/NovaSky-AI/Sky… that Ago implemented. I'm planning to get one of these merged for the 0.3.1 release :)

@pcmoritz awesome! have you managed to get to implementing the MoE kernel with Pallas?

I spent some time on it but it’s not straightforward as there are lots of unknowns at compile time

We are excited to announce the release of SkyRL tx 0.3 novasky-ai.notion.site/skyrl-tx-v030, our MultiLoRA native inference and training engine that exposes the Tinker API. A lot has happened since the last release, in terms of big features we implemented expert parallelism, the DeepseekV3 model architecture (e.g. GLM 4.7 Flash) and a number of features to support longer context! Also, there are lots of smaller improvements and a few small bug fixes.

Thanks a lot for the call-out, @tinkerapi! For anybody who is interested, we have made a bunch more releases since 0.1.0 came out; they are listed in github.com/NovaSky-AI/Sky… (and 0.3.0 with expert parallelism, a bunch of long context optimizations, and DeepSeekV3 is coming very soon).

Tinker@tinkerapi

SkyRL-tx by @BerkeleySky is an open-source backend that implements the Tinker API itself, letting users train on their own hardware. It supports end-to-end RL, faster sampling, and gradient checkpointing — giving users flexibility and control. novasky-ai.notion.site/skyrl-tx-v010

If anybody is looking for a fun weekend project and is interested in kernels and how ragged_dot can be used to implement MultiLoRA for training and inference as well as MoE models, check out github.com/NovaSky-AI/Sky…. Contributions very welcome, happy to discuss more in the issue!
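For readers unfamiliar with the operation mentioned above, here is a minimal pure-Python sketch of what a ragged (grouped) matmul computes and how it could apply per-adapter LoRA weights to a batch. It is a reference for the semantics only, not the SkyRL kernel and not a call into JAX. It assumes the rows are already sorted by adapter, and the names `ragged_dot_reference`, `lora_a`, and `lora_b` are hypothetical.

```python
def ragged_dot_reference(lhs, rhs, group_sizes):
    """Grouped matmul reference (illustrative, not a real library call).

    lhs:         m rows, each of length k
    rhs:         g matrices, each k x n (one per group)
    group_sizes: g integers summing to m; consecutive rows of lhs
                 belong to the same group
    Returns m rows of length n, where each row is multiplied by the
    matrix of the group it belongs to.
    """
    assert sum(group_sizes) == len(lhs), "group sizes must cover all rows"
    out = []
    row = 0
    for group, size in enumerate(group_sizes):
        w = rhs[group]
        for _ in range(size):
            x = lhs[row]
            out.append(
                [sum(x[i] * w[i][j] for i in range(len(w)))
                 for j in range(len(w[0]))]
            )
            row += 1
    return out


# Three token rows (k = 2), sorted by adapter: two for adapter 0, one for adapter 1.
tokens = [[1, 2], [3, 4], [5, 6]]
group_sizes = [2, 1]

# Hypothetical rank-1 LoRA factors, stacked per adapter.
lora_a = [[[1], [0]], [[0], [1]]]   # shape (adapters, k, rank)
lora_b = [[[1, 1]], [[2, 0]]]       # shape (adapters, rank, n)

# Low-rank update: project down with A, then back up with B, per adapter.
hidden = ragged_dot_reference(tokens, lora_a, group_sizes)
delta = ragged_dot_reference(hidden, lora_b, group_sizes)
```

The same grouping idea carries over to MoE layers if one treats each expert as a group and the rows as tokens routed to that expert.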

Philipp Moritz retweeted

We just pushed one of the biggest updates yet to SkyRL tx (an OSS Tinker backend), including FSDP and multi-node support, custom loss functions, and Llama 3.

We also ran some comparisons to @thinkymachines Tinker service to validate tx. Check it out!

novasky-ai.notion.site/skyrl-tx-v021

Happy new year! We are excited to announce SkyRL tx 0.2.1, see novasky-ai.notion.site/skyrl-tx-v021. Some highlights of the release include FSDP and multi-node support, Llama 3 model support, custom loss functions, a number of performance improvements and also lots of small fixes that implement more functionality of the Tinker API. The blog post also includes a performance comparison with the Tinker Service! Enjoy the release and happy hacking!

@brianzhan1 For small / custom models, you might want to try out github.com/NovaSky-AI/Sky… which is open-source and is working quite well now. You can use your existing Tinker scripts and customize it however you like and the code is quite readable.

Tinker from Thinking Machines being GA is one of the first launches in a while that actually feels like training as a product.

Most hosted fine-tune APIs (OpenAI-style included) are awesome when all you need is a clean SFT run, but the second you want to do anything even slightly spicy: custom curricula, online eval, reward-shaped post-training, RL-ish loops, weird batching/packing tricks: you hit the ceiling fast and end up rebuilding half a training stack.

Tinker basically flips that: it hands you a training API with low-level primitives (sample / forward_backward / optim_step / save_state), so you write the loop you actually want, and they take care of the parts that normally turn into a month of infra work (scheduling, scaling, preemptions, failure recovery, the why did this job die at 93% stuff).

It’s also LoRA-first, which is exactly the right default for customization: you iterate faster, costs stay sane, you can keep multiple variants around without duplicating giant checkpoints, and serving becomes way more practical. I also like that the story isn’t hand-wavy: LoRA really can match full fine-tuning on a lot of post-training datasets when you set it up right, but if you’re trying to cram a massive behavior shift into a small adapter (or your dataset just dwarfs the adapter’s effective capacity), you’ll feel that bottleneck and it won’t magically disappear.

Only real downside I’m seeing is the small-model floor: if your goal is tiny edge SLMs, this probably isn’t the tool. Still, I’m excited about it. Can’t wait to see what people build.
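To make the "write the loop you actually want" idea concrete, here is a toy sketch of a user-written training loop built from the four primitive names quoted in the post (sample / forward_backward / optim_step / save_state). It is not the real Tinker client: `ToyTrainingClient`, its method signatures, and the one-parameter model are all hypothetical stand-ins, chosen only so the example runs on its own.

```python
class ToyTrainingClient:
    """Hypothetical stand-in, NOT the real Tinker API. Fits y = w * x."""

    def __init__(self):
        self.w = 0.0      # the single trainable parameter
        self.grad = 0.0   # gradient accumulated since the last optim_step

    def sample(self, x):
        # "Inference": predict with the current parameter.
        return self.w * x

    def forward_backward(self, batch):
        # Mean squared error over the batch, plus gradient accumulation.
        loss = 0.0
        grad = 0.0
        for x, y in batch:
            err = self.w * x - y
            loss += err * err
            grad += 2.0 * err * x
        self.grad += grad / len(batch)
        return loss / len(batch)

    def optim_step(self, lr):
        # Plain SGD update, then clear the accumulated gradient.
        self.w -= lr * self.grad
        self.grad = 0.0

    def save_state(self):
        # Return a checkpoint-like snapshot of the parameters.
        return {"w": self.w}


def train(client, data, steps=50, lr=0.05):
    # The loop itself is user code; the client only exposes primitives.
    losses = []
    for _ in range(steps):
        losses.append(client.forward_backward(data))
        client.optim_step(lr)
    return losses


client = ToyTrainingClient()
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]   # samples of y = 2x
losses = train(client, data)
state = client.save_state()
```

Because the loop lives in user code, things like custom curricula or online evaluation would be ordinary Python around these calls, for example calling `client.sample(...)` between steps.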

We are happy to announce SkyRL tx 0.2, see our blog post novasky-ai.notion.site/skyrl-tx-v02. It comes with lots of performance improvements, all parts of the execution now use jax jit, so there is very little overhead. Now is probably the best time to try it out if you haven't already 🧸

Philipp Moritz retweeted