Thinh Truong

51 posts

@ththinh_

Research Fellow @UniMelb

SeaLLMs -- Large Language Models for Southeast Asia

paper page: huggingface.co/papers/2312.00…

Despite the remarkable achievements of large language models (LLMs) in various tasks, there remains a linguistic bias that favors high-resource languages, such as English, often at the expense of low-resource and regional languages. To address this imbalance, we introduce SeaLLMs, an innovative series of language models that specifically focuses on Southeast Asian (SEA) languages. SeaLLMs are built upon the Llama-2 model and further advanced through continued pre-training with an extended vocabulary, specialized instruction and alignment tuning to better capture the intricacies of regional languages. This allows them to respect and reflect local cultural norms, customs, stylistic preferences, and legal considerations. Our comprehensive evaluation demonstrates that SeaLLM-13b models exhibit superior performance across a wide spectrum of linguistic tasks and assistant-style instruction-following capabilities relative to comparable open-source models. Moreover, they outperform ChatGPT-3.5 in non-Latin languages, such as Thai, Khmer, Lao, and Burmese, by large margins while remaining lightweight and cost-effective to operate.

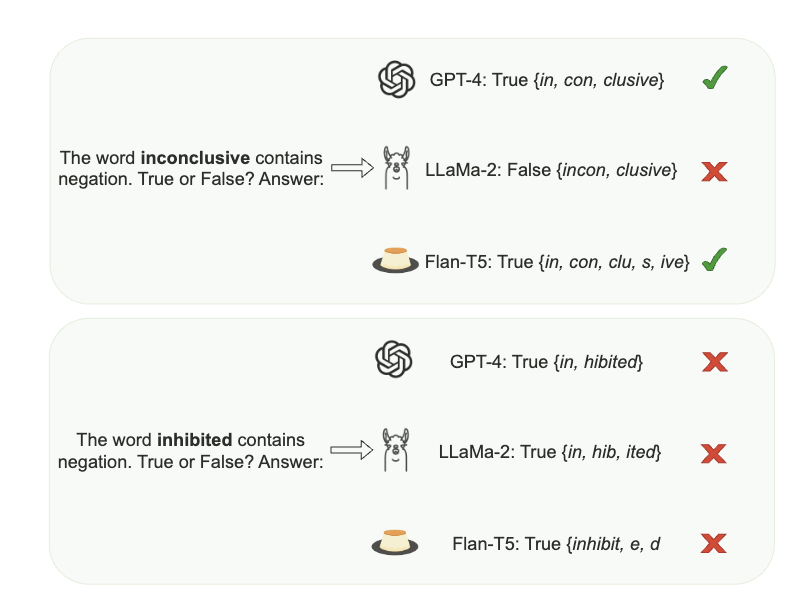

"In other words, the modification are so simple that there is a rule to determine the label (e.g., adding 'X allegedly did Y' doe not entail 'X did Y'). " And yet the massively pretrained models do not capture this! I find it interesting and remarkable.