Timo Schick


🚨 🚨 !!New Paper Alert!! 🚨 🚨 How can we train agents that learn new tasks (with different states, actions, dynamics, and reward functions) from only a few demonstrations and no weight updates? In-context learning to the rescue! In our new paper, we show that by training transformers on large, diverse datasets of demonstration sequences with certain properties, we can generalize to new Procgen or MiniHack tasks from only a few demonstrations and no weight updates! Paper: arxiv.org/pdf/2312.03801… Work with these amazing collaborators @erichammy @_roberkirk @HenaffMikael @robertarail 1/13

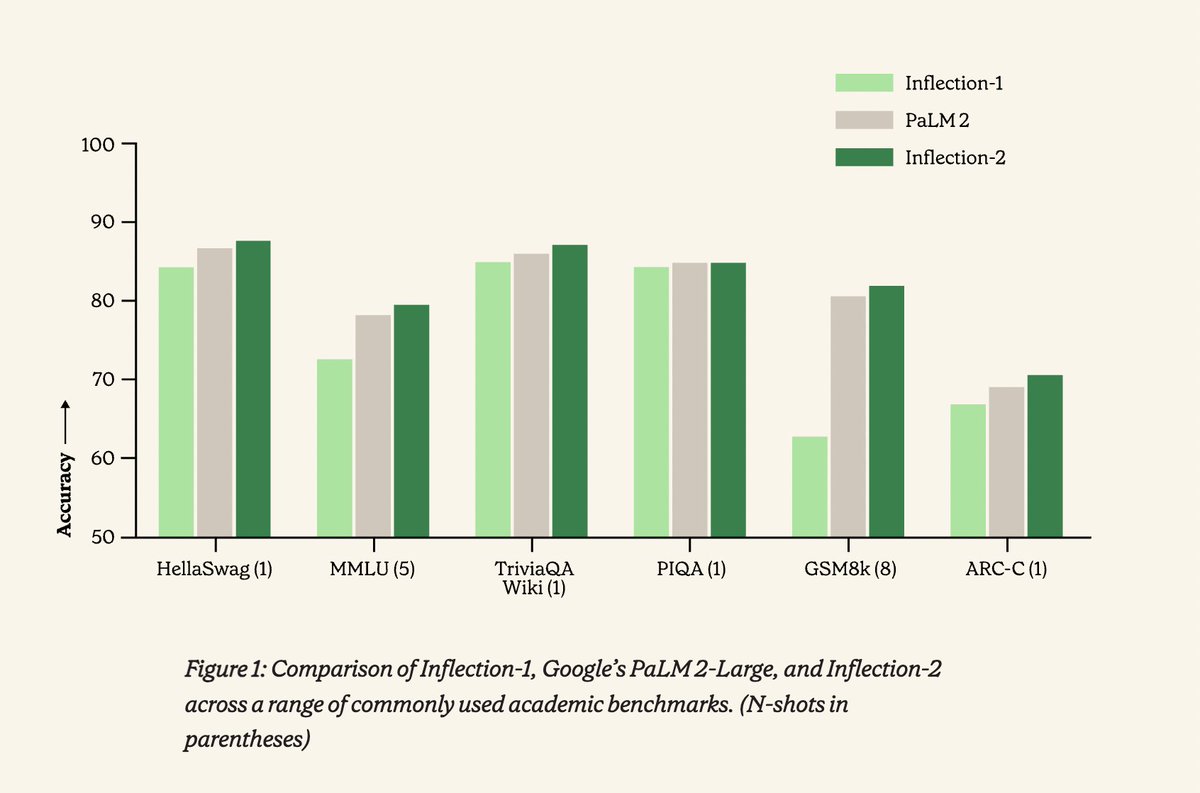

🎉 Introducing Inflection-2, the 2nd best LLM in the world! Get ready to experience the future of AI with us. bit.ly/3TaUpcD

Augmented Language Models: a Survey. abs: arxiv.org/abs/2302.07842